Introduction

A voice bot that passes a demo is not the same as one that survives production. Most failures happen in the gap between the two: latency spikes under real traffic, clipped speech from poor VAD tuning, or dialogue breakdowns when a user says something unexpected.

Open-source tools have narrowed the cost and complexity gap with proprietary platforms, which charge a multiple of raw component costs to cover dashboards and vendor margins. By building on open-source ASR (such as Whisper), TTS (such as Piper or XTTS), and orchestration frameworks, teams can reach sub-500ms latency while keeping full data sovereignty, a hard requirement for HIPAA and GDPR compliance.

This guide walks through assembling the core pipeline (ASR → logic → TTS), the configuration parameters that separate stable deployments from fragile prototypes, and how to diagnose the failure patterns that sink most production rollouts. Whether you're building your first voice agent or replacing a costly proprietary API, you'll leave with the techniques needed to ship something users actually rely on.

TL;DR

- Assemble four core components — ASR, VAD (voice activity detector), LLM/logic engine, and TTS — connected through a streaming orchestrator

- Sub-500ms end-to-end latency is mandatory; anything slower breaks conversational flow and causes users to talk over the bot

- Self-hosting open-source tools satisfies HIPAA and GDPR requirements by keeping audio on your infrastructure

- Poor VAD tuning, missing timeout fallbacks, and under-tested conversation flows cause most production failures

- AI-to-AI testing catches dialogue failures that unit tests miss entirely

How to Build a Reliable Voice Bot with Open Source Tools

Step 1: Configure Your Speech-to-Text (ASR) and VAD Layer

Choose an ASR engine based on your deployment constraints. For highest accuracy on clean audio, use Whisper Large-v3, which achieves a 2.01% word error rate on LibriSpeech clean test sets. For edge or offline deployments where GPU access is limited, switch to Vosk (40MB models run on Raspberry Pi with 9.85% WER) or quantized Whisper variants via faster-whisper, which delivers up to 4x speedups with INT8 quantization while maintaining acceptable accuracy.

Set up server-side Voice Activity Detection. Silero VAD processes 30ms audio chunks in under 1ms and is the industry standard for detecting when users finish speaking. Device-side VAD alone fails at scale due to limited compute and higher error rates on background noise—server-side VAD provides consistent, reliable end-of-turn detection across all user environments.

Configure ASR with domain-specific prompts to reduce hallucinations on silence or background noise:

Whisper prompt: "Professional business conversation. No music, no background chatter. Common terms: [YourProductName], [CompanyName], account, appointment."

Whisper considers at most 224 tokens of prompt, so keep it short. A prompt like this steers the model toward your vocabulary and suppresses irrelevant transcriptions.
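As a rough sketch, the prompt can be assembled from a term list and passed to faster-whisper through its `initial_prompt` argument. The helper names `build_initial_prompt` and `transcribe` are hypothetical, and the `max_words` cap is only a crude stand-in for real token counting.

```python
def build_initial_prompt(terms, context="Professional business conversation.", max_words=150):
    """Build a vocabulary-biasing prompt from domain terms.

    max_words is a rough proxy for Whisper's prompt token limit (an assumption,
    not an exact token count).
    """
    unique_terms = list(dict.fromkeys(t.strip() for t in terms if t.strip()))
    kept = []
    for term in unique_terms:
        candidate = f"{context} Common terms: {', '.join(kept + [term])}."
        if len(candidate.split()) > max_words:
            break  # stop adding terms once the word budget is used up
        kept.append(term)
    return f"{context} Common terms: {', '.join(kept)}."


def transcribe(audio_path, prompt, model_size="medium"):
    """Transcribe a file with faster-whisper, biased by the prompt."""
    # Imported lazily so the prompt helper works without faster-whisper installed.
    from faster_whisper import WhisperModel

    model = WhisperModel(model_size, device="cpu", compute_type="int8")
    segments, _info = model.transcribe(audio_path, initial_prompt=prompt)
    return " ".join(segment.text.strip() for segment in segments)
```

Put the terms most likely to be misheard first in the list, since they are the ones kept when the budget runs out.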

If building for healthcare or finance, ensure your ASR service runs locally or on a self-hosted server. Audio leaving your infrastructure can put you in breach of HIPAA technical safeguards and complicates GDPR Article 44 data transfer requirements. Self-hosted Whisper or Vosk deployments avoid both problems.

Step 2: Set Up Your Dialogue Logic (LLM or Intent Engine)

For open-ended conversational use cases, configure an LLM with a concise system prompt:

You are a customer support agent for [Company]. You can answer questions about billing, technical support, and account management. You cannot provide legal advice, medical information, or access user passwords. If asked about topics outside your scope, politely redirect: "I can help with [X, Y, Z]. For [other topic], please contact [department]."

Vague prompts cause hallucinations and off-topic responses. Be explicit about what the bot can and cannot do.

For structured, task-oriented flows (appointment booking, intake forms), consider a rule-based engine like Rasa or Botpress instead of a pure LLM. Predictability matters more than flexibility when collecting structured data—users expect consistent slot-filling behavior, not creative responses.

Set a conversation history window appropriate to your context. Research shows LLMs experience the "Lost in the Middle" phenomenon, where they struggle to recall information buried in long contexts. Keep your system prompt plus conversation history under 2,048 tokens (approximately 1,500 words) to preserve both memory and response speed.

Implement a sliding window that retains the system prompt and the last 4-6 turns, dropping older exchanges.
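A minimal sketch of such a window, assuming messages are stored as dictionaries with `role` and `content` keys (a common chat format, but an assumption here). The function name `trim_history` is hypothetical.

```python
def trim_history(messages, max_turns=6):
    """Keep every system message plus the last max_turns user/assistant exchanges."""
    system = [m for m in messages if m["role"] == "system"]
    dialogue = [m for m in messages if m["role"] != "system"]
    if max_turns <= 0:
        return system
    # One turn is counted as a user message plus the assistant reply after it.
    return system + dialogue[-2 * max_turns:]
```

Counting turns is simpler than counting tokens, but it does not guarantee the 2,048-token budget. Long user utterances may still call for a token-based check on top.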

Integrate tool calls or action handlers for backend operations. If your LLM needs to check schedules, retrieve account data, or trigger workflows, expose these as callable functions rather than hardcoding logic. This keeps the dialogue layer clean and testable.
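One way to keep backend operations out of the dialogue layer is a small registry of callable functions that the LLM selects by name. The sketch below assumes the model emits a JSON object with `name` and `arguments` fields. That shape, the `tool` decorator, and the stubbed `check_schedule` function are all illustrative, not any specific provider's API.

```python
import json

TOOLS = {}


def tool(fn):
    """Register a function so the dialogue layer can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def check_schedule(date: str) -> dict:
    # Hypothetical backend lookup, stubbed with fixed data for this sketch.
    return {"date": date, "open_slots": ["09:00", "14:30"]}


def dispatch(tool_call_json: str) -> dict:
    """Run a tool call of the form {"name": ..., "arguments": {...}}."""
    call = json.loads(tool_call_json)
    fn = TOOLS.get(call["name"])
    if fn is None:
        # Unknown tools return an error payload instead of raising mid-call.
        return {"error": f"unknown tool: {call['name']}"}
    return fn(**call.get("arguments", {}))
```

Because each handler is an ordinary function, it can be unit-tested without involving the LLM at all.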

Step 3: Add Text-to-Speech (TTS) with Streaming Output

Select a TTS engine based on quality versus resource trade-offs:

- Piper: Lightweight, CPU-friendly inference (RTF ~0.2 on Intel i7); ideal for edge deployments where GPU access is unavailable

- XTTS-v2: Near-human naturalness on GPU with sub-200ms time-to-first-chunk streaming latency; supports voice cloning with just 6 seconds of reference audio

Enable streaming TTS output so the first audio chunk is sent before the full response generates. Streaming reduces perceived latency by 300-500ms: users hear the bot start speaking while the LLM is still generating later sentences.
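Sentence-level streaming can be sketched as a generator that buffers LLM output and releases each sentence as soon as it is complete, so TTS can start on it immediately. The function name `sentences_from_tokens` is hypothetical, and the punctuation-based splitter is deliberately naive: abbreviations such as "Dr. Smith" would be split incorrectly.

```python
import re

# A sentence ends at ., ! or ? followed by whitespace (naive on purpose).
SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


def sentences_from_tokens(token_stream):
    """Yield complete sentences as soon as they appear in a stream of text chunks."""
    buffer = ""
    for token in token_stream:
        buffer += token
        parts = SENTENCE_END.split(buffer)
        # Every part except the last is a finished sentence.
        for sentence in parts[:-1]:
            if sentence.strip():
                yield sentence.strip()
        buffer = parts[-1]
    # Flush whatever remains when the LLM stops generating.
    if buffer.strip():
        yield buffer.strip()
```

Each yielded sentence would be handed to the TTS engine while the loop keeps consuming later tokens.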

Test TTS intelligibility by running generated audio back through your ASR and comparing against input text. Flag mispronounced domain terms (product names, medical jargon) and correct them using SSML tags:

<phoneme alphabet="ipa" ph="ˈdoʊɡrə">Dograh</phoneme>
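The round-trip check can be scored with a word error rate between the text sent to TTS and the transcript returned by ASR. The sketch below takes both as plain strings and leaves the actual TTS and ASR calls out. The function names and the `domain_terms` list are hypothetical.

```python
import re


def _words(text):
    """Lowercase and strip punctuation so formatting differences are not counted."""
    return re.findall(r"[a-z0-9']+", text.lower())


def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = _words(reference), _words(hypothesis)
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1] / max(len(ref), 1)


def flag_mispronunciations(reference, hypothesis, domain_terms):
    """Return domain terms present in the input text but missing from the transcript."""
    ref, heard = set(_words(reference)), set(_words(hypothesis))
    return [t for t in domain_terms if t.lower() in ref and t.lower() not in heard]
```

A flagged term is only a candidate for a phoneme override: the ASR may have misheard a correctly pronounced word, so listen to the audio before adding SSML.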

Step 4: Connect the Pipeline and Configure the Orchestrator

With ASR, dialogue logic, and TTS configured, the final step is connecting them into a coherent pipeline.

Assemble components behind a WebSocket-based orchestrator that streams audio in from the client, routes through VAD → ASR → LLM → TTS, and streams audio out. WebSocket avoids per-request connection overhead built into HTTP REST, which is critical for real-time voice where every millisecond counts. Frameworks like Pipecat provide production-grade orchestration with parallel processing: the LLM generates later sentences while TTS vocalizes earlier ones. Platforms like Dograh AI offer a pre-integrated open-source pipeline that handles this orchestration layer out of the box.
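The routing idea can be illustrated without any network code: each stage is a task that reads from one queue and writes to the next, so stages run concurrently on different items. This is a simplified sketch, not how Pipecat or any other framework is implemented. The `fake_asr`, `fake_llm`, and `fake_tts` functions are stubs, VAD is omitted, and in a real deployment the first and last queues would be fed by a WebSocket connection.

```python
import asyncio


async def stage(transform, inbox, outbox):
    """Apply transform to each item; None is the end-of-stream sentinel."""
    while True:
        item = await inbox.get()
        if item is None:
            await outbox.put(None)
            return
        await outbox.put(await transform(item))


# Stub components standing in for real ASR, LLM, and TTS calls.
async def fake_asr(audio: bytes) -> str:
    return audio.decode()


async def fake_llm(text: str) -> str:
    return f"You said: {text}"


async def fake_tts(text: str) -> bytes:
    return text.encode()


async def run_pipeline(utterances):
    q_audio, q_text, q_reply, q_out = (asyncio.Queue() for _ in range(4))
    tasks = [
        asyncio.create_task(stage(fake_asr, q_audio, q_text)),
        asyncio.create_task(stage(fake_llm, q_text, q_reply)),
        asyncio.create_task(stage(fake_tts, q_reply, q_out)),
    ]
    for utterance in utterances:
        await q_audio.put(utterance)
    await q_audio.put(None)  # signal end of input

    results = []
    while (chunk := await q_out.get()) is not None:
        results.append(chunk)
    await asyncio.gather(*tasks)
    return results
```

Because every stage awaits its own queue, the LLM stub can work on the second utterance while the TTS stub is still handling the first.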

Define explicit timeout and fallback behaviors:

- ASR returns empty: "I didn't catch that—could you repeat?"

- LLM exceeds 2-second response SLA: Play pre-recorded filler audio ("Let me check on that...")

- TTS fails mid-stream: Fall back to cached audio or terminate gracefully
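The LLM fallback above can be sketched with `asyncio.wait_for`: if generation misses the SLA, the caller gets a filler phrase to play while the original request keeps running. The function `reply_with_sla` and the two stub generators are hypothetical. A real orchestrator would start playing the filler audio as soon as the timeout fires rather than returning it alongside the reply.

```python
import asyncio

FILLER = "Let me check on that..."


async def reply_with_sla(generate, user_text, sla_seconds=2.0):
    """Return (filler, reply); filler is None when the LLM answers within the SLA."""
    task = asyncio.create_task(generate(user_text))
    try:
        # shield() keeps the LLM call running when the timeout fires.
        reply = await asyncio.wait_for(asyncio.shield(task), timeout=sla_seconds)
        return None, reply
    except asyncio.TimeoutError:
        # SLA missed: the filler would be played here while we keep waiting.
        return FILLER, await task


# Stub generators standing in for a real LLM call.
async def fast_llm(text):
    return "Your balance is $20."


async def slow_llm(text):
    await asyncio.sleep(0.05)
    return "Your balance is $20."
```

Shielding the task matters: without it, the timeout would cancel the LLM request and the bot would have to start generation over after the filler.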

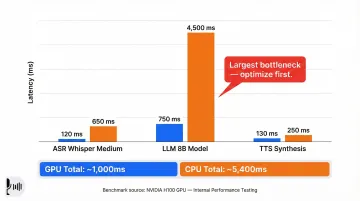

Run a latency audit. Benchmark each component separately to find bottlenecks:

| Component | Typical Latency (GPU) | Typical Latency (CPU) |
|---|---|---|
| ASR (Whisper Medium) | 49ms | 1,420ms |
| LLM Generation (8B model) | 670ms | 3,200ms |
| TTS Synthesis | 286ms | 800ms |
| Total | ~1,000ms | ~5,400ms |

Benchmarks from low-latency voice agent evaluation on NVIDIA H100.
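To collect comparable numbers for your own stack, a small timing harness around each stage is usually enough. The sketch below uses `time.perf_counter`. The `LatencyAudit` class is hypothetical, and the `time.sleep` calls stand in for real ASR and LLM calls.

```python
import time
from contextlib import contextmanager


class LatencyAudit:
    """Collect per-stage wall-clock timings in milliseconds."""

    def __init__(self):
        self.samples = {}

    @contextmanager
    def measure(self, stage):
        start = time.perf_counter()
        try:
            yield
        finally:
            elapsed_ms = (time.perf_counter() - start) * 1000
            self.samples.setdefault(stage, []).append(elapsed_ms)

    def report(self):
        """Return {stage: mean latency in ms}, slowest stage first."""
        means = {s: sum(v) / len(v) for s, v in self.samples.items()}
        return dict(sorted(means.items(), key=lambda kv: kv[1], reverse=True))


audit = LatencyAudit()
with audit.measure("asr"):
    time.sleep(0.01)  # placeholder for a real ASR call
with audit.measure("llm"):
    time.sleep(0.03)  # placeholder for a real LLM call
```

Means hide tail latency, so for production audits it is worth also recording a high percentile per stage.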

The LLM stage is typically the largest contributor. Optimize here first: use quantized models, reduce context size, or switch to a managed low-latency provider like Groq ($0.05 per 1M input tokens for Llama 3.1 8B).

Key Parameters That Affect Reliability

The difference between a prototype and a production-ready voice bot usually comes down to four variables: ASR model size, VAD sensitivity, LLM context length, and streaming configuration. Most teams tune for accuracy but neglect latency, silence handling, and streaming—resulting in agents that transcribe perfectly but feel unnatural or unresponsive.

ASR Model Size vs. Latency Trade-Off

Larger ASR models have lower word error rates but add hundreds of milliseconds per transcription. On CPU, Whisper Large can consume over 3 seconds—exhausting your entire latency budget before the LLM even runs.

| Model | Parameters | WER (LibriSpeech Clean) | CPU Time (13min audio) | GPU Time (13min audio) |
|---|---|---|---|---|
| Whisper Small | 244M | 3.4% | 2m 37s | 42s |
| Whisper Medium | 769M | 2.9% | 5m 18s | 1m 15s |
| Whisper Large-v3 | 1,550M | 2.0% | 12m+ | 1m 3s |

Data from faster-whisper benchmarks on Intel i7-12700K CPU and NVIDIA RTX 3070 Ti GPU.

Use the smallest model that meets your WER threshold. For customer support (clean phone audio), Medium is usually sufficient. For noisy environments or accented speech, Large delivers better accuracy at the cost of latency—offset with GPU acceleration or quantization.

VAD Sensitivity and Chunk Size

VAD that's too aggressive clips the end of user utterances, causing incomplete transcriptions ("I need help with my acco—"). Too permissive and responses stall while the bot waits for silence that never comes cleanly. Silero VAD exposes two tunable parameters:

- Chunk size: 30ms audio windows (default); shorter windows increase CPU load without accuracy gains

- Silence threshold (min_silence_duration_ms): Default 100ms; how long silence must last after speech ends before end-of-turn triggers

Overly short thresholds (50ms) cause the bot to interrupt mid-sentence. Increase to 150-200ms for non-native speakers who pause mid-thought more frequently.
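The effect of the silence threshold is easy to see in a toy end-of-turn detector. The sketch below is not Silero's implementation: it takes per-chunk speech probabilities as input (which a VAD model would supply) and borrows the `min_silence_duration_ms` name only for familiarity. The function name and the 0.5 probability threshold are assumptions.

```python
def detect_end_of_turn(speech_probs, chunk_ms=30, threshold=0.5, min_silence_duration_ms=100):
    """Return the index of the chunk where end-of-turn fires, or None if it never does."""
    silence_ms = 0
    heard_speech = False
    for i, prob in enumerate(speech_probs):
        if prob >= threshold:
            heard_speech = True
            silence_ms = 0  # any speech resets the silence timer
        elif heard_speech:
            silence_ms += chunk_ms
            if silence_ms >= min_silence_duration_ms:
                return i
    return None


# Two chunks of speech, a 60ms pause, more speech, then real silence.
probs = [0.9, 0.9, 0.1, 0.1, 0.9, 0.9] + [0.1] * 7
```

With this input, a 50ms threshold fires at chunk 3, in the middle of the pause, while 100ms and 200ms thresholds wait until chunks 9 and 12, after the speaker has actually finished.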

LLM Context Window and System Prompt Length

Every token in the system prompt plus conversation history consumes inference time and reduces room for actual conversation. A bloated 800-token prompt may cause the LLM to drop early instructions as context fills, causing it to ignore constraints mid-dialogue.

Two practical limits to enforce:

- System prompt cap: Keep under 400 tokens to preserve at least 1,600 tokens for conversation history within a 2,048-token context window

- Latency trade-off: IBM's Time to First Token research shows prompt processing (the prefill phase) is often the largest latency contributor. Even so, cutting a prompt by 50% may reduce TTFT by only 1-5%, so the main payoff of a shorter prompt is conversational coherence over longer sessions

Streaming vs. Batch at Each Stage

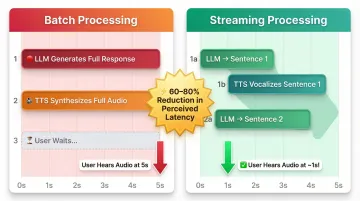

Batch processing compounds latency at every stage. If your LLM generates a 40-word response in 2 seconds and TTS takes another 3 seconds to synthesize the full audio, the user waits 5 seconds before hearing a single word.

Streaming allows TTS to start vocalizing the first sentence while the LLM generates the rest—cutting perceived latency by 60-80%. Not all tools support this natively:

- Streaming-ready: XTTS-v2, Piper (with --sentencemode), faster-whisper, Groq API

- Batch-only by default: OpenAI Whisper API (streaming requires custom implementation), some local LLM runners

Choose components with native streaming APIs and configure your orchestrator to handle partial outputs.
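The 40-word example can be worked through numerically. The sketch below assumes that LLM generation and TTS synthesis time both scale linearly with word count, and that TTS starts as soon as the first sentence is complete. Both are simplifications, and the function name is hypothetical.

```python
def time_to_first_audio(total_words, llm_seconds, tts_seconds, first_sentence_words):
    """Estimate seconds until the user hears audio: (batch, sentence-level streaming)."""
    batch = llm_seconds + tts_seconds
    # With streaming, only the first sentence must be generated and synthesized.
    fraction = first_sentence_words / total_words
    streaming = (llm_seconds + tts_seconds) * fraction
    return batch, streaming


batch, streaming = time_to_first_audio(40, 2.0, 3.0, first_sentence_words=8)
reduction = 1 - streaming / batch
```

Under these assumptions an 8-word first sentence brings time to first audio from 5 seconds to about 1 second, an 80% reduction. A 16-word first sentence gives about 2 seconds, or 60%.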

Common Mistakes When Building Open Source Voice Bots

Most open source voice bot failures trace back to a handful of repeatable mistakes. Catching them early prevents the silent crashes, runaway LLM behavior, and latency spikes that kill user trust.

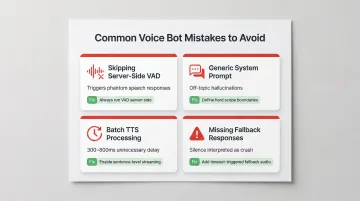

Skipping Server-Side VAD

Relying on the client device for voice activity detection is one of the fastest ways to break a voice bot in production. Background music, keyboard sounds, or HVAC noise cause ASR to transcribe gibberish or miss end-of-turn signals entirely. The result: the bot responds to phantom speech or waits indefinitely for input that already ended.

Always run VAD server-side, where you control both the audio quality and the detection algorithm.

Using a Generic System Prompt

LLMs without explicit scope constraints will attempt to answer anything — "What's the weather?" or "Tell me a joke" included. This creates unpredictable behavior and wastes tokens on responses the bot was never designed to give.

Define hard boundaries in the system prompt. For example: "You are a billing support agent. You cannot provide technical troubleshooting or account password resets." Explicit refusal instructions keep the bot predictable and on-task.

Treating TTS as a Batch Step

Generating the full text response before starting TTS synthesis adds 300–800ms of unnecessary latency. Users feel the delay even when they can't quantify it.

Configure TTS to stream starting at the first sentence or sentence fragment. Total generation time stays the same, but users perceive the bot as dramatically more responsive.

Missing Fallback Responses for Pipeline Failures

When any component times out or returns an error, the bot goes silent. Users interpret silence as a crash and hang up. Every pipeline stage needs graceful degradation:

- Pre-recorded audio ("I'm having trouble connecting, please hold")

- Simplified fallback logic when the LLM is unavailable

- Timeout thresholds that trigger a response rather than infinite waiting

Troubleshooting Issues in Your Voice Bot Pipeline

Most pipeline failures in production are not model quality issues—they are integration failures, configuration gaps, or edge cases in real user speech that only surface at scale.

Bot Responds to Background Noise or Its Own Audio Output (Echo)

Likely cause: Device-side VAD is not filtering non-speech audio, or the microphone is picking up speaker output (acoustic echo).

What to check:

- Verify VAD silence threshold is calibrated to the deployment environment (office vs. call center vs. home)

- Implement acoustic echo cancellation at the client layer using WebRTC AEC3 or RNNoise before audio is sent to the server

- Test with recordings that include TV audio, music, or multiple speakers to confirm VAD doesn't trigger on background voices

Bot Clips the End of User Utterances, Causing Incomplete Transcriptions

Likely cause: End-of-turn silence threshold is set too short, triggering ASR before the user finishes speaking.

What to check:

- Increase min_silence_duration_ms in your VAD config from 100ms to 150-200ms

- Test against recordings of your target user population, particularly non-native speakers who pause mid-sentence more frequently

- Profile real calls to measure how long users naturally pause between phrases—calibrate your threshold accordingly

LLM Response Drifts Off-Topic or Forgets Earlier Instructions Mid-Conversation

Likely cause: System prompt is too long and the combined token count of prompt + conversation history exceeds the model's effective context window, causing early instructions to be dropped.

How to fix it:

- Audit total token count per turn using your LLM provider's tokenizer

- Trim system prompt to under 400 tokens—keep only essential instructions

- Implement a sliding window that preserves the system prompt and last 4-6 turns but drops older history

- If using a 4K context model, reserve at least 2K tokens for conversation history and 2K for prompt + generation

End-to-End Latency Exceeds 1 Second Consistently

Likely cause: One or more components are processing in batch mode rather than streaming, or ASR/LLM is running on CPU without hardware acceleration.

What to check:

- Profile each stage separately to identify the bottleneck (ASR, LLM, or TTS)

- Enable streaming at ASR and TTS—verify the orchestrator handles partial outputs

- If on CPU, quantize the ASR model (INT8 via faster-whisper) or switch to a smaller variant (Medium instead of Large)

- Consider a managed inference provider for the LLM stage if local inference is too slow

- For teams targeting sub-500ms response times, Dograh AI provides an open-source pipeline with integrated ASR, LLM, and TTS streaming — its LoopTalk testing framework can also simulate real conversation scenarios to catch latency regressions before they reach production

Once you've resolved component-level bottlenecks, the next step is validating your pipeline end-to-end under realistic load conditions.

Alternatives to Building Everything from Scratch

Assembling and maintaining every open-source component individually is engineering-intensive. Depending on your team's bandwidth and goals, three alternatives exist at different abstraction levels — each suited to a different trade-off between control and speed.

Use a Voice Pipeline Orchestration Framework (e.g., Pipecat, EchoKit)

Best for teams comfortable writing code but unwilling to hand-wire low-level WebSocket routing, VAD integration, and streaming coordination from scratch. Pipecat (BSD-2-Clause license) and EchoKit (GPL-3.0) provide structured scaffolds — you connect components rather than build the plumbing.

The catch: you still select, host, and maintain each AI model (ASR, LLM, TTS) yourself. Debugging across abstracted layers adds complexity, and these frameworks reduce integration time without eliminating operational overhead.

Use a Pre-Integrated Open-Source Voice AI Platform (e.g., Dograh AI)

Proprietary speech-to-speech APIs such as the OpenAI Realtime API are best for use cases requiring the highest conversational naturalness (barge-in, emotion detection, audio-native understanding) where cost is not the primary constraint. OpenAI Realtime API pricing runs approximately $0.06/min for audio input and $0.24/min for audio output.

The trade-offs are significant at scale:

- Cost: 10–50x more expensive per minute than self-hosted open source

- Compliance: No self-hosting option means audio leaves your infrastructure, disqualifying it for HIPAA-regulated or security-sensitive deployments

- Lock-in: No ability to swap models or customize pipeline behavior

Frequently Asked Questions

What are the best open-source tools for building a voice bot pipeline?

The recommended stack includes Whisper (or Vosk for edge devices) for ASR, Silero VAD for voice activity detection, a local LLM via LlamaEdge or managed via Groq for dialogue, and Piper (CPU) or XTTS-v2 (GPU) for TTS, all connected via a streaming WebSocket orchestrator like Pipecat.

How do I keep end-to-end voice bot latency under 500ms?

Enable streaming at every stage (ASR, LLM, TTS), use a smaller quantized ASR model on GPU, and profile each component individually to identify bottlenecks before optimizing. The LLM is typically the slowest stage: reduce context size or switch to a faster provider.

Can an open-source voice bot be HIPAA or GDPR compliant?

Yes. Self-hosted stacks achieve compliance because audio and transcription data never leave your infrastructure. You'll still need access controls, encryption at rest and in transit, and a documented data handling policy, but the compliance path is significantly cleaner than with proprietary API providers.

What is the difference between streaming and batch processing in a voice bot?

Batch processing waits for complete output before passing to the next stage, adding latency at each step. Streaming passes partial outputs immediately, allowing TTS to start speaking the first sentence while the LLM is still generating the rest. This alone reduces perceived latency by 60–80%.

How do I handle interruptions and barge-in in an open-source voice bot?

Barge-in requires the orchestrator to detect new user speech while the bot is still speaking (via continuous VAD monitoring), stop TTS playback immediately, and re-route the new audio through the ASR pipeline. Open-source frameworks require explicit configuration to enable this; it's off by default.
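The control flow can be sketched with asyncio: playback runs as a task and is cancelled as soon as a "user started speaking" event fires. Everything here is a stand-in. `speak` simulates TTS playback with sleeps, the `asyncio.Event` would be set by your VAD in a real pipeline, and the function names are hypothetical.

```python
import asyncio


async def speak(chunks, played, chunk_seconds=0.02):
    """Stand-in for TTS playback: 'plays' one chunk at a time."""
    for chunk in chunks:
        await asyncio.sleep(chunk_seconds)
        played.append(chunk)


async def speak_with_barge_in(chunks, user_speech_started):
    """Play chunks until done or until the user starts speaking."""
    played = []
    playback = asyncio.create_task(speak(chunks, played))
    barge_in = asyncio.create_task(user_speech_started.wait())
    done, _pending = await asyncio.wait(
        {playback, barge_in}, return_when=asyncio.FIRST_COMPLETED
    )
    interrupted = barge_in in done and playback not in done
    for task in (playback, barge_in):
        if not task.done():
            task.cancel()  # stop playback, or stop waiting for speech
    await asyncio.gather(playback, barge_in, return_exceptions=True)
    return played, interrupted


async def demo(interrupt_after=None):
    user_speech_started = asyncio.Event()
    if interrupt_after is not None:
        # Simulate the VAD detecting user speech partway through playback.
        asyncio.get_running_loop().call_later(interrupt_after, user_speech_started.set)
    chunks = [f"chunk-{i}" for i in range(10)]
    return await speak_with_barge_in(chunks, user_speech_started)
```

After an interruption, the orchestrator would also need to discard any TTS audio already queued for the client before routing the new speech to ASR.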

How should I test a voice bot before deploying it to production?

Use AI-to-AI testing — a second LLM simulating real callers — to catch dialogue failures, combined with latency profiling under concurrent load. Unit testing individual components misses the conversation-level edge cases that only surface during multi-turn interactions.
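The shape of an AI-to-AI test loop is sketched below with stubs: a caller function and a bot function take turns, and the transcript is then checked against simple expectations. In a real setup both `caller` and `bot` would wrap LLM calls. The scripted caller, the rule-based `stub_bot`, and the phrase checks are all hypothetical placeholders.

```python
def run_simulated_call(bot, caller, max_turns=6):
    """Alternate caller and bot turns; return the transcript as (speaker, text) pairs."""
    transcript = []
    bot_text = ""
    for _ in range(max_turns):
        caller_text = caller(bot_text, transcript)
        if caller_text is None:  # the simulated caller hangs up
            break
        transcript.append(("caller", caller_text))
        bot_text = bot(caller_text, transcript)
        transcript.append(("bot", bot_text))
    return transcript


def check_transcript(transcript, forbidden_phrases, required_phrase):
    """Return a list of failure descriptions; an empty list means the call passed."""
    bot_lines = [text.lower() for speaker, text in transcript if speaker == "bot"]
    failures = [
        f"forbidden phrase: {phrase}"
        for phrase in forbidden_phrases
        if any(phrase.lower() in line for line in bot_lines)
    ]
    if not any(required_phrase.lower() in line for line in bot_lines):
        failures.append(f"missing required phrase: {required_phrase}")
    return failures


def scripted_caller(lines):
    """Stub caller that reads from a script; an LLM persona would replace this."""
    remaining = iter(lines)
    return lambda bot_text, transcript: next(remaining, None)


def stub_bot(caller_text, transcript):
    """Rule-based stand-in for the voice bot under test."""
    if "password" in caller_text.lower():
        return "I can't help with passwords. Please contact the security team."
    return "I can help with billing. What is your account number?"
```

Running many such calls with varied caller personas, and failing the build on any non-empty failure list, turns conversation-level checks into a regression suite.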