Introduction

Replacing a single contact center agent costs between $10,000 and $20,000—and with industry attrition at 31.2% annually, those costs compound fast. At the same time, customers expect instant responses, 24/7 availability, and personalized service every time they call.

Voice AI is rapidly becoming the answer to these challenges. Gartner predicts that by 2028, at least 70% of customers will use a conversational AI interface to start their customer service journey. The global AI voice generator market, valued at $3.0 billion in 2024, is expected to reach $20.4 billion by 2030.

That growth hasn't gone unnoticed in regulated industries. Healthcare providers, legal firms, financial institutions, and defense contractors are turning to open source, self-hosted voice AI—not just for cost reasons, but because proprietary cloud platforms create real compliance risk. Self-hosted solutions offer:

- Full data sovereignty with no third-party cloud exposure

- Transparent pricing with no hidden per-call fees

- Built-in flexibility to meet HIPAA, GDPR, and PCI DSS requirements

This guide breaks down the leading open source voice AI platforms for production use—what they can handle, how they deploy, and which regulated environments they're actually built for.

TLDR

- Voice AI automates phone-based workflows using STT, LLM, and TTS working together to handle support calls, qualify leads, and schedule appointments

- Open source platforms cut per-minute charges, eliminate vendor lock-in, and give businesses full data control for HIPAA and GDPR compliance

- Solutions range from plug-and-play platforms to component-level tools that require engineering to assemble

- Choosing the right solution depends on compliance needs, technical resources, call volume, and integration requirements

- Dograh AI deploys agents in under two minutes, supports SOC 2, HIPAA, GDPR, and PCI DSS compliance, and charges no platform fees

What Is Voice AI for Business Automation?

Voice AI for business refers to intelligent systems that conduct natural, real-time phone conversations using a three-layer pipeline: Speech-to-Text (STT) transcribes spoken words, a Large Language Model (LLM) processes meaning and generates responses, and Text-to-Speech (TTS) converts responses back to voice output.
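The three layers run in sequence on every conversational turn. Below is a minimal Python sketch of that loop. It assumes hypothetical `SpeechToText`, `LanguageModel`, and `TextToSpeech` interfaces. The `FakeSTT`, `KeywordLLM`, and `FakeTTS` classes are placeholders that stand in for real models, so the control flow can run on its own. Production systems typically stream audio and overlap the stages rather than waiting for each one to finish.

```python
from dataclasses import dataclass, field
from typing import Protocol


class SpeechToText(Protocol):
    def transcribe(self, audio: bytes) -> str: ...


class LanguageModel(Protocol):
    def respond(self, history: list[dict[str, str]]) -> str: ...


class TextToSpeech(Protocol):
    def synthesize(self, text: str) -> bytes: ...


@dataclass
class VoicePipeline:
    stt: SpeechToText
    llm: LanguageModel
    tts: TextToSpeech
    history: list[dict[str, str]] = field(default_factory=list)

    def handle_turn(self, caller_audio: bytes) -> bytes:
        # Layer 1 (STT): transcribe the caller's audio into text.
        user_text = self.stt.transcribe(caller_audio)
        self.history.append({"role": "user", "content": user_text})
        # Layer 2 (LLM): generate a reply from the full conversation so far.
        reply = self.llm.respond(self.history)
        self.history.append({"role": "assistant", "content": reply})
        # Layer 3 (TTS): convert the reply back into audio for the caller.
        return self.tts.synthesize(reply)


# Hypothetical stand-ins that fake each layer so the sketch runs offline.
class FakeSTT:
    def transcribe(self, audio: bytes) -> str:
        return audio.decode("utf-8")  # pretend the "audio" is already text


class KeywordLLM:
    def respond(self, history: list[dict[str, str]]) -> str:
        if "appointment" in history[-1]["content"].lower():
            return "Sure, what day works for you?"
        return "How can I help you today?"


class FakeTTS:
    def synthesize(self, text: str) -> bytes:
        return text.encode("utf-8")  # pretend the bytes are audio


pipeline = VoicePipeline(FakeSTT(), KeywordLLM(), FakeTTS())
print(pipeline.handle_turn(b"I need an appointment"))  # b'Sure, what day works for you?'
```

Because each layer sits behind its own interface, the pipeline itself never changes when a component is replaced.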

That's a sharp departure from older Interactive Voice Response (IVR) systems. Traditional IVR relies on rigid menu trees — "Press 1 for sales, press 2 for support" — that frustrate customers and drive abandonment rates of 12-20%. AI voice agents understand natural language, adapt to context, and handle requests end-to-end without scripted menus.

Voice AI does more than answer phones. It performs complete workflows: updating CRM records, scheduling appointments, qualifying leads, processing payments, and escalating complex issues to human agents with full conversation context. An AI-handled voice interaction costs approximately $0.20, compared to $5.50 for a call handled entirely by a human agent.

Voice AI vs. IVR vs. Chatbots

| Dimension | Legacy IVR | AI Voice Agent | Text Chatbot |
|---|---|---|---|
| Interaction Mode | Touch-tone menus | Natural voice conversation | Text-based messaging |
| Containment Rate | 20-40% | 50-75% | Varies widely |
| First Contact Resolution | 65-75% | 85-95% | 60-80% |
| Adaptability | Static, requires reprogramming | Dynamic, learns from context | Dynamic, text-only |
| Best Use Cases | Simple routing | Complex support, sales, scheduling | Asynchronous support |

The gap in containment and resolution rates comes down to workflow depth — and that's what makes choosing the right platform so consequential.

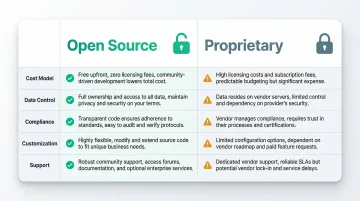

Open Source vs. Proprietary Voice AI: Why the Difference Matters

Proprietary voice AI platforms operate on pay-as-you-go models that compound costs across multiple service layers:

- Twilio: $0.0140/min for outbound calls + $0.10/min for AI Assistant processing + $0.0500/min for transcription

- Bland AI: $0.11–$0.14/min for AI processing + $0.015 per outbound call attempt

- Retell AI: $0.07–$0.31/min for the agent + $0.055/min for voice infrastructure

This practice—charging separately for telephony, STT, TTS, and LLM usage—is often called "double billing." For high-volume business users, these layered charges erode margins and make cost forecasting difficult.
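The sketch below sums the per-layer rates quoted above to show how layered billing adds up. The 100,000-minute monthly volume and the 3-minute Bland AI call are illustrative assumptions. A real invoice may contain other line items, and vendor prices change, so treat these as the figures quoted here rather than current list prices.

```python
# Per-minute rates quoted above for Twilio, in USD per minute.
TWILIO_RATES = {
    "outbound_call": 0.0140,
    "ai_assistant": 0.10,
    "transcription": 0.0500,
}


def blended_rate(rates: dict[str, float]) -> float:
    """Add every layer that is billed per minute into one effective rate."""
    return sum(rates.values())


def monthly_cost(rates: dict[str, float], minutes_per_month: int) -> float:
    """Effective monthly bill for a given call volume, rounded to cents."""
    return round(blended_rate(rates) * minutes_per_month, 2)


def per_call_cost(rate_per_min: float, minutes: float, per_attempt_fee: float = 0.0) -> float:
    """Cost of one call when a flat fee is also charged per attempt (as with Bland AI)."""
    return per_attempt_fee + rate_per_min * minutes


print(round(blended_rate(TWILIO_RATES), 3))     # 0.164
print(monthly_cost(TWILIO_RATES, 100_000))      # 16400.0
print(round(per_call_cost(0.11, 3, 0.015), 3))  # 0.345
```

At the Twilio rates above, the three layers add up to about $0.164 per minute, or roughly $16,400 for 100,000 minutes a month.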

Beyond pricing, proprietary platforms create vendor lock-in. According to IDC, 42% of AI migrations to hyperscalers fail, with remediation and data egress costs averaging $5M to $10M per incident. Once your conversational flows, integrations, and customer data are embedded in a proprietary system, switching providers becomes prohibitively expensive. You cannot easily change model providers, customize behavior, or move your data without vendor cooperation.

Compliance and Data Sovereignty

Regulated industries cannot send sensitive call data to third-party cloud platforms without contractual safeguards in place. Under HIPAA, a Cloud Service Provider that creates, receives, maintains, or transmits electronic protected health information (ePHI) on an organization's behalf must sign a Business Associate Agreement (BAA), even if the provider stores only encrypted data and lacks the decryption keys. GDPR Article 28 requires data processors to operate under a binding contract and to obtain the controller's authorization before engaging sub-processors.

PCI DSS prohibits storing sensitive authentication data such as CVV codes after authorization, including in digital audio recordings. For healthcare, legal, defense, and finance organizations, self-hosted open source voice AI is the most direct way to keep audit trails, data retention controls, and encryption under the organization's own control rather than a vendor's.
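In practice, meeting the CVV rule means scrubbing card data before transcripts are stored for QA or analytics. Here is a minimal sketch that assumes transcripts arrive as plain text. It masks 13 to 19 digit runs that pass the Luhn checksum, plus any 3 or 4 digit code spoken right after a keyword such as "CVV". Regex heuristics like this miss digits that are spelled out ("four one one one"), and they do nothing for the audio recording itself, which needs separate handling.

```python
import re

# 13-19 digits, optionally separated by single spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")
# A 3- or 4-digit code that directly follows a CVV-style keyword.
CVV_AFTER_KEYWORD = re.compile(
    r"\b(cvv|cvc|security code)(\s*(?:is|:)?\s*)(\d{3,4})\b", re.IGNORECASE
)


def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit from the right."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        n = int(ch)
        if i % 2 == 1:
            n *= 2
            if n > 9:
                n -= 9
        total += n
    return total % 10 == 0


def redact_transcript(text: str) -> str:
    def mask_card(match: re.Match) -> str:
        digits = re.sub(r"[ -]", "", match.group(0))
        # Only mask digit runs that look like real card numbers.
        return "[CARD REDACTED]" if luhn_valid(digits) else match.group(0)

    text = CARD_CANDIDATE.sub(mask_card, text)
    # Keep the keyword and separator, replace only the code itself.
    return CVV_AFTER_KEYWORD.sub(lambda m: m.group(1) + m.group(2) + "[CVV REDACTED]", text)


print(redact_transcript("My card is 4111 1111 1111 1111 and the CVV is 123."))
# My card is [CARD REDACTED] and the CVV is [CVV REDACTED].
```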

Customization and Model Flexibility

Open source licenses like BSD 2-Clause allow full commercial use and modification without royalties, making it straightforward to build proprietary workflows on top of the platform. That flexibility extends to the AI stack itself.

Proprietary platforms lock you into their chosen models. Open source platforms let you swap individual components, such as a fine-tuned domain-specific LLM for medical conversations or a custom voice model in place of the default TTS. A cardiology practice, for example, could pair an STT model tuned for clinical vocabulary with a specialized clinical LLM. The STT layer determines how accurately terms like drug names are transcribed, while the LLM determines how well the agent reasons about them.
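A name-based registry is one common way to make components swappable, so that switching providers becomes a configuration change. The sketch below assumes a hypothetical `REGISTRY` and `register` decorator. The provider names and stub classes are placeholders, not real SDKs or models.

```python
from typing import Callable

# Hypothetical registry: layer -> provider name -> factory. The provider
# names are placeholders, not identifiers from any real SDK.
REGISTRY: dict[str, dict[str, Callable[[], object]]] = {"stt": {}, "llm": {}, "tts": {}}


def register(layer: str, name: str):
    """Class decorator that makes a component selectable by name."""
    def decorator(factory):
        REGISTRY[layer][name] = factory
        return factory
    return decorator


@register("stt", "general")
class GeneralSTT:
    def transcribe(self, audio: bytes) -> str:
        return "general-purpose transcript"


@register("stt", "clinical-vocab")
class ClinicalSTT:
    def transcribe(self, audio: bytes) -> str:
        return "transcript tuned for clinical vocabulary"


@register("llm", "general")
class GeneralLLM:
    def respond(self, prompt: str) -> str:
        return f"general answer to: {prompt}"


@register("llm", "clinical")
class ClinicalLLM:
    def respond(self, prompt: str) -> str:
        return f"clinical answer to: {prompt}"


def build_stack(config: dict[str, str]) -> dict[str, object]:
    """Instantiate one component per layer from a name-based config."""
    stack = {}
    for layer, name in config.items():
        factory = REGISTRY.get(layer, {}).get(name)
        if factory is None:
            raise ValueError(f"no {layer!r} provider named {name!r}")
        stack[layer] = factory()
    return stack


# Swapping models is a config change, not a code change.
cardiology = build_stack({"stt": "clinical-vocab", "llm": "clinical"})
print(cardiology["llm"].respond("chest pain"))  # clinical answer to: chest pain
```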

Open Source vs. Proprietary Comparison

| Factor | Open Source | Proprietary |
|---|---|---|
| Cost Model | Infrastructure + AI API costs; no platform fees | Platform fees + per-minute charges for STT/TTS/LLM |
| Data Control | Full control; self-hosted or private cloud | Vendor-controlled cloud infrastructure |
| Compliance | Self-hosting keeps data in your environment, simplifying HIPAA/GDPR compliance | Requires BAA/DPA; limited control |
| Customization | Swap models, modify workflows, extend functionality | Limited to vendor-provided options |
| Support | Community + optional commercial support | Vendor support included in pricing |

Top Business Use Cases for Voice AI Automation

McKinsey reports that AI deployments can reduce total customer service interactions by 40-50%, and Intercom's Fin Voice already resolves 53% of calls end-to-end on average. Those numbers reflect what's happening across industries, not just contact centers. Voice AI now handles everything from sales outreach to HR self-service.

Customer Support and Inbound Call Handling

Voice AI handles high-volume inbound support by answering frequently asked questions, authenticating callers, tracking order status, and routing complex issues to human agents with full conversation context. The system operates 24/7, eliminating wait times during peak periods and outside business hours. First Contact Resolution rates reach 85-95%, compared to 65-75% for traditional IVR systems.

Sales and Lead Qualification

Outbound voice AI calls prospect lists, asks qualification questions, detects intent and sentiment, and books meetings directly into CRM systems without involving human sales reps. Some platforms add emotion detection and objection handling, adjusting responses based on how a prospect sounds in real time. The speed advantage alone is significant: leads contacted within 5 minutes are up to 21x more likely to qualify than leads contacted after 30 minutes.

Appointment Scheduling

In healthcare, legal, and real estate, voice AI handles inbound scheduling requests, confirmations, rescheduling, and reminders. Predictive model-driven live telephone outreach reduced no-show rates from 36% to 33% in one healthcare study. Automated reminder systems in real estate reduce missed appointments by approximately 90%, freeing administrative staff for higher-value work.

Payment Reminders and Finance Operations

Voice AI automates accounts receivable and collections through outbound calls for payment reminders, invoice follow-up, and payment option presentation. Agentic AI implementations have cut Days Sales Outstanding (DSO) by 20% within 90 days and automated 90% of collections follow-up. Any deployment handling payment data must meet PCI DSS requirements, which platforms like Dograh AI support out of the box.

HR and Internal Operations

Internal use cases include employee self-service for payroll queries, leave balance inquiries, and policy questions. Voice AI conducts initial candidate screening calls and schedules interviews, reducing HR administrative burden. One organization reported an 88% reduction in contract processing time and freed up 12,000 work hours through HR automation.

Best Open Source Voice AI Tools for Business

Rather than listing every available tool, this section covers the open source ecosystem (from complete production platforms to component-level tools) so you can assess the level of assembly your team actually needs.

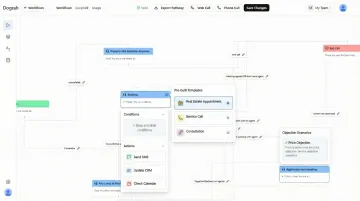

Dograh AI

Dograh AI is a 100% open source (BSD 2-Clause), self-hostable voice AI platform built for production-grade deployments. Agents go live in under 2 minutes using a no-code workflow builder with pre-built templates. The platform delivers sub-500ms latency and supports multi-agent conversational flows with 45+ minute context retention.

A few capabilities set it apart from other open source options:

- Cuts manual QA effort with LoopTalk, an AI-to-AI testing framework that runs simulated customer scenarios autonomously

- Applies NEPQ-based sales methodology with emotion detection and dynamic pauses for more natural conversations

- Provides a drag-and-drop workflow builder designed for non-engineers, with no code required

- Supports bring-your-own-keys for all major AI models with full configurability

- Meets SOC 2, HIPAA, GDPR, and PCI DSS requirements, making it one of the few open source platforms deployable in regulated industries without additional compliance work

- Charges no platform fees: you pay only infrastructure and AI API costs, with no double billing

Dograh's self-hosting option provides complete data sovereignty, essential for healthcare, legal, and financial services organizations.

Vapi

Vapi is a developer-focused, API-native orchestration layer that connects STT, LLM, and TTS components into a custom voice stack. It targets sub-500ms latency and gives technical teams significant flexibility over component selection. The trade-off: reaching production readiness requires more engineering effort, and built-in compliance certifications are absent — so Vapi fits best when you have DevOps and ML engineering resources already in place.

Bolna AI

Bolna (MIT License) is a telephony agent framework for teams building voice agents on top of existing infrastructure. It handles end-to-end orchestration across ASR, LLM, and TTS providers over WebSockets, offering solid flexibility for developers who are comfortable with setup overhead. Compared to application-ready platforms, it requires more technical expertise to get to a production-ready state.

Open Source Component Tools

For teams with specific requirements no existing platform meets, building from components is also an option. The main pieces of the stack:

- OpenAI Whisper for Speech-to-Text (MIT License)

- Piper, Kokoro, or StyleTTS2 for Text-to-Speech (Coqui, the company behind Coqui TTS, shut down in January 2024)

- Llama 3 or Mistral for LLM reasoning

This approach gives you maximum flexibility and control. It also requires significant engineering effort, ongoing maintenance, and none of the production-ready packaging that complete platforms provide: no built-in compliance certifications, no monitoring, no testing frameworks. For most businesses, component assembly is worth considering only when a specific technical requirement genuinely cannot be met by an existing platform.

How to Choose and Deploy Your Open Source Voice AI Solution

Key Evaluation Criteria

Evaluate platforms across five key factors:

- Compliance requirements — Does the platform support self-hosting with documented certifications (SOC 2, HIPAA, GDPR)? Can you maintain data sovereignty and audit trails?

- Latency — Human conversational turn-taking has a modal gap of around 200ms, and ITU-T G.114 recommends that one-way network delay not exceed 400ms for general network planning. For natural conversation, target sub-500ms end-to-end latency.

- Integration depth — Does it integrate with your existing CRM (Salesforce, HubSpot), telephony (Twilio, SIP trunks), and calendar systems (Google Calendar, Calendly)? Are these integrations pre-built, or do they require custom configuration?

- Model flexibility — Can you swap LLMs, fine-tune models for your industry, or bring your own API keys? Proprietary platforms lock you into their choices.

- Total cost of ownership — Factor in infrastructure costs, AI API usage, engineering time for setup and maintenance, and ongoing operational overhead. Open source eliminates platform fees but shifts hosting and support responsibility to your team.

For regulated industries, also apply NIST AI RMF 1.0 and ISO/IEC 23053 to assess vendor risk management and system lifecycle processes — these frameworks surface gaps that standard vendor questionnaires miss.
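Applying the latency criterion usually means budgeting each stage of a turn against the 500ms target. This sketch treats the stages as strictly sequential, which is a simplification because streaming pipelines overlap them. The stage names and millisecond values are hypothetical, not measurements of any platform.

```python
TARGET_MS = 500  # end-to-end target from the latency criterion


def check_latency_budget(stages_ms: dict[str, int], target_ms: int = TARGET_MS) -> dict:
    """Treat stages as strictly sequential and compare their sum to the target."""
    total = sum(stages_ms.values())
    return {
        "total_ms": total,
        "within_target": total <= target_ms,
        "headroom_ms": target_ms - total,
        "slowest_stage": max(stages_ms, key=stages_ms.get),
    }


# Hypothetical per-stage timings for one conversational turn.
turn = {
    "network_in": 40,
    "stt_final_transcript": 150,
    "llm_first_token": 200,
    "tts_first_audio": 80,
    "network_out": 40,
}
print(check_latency_budget(turn))
# {'total_ms': 510, 'within_target': False, 'headroom_ms': -10, 'slowest_stage': 'llm_first_token'}
```

Surfacing the slowest stage shows where to optimize first. In this made-up example, shaving 10ms off LLM time-to-first-token would bring the turn back within budget.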

Deployment Options: Cloud vs. Self-Hosted

Your deployment model determines how much control you retain over data, infrastructure, and compliance. Two primary paths exist:

| Factor | Cloud-Hosted | Self-Hosted |
|---|---|---|
| Setup speed | Fast, managed by vendor | Slower, requires your infrastructure |
| Data control | Shared responsibility | Full data sovereignty |
| Best for | Teams without strict residency rules, or early pilots | HIPAA, defense, EU-only data mandates |
| Key requirement | Verify SLA and uptime commitments | Demand Docker/Kubernetes deployment docs, not just source code |

Cloud-hosted works well for organizations testing voice AI before committing to infrastructure. Self-hosted is required for the strictest compliance regimes — and when evaluating vendors, comprehensive deployment documentation matters as much as the code itself.

Getting Started: What the First 30 Days Look Like

A practical deployment sequence:

- Define your target use case and success metrics — Start narrow (e.g., qualify inbound leads for one product line) rather than broad

- Select your platform — Based on compliance, technical resources, and integration needs

- Configure your first agent — Use templates or workflow builders to create scripted flows

- Integrate with core systems — Connect CRM, telephony, and calendar systems; test data flow

- Run simulated testing — Use AI-to-AI testing tools like LoopTalk to validate behavior before live calls

- Go live with limited volume — Start with 10-20% of call volume or a specific use case

- Monitor and iterate — Track containment rates, resolution rates, latency, and customer satisfaction; refine prompts and workflows based on real performance
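The limited-volume launch and the monitoring step can both be sketched in a few lines. This example assumes calls are identified by a caller ID string, which it hashes so that each caller consistently lands in the same group. It then computes containment, first contact resolution, and p95 latency from hypothetical `CallRecord` entries. The metric definitions are illustrative assumptions; align them with whatever your platform's analytics actually report.

```python
import hashlib
from dataclasses import dataclass


def route_to_ai(caller_id: str, rollout_percent: int) -> bool:
    """Deterministically send roughly rollout_percent% of callers to the AI agent."""
    digest = hashlib.sha256(caller_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:2], "big") % 100  # 0..99, near-uniform
    return bucket < rollout_percent


@dataclass
class CallRecord:
    contained: bool               # finished without a human handoff
    resolved_first_contact: bool  # issue solved on this call
    latency_ms: int               # average turn latency during the call


def p95(values: list[int]) -> int:
    """95th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return ordered[rank - 1]


def summarize(calls: list[CallRecord]) -> dict[str, float]:
    n = len(calls)
    return {
        "containment_rate": sum(c.contained for c in calls) / n,
        "first_contact_resolution": sum(c.resolved_first_contact for c in calls) / n,
        "p95_latency_ms": p95([c.latency_ms for c in calls]),
    }


calls = [
    CallRecord(True, True, 300),
    CallRecord(False, True, 450),
    CallRecord(True, False, 600),
    CallRecord(True, True, 350),
]
print(summarize(calls))
# {'containment_rate': 0.75, 'first_contact_resolution': 0.75, 'p95_latency_ms': 600}
```

Hashing the caller ID, rather than picking calls at random, keeps a repeat caller's experience consistent during the pilot.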

With the right platform, the first agent can be live in minutes, not months. The most common failure point isn't technical — it's the gap between a promising pilot and a production-ready system. Platforms that include built-in analytics, AI-to-AI testing, and workflow monitoring close that gap before it becomes a sunk cost.

Frequently Asked Questions

How is AI used in process automation?

AI automates repetitive business processes by understanding natural language inputs, making decisions through an LLM, and triggering actions like CRM updates, appointment bookings, or payment processing without human intervention. Voice AI extends this capability to phone-based workflows.

Is AI cold calling illegal?

AI-driven outbound calls are legal in most jurisdictions when they comply with regulations such as the TCPA (USA), GDPR (Europe), and CASL and the CRTC's Unsolicited Telecommunications Rules (Canada). The FCC's 2024 Declaratory Ruling classifies AI-generated voices as "artificial" under the TCPA, so such calls require prior express consent, caller identification, and opt-out mechanisms. Consult legal counsel for your specific region and use case.

What is open source voice AI and how does it differ from proprietary solutions?

Open source voice AI platforms publish their source code under licenses like BSD 2-Clause or MIT, allowing businesses to self-host, modify, and extend the platform. Unlike proprietary tools that lock data and model choices to vendor infrastructure, open source provides transparency, customization, and freedom from platform fees.

Can open source voice AI be HIPAA and GDPR compliant?

Yes, when deployed and operated correctly. Self-hosted open source platforms like Dograh AI keep all call data within the business's controlled environment and support audit trails, data retention controls, and encryption. HIPAA still requires a Business Associate Agreement with any third-party STT, LLM, or TTS provider that processes ePHI. GDPR compliance rests on data sovereignty plus Article 28 processor contracts with any sub-processors.

How much does it cost to run an open source voice AI solution?

Open source platforms eliminate licensing fees, but businesses pay for infrastructure, AI model inference (STT/LLM/TTS APIs), and engineering time. Costs vary by call volume and stack configuration, and typically run well below proprietary alternatives — especially for organizations processing 100k+ minutes per month.
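Whether self-hosting pays off depends on where fixed costs and per-minute savings cross. This is a rough break-even sketch. It assumes a single blended proprietary rate, a single self-hosted API rate, and a fixed monthly cost that bundles infrastructure and engineering time. Every number in it is a hypothetical placeholder.

```python
import math


def monthly_costs(minutes: int, proprietary_rate: float, api_rate: float,
                  fixed_monthly: float) -> dict[str, float]:
    """Compare a per-minute proprietary bill with self-hosted fixed + usage costs."""
    return {
        "proprietary": proprietary_rate * minutes,
        "open_source": fixed_monthly + api_rate * minutes,
    }


def break_even_minutes(proprietary_rate: float, api_rate: float,
                       fixed_monthly: float) -> float:
    """Monthly minutes at which self-hosting becomes the cheaper option."""
    savings_per_minute = proprietary_rate - api_rate
    if savings_per_minute <= 0:
        return math.inf  # per-minute savings never cover the fixed costs
    return fixed_monthly / savings_per_minute


# Hypothetical inputs: $0.15/min all-in proprietary rate, $0.05/min for
# self-hosted STT/LLM/TTS API usage, and $5,000/month for hosting plus
# engineering time.
print(round(break_even_minutes(0.15, 0.05, 5_000)))  # 50000
```

With these placeholder inputs, self-hosting breaks even at about 50,000 minutes per month. Raising the fixed cost or narrowing the rate gap pushes that point higher.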

What technical expertise is needed to deploy an open source voice AI platform?

Requirements vary by platform. Complete platforms like Dograh AI offer no-code/low-code interfaces and can be deployed by non-engineers in minutes. Assembling a custom stack from components like Whisper, Piper, and Llama requires DevOps, ML engineering skills, and ongoing maintenance expertise.