Introduction

Voice-first AI has become the interface layer enterprises demand—but most proprietary platforms come with steep per-minute billing, double-charging traps, and vendor lock-in that makes production deployment prohibitively expensive for any team without venture capital runway. The BYOK (Bring Your Own Key) model, advertised with base rates as low as $0.05 per minute, masks the true cost: customers pay underlying STT, LLM, and TTS provider fees on top of platform charges, pushing real costs to $0.15–$0.30+ per minute. For high-volume enterprise deployments, this billing structure becomes unsustainable.

In 2026, mature open-source alternatives cover the full stack—speech recognition, language models, text-to-speech, and orchestration—and production-grade self-hosting is well within reach for teams with modest infrastructure experience.

Self-hosted frameworks eliminate platform fees entirely, give regulated industries complete data sovereignty for HIPAA and GDPR compliance, and put engineering teams back in control of their cost structure and technical roadmap.

Here's what you need to know to deploy without unnecessary overhead.

TL;DR

- Open-source voice AI in 2026 covers the full STT → LLM → TTS pipeline with no platform fees

- Sub-500ms response times are achievable with the right architecture and model selection—critical for natural conversations

- Self-hosted deployments give regulated industries complete data sovereignty for HIPAA and GDPR compliance

- The biggest hidden cost: double billing where platforms charge separately from underlying model providers

- Open-source platforms now support multi-turn context, function calling, interruption handling, and no-code workflow builders

The Voice AI Stack: What You're Actually Deploying

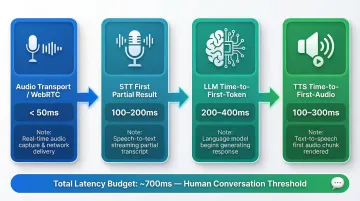

Every production voice agent runs on a three-component pipeline: Speech-to-Text (STT) converts incoming audio to text, a Large Language Model (LLM) processes the transcription and generates a response, and Text-to-Speech (TTS) converts that response back to audio. Each component contributes to total latency and quality trade-offs.

To achieve natural conversation (under 1 second perceived latency), the latency budget must be strictly managed:

| Pipeline Stage | Target Latency Budget | Notes |
|---|---|---|
| Audio Transport (WebRTC) | < 50ms | Requires global, low-latency media network |
| STT (First Partial Result) | 100–200ms | Requires streaming STT |
| LLM Time-to-First-Token | 200–400ms | Largest allocation; hardest to compress |
| TTS Time-to-First-Audio | 100–300ms | Requires streaming synthesis |
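These stages run back to back before the caller hears the first syllable, so the budget adds up. The small sketch below sums the table's ranges and checks a measured per-stage breakdown against the 1-second target. Treating the transport row's lower bound as 0ms is an assumption, and so are the stage names.

```python
# Per-stage latency budgets (min_ms, max_ms) from the table above.
# The 0 ms lower bound for transport is an assumption ("< 50ms" in the table).
BUDGET_MS = {
    "audio_transport": (0, 50),
    "stt_first_partial": (100, 200),
    "llm_ttft": (200, 400),
    "tts_first_audio": (100, 300),
}

def total_budget(budget: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Sum best-case and worst-case latency across sequential stages."""
    best = sum(lo for lo, _ in budget.values())
    worst = sum(hi for _, hi in budget.values())
    return best, worst

def within_target(measured_ms: dict[str, float], target_ms: float = 1000.0) -> bool:
    """Check a measured per-stage breakdown against an end-to-end target."""
    return sum(measured_ms.values()) < target_ms

best, worst = total_budget(BUDGET_MS)
print(f"best case {best} ms, worst case {worst} ms")  # best case 400 ms, worst case 950 ms
```

A 950ms worst case leaves almost no headroom under the 1-second target. That is why the LLM row is the one most worth compressing.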

Voice Activity Detection: The Unsung Component

Voice Activity Detection (VAD) gates when audio gets transcribed, reduces wasted computation, and directly controls whether the agent cuts users off or creates uncomfortable dead air. Teams that overlook VAD tuning typically see poor conversation quality in production.

That said, relying solely on VAD for turn-taking is problematic—it triggers on any audio resembling speech, failing to distinguish genuine interruptions from backchannels like "uh-huh" or background noise.
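A minimal energy-threshold VAD shows the two knobs that matter most. The first is how many consecutive loud frames open a speech segment. The second is how much trailing silence (the "hangover") closes it. This sketch assumes 20ms frames of float samples in [-1, 1], and the threshold and frame counts are hypothetical defaults. Because it only measures loudness, it has exactly the weakness described above: an "uh-huh" or a loud TV opens a segment just like real speech. Production stacks typically use a trained model such as Silero VAD rather than raw energy.

```python
import math

def frame_rms(frame: list[float]) -> float:
    """Root-mean-square energy of one audio frame (samples in [-1, 1])."""
    return math.sqrt(sum(s * s for s in frame) / len(frame)) if frame else 0.0

def detect_speech(frames: list[list[float]], threshold: float = 0.02,
                  min_speech_frames: int = 3,
                  hangover_frames: int = 10) -> list[tuple[int, int]]:
    """Return (start, end) frame ranges of detected speech, end exclusive."""
    segments = []
    in_speech = False
    loud_run = quiet_run = 0
    start = 0
    for i, frame in enumerate(frames):
        loud = frame_rms(frame) >= threshold
        if not in_speech:
            # Require several consecutive loud frames so clicks don't open a segment.
            loud_run = loud_run + 1 if loud else 0
            if loud_run >= min_speech_frames:
                in_speech, start, quiet_run = True, i - min_speech_frames + 1, 0
        else:
            # Hangover: close only after enough consecutive quiet frames,
            # so short mid-sentence pauses don't end the user's turn.
            quiet_run = 0 if loud else quiet_run + 1
            if quiet_run >= hangover_frames:
                segments.append((start, i - hangover_frames + 1))
                in_speech, loud_run = False, 0
    if in_speech:
        segments.append((start, len(frames) - quiet_run))
    return segments
```

With 20ms frames, these defaults open a segment after 60ms of sound and close it after 200ms of silence. Raising the hangover reduces cut-offs but adds dead air before every reply.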

Speech-to-Speech: The Emerging Alternative

End-to-end Speech-to-Speech (S2S) models eliminate the intermediate STT and TTS hops, achieving 200-300ms latency and preserving emotional prosody. However, as of 2026, S2S systems still trail STT-LLM-TTS pipelines in function calling reliability, reasoning depth, and hallucination control—most production deployments still use the three-component pipeline.

The Orchestration Layer

Given that pipeline, something still has to hold the conversation together. The orchestration layer is the middleware that manages conversation state, handles multi-turn context, triggers function calls (calendar lookups, CRM writes, transfers), and enforces guardrails. It's often where the most engineering effort is spent—and where proprietary platforms extract the most margin.
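One core orchestration job is keeping multi-turn context inside the LLM's window. The minimal sketch below keeps the system prompt and drops the oldest turns first. It assumes the common role/content chat-message shape and a crude word-count token estimate. Both are placeholders, not any specific framework's API.

```python
from dataclasses import dataclass, field

@dataclass
class ConversationState:
    system_prompt: str
    max_context_tokens: int = 2000
    turns: list[dict] = field(default_factory=list)

    @staticmethod
    def estimate_tokens(text: str) -> int:
        # Crude placeholder: one token per whitespace-separated word.
        # Swap in the real tokenizer of the model you deploy.
        return len(text.split())

    def add_turn(self, role: str, content: str) -> None:
        self.turns.append({"role": role, "content": content})

    def context(self) -> list[dict]:
        """System prompt plus the newest turns that fit in the token budget."""
        budget = self.max_context_tokens - self.estimate_tokens(self.system_prompt)
        kept = []
        for turn in reversed(self.turns):  # walk from newest to oldest
            cost = self.estimate_tokens(turn["content"])
            if cost > budget:
                break  # older turns are dropped once the budget is exhausted
            kept.append(turn)
            budget -= cost
        kept.reverse()
        return [{"role": "system", "content": self.system_prompt}] + kept
```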

Three deployment approaches:

- Own the full stack (core infrastructure teams prioritizing maximum flexibility)

- Use an open-source framework like Pipecat or Dograh AI to reduce build time

- Abstract all complexity away for end customers (application-layer teams)

The right choice depends on internal engineering capacity and how quickly the team needs a working agent in production.

Top Open-Source Voice-First Platforms in 2026

Pipecat

Pipecat is a Python-based open-source framework (BSD-2-Clause license) well-suited for prototyping and web-deployed voice agents. It orchestrates STT, LLM, and TTS services in async Python pipelines and integrates with WebRTC for real-time streaming via its RTVI protocol.
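The async-pipeline pattern can be sketched with plain asyncio. Each stage reads frames from an input queue, transforms them, and pushes the results downstream, and a None sentinel marks the end of the stream. This is not Pipecat's API, since Pipecat has its own frame and processor classes. The fake_stt, fake_llm, and fake_tts functions are hypothetical stand-ins for real services.

```python
import asyncio
from typing import Awaitable, Callable

Transform = Callable[[object], Awaitable[object]]

async def stage(transform: Transform, inbox: asyncio.Queue, outbox: asyncio.Queue) -> None:
    """Pull frames from inbox, transform them, and push results downstream."""
    while True:
        frame = await inbox.get()
        if frame is None:  # end-of-stream sentinel: forward it and stop
            await outbox.put(None)
            return
        await outbox.put(await transform(frame))

# Hypothetical stand-ins for real STT, LLM, and TTS services.
async def fake_stt(audio: bytes) -> str:
    return audio.decode()

async def fake_llm(text: str) -> str:
    return f"You said: {text}"

async def fake_tts(text: str) -> bytes:
    return text.encode()

async def run_pipeline(inputs: list[bytes]) -> list[bytes]:
    services = [fake_stt, fake_llm, fake_tts]
    queues = [asyncio.Queue() for _ in range(len(services) + 1)]
    # Each stage is its own task, so stages overlap on successive frames.
    tasks = [asyncio.create_task(stage(svc, queues[i], queues[i + 1]))
             for i, svc in enumerate(services)]
    for item in inputs:
        await queues[0].put(item)
    await queues[0].put(None)
    outputs = []
    while (out := await queues[-1].get()) is not None:
        outputs.append(out)
    await asyncio.gather(*tasks)
    return outputs

print(asyncio.run(run_pipeline([b"hello", b"book a table"])))
# [b'You said: hello', b'You said: book a table']
```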

Keep these constraints in mind before committing to Pipecat in production:

- Runs server-side only; no native mobile execution

- Requires containerized deployment (Docker on cloud)

- Python's Global Interpreter Lock (GIL) introduces concurrency overhead at scale

Treat it as a strong prototyping tool, but plan for migration if mobile-native or edge deployment is on your roadmap.

LiveKit Agents

LiveKit Agents is an open-source agent framework (Apache-2.0 license) built on LiveKit's real-time communication infrastructure. It supports multi-party audio, room-based session management, and WebRTC transport, making it solid for use cases involving multiple speakers or human-agent handoffs.

A standout feature is Adaptive Interruption handling: it analyzes acoustic signals to identify intentional barge-ins and rejects 51% of VAD-based false positives—keeping conversations natural without misfires.

Dograh AI

Dograh AI is an open-source, self-hostable voice AI platform (BSD 2-Clause license) built for production-ready agents with sub-500ms latency. It covers the full orchestration layer with pre-integrated major AI models, a no-code/low-code workflow builder, and zero platform fees. Agents can be deployed in under 2 minutes from the GitHub repo.

Two key differentiators for regulated industries:

- Self-hosting options enabling full HIPAA, GDPR, PCI DSS, and SOC 2 compliance with complete data sovereignty

- LoopTalk—an AI-to-AI testing framework that simulates real-world customer scenarios autonomously, cutting manual testing effort before go-live

Dograh avoids double billing by offering transparent pricing without hidden STT/TTS/LLM charges layered on top—a meaningful cost advantage for teams running high call volumes.

Open-Source Model Layer (STT and TTS)

Speech-to-Text:

- Whisper large-v3 shows a 10–20% error reduction compared to large-v2. The faster-whisper reimplementation is up to 4x faster than the original openai/whisper implementation at the same accuracy.

- Mistral Voxtral Realtime (4B parameters, Apache 2.0) uses a natively streaming architecture that supports sub-200ms delays. It reports 8.72% WER on the FLEURS benchmark at a 480ms delay.
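WER figures like these are the word-level edit distance divided by the number of reference words. The minimal sketch of the metric below tokenizes on whitespace only. Published benchmarks usually normalize casing and punctuation before scoring, so numbers from this function will not match leaderboard results exactly.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / words in reference."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] = edit distance between the reference words seen so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,              # deletion
                curr[j - 1] + 1,          # insertion
                prev[j - 1] + (r != h),   # substitution (free if words match)
            )
        prev = curr
    return prev[-1] / len(ref)
```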

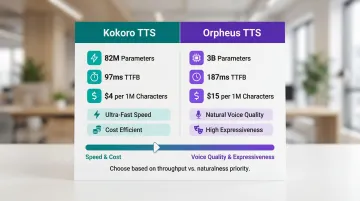

Text-to-Speech:

| Model | Parameters | TTFB | Pricing (Serverless) |
|---|---|---|---|
| Kokoro TTS | 82M | 97ms | $4 per 1M characters |
| Orpheus TTS | 3B | 187ms | $15 per 1M characters |

Kokoro wins on speed and cost; Orpheus trades slightly higher latency for richer, more expressive voice output. Choose based on whether throughput or naturalness is the priority for your use case.
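Per-character pricing is easier to compare once it is converted to cost per minute of agent speech. The sketch below uses the serverless prices from the table. The figure of 900 characters per minute (about 150 spoken words at roughly 6 characters each, including spaces) is an assumption, so replace it with a measurement from your own transcripts.

```python
# Serverless prices from the table above, in dollars per 1M characters.
PRICE_PER_MILLION_CHARS = {"kokoro": 4.00, "orpheus": 15.00}

def tts_cost_per_minute(model: str, chars_per_minute: float = 900.0) -> float:
    """Dollar cost of synthesizing one minute of agent speech.

    chars_per_minute is an assumption (~150 words/min at ~6 chars per word).
    """
    return PRICE_PER_MILLION_CHARS[model] * chars_per_minute / 1_000_000

for name in PRICE_PER_MILLION_CHARS:
    print(f"{name}: ${tts_cost_per_minute(name):.4f} per minute of agent speech")
# kokoro: $0.0036 per minute of agent speech
# orpheus: $0.0135 per minute of agent speech
```

The agent speaks for only part of each call, so the TTS cost per billed call minute is lower still.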

The Hard Technical Problems in Production Voice AI

Latency

WebRTC alone introduces approximately 250ms of round-trip latency before any backend processing begins, creating a ~500ms baseline even under ideal network conditions. The generally accepted human conversation threshold is around 700ms total—which means every component in the pipeline has a limited budget to spend.

Speculative execution reduces average response time by sending the LLM request before end-of-turn detection reaches full confidence, at the cost of occasional redundant inference calls. This is a concrete engineering trade-off teams should evaluate for their use case.
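This trade-off can be sketched as a small asyncio controller. It fires the LLM call once the turn detector's confidence crosses a lower threshold and cancels the call if the transcript changes. When end-of-turn is confirmed, it reuses the in-flight result. The 0.6 threshold and the generate_reply stub are hypothetical, and wasted_calls counts the redundant inference the approach costs.

```python
import asyncio
from typing import Optional

async def generate_reply(transcript: str) -> str:
    """Hypothetical stand-in for an LLM call that takes ~50 ms."""
    await asyncio.sleep(0.05)
    return f"reply to: {transcript}"

class SpeculativeResponder:
    """Start the LLM before end-of-turn is certain; drop the work if the user keeps talking."""

    def __init__(self, speculate_at: float = 0.6):
        self.speculate_at = speculate_at  # hypothetical end-of-turn confidence threshold
        self.pending: Optional[asyncio.Task] = None
        self.pending_text = ""
        self.wasted_calls = 0  # the cost side of the trade-off

    def _cancel_pending(self) -> None:
        if self.pending is not None:
            self.pending.cancel()
            self.pending = None
            self.wasted_calls += 1

    def on_partial(self, transcript: str, eot_prob: float) -> None:
        """Called on each streaming STT update with the turn detector's confidence."""
        if self.pending is not None and transcript != self.pending_text:
            self._cancel_pending()  # the user said more, so the speculative reply is stale
        if self.pending is None and eot_prob >= self.speculate_at:
            self.pending_text = transcript
            self.pending = asyncio.create_task(generate_reply(transcript))

    async def on_end_of_turn(self, transcript: str) -> str:
        """Called once end-of-turn is confirmed; reuses the speculative call if still valid."""
        if self.pending is not None and transcript == self.pending_text:
            task, self.pending = self.pending, None
            return await task
        self._cancel_pending()
        return await generate_reply(transcript)

async def demo() -> tuple[str, int]:
    r = SpeculativeResponder()
    r.on_partial("book a table", eot_prob=0.7)          # confident enough: speculate
    r.on_partial("book a table for two", eot_prob=0.3)  # user kept talking: cancel
    r.on_partial("book a table for two", eot_prob=0.8)  # speculate again
    reply = await r.on_end_of_turn("book a table for two")
    return reply, r.wasted_calls

print(asyncio.run(demo()))  # ('reply to: book a table for two', 1)
```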

LLM inference acceleration: Speculative decoding techniques like EAGLE-3 provide a 3.0x–6.5x speedup compared to vanilla autoregressive generation. A related approach, SSD, reports up to 5x faster decoding by running speculation and verification in parallel.
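The draft-then-verify idea at the core of speculative decoding fits in a few lines. This sketch is the basic greedy variant, not EAGLE-3 or SSD. A cheap draft model proposes k tokens, and the target model keeps the longest prefix it agrees with plus one corrected (or bonus) token. The output is therefore identical to greedy decoding with the target alone. The toy "models" are plain functions. In a real system the target verifies all k positions in one batched forward pass, which is where the speedup comes from; this sketch checks each position separately for clarity.

```python
from typing import Callable

Model = Callable[[list[int]], int]  # greedy "model": context -> most likely next token

def greedy(model: Model, prompt: list[int], max_new: int) -> list[int]:
    """Reference: plain autoregressive decoding, one model call per token."""
    out = list(prompt)
    for _ in range(max_new):
        out.append(model(out))
    return out

def speculative_decode(target: Model, draft: Model, prompt: list[int],
                       max_new: int, k: int = 4) -> list[int]:
    out = list(prompt)
    while len(out) - len(prompt) < max_new:
        # 1. The cheap draft model proposes k tokens autoregressively.
        proposal: list[int] = []
        for _ in range(k):
            proposal.append(draft(out + proposal))
        # 2. The target verifies each position (one batched pass in real systems).
        accepted: list[int] = []
        for tok in proposal:
            expected = target(out + accepted)
            accepted.append(expected)
            if tok != expected:
                break  # first disagreement: keep the target's token and stop
        else:
            accepted.append(target(out + accepted))  # all k matched: free bonus token
        out.extend(accepted)
    return out[: len(prompt) + max_new]

def target_model(ctx: list[int]) -> int:
    return (ctx[-1] + 1) % 10

def draft_model(ctx: list[int]) -> int:
    # Toy draft that guesses wrong whenever the last token is 6.
    return 0 if ctx[-1] == 6 else (ctx[-1] + 1) % 10

assert speculative_decode(target_model, draft_model, [0], 12) == greedy(target_model, [0], 12)
```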

Interruption Handling and Turn Detection

Interruption handling is harder than it appears. When a user speaks mid-response, the system must:

- Detect that speech has occurred and classify it (filler, redirect, or correction)

- Decide whether to cancel the TTS stream mid-sentence

- Retain enough state to resume or revise the response cleanly

Poor handling here is the most common reason agents feel robotic in production.
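Here is a rule-based sketch of the three steps: classify the barge-in, decide whether to stop TTS, and track what was already spoken so the agent can revise rather than restart. The cue word lists are hypothetical. So is the chars_spoken counter, which is assumed to be advanced by the TTS layer as audio plays. Production frameworks use trained semantic models rather than keyword rules.

```python
from dataclasses import dataclass
from enum import Enum

class Interruption(Enum):
    FILLER = "filler"          # backchannel: keep speaking
    REDIRECT = "redirect"      # new request: stop and handle it
    CORRECTION = "correction"  # fixes earlier information: stop and revise

# Hypothetical cue lists; production systems use a trained classifier.
BACKCHANNELS = {"uh-huh", "mm-hmm", "mhm", "yeah", "okay", "right", "sure"}
CORRECTION_OPENERS = {"no", "actually", "wait"}

def classify(utterance: str) -> Interruption:
    words = [w.strip(".,!?") for w in utterance.lower().split()]
    words = [w for w in words if w]
    if not words or all(w in BACKCHANNELS for w in words):
        return Interruption.FILLER
    if words[0] in CORRECTION_OPENERS or words[:2] == ["i", "meant"]:
        return Interruption.CORRECTION
    return Interruption.REDIRECT

@dataclass
class TurnState:
    full_response: str
    chars_spoken: int = 0  # assumed to be advanced by the TTS layer as audio plays

def on_barge_in(state: TurnState, utterance: str) -> dict:
    """Decide whether to cut TTS, keeping what is needed to revise the reply."""
    kind = classify(utterance)
    if kind is Interruption.FILLER:
        return {"kind": kind, "cancel_tts": False}
    return {
        "kind": kind,
        "cancel_tts": True,
        "already_said": state.full_response[: state.chars_spoken],  # context for the LLM
        "unsaid": state.full_response[state.chars_spoken:],         # discarded or revised
    }
```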

Half-duplex vs. full-duplex systems: Half-duplex allows one party to speak at a time; full-duplex requires more sophisticated overlap detection and parallel state management but delivers noticeably more natural conversation flow.

Modern frameworks solve this with semantic models. Pipecat's Smart Turn Detection uses an open-source ML model to recognize natural conversational cues and intonation patterns, moving beyond VAD's acoustic triggers.

Hallucinations and Guardrails

Hallucinations in voice AI aren't just factual errors—they include voice-specific failures: mispronouncing brand names, saying account numbers incorrectly, or producing inappropriate tone. In regulated industries (healthcare, finance, legal), these failures carry real liability and require prompt-level guardrails, RAG grounding, and post-generation validation layers.
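One cheap post-generation validation layer targets the account-number failure. Before the response reaches TTS, it checks every long digit run against the values retrieved for this caller. It then spells verified numbers out digit by digit, so the TTS engine does not read "4417" as a quantity. The six-digit cutoff and the function names are hypothetical. Dates and phone numbers would also trip this check and need their own allowlist.

```python
import re

# A run of digits that may be grouped with spaces or hyphens, e.g. "4417 1234".
DIGIT_RUN = re.compile(r"\b\d[\d -]*\d\b")
NAMES = "zero one two three four five six seven eight nine".split()

def unverified_numbers(response: str, grounded: set[str], min_digits: int = 6) -> list[str]:
    """Return long digit strings in the LLM response that are not in the grounded record."""
    flagged = []
    for match in DIGIT_RUN.finditer(response):
        digits = re.sub(r"\D", "", match.group())
        if len(digits) >= min_digits and digits not in grounded:
            flagged.append(digits)
    return flagged

def speakable_digits(digits: str) -> str:
    """Spell digits individually so TTS reads '4417' as 'four four one seven'."""
    return " ".join(NAMES[int(d)] for d in digits)
```

A non-empty result from unverified_numbers should block the response, or route it back to the LLM with the grounded value, rather than letting it reach the caller.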

The SHALLOW benchmark reveals that in noisy datasets like CHiME6, conversational overlap and acoustic degradation drive uniformly high phonetic hallucination scores across all tested models — a persistent challenge no current system fully solves.

Background Noise and Speaker Diarization

Real calls happen in noisy environments with multiple background speakers, hold music, and IVR tones. Robust VAD and speaker diarization are non-negotiable for accurate transcription. Teams should look for STT models trained on diverse acoustic conditions rather than clean studio audio.

The pyannote.audio toolkit (community-1 pipeline) brings significant improvements in speaker counting and assignment, processing an hour of audio in 37 seconds on an H100 GPU.
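Diarization output only says who spoke when, so it still has to be merged with the transcript. One common approach assigns each word to the speaker whose turn overlaps it most. This sketch assumes that the STT engine emits word-level timestamps (faster-whisper can, via word_timestamps=True). It also assumes the diarization turns have been converted into simple (speaker, start, end) records. The record types are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Word:
    text: str
    start: float  # seconds
    end: float

@dataclass
class SpeakerTurn:
    speaker: str
    start: float
    end: float

def overlap(a_start: float, a_end: float, b_start: float, b_end: float) -> float:
    """Length in seconds of the intersection of two time intervals (0 if disjoint)."""
    return max(0.0, min(a_end, b_end) - max(a_start, b_start))

def assign_speakers(words: list[Word], turns: list[SpeakerTurn],
                    default: str = "UNKNOWN") -> list[tuple[str, str]]:
    """Label each word with the diarization speaker whose turn overlaps it most."""
    labeled = []
    for w in words:
        best, best_overlap = default, 0.0
        for t in turns:
            o = overlap(w.start, w.end, t.start, t.end)
            if o > best_overlap:
                best, best_overlap = t.speaker, o
        labeled.append((best, w.text))
    return labeled
```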

Function Calling and Workflow Orchestration

Solving the acoustic and signal-layer problems above is necessary but not sufficient. Production voice agents also need to do more than talk—they must:

- Call APIs mid-conversation (CRM lookups, calendar writes, transfer logic)

- Manage multi-step branching workflows

- Decide when to escalate to a human

The orchestration layer that handles this is often the most complex part of the build and the area where open-source platforms provide the most engineering leverage. Dograh AI's drag-and-drop workflow builder supports webhooks for internal API workflows, multi-agent routing to reduce hallucination, and decision tree logic—all configurable without writing orchestration code from scratch.
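The API-calling part of orchestration comes down to a registry of callable tools plus a dispatcher that runs the LLM's structured tool call. The dispatcher also decides when to give up. This minimal sketch assumes a simplified {"name": ..., "arguments": {...}} call shape; real LLM providers each use their own format. The check_availability and transfer_to_human tools are hypothetical. Errors go back to the LLM so it can retry, and repeated consecutive failures escalate to a human.

```python
import json
from typing import Any, Callable

TOOLS: dict[str, Callable[..., Any]] = {}

def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Decorator that registers a function the LLM is allowed to call by name."""
    TOOLS[fn.__name__] = fn
    return fn

@tool
def check_availability(date: str) -> list[str]:
    # Hypothetical calendar lookup; a real tool would query the scheduling API.
    return ["10:00", "14:30"] if date == "2026-03-02" else []

@tool
def transfer_to_human(reason: str) -> str:
    # Hypothetical: a real tool would trigger a SIP transfer or a queue handoff.
    return f"transferring to an agent ({reason})"

class Dispatcher:
    """Run LLM tool calls; escalate to a human after repeated consecutive failures."""

    def __init__(self, max_failures: int = 2):
        self.max_failures = max_failures
        self.failures = 0

    def run(self, tool_call_json: str) -> dict:
        try:
            call = json.loads(tool_call_json)
            result = TOOLS[call["name"]](**call.get("arguments", {}))
        except (json.JSONDecodeError, KeyError, TypeError) as exc:
            self.failures += 1
            if self.failures >= self.max_failures:
                return {"escalate": True, "result": transfer_to_human(repr(exc))}
            return {"error": repr(exc)}  # returned to the LLM so it can correct itself
        self.failures = 0
        return {"result": result}
```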

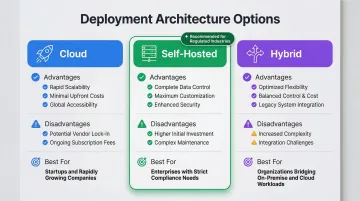

Deployment Architecture: Cloud, Self-Hosted, or Hybrid

Three deployment options with concrete trade-offs:

| Deployment Model | Advantages | Disadvantages | Best For |
|---|---|---|---|
| Cloud | Fast scaling, managed infrastructure | Shared-responsibility compliance, potential data residency issues | Startups, fast iteration cycles |
| Self-Hosted | Full data sovereignty, strict regulatory compliance (HIPAA, GDPR) | Internal infra investment, uptime responsibility | Healthcare, legal, finance, defense |
| Hybrid | Burst capacity in cloud, sensitive data on-prem | Complex architecture, requires coordination | Enterprises with mixed workloads |

Compliance Layer for Regulated Industries

For healthcare, legal, and financial services teams, self-hosting is the only architecture that provides a complete audit trail, prevents PHI/PII from transiting third-party infrastructure, and satisfies regulator requirements.

Three standards shape what that architecture must enforce:

- WebRTC/SRTP: RFC 8827 requires all WebRTC media channels to be secured via DTLS-SRTP — no exceptions.

- HIPAA: Covered entities must apply reasonable safeguards to protect PHI during audio-only telehealth sessions.

- PCI DSS: Sensitive authentication data must not be stored after authorization, even when encrypted.

Dograh AI's self-hosted deployment is designed to support all three, giving teams full control over data flow, hosting region, and audit logging without routing sensitive data through third-party infrastructure.
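The DTLS-SRTP requirement can be backed by a signaling-side guard that rejects session descriptions offering unencrypted media. This sketch inspects SDP text only. It checks that every m= line uses a DTLS-based transport profile (WebRTC audio normally uses UDP/TLS/RTP/SAVPF) and that an a=fingerprint attribute is present. The allowed-profile set is an assumption and not exhaustive. The actual encryption is still performed by the WebRTC stack.

```python
# DTLS-based transport profiles; an assumption, not an exhaustive list.
DTLS_PROFILES = {
    "UDP/TLS/RTP/SAVPF", "UDP/TLS/RTP/SAVP",  # DTLS-SRTP media
    "TCP/DTLS/RTP/SAVPF",                     # DTLS-SRTP over ICE-TCP
    "UDP/DTLS/SCTP", "TCP/DTLS/SCTP",         # WebRTC data channels
}

def requires_dtls_srtp(sdp: str) -> bool:
    """True only if every m= section uses a DTLS profile and has a DTLS fingerprint."""
    session_fingerprint = False
    sections: list[list] = []  # [profile, has_fingerprint] per media section
    for line in (ln.strip() for ln in sdp.splitlines()):
        if line.startswith("m="):
            fields = line.split()  # m=<media> <port> <proto> <fmt> ...
            sections.append([fields[2] if len(fields) > 2 else "", False])
        elif line.startswith("a=fingerprint:"):
            if sections:
                sections[-1][1] = True      # media-level fingerprint
            else:
                session_fingerprint = True  # session-level, applies to all sections
    if not sections:
        return False
    return all(profile in DTLS_PROFILES and (has_fp or session_fingerprint)
               for profile, has_fp in sections)
```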

Infrastructure Sizing for Self-Hosting

Minimal viable deployment requires dedicated GPU capacity for concurrent streams:

| GPU | ASR Streams | LLM Concurrent Requests | TTS Streams |
|---|---|---|---|
| L4 | 50 | 20–30 | 100 |
| A100 | 100 | 75–100 | 250 |
| H100 | 200+ | 150–200 | 400+ |

Production speech-to-text typically runs on A100s, since ASR workloads benefit more from memory bandwidth than raw compute. The LLM layer is where most teams over-provision. Benchmarking with speculative decoding can surface 3–6x efficiency gains before any hardware upgrades are needed.
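A back-of-the-envelope GPU count follows directly from the table. The sketch assumes each active call holds one ASR, one LLM, and one TTS slot for its whole duration, and that each component runs on dedicated GPUs. Both assumptions over-provision, since the LLM and TTS sit idle while the caller is talking. It uses the lower bound of each LLM range and reads "200+" and "400+" as 200 and 400.

```python
import math

# Approximate concurrent capacity per GPU from the sizing table above
# (lower bound of each LLM range; "200+" and "400+" read as 200 and 400).
CAPACITY = {
    "L4":   {"asr": 50,  "llm": 20,  "tts": 100},
    "A100": {"asr": 100, "llm": 75,  "tts": 250},
    "H100": {"asr": 200, "llm": 150, "tts": 400},
}

def gpus_needed(concurrent_calls: int, gpu: str) -> dict[str, int]:
    """GPUs per component, assuming dedicated cards and one slot per call per component."""
    return {stage: math.ceil(concurrent_calls / cap)
            for stage, cap in CAPACITY[gpu].items()}

print(gpus_needed(300, "A100"))  # {'asr': 3, 'llm': 4, 'tts': 2}
```

The LLM line dominates. Any efficiency gain there, such as the speculative decoding speedups above, reduces the total fastest.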

Reducing Overhead Without Cutting Corners

"Low overhead" in practice for voice AI means eliminating redundant billing layers and reducing time-to-deploy without sacrificing reliability. The overhead worth cutting includes:

- Developer hours spent on pipeline assembly

- Model integration maintenance

- Testing effort

- Hidden per-minute platform fees compounding at scale
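The compounding effect of per-minute fees is simple multiplication. The introduction gives a $0.05 advertised base rate and an effective $0.15 to $0.30 per minute once provider fees are added. Applied to a hypothetical volume of 100,000 minutes per month:

```python
def monthly_cost(minutes_per_month: float, platform_per_min: float,
                 provider_per_min: float = 0.0) -> float:
    """Monthly spend: platform fee plus STT/LLM/TTS provider fees billed separately."""
    return minutes_per_month * (platform_per_min + provider_per_min)

MINUTES = 100_000  # hypothetical monthly call volume

advertised = monthly_cost(MINUTES, platform_per_min=0.05)
effective_low = monthly_cost(MINUTES, 0.05, provider_per_min=0.10)   # $0.15/min all-in
effective_high = monthly_cost(MINUTES, 0.05, provider_per_min=0.25)  # $0.30/min all-in
print(f"advertised ${advertised:,.0f}, effective ${effective_low:,.0f} to ${effective_high:,.0f}")
# advertised $5,000, effective $15,000 to $30,000
```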

The build vs. buy spectrum:

- Fully DIY (assembling Whisper + open LLM + Kokoro manually) offers maximum control but requires deep infrastructure expertise

- A pre-integrated open-source platform like Dograh AI trades some configuration flexibility for a production-ready path measured in minutes, not weeks: agents deployable in 2 minutes, a no-code workflow builder, and pre-integrated model options

Pipeline assembly is only part of the overhead equation — what you test before launch matters just as much.

Testing Infrastructure as an Overhead Factor

Manual QA of voice agents is slow and misses edge cases. Dograh's LoopTalk simulates real caller scenarios through AI-to-AI testing, catching conversation failures before launch that manual scripts would never surface.
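AI-to-AI testing can be sketched as a loop in which a simulated caller and the agent under test take turns, followed by assertions on the transcript. This is not LoopTalk's API. The Scenario fields and toy participants are hypothetical. In practice the caller would be an LLM prompted with a persona and goal, and the agent would run through the real pipeline.

```python
from dataclasses import dataclass
from typing import Callable

Speaker = Callable[[list[str]], str]  # transcript so far -> next utterance

@dataclass
class Scenario:
    name: str
    caller: Speaker      # simulated customer; an LLM with a persona in practice
    must_say: list[str]  # phrases the agent is expected to produce
    max_turns: int = 4

def run_scenario(agent: Speaker, scenario: Scenario) -> dict:
    """Let the simulated caller and the agent alternate, then check the agent's output."""
    transcript: list[str] = []
    for _ in range(scenario.max_turns):
        transcript.append("caller: " + scenario.caller(transcript))
        transcript.append("agent: " + agent(transcript))
    agent_text = " ".join(t for t in transcript if t.startswith("agent: ")).lower()
    missing = [p for p in scenario.must_say if p.lower() not in agent_text]
    return {"scenario": scenario.name, "passed": not missing, "missing": missing}

# Toy participants standing in for a real LLM-driven caller and agent.
def booking_agent(transcript: list[str]) -> str:
    return "Booked for Tuesday." if "tuesday" in transcript[-1].lower() else "Which day works for you?"

def tuesday_caller(transcript: list[str]) -> str:
    return "Tuesday, please." if transcript else "Hi, I need an appointment."

print(run_scenario(booking_agent, Scenario("happy path", tuesday_caller, ["booked"])))
# {'scenario': 'happy path', 'passed': True, 'missing': []}
```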

Frequently Asked Questions

What is the minimum infrastructure needed to self-host a production voice AI agent?

A minimal deployment requires a single server with GPU capacity (L4 or A100), sufficient memory for concurrent model inference, and WebRTC/SIP networking. Dograh AI's open-source platform reduces this footprint further through shared model inference across concurrent sessions, keeping hardware requirements lean even at scale.

How does self-hosted voice AI compare to cloud platforms for latency?

Self-hosted deployments can match or beat cloud latency when co-located with the application layer, since they eliminate cross-provider network hops. The trade-off is infrastructure management responsibility—teams own uptime, scaling, and debugging.

Can open-source voice AI meet HIPAA and GDPR compliance requirements?

Yes. Self-hosted open-source deployments can fully satisfy HIPAA, GDPR, and PCI DSS requirements because all data processing stays within the organization's own infrastructure with no third-party data transit. This gives complete audit trails and data sovereignty.

What are the hidden costs in proprietary voice AI platforms that open-source avoids?

Per-minute platform fees, double billing on STT/TTS/LLM usage (BYOK traps), vendor lock-in switching costs, and the inability to audit or control the underlying model stack. Open-source eliminates platform fees entirely and puts you in direct control of provider costs.

How do I handle natural interruptions and overlapping speech in a voice AI agent?

Combine VAD tuning, semantic turn detection, and stateful conversation management to decide whether to cancel, revise, or resume a response mid-interruption. Full-duplex systems with adaptive interruption handling (such as LiveKit's approach) reduce perceived lag and make conversations feel more human.

Which open-source STT and TTS models are best for production deployments in 2026?

For STT, faster-whisper runs up to 4x faster than the original openai/whisper implementation. Voxtral Realtime supports sub-200ms streaming delays and reports 8.72% WER on FLEURS at a 480ms delay. For TTS, choose Kokoro (97ms TTFB) for speed or Orpheus (187ms TTFB) when output quality is the priority.