Introduction

AI voice generation has evolved from a research novelty to a production necessity in 2026. Regulated industries like healthcare, legal, and finance now demand open-source alternatives that deliver enterprise-grade voice quality without sacrificing data control. 63% of organizations are now more likely to adopt sovereign cloud services due to geopolitical and regulatory pressures, forcing teams to rethink their reliance on proprietary TTS platforms.

Proprietary platforms like ElevenLabs and OpenAI TTS create three critical problems:

- Vendor lock-in that limits infrastructure flexibility

- Unpredictable per-character billing that scales dangerously with usage (Google Chirp 3: HD costs $30 per 1 million characters, while Amazon Polly's Long-Form voices hit $100 per 1 million characters)

- Inability to self-host for HIPAA, GDPR, and SOC 2 compliance
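
To make the billing risk concrete, here is a rough cost sketch using the two per-character rates quoted above. The workload (10,000 calls per month at 3,000 synthesized characters each) is a hypothetical assumption chosen for illustration, not a figure from any provider.

```python
# Per-million-character rates quoted in the list above (USD).
RATES_PER_MILLION_CHARS = {
    "Google Chirp 3: HD": 30.0,
    "Amazon Polly Long-Form": 100.0,
}


def monthly_cost(chars_per_month: int, rate_per_million: float) -> float:
    """Return the monthly bill in USD for a given character volume."""
    return chars_per_month / 1_000_000 * rate_per_million


# Hypothetical workload: 10,000 calls per month, 3,000 characters each.
chars = 10_000 * 3_000  # 30 million characters

for name, rate in RATES_PER_MILLION_CHARS.items():
    print(f"{name}: ${monthly_cost(chars, rate):,.2f} per month")
# Google Chirp 3: HD: $900.00 per month
# Amazon Polly Long-Form: $3,000.00 per month
```

Because the bill is linear in character volume, doubling call volume doubles the cost, which is the scaling behavior a self-hosted model avoids.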

Open-source models have reached the point where self-hosted deployments are genuinely competitive with commercial APIs — on naturalness, latency, and multilingual range. This guide covers the top open-source AI voice generators in 2026, evaluated on voice quality, licensing terms, self-hosting readiness, and compliance fit.

TLDR

- Open-source TTS models in 2026 rival proprietary tools in realism, latency, and multilingual support—without platform fees

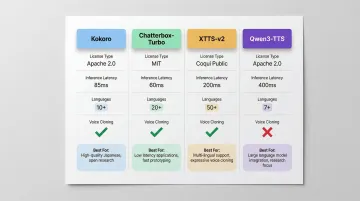

- Leading models include Kokoro (Apache 2.0, lightweight), Chatterbox-Turbo (MIT, emotion control), XTTS-v2 (17 languages, voice cloning), and Qwen3-TTS (sub-100ms latency)

- For production voice agents, platforms like Dograh AI provide open-source deployment with built-in HIPAA, GDPR, and SOC 2 compliance

- Key selection criteria: license type, self-hosting support, latency, language coverage, voice cloning capability

- The right model depends on your deployment environment, use case, and compliance requirements

What Are Open-Source AI Voice Generators?

Open-source AI voice generators are models and platforms that synthesize realistic human speech from text, typically released under permissive licenses like Apache 2.0, MIT, or BSD. Unlike proprietary APIs such as ElevenLabs or OpenAI TTS, which charge per character and restrict data handling, they let teams inspect, modify, and self-host the code entirely.

Two Archetypes Dominate the Space

Standalone TTS models (such as Kokoro, XTTS-v2) output audio from text. These are modular components you integrate into existing systems.

Full voice AI platforms (such as Dograh AI) orchestrate TTS, STT, LLMs, and telephony into deployable voice agents. They deliver end-to-end conversational experiences. A standalone TTS model won't handle phone calls or multi-turn conversations without significant engineering work—full platforms cover that gap out of the box.
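
The difference between the two archetypes can be sketched as a single conversational turn. This is a minimal illustration, not how Dograh AI or any other platform is implemented: the `VoiceAgent` class and the three stub stages are hypothetical names, and telephony, streaming, and interruption handling are left out entirely.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class VoiceAgent:
    """One agent built from three pluggable stages (all hypothetical stand-ins)."""
    transcribe: Callable[[bytes], str]        # STT: caller audio -> text
    respond: Callable[[List[str], str], str]  # LLM: history + user text -> reply
    synthesize: Callable[[str], bytes]        # TTS: reply text -> audio
    history: List[str] = field(default_factory=list)

    def handle(self, caller_audio: bytes) -> bytes:
        """Run one turn: audio in, audio out, with history kept between turns."""
        user_text = self.transcribe(caller_audio)
        reply_text = self.respond(self.history, user_text)
        self.history += [user_text, reply_text]
        return self.synthesize(reply_text)


# Stub stages so the sketch runs without any model or network access.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_llm(history: List[str], user_text: str) -> str:
    return f"You said: {user_text}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


agent = VoiceAgent(fake_stt, fake_llm, fake_tts)
print(agent.handle(b"hello"))  # b'You said: hello'
```

A standalone TTS model fills only the `synthesize` slot. Everything else in `handle`, plus the telephony around it, is the engineering work a full platform takes on.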

Market Momentum Behind Open-Source TTS

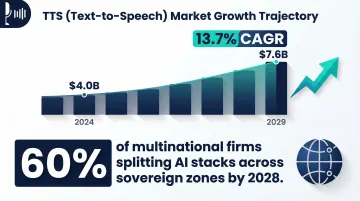

The numbers tell a clear story about where the market is headed:

- The global TTS market was valued at $4.0 billion in 2024 and is projected to reach $7.6 billion by 2029 — a 13.7% CAGR

- 60% of multinational firms are expected to split AI stacks across sovereign zones by 2028 to manage regulatory fragmentation

- That sovereignty pressure directly favors open-source deployments, where teams control where data lives and how models run
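
As a quick sanity check on the market figures above, the compound annual growth rate implied by the two valuations can be recomputed directly. The function name `cagr` is just an illustrative helper.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1 / years) - 1


# $4.0 billion in 2024 growing to $7.6 billion by 2029.
rate = cagr(4.0, 7.6, 2029 - 2024)
print(f"Implied CAGR: {rate:.1%}")  # Implied CAGR: 13.7%
```

The result matches the 13.7% figure quoted in the projection.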

Best Open-Source AI Voice Generators in 2026

The tools below cover a range of use cases: standalone TTS synthesis, real-time voice cloning, multilingual generation, and full voice agent deployment. Each was evaluated on voice realism, latency, language support, license flexibility, active maintenance, and fit for production environments — not just GitHub star counts.

Kokoro

Kokoro is an 82-million-parameter open-source TTS model released under the Apache 2.0 license. It was downloaded 9.8 million times last month on Hugging Face, and its small footprint makes it well suited to cost-sensitive or edge deployments.

Key Differentiators:

- Delivers speech quality rivaling much larger models despite only 82M parameters

- Runs on CPU-only setups — decoder-only architecture (StyleTTS2 + ISTFTNet) skips encoders and diffusion, keeping VRAM requirements minimal

- Apache 2.0 license — no commercial use restrictions

| Feature | Details |
|---|---|
| License & Deployment | Apache 2.0: fully open for commercial and non-commercial use; self-hostable on modest hardware, including CPU-only setups |
| Voice Cloning Support | Limited native voice cloning; best suited for fixed-voice synthesis rather than zero-shot voice adaptation |
| Language Support | Multiple languages (8 languages, 54 voices in v1.0); primarily English-focused |

Chatterbox-Turbo (Resemble AI)

Chatterbox-Turbo is a 350M-parameter open-source TTS model by Resemble AI, released under the MIT license. It uses a distilled one-step decoder — reducing generation from ten diffusion steps to a single step — making it one of the fastest and most hardware-efficient open-source TTS models in production use.

Key Differentiators:

- First open-source TTS model with emotion exaggeration control — enables dynamic, expressive speech output

- Claimed 75ms inference latency — fast enough for real-time conversational applications

- Native paralinguistic tags: supports [laugh], [cough], and other non-verbal sounds

- Adapts to a speaker's voice from short reference audio clips

- All audio output includes an imperceptible PerTh watermark by default — worth flagging for privacy-sensitive deployments

| Feature | Details |
|---|---|
| License & Deployment | MIT License: commercial use permitted; self-hostable with relatively low VRAM; output audio is watermarked via PerTh |
| Voice Cloning Support | Yes: speaker adaptation via short reference clip; supports cross-voice synthesis |
| Language Support | English only for Turbo variant; Chatterbox-Multilingual available separately for 23+ languages |

XTTS-v2 (Coqui)

XTTS-v2 is Coqui's most advanced TTS model and one of the most downloaded TTS models on Hugging Face, with 6.38 million downloads last month. Coqui shut down in early 2024, but the open-source community continues to maintain the model.

Key Differentiators:

- Replicates voice timbre, emotional tone, and speaking style from a 6-second audio clip across 17 languages

- Achieves under 200ms streaming latency on consumer-grade GPUs via pure PyTorch implementation

- License carries a hard constraint: the Coqui Public Model License (CPML) restricts commercial use by default, and since Coqui no longer exists, there is no path to negotiate a commercial license

| Feature | Details |
|---|---|
| License & Deployment | Coqui Public Model License: non-commercial use only; no commercial license can be negotiated now that Coqui has shut down; self-hostable |
| Voice Cloning Support | Yes: cross-lingual voice cloning from 6-second audio samples; replicates emotional tone and style |
| Language Support | 17 languages including English, Spanish, French, German, Portuguese, and more |

Qwen3-TTS (Alibaba Cloud)

Qwen3-TTS is an open-source TTS system from Alibaba Cloud's Qwen team, released in early 2026. It gained rapid community attention for achieving sub-100ms voice cloning latency (97ms end-to-end) from just 3 seconds of reference audio.

Key Differentiators:

- 97ms end-to-end latency via Dual-Track hybrid streaming — among the fastest zero-shot cloners available

- Clones a voice from a 3-second reference clip with no fine-tuning required

- Covers 10 languages with natural-sounding output across multilingual contexts

- Apache 2.0 license — fully open for commercial use

| Feature | Details |
|---|---|
| License & Deployment | Apache 2.0: fully open for commercial and non-commercial use; self-hostable |
| Voice Cloning Support | Yes: zero-shot voice cloning from 3-second audio reference clips with no retraining required |
| Language Support | 10 languages; exact language list available in official Qwen3-TTS documentation |

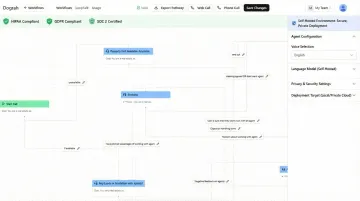

Dograh AI

The four tools above are TTS models — they synthesize speech. Dograh AI operates at a different layer entirely. It's an open-source voice agent platform (BSD 2-Clause license) built by ex-CTOs and YC alumni that combines STT, TTS, LLM orchestration, and telephony into a single self-hostable stack for production deployments.

Key Differentiators:

- Self-hosting keeps call data inside your own infrastructure, supporting HIPAA, GDPR, SOC 2, and PCI DSS compliance with no third-party data exposure

- Sub-500ms end-to-end latency supports real-time, natural-sounding conversations

- No platform fees or STT/TTS/LLM markup — you bring your own API keys and pay providers directly

- No-code/low-code workflow builder gets a voice agent live in under 2 minutes

- Retains 45+ minutes of conversational context across multi-turn dialogues

- Handles inbound calls, transfers, multi-agent workflows, and CRM integrations — not just audio output

| Feature | Details |
|---|---|
| License & Deployment | BSD 2-Clause (fully open source); cloud or self-hosted; compliant with HIPAA, GDPR, SOC 2, PCI DSS out of the box |
| Voice Cloning Support | Integrates with pre-configured TTS providers; voice customization and agent persona configuration supported |
| Language Support | Multi-language support via integrated LLM and TTS models; supports 30+ languages by default with expansion via custom STT/TTS provider selection |

How to Choose the Right Open-Source AI Voice Generator

Start with License and Deployment Requirements

Clarify whether your use case is commercial or research-only:

- Commercial builds: Kokoro (Apache 2.0), Chatterbox-Turbo (MIT), and Qwen3-TTS (Apache 2.0) are safe choices

- Research-only: XTTS-v2 (Coqui PML) is restricted to non-commercial use

- Regulated industries: Only self-hostable options with compliance certifications are viable. For HIPAA or GDPR compliance, platforms like Dograh AI offer built-in compliance frameworks.
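
The license-first filter above can be expressed as a small shortlist helper. This is only a sketch: the values are copied from the comparison tables in this guide, `Model` and `shortlist` are illustrative names, and Kokoro's "limited native voice cloning" is simplified to `False` as an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    license_name: str
    commercial_use: bool
    languages: int
    voice_cloning: bool


# Values taken from the comparison tables in this guide.
MODELS = [
    Model("Kokoro", "Apache 2.0", True, 8, False),  # cloning is "limited"; treated as False
    Model("Chatterbox-Turbo", "MIT", True, 1, True),
    Model("XTTS-v2", "Coqui Public Model License", False, 17, True),
    Model("Qwen3-TTS", "Apache 2.0", True, 10, True),
]


def shortlist(commercial: bool, min_languages: int = 1, need_cloning: bool = False) -> list:
    """Return model names that satisfy the license, language, and cloning needs."""
    return [
        m.name
        for m in MODELS
        if (m.commercial_use or not commercial)
        and m.languages >= min_languages
        and (m.voice_cloning or not need_cloning)
    ]


print(shortlist(commercial=True))
# ['Kokoro', 'Chatterbox-Turbo', 'Qwen3-TTS']
print(shortlist(commercial=True, min_languages=2, need_cloning=True))
# ['Qwen3-TTS']
```

Filtering on license before anything else keeps a model that cannot ship commercially from winning a quality comparison it was never eligible for.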

Match the Tool Type to Your Actual Need

| Use Case | Best Fit | Why |
|---|---|---|
| Static audio files or narration | Standalone TTS model (Kokoro, Qwen3-TTS) | Simple output pipeline, no real-time requirements |
| Real-time, interactive voice agents | Full platform (Dograh AI) | TTS alone leaves you building STT, LLM orchestration, telephony, and conversation management yourself |

Picking a bare TTS model for an agent deployment is a structural mismatch. The gap between generating audio and running a production voice agent is significant.

Evaluate Latency and Voice Quality Against Hardware Constraints

Real-time production applications need sub-200ms first-audio latency. Test models on your actual deployment hardware, not just cloud benchmarks, and check each of the following:

- Voice cloning requirements: How long a reference clip is needed? Qwen3-TTS requires 3 seconds, XTTS-v2 requires 6 seconds.

- Multilingual support: Does the model cover your user base's languages?

- Hardware testing: Run benchmarks on your target infrastructure to measure P95 and P99 tail latencies under realistic concurrent load
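
A tail-latency check along those lines might look like the sketch below. It is deliberately model-agnostic: `fake_synthesize` is a placeholder that only sleeps, and you would swap in a call to whichever TTS engine you are evaluating. It also times the full synthesis call; for a streaming model you would instead stop the timer when the first audio chunk arrives.

```python
import math
import time
from concurrent.futures import ThreadPoolExecutor


def percentile(samples, pct):
    """Nearest-rank percentile: smallest sample with at least pct% at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def timed_call(synthesize, text):
    """Return the wall-clock duration of one synthesis call in milliseconds."""
    start = time.perf_counter()
    synthesize(text)
    return (time.perf_counter() - start) * 1000.0


def benchmark(synthesize, texts, concurrency=8):
    """Run all requests through a thread pool and summarize the latencies."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = list(pool.map(lambda t: timed_call(synthesize, t), texts))
    return {p: percentile(latencies, p) for p in (50, 95, 99)}


def fake_synthesize(text):
    """Placeholder for a real TTS call: sleeps about 1 ms per character."""
    time.sleep(0.001 * len(text))


results = benchmark(fake_synthesize, ["Hello, how can I help you today?"] * 40)
for p, ms in results.items():
    print(f"P{p}: {ms:.1f} ms")
```

Use text that resembles real traffic and set `concurrency` to your expected peak. The gap between P50 and P99 is often more informative than the average.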

For comparative quality benchmarks, the TTS Arena leaderboard on Hugging Face is a practical place to start before committing to a model.

Conclusion

Open-source voice AI in 2026 is production-ready. Kokoro and Chatterbox-Turbo cover lightweight and expressive TTS needs, XTTS-v2 and Qwen3-TTS handle multilingual voice cloning, and platforms like Dograh AI bridge the gap between raw voice synthesis and enterprise-grade voice agents.

Pick based on what actually matters for your deployment: compliance posture, hardware budget, latency tolerance, and whether you need voice output or a full voice agent. Leaderboard rankings won't tell you that. Run your own tests on representative text and hardware before committing.

If the next step is moving from a TTS model to a production voice agent—without platform fees, data exposure, or vendor lock-in—explore Dograh AI on GitHub or join the Slack community to connect directly with the founding team.

Frequently Asked Questions

What is the most realistic AI voice generator?

Fish Audio S2 Pro ranked highest on the EmergentTTS-Eval and Audio Turing Test benchmarks, with an 81.88% win rate and a 0.515 posterior mean, respectively. That said, "realism" is subjective. Community leaderboards like TTS Arena on Hugging Face offer useful side-by-side comparisons if you want to evaluate for your specific use case.

What are the best open source text-to-speech models?

The top open-source TTS models vary by use case:

- Kokoro — Apache 2.0 licensed, optimized for speed and efficiency

- Chatterbox-Turbo — MIT licensed, built-in emotion control

- XTTS-v2 — strong multilingual support with voice cloning

- Qwen3-TTS — fast cloning with low latency

License type, language coverage, and whether you're deploying for research or production all affect which fits best.

What is the best open source AI voice assistant?

For a complete voice assistant, you need more than a TTS model — you need a platform that handles conversation flow, STT, and orchestration. Dograh AI is built specifically for this, offering deployable voice agents out of the box. Standalone TTS models like Kokoro or XTTS-v2 require additional engineering to function as full assistants.

Which website is best for AI voice generation?

Hugging Face is the go-to hub for discovering and downloading open-source TTS models, with a live TTS Arena leaderboard for benchmarking. If you need no-code voice agent deployment rather than raw models, Dograh AI provides a browser-based interface with self-hosting support.

Which AI voice is most used?

XTTS-v2 (Coqui) is among the most downloaded open-source TTS models on Hugging Face, with 6.38 million downloads last month, and Kokoro logged 9.8 million. MeloTTS-English also ranks near the top. Proprietary voices from OpenAI reach a wider consumer audience, but they are closed-source.