Introduction: What Is Self-Hosted Voice AI and Why Does It Matter?

Teams building voice AI face a critical choice: send audio data to a third-party cloud or run the entire stack on infrastructure they control. For organizations handling sensitive conversations (healthcare consultations, legal intakes, financial transactions), this decision directly impacts compliance risk and operational costs.

Self-hosted voice AI means deploying the complete voice pipeline—speech-to-text (STT), a large language model (LLM), and text-to-speech (TTS)—on servers you own or control. Unlike managed cloud platforms that route every spoken word through external APIs, self-hosting keeps audio recordings, transcripts, and model outputs inside your network perimeter.

The business case for self-hosting has changed sharply. Average US data breach costs reached $10.22 million in 2025, with "Shadow AI" incidents adding an extra $670,000 per breach. Cloud voice platforms charge $0.18–$0.33 per minute when you factor in STT, TTS, LLM, and telephony fees—costs that compound rapidly at scale.

This guide covers:

- Why self-hosting is accelerating in 2026

- The components that make up a production voice stack

- How cloud and self-hosted solutions compare on cost and compliance

- Which platforms lead the market

- A practical setup framework for deploying your first agent

TLDR

- Self-hosted voice AI puts STT, LLM, and TTS entirely under your control—no data leaves your environment

- It is often the most direct path to HIPAA, GDPR, and PCI DSS compliance in regulated industries, because no third-party processor handles the audio

- Cloud voice AI starts faster but carries unpredictable per-minute costs and vendor lock-in

- Four components make it work: a Whisper-class speech-to-text model, open-source LLM, TTS engine, and voice pipeline orchestration

- Dograh AI ships a BSD 2-Clause open-source framework with pre-integrated models—agents deploy in under 2 minutes

Why Self-Hosted Voice AI Is Gaining Momentum

Regulations like HIPAA, GDPR, and PCI DSS impose strict controls over where patient, financial, and personal data is processed and stored. When every spoken word flows through a third-party cloud API, meeting those requirements becomes legally complex. Key compliance obligations include:

- HIPAA: Covered entities must obtain written Business Associate Agreements (BAAs) from any party handling Protected Health Information on their behalf (45 CFR 164.502(e); "business associate" is defined in 45 CFR 160.103)

- GDPR Article 28: Controllers may only use processors that provide "sufficient guarantees" to implement appropriate technical and organisational measures

Self-hosting removes third-party voice API processors from the data path by keeping audio and transcripts within infrastructure you control.

Beyond regulatory mandates, enterprises in healthcare, defense, legal, and finance increasingly require that audio, transcripts, and model outputs never leave their network perimeter. This data sovereignty pressure is reshaping infrastructure decisions: 61% of Western European CIOs say geopolitics will drive them toward local or regional cloud providers.

Real-world deployments reflect this shift. The UK's NHS uses Trusted Research Environments like OpenSAFELY, where researchers analyze data on secure servers without it ever leaving the environment. The US Department of Defense mandates DevSecOps reference designs for deploying LLMs to ensure data provenance and traceability.

The Cost Structure Problem With Cloud Voice AI

Cloud voice platforms advertise low base rates—often $0.05 per minute—but the true cost compounds when you add STT, TTS, LLM, and telephony. Vapi's total cost can reach $0.18–$0.33 per minute when all components are factored in. Retell AI's infrastructure fee starts at $0.055/minute, with TTS adding $0.015/minute and LLM adding $0.04/minute, pushing totals to $0.07–$0.31 per minute.

The underlying APIs introduce separate charges:

| Service | Component | Pricing |
|---|---|---|
| Google Speech-to-Text V2 | STT | $0.016/min (up to 500k mins) |
| AWS Transcribe | STT | $0.024/min (US East) |
| ElevenLabs | TTS | $0.06–$0.17 per 1k characters |
| Google Cloud TTS | TTS | $16–$30 per 1M characters |

Self-hosting converts these variable per-call costs into fixed infrastructure expenses. Once you provision hardware or a private cloud environment, the marginal cost of an additional call trends toward zero until that capacity is saturated, so spend grows in steps with infrastructure rather than with every minute of audio.
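To make the compounding concrete, here is a minimal Python sketch that stacks the per-minute components quoted above for Retell AI and projects a monthly bill. The function names and the 10,000-minute volume are illustrative assumptions, and telephony is left out because no per-minute figure for it is given here.

```python
# Per-minute component fees quoted above for Retell AI (USD).
RETELL_COMPONENTS = {
    "infrastructure": 0.055,
    "tts": 0.015,
    "llm": 0.04,
}


def per_minute_total(components: dict[str, float]) -> float:
    """Sum the per-minute fees of every billed component."""
    return round(sum(components.values()), 4)


def monthly_bill(components: dict[str, float], minutes: int) -> float:
    """Project a monthly bill for a given call volume."""
    return round(per_minute_total(components) * minutes, 2)


if __name__ == "__main__":
    rate = per_minute_total(RETELL_COMPONENTS)
    # 10,000 minutes/month is a hypothetical volume chosen for illustration.
    bill = monthly_bill(RETELL_COMPONENTS, 10_000)
    print(f"Effective rate: ${rate:.3f}/min")
    print(f"10,000 min/month: ${bill:,.2f}")
```

With these three components alone the effective rate is twice the advertised infrastructure fee, which is the gap the base-rate headline hides.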

Control, Customization, and Avoiding Vendor Lock-In

Proprietary cloud platforms use custom APIs, model configurations, and pricing tiers that make migration expensive. Industry analysts warn that incumbent vendors are tightening API access to monetize platforms, imposing rate limits and higher fees. Forrester notes that enterprise application vendors are using entrenched positions to push high-margin AI products, which "dramatically increases vendor lock-in and strategic risk".

Self-hosted open-source solutions—released under permissive licenses like Apache 2.0 or BSD—ensure you own your stack, voice configurations, and conversation logic permanently. This portability preserves auditability and prevents you from being constrained by proprietary pricing changes.

Core Components of a Self-Hosted Voice AI Stack

A production voice AI system requires four layers:

- STT layer — Converts incoming audio to text

- LLM layer — Interprets input and generates responses

- TTS layer — Converts text responses back to audio

- Orchestration layer — Manages conversation state, routing, integrations, and context retention

Each layer has distinct latency, accuracy, and resource tradeoffs worth understanding before you start building.
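The four layers can be sketched as a single class that chains three callables and keeps conversation history. This is an illustrative outline, not any particular framework's API: `VoicePipeline`, `handle_turn`, and the stub functions are hypothetical names, and the stubs stand in for real models so the example runs anywhere.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class VoicePipeline:
    """Chains the three model layers; `history` holds orchestration state."""
    stt: Callable[[bytes], str]        # audio -> text
    llm: Callable[[list[dict]], str]   # chat history -> reply text
    tts: Callable[[str], bytes]        # reply text -> audio
    history: list[dict] = field(default_factory=list)

    def handle_turn(self, audio: bytes) -> bytes:
        """Run one caller turn through STT, LLM, and TTS in order."""
        user_text = self.stt(audio)
        self.history.append({"role": "user", "content": user_text})
        reply = self.llm(self.history)
        self.history.append({"role": "assistant", "content": reply})
        return self.tts(reply)


# Stub layers (assumptions) so the sketch runs without any models installed.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_llm(history: list[dict]) -> str:
    return f"You said: {history[-1]['content']}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


if __name__ == "__main__":
    pipeline = VoicePipeline(stt=fake_stt, llm=fake_llm, tts=fake_tts)
    print(pipeline.handle_turn(b"hello"))
```

Passing the layers in as callables keeps each one swappable, which is the property that lets you change model sizes or vendors later without touching the conversation logic.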

Speech-to-Text (STT) Layer

OpenAI Whisper (and derivatives like Faster-Whisper) sets the accuracy benchmark for self-hosted STT. Faster-Whisper, built on CTranslate2, delivers up to 4x faster inference than the original model with identical accuracy. INT8 quantization reduces Whisper large-v3's VRAM footprint to under 3GB while cutting inference time by over 50%.

Faster-Whisper Benchmarks (Large-v2/v3, 13-min audio):

| Implementation | Precision | VRAM | Time |
|---|---|---|---|
| OpenAI Whisper | FP16 | 4708 MB | 2m 23s |
| Faster-Whisper | FP16 | 4525 MB | 1m 03s |
| Faster-Whisper | INT8 | 2926 MB | 59s |

For real-time voice agents, STT must return results fast enough to avoid perceptible delay. Model size (tiny, base, large-v3) trades speed against accuracy — tiny runs on CPU for low-stakes transcription; large-v3 is warranted when accuracy directly affects downstream LLM quality.
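A minimal sketch of that size-versus-accuracy choice using the `faster-whisper` package follows. The helper `choose_whisper_config` and its decision rule are assumptions for illustration; in particular, picking `base` as the GPU middle ground is a guess, not a benchmarked recommendation. The `WhisperModel` import is deferred so the helper can be exercised without the package or a GPU.

```python
def choose_whisper_config(has_gpu: bool, accuracy_critical: bool) -> dict:
    """Pick a model size and precision from the tradeoffs described above."""
    if not has_gpu:
        # tiny is small enough to run on CPU for low-stakes transcription.
        return {"model_size": "tiny", "device": "cpu", "compute_type": "int8"}
    if accuracy_critical:
        # large-v3 with INT8 weights, matching the benchmark row above.
        return {"model_size": "large-v3", "device": "cuda", "compute_type": "int8"}
    # Assumption: base as a middle ground when a GPU is available.
    return {"model_size": "base", "device": "cuda", "compute_type": "int8"}


def transcribe(path: str, config: dict) -> str:
    """Transcribe one audio file; requires `pip install faster-whisper`."""
    # Imported lazily so the config helper works without the package.
    from faster_whisper import WhisperModel

    model = WhisperModel(
        config["model_size"],
        device=config["device"],
        compute_type=config["compute_type"],
    )
    segments, _info = model.transcribe(path, beam_size=5)
    # `segments` is a generator; decoding happens as it is consumed.
    return " ".join(segment.text.strip() for segment in segments)


if __name__ == "__main__":
    print(choose_whisper_config(has_gpu=True, accuracy_critical=True))
```

In a live agent you would load the model once at startup and reuse it across calls; it is constructed inside `transcribe` here only to keep the sketch short.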

LLM and TTS Layers

Use smaller instruction-tuned models (7B–13B parameters, 4-bit quantized) for latency-sensitive voice deployments. Larger models improve response quality but add hundreds of milliseconds of inference time. The target threshold for natural conversation is sub-500ms end-to-end latency. Using Q4_K_M 4-bit quantization, an 8B model like Llama 3.1 requires approximately 6.2 GB of VRAM.

LLM Inference Benchmarks (Llama 3 8B, Q4_K_M):

| GPU | VRAM | Tokens/Sec |
|---|---|---|
| RTX 3090 | 24 GB | 111.74 |
| RTX 4090 | 24 GB | 186.21 |
| A100 | 80 GB | 125.00 |
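Throughput translates directly into speaking delay. The sketch below converts the tokens-per-second figures from the table into generation time for a short reply. The 40-token reply length is an assumption chosen for illustration, and the estimate ignores prompt processing and the fact that streaming TTS can start before generation finishes.

```python
# Tokens/sec figures from the benchmark table above (Llama 3 8B, Q4_K_M).
TOKENS_PER_SEC = {
    "RTX 3090": 111.74,
    "RTX 4090": 186.21,
    "A100": 125.00,
}


def generation_ms(tokens: int, tokens_per_sec: float) -> float:
    """Time in milliseconds to generate `tokens` at a steady decode rate."""
    if tokens_per_sec <= 0:
        raise ValueError("tokens_per_sec must be positive")
    return round(tokens / tokens_per_sec * 1000, 1)


if __name__ == "__main__":
    reply_tokens = 40  # hypothetical length of one spoken sentence
    for gpu, tps in TOKENS_PER_SEC.items():
        print(f"{gpu}: {generation_ms(reply_tokens, tps)} ms")
```

At these rates a 40-token reply takes roughly 0.2 to 0.4 seconds to generate in full, which is why short responses and streamed output matter for a sub-500ms target.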

TTS options for self-hosted production include:

- Kokoro-82M — Ultra-lightweight, 82M parameters, sub-0.3s latency, <2GB VRAM

- FishSpeech — 4B parameters, 80+ languages, zero-shot voice cloning, 12–24GB VRAM

- F5-TTS — Multilingual, 2–3GB VRAM, MIT license

- MaskGCT — 315M–695M parameters, ~10GB VRAM, multilingual

The Orchestration Layer

The three model layers handle transcription, reasoning, and speech — but orchestration is what turns them into a usable agent. It manages turn-taking logic, interruption handling (barge-in), conversation context across 10+ turns, telephony integration (SIP/WebRTC), tool calls, CRM lookups, and compliance logging. Without a dedicated orchestration layer, teams typically spend several weeks engineering this plumbing before writing a single line of agent logic.
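Turn-taking and barge-in are easier to reason about as a small state machine. The sketch below is one possible shape for it, not how any specific platform implements it: `TurnManager`, its states, and its event names are hypothetical, and a real orchestrator would also cancel the in-flight TTS stream and LLM request when a barge-in fires.

```python
from enum import Enum, auto


class State(Enum):
    LISTENING = auto()   # waiting for or receiving caller speech
    THINKING = auto()    # STT done, LLM generating a reply
    SPEAKING = auto()    # TTS audio is playing to the caller


class TurnManager:
    """Tracks whose turn it is and detects barge-in."""

    def __init__(self) -> None:
        self.state = State.LISTENING
        self.interrupted_replies = 0

    def on_user_speech_end(self) -> None:
        if self.state is State.LISTENING:
            self.state = State.THINKING

    def on_reply_ready(self) -> None:
        if self.state is State.THINKING:
            self.state = State.SPEAKING

    def on_playback_done(self) -> None:
        if self.state is State.SPEAKING:
            self.state = State.LISTENING

    def on_user_speech_start(self) -> bool:
        """Return True when the caller barged in and playback must stop."""
        if self.state is State.SPEAKING:
            self.interrupted_replies += 1
            self.state = State.LISTENING
            return True
        return False


if __name__ == "__main__":
    tm = TurnManager()
    tm.on_user_speech_end()
    tm.on_reply_ready()
    print("barge-in:", tm.on_user_speech_start(), "state:", tm.state.name)
```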

A complete voice pipeline can run concurrently on a single 24GB GPU. Combining Faster-Whisper Large-v3 INT8 (~2.9GB), a 4-bit 8B LLM (~6.2GB), and Kokoro-82M TTS (~2GB) consumes approximately 11.1GB of VRAM, leaving ample headroom for KV cache and context windows. That headroom matters in practice — it means you can run multiple concurrent sessions or swap in a larger LLM without adding a second GPU.
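The arithmetic behind that figure is worth keeping in a script so you can re-run it when you swap a model. This sketch uses the approximate footprints quoted above; the function name `vram_budget` is illustrative.

```python
GPU_VRAM_GB = 24.0

# Approximate footprints quoted in the text above.
STACK_GB = {
    "Faster-Whisper large-v3 INT8": 2.9,
    "8B LLM, 4-bit": 6.2,
    "Kokoro-82M TTS": 2.0,
}


def vram_budget(total_gb: float, components: dict[str, float]) -> tuple[float, float]:
    """Return (used_gb, headroom_gb) for a set of co-resident models."""
    used = round(sum(components.values()), 1)
    return used, round(total_gb - used, 1)


if __name__ == "__main__":
    used, headroom = vram_budget(GPU_VRAM_GB, STACK_GB)
    print(f"Model weights: {used} GB, headroom: {headroom} GB")
```

The headroom is what the KV cache and concurrent sessions draw from, so treat it as a budget to measure against under load rather than as free space.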

Self-Hosted vs. Cloud Voice AI: A Direct Comparison

| Criterion | Self-Hosted | Cloud API |
|---|---|---|
| Data Privacy & Compliance | Full data sovereignty; no third-party processors | Data leaves your network; requires BAAs |
| Cost Model | Fixed infrastructure cost; near-zero marginal cost as volume grows | Per-minute fees that compound at volume |
| Latency | Sub-500ms achievable on adequate hardware | 200–800ms telephony + API delays |
| Customization/Control | Full model tuning, voice personas, integrations | Limited to API parameters |
| Time to Deploy | Minutes to hours (with platforms); days (DIY) | Near-instant setup |
| Vendor Lock-in Risk | None; open-source portability | Proprietary APIs and pricing tiers |
| Scalability | Linear with infrastructure | Scales automatically but costs scale linearly |

Where Cloud Voice AI Has the Edge

Managed cloud platforms—Vapi, Retell, Bland AI, ElevenLabs—offer genuine advantages: minimal infrastructure setup, managed uptime, and access to the latest proprietary models without MLOps overhead. For early-stage products or teams without dedicated DevOps, that's a real time-to-market advantage.

Where Self-Hosting Wins Decisively

Self-hosting pulls ahead in the situations that matter most:

- Regulated data environments — HIPAA, GDPR, and PCI DSS compliance is non-negotiable when audio contains protected information

- High-volume deployments — Per-minute cloud fees become prohibitive at scale; self-hosting offers predictable infrastructure costs

- Custom requirements — Organizations needing model fine-tuning, proprietary voice personas, or deep integration with internal systems unavailable via public APIs

The choice isn't always binary. A hybrid approach works well in practice: run self-hosted orchestration and your agent layer on-premise, then route LLM inference to external APIs only where the tradeoff makes sense. Teams get cost control where volume demands it and model quality where the use case requires it.
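One way to keep a hybrid setup auditable is to declare, per layer, whether the backend is self-hosted, then derive which layers send data off-network. The sketch below is a hypothetical configuration shape, not a real platform's schema; the backend names are placeholders, and running TTS locally is an assumption added to complete the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Backend:
    name: str
    self_hosted: bool


def external_components(pipeline: dict[str, Backend]) -> list[str]:
    """List the layers whose data leaves your network, sorted by name."""
    return sorted(layer for layer, b in pipeline.items() if not b.self_hosted)


# Hybrid layout described above: only LLM inference goes to an external API.
HYBRID = {
    "orchestration": Backend("on-prem orchestrator", True),
    "stt": Backend("faster-whisper", True),
    "llm": Backend("external LLM API", False),
    "tts": Backend("kokoro-82m", True),
}

if __name__ == "__main__":
    print("Layers leaving the network:", external_components(HYBRID))
```

In this layout transcripts reach the external LLM even though raw audio does not, so the list this function returns is the set of vendors your compliance review still has to cover.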

Top Self-Hosted Voice AI Platforms and Models in 2026

The open-source model landscape includes:

- STT: Faster-Whisper (large-v3) leads in accuracy — 22.1k GitHub stars

- TTS: Kokoro-82M for lightweight deployments (9.8M Hugging Face downloads); FishSpeech for higher quality and voice cloning (29.6k stars)

- LLMs: Llama 3 (29.3k stars, 1.3M monthly downloads for 8B-Instruct) and Mistral (10.8k stars, 776k monthly downloads for 7B-v0.1) dominate self-hosted production deployments

Dograh AI (open-source under BSD 2-Clause) sits above the model layer as a production-ready, self-hostable voice agent platform. It pre-integrates major STT, LLM, and TTS models and includes a no-code/low-code workflow builder for multi-agent conversational flows with context retention across 45+ minute conversations.

The platform is SOC 2, HIPAA, GDPR, and PCI DSS compliant, and deploys agents in approximately 2 minutes.

The key differentiator from raw model self-hosting: Dograh handles the orchestration layer by default—turn-taking, barge-in, tool calls, state management, retries, SIP/WebRTC telephony, and compliance logging—so teams skip the custom integration work required to wire those components together independently.

That orchestration value also shapes the pricing model. Unlike proprietary cloud platforms (Vapi at $0.05/min base plus components, Retell at $0.055/min infrastructure with $0.07–$0.31/min all-in, Bland AI at $0.09/min), Dograh charges no platform fees. Users bring their own API keys for STT, TTS, and LLM and pay those providers directly.

Setting Up Your Self-Hosted Voice AI Stack: Key Steps

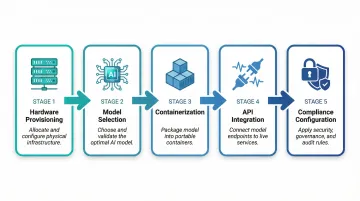

Most teams can get a self-hosted voice AI stack running in less than a week. The process breaks into five distinct phases:

- Hardware or cloud infrastructure provisioning — Minimum GPU VRAM for STT + LLM + TTS running concurrently (~11GB for optimized stack; 24GB GPU recommended)

- Model selection and download — Choose model sizes based on latency vs. quality requirements

- Containerization — Use Docker Compose to run STT, LLM, TTS, and orchestration services as isolated, restartable containers

- API integration — Connect the stack to telephony (SIP trunk, WebRTC) or web interfaces

- Compliance configuration — Enable audit logging, at-rest encryption, and network isolation policies

Teams without deep MLOps experience can bypass much of the custom integration work by using a pre-integrated platform. Dograh AI, for example, packages models, orchestration, workflow logic, and compliance controls into a deployable open-source repository — reducing setup from days of custom integration to a single deployment command, with agents configurable in under 2 minutes through a no-code workflow builder.

Choosing infrastructure is the next decision. GPU server costs vary significantly depending on VRAM requirements and provider:

Public GPU Server Pricing (Retrieved April 2026):

| Provider | GPU Model | VRAM | Monthly Cost |
|---|---|---|---|
| Hetzner (GEX44) | RTX 4000 SFF Ada | 20 GB | ~$200 |
| RunPod | RTX 4090 | 24 GB | ~$245 |
| Lambda Labs | A100 PCIe | 40 GB | ~$1,433 |

Ongoing operational considerations:

- Pin STT and TTS model versions and update them on a schedule, re-testing accuracy and latency before each rollout so production never drifts onto an unvalidated version

- Plan VRAM allocation before deployment — running STT, LLM, and TTS concurrently on a single GPU causes contention without explicit memory budgeting

- Monitor end-to-end response times continuously; natural conversation requires staying under 500ms
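For the monitoring item, a percentile check against the 500ms target is usually more informative than an average, because a few slow turns are what callers notice. The sketch below is a minimal example; tracking the 95th percentile and the names `percentile` and `over_budget` are assumptions, not a prescribed method.

```python
import math

TARGET_MS = 500  # end-to-end target for natural conversation, per the text


def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile of `samples`; `pct` is in the range 1..100."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def over_budget(samples: list[float], pct: int = 95,
                target_ms: float = TARGET_MS) -> bool:
    """True when the chosen percentile of response times exceeds the target."""
    return percentile(samples, pct) > target_ms


if __name__ == "__main__":
    # Hypothetical end-to-end response times in milliseconds.
    recent = [310, 420, 380, 450, 610, 390, 405, 370, 440, 398]
    print("p95:", percentile(recent, 95), "over budget:", over_budget(recent))
```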

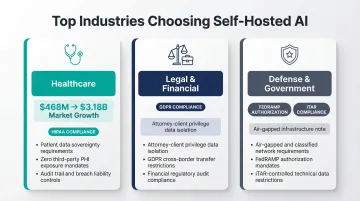

Which Industries Benefit Most from Self-Hosting Voice AI

Self-hosting delivers the clearest ROI in three verticals where data control isn't optional:

Healthcare: HIPAA requires covered entities to sign BAAs with every vendor that handles PHI. Cloud voice APIs cannot always provide a BAA or adequate PHI protection guarantees, which makes self-hosting the safest path for patient-facing voice applications. The global market for AI voice agents in healthcare is projected to expand from $468 million in 2024 to $3.18 billion by 2030 (37.79% CAGR).

Legal and financial services: Attorney-client privilege and financial data confidentiality create strong incentives to keep conversation data on-premise. Under GDPR Chapter V, international data transfers require adequate safeguards, which makes self-hosting within the required jurisdiction the most straightforward compliance path.

Defense and government: Regulatory frameworks like FedRAMP and ITAR often mandate that AI processing occur within controlled, air-gapped, or sovereign infrastructure.

Compliance isn't the only driver. SMBs and mid-market companies are increasingly self-hosting for cost reasons alone. At moderate call volumes, typically once usage passes roughly a thousand minutes per month on a single GPU server, self-hosting can beat cloud API costs even before compliance benefits enter the equation. Key factors that accelerate the crossover:

- Fixed infrastructure costs fall below variable API spend

- No per-minute or per-call vendor markups

- ROI crossover typically arrives within 6–12 months of deployment

Frequently Asked Questions

What hardware do I need to self-host a voice AI system?

Small-scale deployments run on a single NVIDIA GPU with 12GB+ VRAM (e.g., RTX 3060); enterprise setups require 24GB+ VRAM servers. CPU-only runs are possible but costly in latency—an 8B LLM on CPU yields ~5.6 tokens/second versus ~40.6 tokens/second on an entry-level RTX 4060.

Is self-hosted voice AI HIPAA compliant?

Self-hosting supports HIPAA compliance by keeping PHI in your controlled environment, but you still need encryption, access controls, audit logging, and BAAs with any remaining vendors that touch PHI. Dograh AI provides SOC 2, HIPAA, GDPR, and PCI DSS compliance controls out of the box.

How does self-hosted voice AI latency compare to cloud solutions?

Self-hosting removes the network round-trip to an external inference endpoint: H100 inference averages 18ms latency versus ~350ms for cloud endpoints. Cloud pipelines also accumulate 200–800ms in telephony delays plus 40–300ms in STT and 50–250ms in TTS before a response reaches the caller.
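Adding up the ranges quoted above shows how little room a cloud pipeline leaves. This sketch only sums the stated figures; the dictionary and function names are illustrative, and LLM time is excluded because no range for it is given in this answer.

```python
# (min_ms, max_ms) ranges quoted above for a cloud pipeline.
CLOUD_STAGES_MS = {
    "telephony": (200, 800),
    "stt": (40, 300),
    "tts": (50, 250),
}


def total_range(stages: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Best-case and worst-case totals across all stages."""
    low = sum(lo for lo, _ in stages.values())
    high = sum(hi for _, hi in stages.values())
    return low, high


if __name__ == "__main__":
    low, high = total_range(CLOUD_STAGES_MS)
    print(f"Before any LLM time: {low}-{high} ms")
    print(f"Best-case room left in a 500 ms budget: {500 - low} ms")
```

Even in the best case, these three stages consume more than half of a 500ms budget before the LLM produces a token.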

What is the difference between self-hosting TTS and a full voice AI agent?

Self-hosting TTS means running a text-to-audio model on your infrastructure. A full voice agent adds STT (audio to text), LLM reasoning (response generation), conversation state management, telephony integration, and workflow logic on top of TTS—requiring orchestration to coordinate these components.

Can I mix self-hosted and cloud models in the same voice AI pipeline?

Yes, hybrid architectures are common. You can run self-hosted orchestration and STT while routing LLM inference to an external API. Dograh AI supports configurable model backends with BYOK (bring-your-own-keys), letting you choose STT, LLM, and TTS vendors independently per component.

How much does it cost to self-host voice AI versus using a cloud API?

Self-hosting carries higher upfront cost but near-zero marginal cost per call at scale, while cloud APIs charge per minute and compound at volume. The crossover typically hits at roughly 600–1,100 minutes/month: a $200/month GPU server costs the same as about 606 minutes at $0.33/min or about 1,111 minutes at $0.18/min, and undercuts cloud rates beyond that threshold.
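The break-even point is a single division, sketched below with the figures from this answer. The function name is illustrative, and the model is deliberately simple: it ignores engineering and operations time and assumes one server can absorb the whole volume.

```python
def break_even_minutes(fixed_monthly_usd: float, cloud_rate_per_min: float) -> float:
    """Monthly minutes at which a fixed server cost equals per-minute cloud spend."""
    if cloud_rate_per_min <= 0:
        raise ValueError("cloud_rate_per_min must be positive")
    return fixed_monthly_usd / cloud_rate_per_min


if __name__ == "__main__":
    server_cost = 200.0  # USD/month, the GPU server figure quoted above
    for rate in (0.18, 0.33):
        minutes = break_even_minutes(server_cost, rate)
        print(f"At ${rate:.2f}/min, break-even is about {minutes:,.0f} minutes/month")
```

Plugging in your own server price and blended cloud rate gives a quick first estimate before you build a fuller cost model.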