Introduction

AI voice assistants are everywhere—Alexa handles shopping requests, Google Assistant manages calendars, cloud-based phone bots field customer calls. Yet every commercial option routes your voice data through third-party servers, creating compliance, privacy, and cost risks for businesses in regulated industries.

The stakes are real. Amazon faced FTC and DOJ charges in 2023 for retaining children's Alexa voice recordings indefinitely and allowing 30,000 employees access to audio clips. Google settled for $68 million over claims its assistant recorded communications without consent.

For healthcare providers, legal firms, and financial institutions subject to HIPAA, GDPR, or PCI DSS, those aren't edge cases. They're exactly the scenarios your infrastructure needs to prevent.

Self-hosting puts that control back in your hands. Building a capable AI voice assistant with open-source components is achievable, but results vary based on architecture choices, model configuration, and pipeline integration. This guide covers what to prepare, the exact build steps, and the mistakes that derail most first attempts.

TL;DR

- Three components power every self-hosted voice assistant: STT, a local LLM, and TTS — connected by an orchestration layer

- Self-hosting keeps voice data inside your infrastructure, making it the default choice for HIPAA, GDPR, and PCI DSS compliance

- Minimum viable hardware is 16GB RAM, 8-core CPU, and a GPU with 8GB VRAM — CPU-only works but adds noticeable latency

- The most common failures: using base models instead of instruction-tuned variants, and underestimating hardware requirements until after deployment

- Dograh AI ships STT, LLM, and TTS pre-integrated into a deployable self-hosted stack, cutting setup time significantly

What You Need Before Building a Self-Hosted AI Voice Assistant

Before writing a single line of configuration, verify that your hardware, software, and compliance posture are all in place. Gaps in any of these three areas are where most self-hosted builds stall.

Hardware and Infrastructure Requirements

CPU-only minimum:

- 8-core processor

- 16GB RAM

- 20GB dedicated storage for model weights and audio components

GPU-accelerated (recommended):

- NVIDIA RTX-class GPU with 8–16GB VRAM

- Required for sub-500ms end-to-end latency in most configurations

Model weights range from 4GB for small quantized variants to 70GB+ for large models — 20GB storage is the floor, not a target. Once your hardware is confirmed, verify your software dependencies before loading any models.

Software and Model Prerequisites

Required software dependencies:

- Linux-based OS (Ubuntu 22.04+ recommended)

- Python 3.9 or later

- Docker or equivalent container runtime

- Ollama or similar model serving layer

- Microphone/audio interface for local testing

Model selection is where many builders make a costly mistake. Only instruction-tuned models with tool-calling support (such as Llama 3 8B Instruct, Qwen3, or DeepSeek-R1) enable the assistant to perform actions, not just answer questions. Base completion models will not work for this purpose.

For regulated industries, software readiness also means confirming your deployment satisfies data residency rules before you build, not after.

Compliance and Data Residency Readiness

For healthcare, legal, finance, or government use cases, confirm before building that your deployment environment satisfies data residency requirements:

- All model inference, audio processing, and logging must occur within the regulated boundary

- Verify no telemetry or external API calls will be made by default

- Monitor network traffic using firewall rules or network monitoring tools to confirm no unexpected outbound connections

- Encrypt conversation logs at rest
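One way to make the "no external calls" check concrete is to validate every configured service endpoint before startup. The sketch below is a minimal illustration and not a substitute for firewall rules or packet capture. The endpoint URLs and the `is_inside_boundary` helper are hypothetical, the boundary is assumed to be loopback plus private IP ranges, and any DNS name other than `localhost` is treated as external because the check deliberately avoids DNS lookups.

```python
import ipaddress
from urllib.parse import urlparse


def is_inside_boundary(url: str) -> bool:
    """Return True if the URL points at loopback or a private-range IP.

    Assumption: the regulated boundary equals loopback plus private
    address ranges. Adjust this to your own network definition.
    """
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        address = ipaddress.ip_address(host)
    except ValueError:
        # A DNS name: treated as external because it is not resolved here.
        return False
    return address.is_loopback or address.is_private


# Hypothetical endpoint map for the three pipeline services.
ENDPOINTS = {
    "stt": "http://127.0.0.1:9000",
    "llm": "http://localhost:11434",
    "tts": "http://10.0.0.12:5002",
}

external = [name for name, url in ENDPOINTS.items() if not is_inside_boundary(url)]
print("external endpoints:", external)
```

A configuration check like this only catches endpoints you know about. It complements, rather than replaces, monitoring actual outbound traffic.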

How to Build a Self-Hosted AI Voice Assistant

Step 1: Define Your Architecture and Choose Your Component Stack

Two architecture patterns:

- Fully air-gapped on-premise — all components self-hosted, no external API calls (required for strict HIPAA/GDPR data sovereignty)

- Hybrid — self-hosted LLM and TTS with optional cloud STT (faster deployment but creates compliance gaps)

Map the three required pipeline components:

- STT engine converts audio to text

- LLM reasoning layer interprets intent and generates responses

- TTS engine converts text back to speech

Every gap in this chain must be filled before the assistant can function end-to-end.

Step 2: Set Up the Speech-to-Text (STT) Engine

Install OpenAI Whisper or Faster-Whisper as your STT layer. Faster-Whisper runs up to 4x faster than the reference implementation at the same accuracy, so Word Error Rates are unchanged while inference time drops significantly.

Model size trade-offs:

- Base/Small variants: Faster inference on modest hardware (good for general conversation)

- Medium/Large variants: Better accuracy for accented speech and domain-specific vocabulary

For specialized deployments, domain fine-tuned Whisper models exist. Medical vocabulary fine-tuning reduced Word Error Rate from 63% to 32% on just 8.5 hours of training data.

Configuration decision: Run Whisper as a standalone service (better for multi-user or telephony setups) or embed within the voice loop script (simpler for single-user deployments).
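For the embedded option, a minimal sketch with the `faster-whisper` package might look like the following. It assumes the package is installed and that a CPU-friendly `int8` compute type is acceptable. The helper names `join_segments` and `transcribe_file` and the default model size are illustrative choices, not part of the original guide.

```python
def join_segments(segments) -> str:
    """Concatenate the text of transcription segments into one string."""
    return " ".join(segment.text.strip() for segment in segments).strip()


def transcribe_file(path: str, model_size: str = "small") -> str:
    """Transcribe one audio file with Faster-Whisper on CPU.

    The import is deferred so this module still loads on machines where
    the faster-whisper package is not installed.
    """
    from faster_whisper import WhisperModel

    # Loading the model on every call keeps the sketch short; a long-running
    # service would load it once and reuse it.
    model = WhisperModel(model_size, device="cpu", compute_type="int8")
    segments, _info = model.transcribe(path, beam_size=5)
    return join_segments(segments)
```

Calling `transcribe_file("sample.wav")` (a hypothetical file name) returns the full transcript as a single string. On a GPU host, `device="cuda"` with `compute_type="float16"` is the usual alternative.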

Step 3: Configure the Local LLM with Ollama

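Pull a tool-calling-capable model via Ollama. Check the model's page in the Ollama library for tool support first: the `llama3.1` tag is listed with tools, while the older `llama3` tag is not.

ollama pull llama3.1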

Or for lower hardware requirements:

ollama pull qwen3

The model must be instruction-tuned and support structured JSON output for tool calling — general-purpose base models will not handle agent tasks correctly.

Write a system prompt defining:

- The assistant's role and persona

- Behavioral constraints (politeness, response length, domain boundaries)

- Available tools and when to invoke them

Be explicit about tool invocation rules in your system prompt — vague instructions here produce inconsistent JSON output and unreliable agent behavior in production.
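As a rough illustration of the points above, the sketch below assembles a system prompt from a role, constraints, and tool descriptions, then sends it to Ollama's local `/api/chat` endpoint using only the standard library. The helper names, the prompt wording, and the example role are assumptions made for illustration. The port is Ollama's default.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/chat"  # Ollama's default local port


def build_system_prompt(role: str, constraints: list[str], tools: dict[str, str]) -> str:
    """Combine role, behavioral constraints, and tool rules into one prompt."""
    lines = [f"You are {role}.", "Constraints:"]
    lines += [f"- {constraint}" for constraint in constraints]
    lines.append('Tools (reply only with JSON {"tool": ..., "args": ...} to call one):')
    lines += [f"- {name}: {when}" for name, when in tools.items()]
    return "\n".join(lines)


def build_chat_payload(model: str, system_prompt: str, user_text: str) -> dict:
    """Build a non-streaming chat request body."""
    return {
        "model": model,
        "stream": False,
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_text},
        ],
    }


def chat(payload: dict) -> str:
    """POST the payload to the local Ollama server and return the reply text."""
    request = urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.loads(response.read())["message"]["content"]
```

With a model pulled and the server running, `chat(build_chat_payload("qwen3", prompt, "Can you call me back tomorrow?"))` returns the assistant's text. Keeping the prompt builder separate makes it easy to version and review the tool rules.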

With your LLM layer configured, the next step is giving it a voice.

Step 4: Integrate the Text-to-Speech (TTS) Engine

Choose a TTS engine based on your hardware and quality requirements:

| Engine | Strengths | Hardware | Voice Quality |
|---|---|---|---|
| Piper | Lightweight, CPU-friendly, used by Home Assistant | Runs on Raspberry Pi 5 (~0.54s inference) | Good naturalness for most use cases |
| Coqui XTTSv2 | High naturalness, cross-language voice cloning | GPU-accelerated for <200ms latency | Superior voice quality, ideal for customer-facing deployments |

Voice naturalness directly impacts user trust and adoption. For business-facing deployments, invest in higher-quality TTS.

Configure interruption handling: A voice assistant that insists on finishing its response before accepting new input creates poor UX, so let the user interrupt playback mid-sentence. Buffer and stream TTS output to reduce perceived latency.
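One simple way to support interruption is to play TTS audio in small chunks and check a shared flag between chunks. The sketch below assumes some other component (for example a voice-activity detector running in its own thread) sets that flag when the user starts talking. The function name and the fake player are hypothetical.

```python
import threading
from typing import Callable, Iterable


def play_until_interrupted(
    chunks: Iterable[bytes],
    play_chunk: Callable[[bytes], None],
    interrupted: threading.Event,
) -> int:
    """Play audio chunks in order, stopping once `interrupted` is set.

    Returns the number of chunks that were actually played.
    """
    played = 0
    for chunk in chunks:
        if interrupted.is_set():
            break
        play_chunk(chunk)
        played += 1
    return played


# Demo with a fake player: the "user" interrupts after the second chunk.
stop = threading.Event()
heard = []


def fake_play(chunk: bytes) -> None:
    heard.append(chunk)
    if len(heard) == 2:
        stop.set()  # in a real system a detector thread would set this


played = play_until_interrupted([b"a", b"b", b"c", b"d"], fake_play, stop)
print(played)
```

Smaller chunks make the assistant stop sooner after an interruption, at the cost of more playback calls.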

Step 5: Connect the Voice Pipeline and Enable Agent Capabilities

The orchestration loop:

- Audio capture

- STT (speech-to-text)

- LLM inference

- Tool execution (if applicable)

- TTS (text-to-speech)

- Audio output

Test each component independently before chaining to isolate failure points.
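The loop above can be sketched as a single function over injected stage callables, which also makes it straightforward to test each stage on its own with a stand-in. This is a simplified, non-streaming sketch. The `run_turn` name and the callable signatures are assumptions, and audio capture and playback are left to the caller.

```python
from typing import Callable


def run_turn(
    audio: bytes,
    stt: Callable[[bytes], str],
    llm: Callable[[str], str],
    tts: Callable[[str], bytes],
    handle_tools: Callable[[str], str] = lambda reply: reply,
) -> bytes:
    """Run one conversational turn: audio in, synthesized audio out."""
    transcript = stt(audio)            # speech-to-text
    reply = llm(transcript)            # LLM inference
    spoken_text = handle_tools(reply)  # tool execution, if applicable
    return tts(spoken_text)            # text-to-speech


# Stand-in stages that let the wiring be checked without any models loaded.
result = run_turn(
    b"hello",
    stt=lambda audio: audio.decode(),
    llm=lambda text: text.upper(),
    tts=lambda text: text.encode(),
)
print(result)
```

Because every stage is passed in, a real Whisper, Ollama, or Piper wrapper can replace a stand-in one at a time while the others stay fake.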

Enable tool calling:

The LLM outputs structured JSON function calls:

    {
      "tool": "schedule_callback",
      "args": {
        "phone": "+1-555-0123",
        "time": "2026-04-15T14:00:00Z"
      }
    }

The orchestration layer intercepts and executes these calls, enabling the assistant to trigger CRM updates, appointment booking, and data lookups. This is what moves a voice assistant from answering questions to actually doing work inside your systems.
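A minimal sketch of that interception step is shown below: parse the model's JSON, look the tool up in a registry, and call it with the supplied arguments. The `schedule_callback` handler body and the `TOOLS` registry are hypothetical stand-ins for real integrations.

```python
import json
from typing import Any, Callable


def schedule_callback(phone: str, time: str) -> str:
    """Hypothetical handler; a real one would write to a CRM or calendar."""
    return f"Callback to {phone} scheduled for {time}"


# Registry mapping tool names the model may emit to Python callables.
TOOLS: dict[str, Callable[..., Any]] = {"schedule_callback": schedule_callback}


def dispatch_tool_call(raw: str) -> Any:
    """Parse a JSON tool call and execute the matching registered handler."""
    call = json.loads(raw)
    if not isinstance(call, dict):
        raise ValueError("tool call must be a JSON object")
    handler = TOOLS.get(call.get("tool"))
    if handler is None:
        raise ValueError(f"unknown tool: {call.get('tool')!r}")
    return handler(**call.get("args", {}))
```

Restricting execution to an explicit registry means the model can only trigger functions you have deliberately exposed.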

Add memory and RAG for business context:

- Persistent key-value store for session data

- Vector store (ChromaDB or similar) for RAG-enabled access to knowledge bases, customer records, or call scripts

This enables personalization and contextual accuracy across sessions. Once all five steps are complete and the pipeline is wired together and tested, you have a fully self-hosted voice agent, with no third-party APIs in the loop and no data leaving your environment.
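For the session-data store listed above, a small SQLite-backed key-value table is often enough to start with, since it ships with Python and keeps data in a local file. The `SessionStore` class and its schema below are illustrative assumptions. A vector store for RAG would sit alongside it.

```python
import sqlite3
from typing import Optional


class SessionStore:
    """Tiny persistent key-value store scoped by session ID."""

    def __init__(self, path: str = ":memory:") -> None:
        # Pass a file path for persistence; ":memory:" is only for testing.
        self._db = sqlite3.connect(path)
        self._db.execute(
            "CREATE TABLE IF NOT EXISTS kv ("
            "session_id TEXT, key TEXT, value TEXT, "
            "PRIMARY KEY (session_id, key))"
        )

    def set(self, session_id: str, key: str, value: str) -> None:
        with self._db:  # commits on success, rolls back on error
            self._db.execute(
                "INSERT OR REPLACE INTO kv VALUES (?, ?, ?)",
                (session_id, key, value),
            )

    def get(self, session_id: str, key: str) -> Optional[str]:
        row = self._db.execute(
            "SELECT value FROM kv WHERE session_id = ? AND key = ?",
            (session_id, key),
        ).fetchone()
        return row[0] if row else None
```

In a regulated deployment the database file falls under the same encryption-at-rest requirement as conversation logs.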

Key Variables That Affect Your Voice Assistant's Performance

Two setups using identical components can produce dramatically different results. The culprit is rarely the component teams blame first — most often it's STT latency or audio quality, not the LLM. These three variables account for the majority of real-world performance gaps.

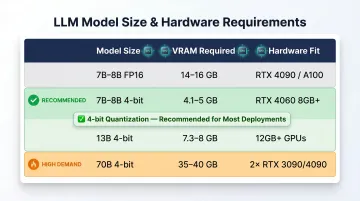

LLM Model Size and Quantization

Larger models produce more accurate, context-aware responses but require more VRAM and longer token generation times. Quantized models (4-bit, 8-bit) shrink the memory footprint with minimal quality loss — and they often outperform smaller full-precision models on the same hardware.

A 2024 evaluation found that a larger LLM quantized down to roughly the size of a smaller FP16 LLM generally performs better across benchmarks. For example, a 4-bit Llama-2-13B outperforms an FP16 Llama-2-7B.

| Model Size | Precision | VRAM Required | Hardware Fit |
|---|---|---|---|
| 7B-8B | FP16 | 14-16 GB | RTX 4090, A100 |
| 7B-8B | 4-bit (Q4_K_M) | 4.1-5 GB | RTX 4060 (8GB+) |
| 13B | 4-bit (Q4_K_M) | 7.3-8 GB | 12GB+ GPUs |
| 70B | 4-bit (Q4_K_M) | 35-40 GB | 2x RTX 3090/4090 |

For edge or on-device deployments, 4-bit quantization is the practical path to running capable models without high-end server hardware.
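The VRAM figures in the table follow roughly from parameter count times bits per weight. The sketch below is a back-of-the-envelope estimate for weights only. The function names and the 1.5 GB headroom for KV cache and runtime overhead are assumptions, and real quantization formats such as Q4_K_M use slightly more than 4 bits per weight on average.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in GB (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_in_vram(
    vram_gb: float,
    params_billion: float,
    bits_per_weight: float,
    headroom_gb: float = 1.5,  # assumed allowance for KV cache and overhead
) -> bool:
    """Rough check that weights plus headroom fit on a single GPU."""
    return weight_memory_gb(params_billion, bits_per_weight) + headroom_gb <= vram_gb


for size, bits in [(7, 16), (8, 4), (13, 4.5), (70, 4)]:
    print(f"{size}B at {bits} bits: ~{weight_memory_gb(size, bits):.1f} GB")
```

Treat the result as a lower bound: longer context windows grow the KV cache, so leave more headroom for long conversations.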

End-to-End Pipeline Latency

Voice interactions have tight perceptual thresholds. Delays above roughly 0.6–0.7 seconds start to feel robotic, and response times beyond 2 seconds feel unnatural. Every pipeline stage draws on the same cumulative budget, so no single component can be optimized in isolation.

Target latency budget for streaming pipelines:

- Audio transport (WebRTC): <50ms

- STT (first partial result): 100-200ms

- LLM Time-to-First-Token (TTFT): 200-400ms

- TTS Time-to-First-Byte (TTFB): 100-300ms

- Total perceived latency: <1 second

The highest-leverage optimization here is streaming TTS output: begin speaking the first sentence while the LLM is still generating the rest. This alone cuts perceived latency more than any component swap. Sub-500ms total latency requires both GPU acceleration and streaming output — neither alone gets you there.
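Streaming TTS this way needs a small buffer that turns the LLM's token stream into complete sentences as soon as each one ends. The sketch below uses a deliberately naive rule (a `.`, `!`, or `?` followed by whitespace), so abbreviations like "Dr. Smith" would split early. The function name is hypothetical.

```python
import re
from typing import Iterable, Iterator

# Naive boundary: sentence punctuation followed by whitespace.
_SENTENCE_END = re.compile(r"([.!?])\s")


def sentences_from_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Yield each sentence as soon as it is complete in the token stream."""
    buffer = ""
    for token in tokens:
        buffer += token
        while True:
            match = _SENTENCE_END.search(buffer)
            if match is None:
                break
            end = match.end(1)  # cut right after the punctuation mark
            sentence, buffer = buffer[:end].strip(), buffer[end:]
            if sentence:
                yield sentence
    tail = buffer.strip()
    if tail:
        yield tail  # flush whatever remains when generation ends


stream = ["Your ", "appointment ", "is ", "booked. ", "Anything ", "else?"]
for sentence in sentences_from_tokens(stream):
    print(sentence)
```

Each yielded sentence can be handed to the TTS engine immediately, so the first audio starts playing while later tokens are still arriving.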

Audio Quality and STT Accuracy

STT accuracy is the entry point of the entire pipeline. Nothing downstream can correct a mistranscription — a garbled input produces a garbled response, regardless of model quality. Background noise, microphone bandwidth, speaker accents, and domain-specific vocabulary all compound into accuracy loss.

Performance remains stable until approximately 3 dB Signal-to-Noise Ratio, below which degradation accelerates sharply. Microphone bandwidth has a measurable impact: narrowband capture (300 Hz–3.4 kHz) yields ~25% word error rate at 10 dB SNR, while super-wideband (20 Hz–20 kHz) drops that to ~12%.
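To see where a given microphone setup sits relative to these thresholds, SNR can be estimated from a stretch of speech and a stretch of background-only audio. The sketch below is a rough estimate, since the "speech" samples also contain noise. It assumes samples are already decoded to numeric amplitudes, and the helper names are illustrative.

```python
import math
from typing import Sequence


def rms(samples: Sequence[float]) -> float:
    """Root-mean-square amplitude of a block of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def snr_db(speech: Sequence[float], noise: Sequence[float]) -> float:
    """Estimate SNR in dB from a speech block and a noise-only block."""
    return 20 * math.log10(rms(speech) / rms(noise))


# Synthetic example: speech amplitude is ten times the noise amplitude.
speech_block = [1.0, -1.0] * 4
noise_block = [0.1, -0.1] * 4
print(f"{snr_db(speech_block, noise_block):.1f} dB")
```

Recording a few seconds of room noise before each test session gives a usable noise block for this comparison.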

For medical or legal deployments, fine-tuned Whisper models consistently outperform general-purpose models on specialized vocabulary — a standard Whisper model will stumble on drug names and legal Latin where a domain-tuned variant holds accuracy.

Common Mistakes and How to Troubleshoot Them

Most build failures trace back to the same handful of errors. Identifying them early saves hours of debugging. The four patterns below cover the most common failure points across model selection, hardware, error handling, and compliance.

Choosing a Base LLM Instead of an Instruction-Tuned Model

Symptom: The assistant ignores tool calls, produces unstructured output, or fails to follow system prompt instructions.

Cause: Using a base (completion) model instead of an instruction-tuned variant that understands chat format and JSON function-calling schema.

Fix: Switch to a model tagged as "instruct" or "chat" in Ollama's model library. Verify tool-calling support by testing with a simple JSON-output prompt before integrating into the pipeline.

Underestimating Hardware for Real-Time Inference

Symptom: The assistant responds correctly but with 5-10 second delays, making it unusable in conversation.

Cause: Running a large model on CPU-only hardware, or on a GPU with too little VRAM, which forces part of the model into slower system RAM.

Fix:

- Switch to a smaller quantized model (Phi-3.5 Mini or Llama 3 8B q4) for CPU environments

- Upgrade to a GPU with sufficient VRAM to hold the model entirely in memory

- Test each stage in isolation to confirm which step is the bottleneck
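To find the bottleneck, time each stage separately and compare it with the upper bounds from the latency budget earlier in this guide. The sketch below uses a small context manager for timing. The stage names and the `timed` and `over_budget` helpers are assumptions, and the `sleep` call stands in for a real STT request.

```python
import time
from contextlib import contextmanager

# Upper bounds from the streaming latency budget, in milliseconds.
BUDGET_MS = {"stt": 200, "llm_ttft": 400, "tts_ttfb": 300}

timings_ms: dict[str, float] = {}


@contextmanager
def timed(stage: str):
    """Record the wall-clock duration of the enclosed block for `stage`."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings_ms[stage] = (time.perf_counter() - start) * 1000


def over_budget(timings: dict[str, float], budget: dict[str, float] = BUDGET_MS) -> list[str]:
    """Return the stages whose measured time exceeds their budget."""
    return [stage for stage, ms in timings.items() if ms > budget.get(stage, float("inf"))]


with timed("stt"):
    time.sleep(0.01)  # stand-in for a real STT call

print(timings_ms, over_budget(timings_ms))
```

Measuring time-to-first-token and time-to-first-byte, rather than total completion time, matches how the budget is defined for streaming pipelines.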

No Error Handling in the Orchestration Loop

Symptom: The pipeline crashes silently when STT returns an empty string, the LLM output is malformed JSON, or the TTS engine fails to render.

Cause: Without exception handling at each stage, one failure breaks the entire session with no useful feedback.

Fix: Wrap each pipeline stage in error handlers with fallback responses — for example, "I didn't catch that, could you repeat?" — so a single failure doesn't collapse the session. Log all intermediate outputs (raw transcription, LLM response, tool call JSON) separately for debugging.
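A minimal sketch of that fix is shown below: each stage is wrapped so that an exception is logged and replaced by a fallback value, and intermediate outputs are logged along the way. The `guarded` and `safe_turn` names, the logger name, and the choice of empty audio as the TTS fallback are assumptions.

```python
import logging

logger = logging.getLogger("voice_pipeline")
FALLBACK = "I didn't catch that, could you repeat?"


def guarded(stage: str, fn, fallback):
    """Wrap a stage so failures are logged and replaced by `fallback`."""
    def wrapper(*args):
        try:
            result = fn(*args)
        except Exception:
            logger.exception("%s failed", stage)
            return fallback
        logger.info("%s output: %r", stage, result)  # intermediate output
        return result
    return wrapper


def safe_turn(audio: bytes, stt, llm, tts, silence: bytes = b"") -> bytes:
    """Run one turn; no single stage failure can crash the session."""
    transcript = guarded("stt", stt, "")(audio)
    if not transcript.strip():
        reply = FALLBACK  # empty transcription: ask the user to repeat
    else:
        reply = guarded("llm", llm, FALLBACK)(transcript)
    return guarded("tts", tts, silence)(reply)
```

If the TTS stage itself fails there is nothing left to speak with, so this sketch returns silence and relies on the log entry. A production system might play a pre-recorded prompt instead.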

Skipping Compliance Validation Until After Deployment

Symptom: A technically functional assistant that logs audio files to a default directory, sends model requests to an external API during testing, or stores conversation transcripts unencrypted.

Cause: Compliance checks were deferred until after the build, so teams discover regulatory exposure only during an audit, after the architecture is already locked in.

Fix:

- Audit all network calls using a packet sniffer or firewall rules to confirm zero external traffic

- Encrypt conversation logs at rest

- Verify deployment satisfies data residency requirements by jurisdiction

When to Self-Host vs. Use a Managed Voice AI Platform

Self-hosting isn't always the right choice. The decision depends on regulatory requirements, internal engineering capacity, and the scale and complexity of voice interactions needed.

Self-hosting makes clear sense when:

- Your industry mandates on-premise data processing (HIPAA, GDPR, PCI DSS, defense/government)

- You need full auditability of every voice interaction

- You expect high call volume and want to avoid per-minute cloud pricing that scales unpredictably

- You need to customize the voice pipeline (custom wake words, domain-specific STT fine-tuning, proprietary business logic in tool calls)

The cost case is concrete: cloud STT pricing ranges from $0.016–$0.075 per minute, and at scale that compounds fast. For workloads processing 10 billion tokens per month over three years, cloud API costs reach ~$3.33M versus ~$1.43M for on-premise—a 57% reduction.
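The arithmetic behind these figures is easy to reproduce for your own volumes. In the sketch below the two three-year totals come from the paragraph above, while the 100,000 minutes per month is a purely hypothetical volume used to show how per-minute STT pricing scales.

```python
def percent_reduction(baseline: float, alternative: float) -> float:
    """Percentage saved by choosing `alternative` over `baseline`."""
    return (baseline - alternative) / baseline * 100


def monthly_stt_cost(minutes: float, rate_per_minute: float) -> float:
    """Cloud STT cost for one month at a flat per-minute rate."""
    return minutes * rate_per_minute


cloud_total = 3.33e6    # three-year cloud API cost quoted above
on_prem_total = 1.43e6  # three-year on-premise cost quoted above
saving = percent_reduction(cloud_total, on_prem_total)
print(f"On-premise is about {saving:.0f}% cheaper")

minutes = 100_000  # hypothetical monthly call volume
low = monthly_stt_cost(minutes, 0.016)
high = monthly_stt_cost(minutes, 0.075)
print(f"Cloud STT alone: ${low:,.0f} to ${high:,.0f} per month")
```

Swapping in your own call volume and hardware quote gives a first-pass break-even estimate before any procurement decision.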

A managed platform is preferable when:

- Your team lacks the engineering bandwidth to maintain infrastructure

- You need to deploy in days rather than weeks

- Your use case is general-purpose and doesn't involve sensitive data

For teams that want self-hosting control without building everything from scratch, Dograh AI offers an open-source stack under the BSD 2-Clause license. It's HIPAA/GDPR-compliant, targets sub-500ms latency, and can be deployed in minutes — a practical middle ground for engineering teams that need compliance without a months-long infrastructure project.

Frequently Asked Questions

What hardware do I need to run a self-hosted AI voice assistant?

Minimum specs: 16GB RAM and an 8-core CPU, which supports slower, CPU-only inference. Recommended: GPU with 8-16GB VRAM for real-time performance. The LLM model size is the primary hardware driver, and larger models require proportionally more VRAM.

Can a self-hosted AI voice assistant be HIPAA or GDPR compliant?

Yes, self-hosted setups can meet HIPAA and GDPR requirements because no data leaves your infrastructure. Compliance still requires auditing components for external calls, encrypting stored audio and transcripts, and confirming the deployment environment falls within the regulated boundary.

What is the difference between a self-hosted and cloud-based AI voice assistant?

Cloud-based assistants process voice data on vendor servers, creating privacy and compliance risks. Self-hosted setups run all inference locally, giving the operator full control over data, model behavior, and infrastructure costs.

Which LLM works best for a self-hosted voice assistant?

Instruction-tuned models with tool-calling support: Llama 3 8B Instruct, Qwen3, or Phi-3.5 Mini for lower hardware requirements. Model choice depends on available VRAM, required latency, and whether the assistant needs to perform actions or only answer questions.

How do I reduce latency in a self-hosted AI voice assistant?

The three highest-impact optimizations:

- Use a GPU to run inference

- Select a smaller quantized model

- Stream TTS output while the LLM is still generating

Together, these can bring end-to-end response time below 500ms.

How much does it cost to self-host an AI voice assistant?

Costs consist of either an up-front hardware purchase (a GPU server) or rented compute (a cloud VM), plus ongoing infrastructure expenses like electricity or hosting, with no per-minute API fees. Cloud-based voice AI platforms charge per call or per minute, making costs difficult to predict as usage grows.