Introduction

Most teams building voice automation hit the same wall: proprietary platforms charge per call, lock your data behind their infrastructure, and leave you one pricing change away from a broken budget. Open-source tooling now makes it possible to build production-grade voice bots entirely on your own stack — without those dependencies.

This guide addresses developers, technical founders, and engineering leads in compliance-sensitive industries: healthcare, legal, and financial services. If you need full data control, want to avoid vendor lock-in, or face unpredictable per-call billing from managed platforms, the open-source path gives you a concrete way out.

You'll learn what an open-source voice bot is, why it matters for regulated environments, how to architect and build one step by step, what factors determine real-world performance, and the most common mistakes teams make when deploying voice AI in production.

TL;DR

- An open-source voice bot combines STT, an LLM, and TTS — all with production-ready open-source options available now

- Building from scratch gives full control over data residency, cost structure, and customization, which is critical for regulated industries

- Orchestrating components to hit sub-500ms latency is the real engineering challenge — not just assembling them

- Open-source doesn't mean free—STT processing, LLM inference, and TTS generation have infrastructure costs you must plan for

- Consider a framework like Dograh AI's BSD-licensed platform: it handles orchestration out of the box while remaining fully self-hostable and open source

What Is an Open-Source Voice Bot?

A voice bot is software that holds spoken conversations with humans by combining automatic speech recognition, AI-driven response generation, and speech synthesis. It differs fundamentally from IVRs (rule-based menu systems with touch-tone navigation) and text chatbots (which lack any voice channel).

"Open-source" in this context means the codebase is publicly available, self-hostable, and modifiable under a permissive license such as BSD, MIT, or Apache. Proprietary API services charge per call and give you no visibility into — or control over — the underlying logic. Open-source changes that equation entirely.

Every voice bot requires three functional layers:

- STT (Speech-to-Text): Converts spoken words to text — OpenAI Whisper is a common open-source choice

- LLM (Reasoning Layer): Processes intent and generates contextual responses — Llama and Mistral are popular self-hostable options

- TTS (Text-to-Speech): Synthesizes text back into natural speech — Coqui and Piper handle this well

Each layer has mature open-source options today. The real challenge isn't finding components — it's assembling them into a stack that performs reliably under production load.

Why Build Your Voice Bot with Open Source?

Data Sovereignty and Regulatory Compliance

When STT, LLM inference, and TTS all run on your own infrastructure, audio of customer calls never leaves your environment. This is non-negotiable for:

- HIPAA-regulated healthcare conversations: Cloud Service Providers processing electronic Protected Health Information (ePHI) must sign a Business Associate Agreement

- GDPR-sensitive EU data: The European Data Protection Board explicitly classifies voice data as biometric personal data, requiring valid legal bases under Articles 6 and 9

- Government and defense use cases: Contractual restrictions often prohibit third-party processing entirely

Recent enforcement shows regulators are serious: Italy's Garante fined OpenAI €15 million for GDPR violations, and France's CNIL fined Clearview AI €20 million for unlawful biometric processing.

Cost Structure: Fixed Infrastructure vs. Variable Per-Minute Fees

Proprietary voice platforms charge per minute of audio, per LLM token, and per character of TTS. These costs compound unpredictably at scale. Open-source shifts you to fixed infrastructure costs you can optimize yourself, changing unit economics at volume.

Cost Comparison at 10,000 Minutes/Month:

| Service | Managed API Pricing | Self-Hosted (AWS g6.xlarge) |
|---|---|---|
| STT | $144 (Google Cloud @ $0.0144/min) | Included in compute |
| LLM | ~$200–400 (token-based, varies by usage) | Included in compute |
| TTS | ~$30–50 (character-based) | Included in compute |
| Infrastructure | N/A | ~$584/month (~$0.80/hour × 730 hours) |
| Total | $374–594+ (variable, scales linearly) | ~$584 (fixed per instance; add instances as concurrency grows) |

At higher volumes, the fixed infrastructure model becomes significantly more economical — and predictable.
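To see where the two pricing models cross, a rough break-even calculation helps. The sketch below is illustrative only: the blended per-minute rates are derived from the managed totals in the table, while the function names and the hourly instance price are assumptions to replace with your own quotes.

```python
import math

def managed_monthly_cost(minutes: float, per_minute_rate: float) -> float:
    """Managed APIs bill linearly: every extra minute costs the same."""
    return minutes * per_minute_rate

def self_hosted_monthly_cost(hourly_rate: float, instances: int = 1,
                             hours_per_month: int = 730) -> float:
    """Self-hosting bills per running instance, regardless of call volume."""
    return hourly_rate * hours_per_month * instances

def break_even_minutes(per_minute_rate: float, hourly_rate: float) -> int:
    """Monthly minutes above which one always-on instance is cheaper."""
    return math.ceil(self_hosted_monthly_cost(hourly_rate) / per_minute_rate)

if __name__ == "__main__":
    # Blended managed rates implied by the table: $374 and $594 per 10,000 minutes.
    for rate in (0.0374, 0.0594):
        # hourly_rate is a placeholder; use your provider's actual price.
        print(rate, break_even_minutes(rate, hourly_rate=0.80))
```

The calculation ignores engineering time and assumes a single instance can carry the whole load, so treat the result as a lower bound on the real break-even volume.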

Customization and Control

Cost savings are only part of the picture. Open-source gives you capabilities that no-code platforms cap at template-level configuration:

- Fine-tune STT on domain-specific vocabulary: medical terminology, legal citations, financial products

- Train custom TTS voices for brand consistency across all customer interactions

- Modify conversation logic at the code level with no platform restrictions

Avoiding Vendor Lock-In

Beyond customization, there's a durability risk with proprietary platforms: API deprecations, pricing changes, and feature removals are entirely outside your control. Anthropic retired Claude Opus 3 on January 5, 2026, forcing migrations. OpenAI deprecated gpt-4-32k and gpt-4-vision-preview, requiring unplanned engineering work. Open-source eliminates this single point of failure.

The Honest Investment Required

You own the maintenance, model updates, infrastructure scaling, and security patching. Teams should assess engineering capacity realistically before committing to a fully custom build versus adopting an open-source voice AI platform like Dograh AI. Dograh AI is BSD-licensed and self-hostable, with production-ready components that handle orchestration so your team can focus on the application layer rather than infrastructure plumbing.

How to Build a Voice Bot from Scratch: Architecture and Process

The end-to-end pipeline flows: audio in → STT → context-aware LLM response → TTS → audio out, with session state management running in parallel and a telephony layer wrapping the entire stack. Latency accumulates at every hop—your target is sub-500ms total response time for conversations that feel natural.
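The flow described above can be sketched as a single turn handler. This is a minimal, non-streaming illustration, assuming each engine is wrapped in a plain callable; `handle_turn` and the `fake_*` stubs are hypothetical names, not part of any library.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]

def handle_turn(
    audio: bytes,
    history: List[Message],
    stt: Callable[[bytes], str],
    llm: Callable[[List[Message]], str],
    tts: Callable[[str], bytes],
) -> bytes:
    """Run one conversational turn: audio in -> STT -> LLM -> TTS -> audio out."""
    user_text = stt(audio)
    history.append({"role": "user", "content": user_text})
    reply = llm(history)  # the LLM sees the full session history
    history.append({"role": "assistant", "content": reply})
    return tts(reply)

# Stub engines so the flow can be exercised without any models installed.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")

def fake_llm(history: List[Message]) -> str:
    return f"You said: {history[-1]['content']}"

def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")
```

A production version would stream between stages rather than wait for each to finish, but keeping the three engines behind simple interfaces like this makes it easier to swap components later.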

Step 1: Choose Your STT Engine

You face a choice between self-hosted open-source STT and managed STT APIs. Self-hosted keeps audio on-premises but requires GPU compute for real-time speed; managed APIs are faster to start but reintroduce data-sharing and per-minute costs.

Leading Open-Source STT Options:

| Engine | Model | WER (LibriSpeech) | Languages | Self-Hosting Notes |
|---|---|---|---|---|
| Whisper | large-v3 | 2.01% | 99+ | Requires GPU; optimize with faster-whisper (CTranslate2) for 4x speedup |
| Whisper | turbo | 7.75% | 99+ | Faster but less accurate; ~6GB VRAM |
| Vosk | en-us-0.22 | 5.69% | 20+ | Runs on Raspberry Pi; models as small as 50MB |

Whisper is the most accurate of the three but needs optimization for production. In the published benchmarks of faster-whisper (a CTranslate2 reimplementation), transcription time for the same audio on the same hardware drops from 2m23s to 17s with batched inference, and INT8 quantization cuts VRAM usage by 30-40%.
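As a starting point, a file-based transcription call with faster-whisper might look like the sketch below. The helper names are hypothetical, and the `large-v3` model, CUDA device, and `int8_float16` compute type are assumptions to adjust for your hardware; a live call would feed short audio chunks rather than whole files.

```python
from typing import Iterable

def join_segments(segments: Iterable) -> str:
    """Concatenate segment texts into one transcript string."""
    return " ".join(seg.text.strip() for seg in segments).strip()

def transcribe_file(path: str, model_size: str = "large-v3") -> str:
    """Transcribe an audio file with faster-whisper using INT8 weights on GPU."""
    # Imported lazily so join_segments works without the package installed.
    from faster_whisper import WhisperModel

    model = WhisperModel(model_size, device="cuda", compute_type="int8_float16")
    segments, _info = model.transcribe(path, beam_size=5)
    return join_segments(segments)  # segments is a lazy generator
```

Loading the model is slow, so a real service would create the `WhisperModel` once at startup and reuse it across calls.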

Step 2: Select Your Language Model and Dialogue Logic

The LLM interprets intent, maintains multi-turn context, and generates responses. The trade-off is between open-weight models (Llama, Mistral, Phi) run locally versus API-accessed models.

Open-Weight Model Options:

| Model | Parameters | Context Window | Notes |
|---|---|---|---|
| Llama 3 | 8B, 70B | 8,192 tokens | Grouped Query Attention for efficiency |
| Mistral 7B | 7.3B | 8,192 tokens | Sliding Window Attention reduces memory |
| Phi-3 Mini | 3.8B | 4K-128K tokens | Optimized for mobile/edge deployment |

Voice-Specific Constraints:

- Prompt for short, conversational responses—no markdown, no lists

- Include natural speech patterns like "um" and "ah," plus SSML <break> tags, to prevent robotic TTS

- Manage context windows carefully for calls exceeding 20-30 minutes

- Connect to backend systems (CRM, calendar, database) via tool calls or function calling

For local execution, Ollama provides a runtime framework supporting Llama 3, Mistral, and Phi-3 with GPU acceleration—a practical starting point before committing to full infrastructure.
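A request to a local Ollama server can encode the voice constraints above in the system prompt. The sketch below is one possible shape, assuming Ollama is running on its default port with a `llama3` model pulled; the prompt wording and function names are illustrative.

```python
import json
import urllib.request
from typing import Dict, List

VOICE_SYSTEM_PROMPT = (
    "You are a phone assistant. Reply in one or two short spoken sentences. "
    "Do not use markdown, bullet points, or emojis."
)

def build_chat_payload(history: List[Dict[str, str]], user_text: str,
                       model: str = "llama3") -> dict:
    """Assemble a non-streaming chat request with a voice-oriented system prompt."""
    messages = [{"role": "system", "content": VOICE_SYSTEM_PROMPT}]
    messages += history
    messages.append({"role": "user", "content": user_text})
    return {"model": model, "messages": messages, "stream": False}

def ask_ollama(payload: dict, url: str = "http://localhost:11434/api/chat") -> str:
    """POST the payload to a locally running Ollama server and return the reply text."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read())["message"]["content"]
```

For live calls you would likely set `"stream": True` and read the reply incrementally so TTS can start before generation finishes.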

Step 3: Integrate a TTS Engine

The TTS landscape offers several production-ready options. When comparing engines, focus on three dimensions: prosody naturalness, time-to-first-audio-byte, and voice cloning support.

Open-Source TTS Comparison:

| Engine | Quality (MOS) | Latency | Voice Cloning |
|---|---|---|---|
| Coqui XTTSv2 | ~4.2 (English) | <200ms streaming | Yes (3-second sample) |
| StyleTTS2 | +0.28 CMOS vs. human recordings | ~500ms (T4 GPU) | Yes (zero-shot) |
| Piper TTS | Mid-range natural | RTF < 1.0 (Raspberry Pi) | Yes (fine-tuning required) |

The latency numbers above assume streaming output: the engine sends audio chunks as text is generated, cutting response latency from seconds to milliseconds. Without streaming, even a high-MOS engine will feel sluggish in live calls. Voice consistency and pronunciation of domain-specific terms (drug names, legal citations) require model fine-tuning or custom lexicons.
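One common way to get streaming behavior out of the LLM-to-TTS handoff is to cut the LLM's token stream into sentences and synthesize each one as soon as it completes. The generator below is a minimal sketch of that idea; the function name is hypothetical and the sentence-boundary rule is deliberately naive.

```python
import re
from typing import Iterable, Iterator

_SENTENCE_END = re.compile(r"[.!?]\s")

def sentences_from_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Yield complete sentences as soon as they appear in a token stream."""
    buffer = ""
    for token in tokens:
        buffer += token
        while True:
            match = _SENTENCE_END.search(buffer)
            if not match:
                break
            end = match.end()
            yield buffer[:end].strip()
            buffer = buffer[end:]
    if buffer.strip():
        yield buffer.strip()  # flush whatever is left when the stream ends
```

Each yielded sentence can be handed to the TTS engine immediately. Abbreviations such as "Dr. Smith" would be split incorrectly by this rule, so a production splitter needs a more careful boundary check.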

Step 4: Set Up Telephony and the Voice Channel

Telephony is the most underestimated component. Your bot must connect to a phone number or WebRTC endpoint, handle inbound and outbound calls, manage audio codecs (typically G.711 µ-law or A-law for phone calls, carried over RTP with SIP handling the signaling), and respond to call control events (answer, transfer, hang-up).

Telephony Options:

- Asterisk (open source): Supports WebRTC via PJSIP and real-time audio streaming via ARI External Media

- FreeSWITCH (open source): Uses mod_sofia for SIP and mod_verto for WebRTC

- Twilio Programmable Voice (managed, not open source): Bridge to the phone network with SIP/WebSocket integration

The SIP/WebSocket integration pattern connects telephony to your STT-LLM-TTS pipeline, routing raw audio over UDP or TCP to your processing layer.
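Because phone audio usually arrives as 8-bit µ-law while STT models expect 16-bit linear PCM, the processing layer needs a codec conversion step. The sketch below implements the standard G.711 µ-law companding in pure Python for illustration; the function names are hypothetical, and a production system would more likely rely on the telephony server or an audio library for this.

```python
import struct

BIAS = 0x84   # 132, added before finding the segment
CLIP = 32635  # largest magnitude that survives the bias

def linear_to_ulaw(sample: int) -> int:
    """Compress one signed 16-bit PCM sample into a µ-law byte."""
    sign = 0x80 if sample < 0 else 0
    magnitude = min(abs(sample), CLIP) + BIAS
    exponent, mask = 7, 0x4000
    while exponent > 0 and not (magnitude & mask):
        exponent -= 1
        mask >>= 1
    mantissa = (magnitude >> (exponent + 3)) & 0x0F
    return ~(sign | (exponent << 4) | mantissa) & 0xFF

def ulaw_to_linear(byte: int) -> int:
    """Expand one µ-law byte back into a signed 16-bit PCM sample."""
    byte = ~byte & 0xFF
    exponent = (byte >> 4) & 0x07
    mantissa = byte & 0x0F
    magnitude = (((mantissa << 3) + BIAS) << exponent) - BIAS
    return -magnitude if byte & 0x80 else magnitude

def encode_pcm16(pcm: bytes) -> bytes:
    """Encode little-endian 16-bit PCM bytes as one µ-law byte per sample."""
    count = len(pcm) // 2
    samples = struct.unpack(f"<{count}h", pcm[: count * 2])
    return bytes(linear_to_ulaw(s) for s in samples)
```

Telephony audio is typically sampled at 8 kHz, so resampling to the rate your STT model expects is a separate step not shown here.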

Step 5: Orchestrate, Test, and Deploy

The orchestration layer is a real-time event loop that:

- Receives audio frames from telephony

- Streams to STT

- Feeds transcripts to the LLM

- Streams TTS output back

- Manages barge-in (user interrupting mid-response)
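The event loop above, including barge-in, can be sketched with `asyncio`. This is a simplified illustration under stated assumptions: the event names (`transcript`, `speech_start`, `hangup`) are hypothetical, `speak` stands in for streaming TTS playback, and `respond` stands in for the LLM call.

```python
import asyncio
from typing import Callable, List, Optional

async def speak(text: str, played: List[str], chunk_delay: float) -> None:
    """Stand-in for streaming TTS playback: emits one word per audio chunk."""
    for word in text.split():
        await asyncio.sleep(chunk_delay)
        played.append(word)

async def orchestrate(events: asyncio.Queue, respond: Callable[[str], str],
                      played: List[str], log: List[str],
                      chunk_delay: float = 0.05) -> None:
    """Consume telephony/STT events until hangup, cancelling playback on barge-in."""
    playback: Optional[asyncio.Task] = None
    while True:
        kind, payload = await events.get()
        if kind == "speech_start":
            if playback and not playback.done():
                playback.cancel()  # caller interrupted the bot
                log.append("barge_in")
        elif kind == "transcript":
            reply = respond(payload)  # the LLM call would go here
            playback = asyncio.create_task(speak(reply, played, chunk_delay))
        elif kind == "hangup":
            if playback:
                playback.cancel()
                await asyncio.gather(playback, return_exceptions=True)
            return

async def demo() -> tuple:
    """Simulate a caller who interrupts the bot mid-sentence, then hangs up."""
    events: asyncio.Queue = asyncio.Queue()
    played: List[str] = []
    log: List[str] = []
    reply = "one two three four five six seven eight"
    runner = asyncio.create_task(orchestrate(events, lambda _t: reply, played, log))
    await events.put(("transcript", "hello"))
    await asyncio.sleep(0.12)  # bot is mid-sentence
    await events.put(("speech_start", None))
    await asyncio.sleep(0.02)
    await events.put(("hangup", None))
    await runner
    return played, log
```

Running playback as a cancellable task is the key design choice here: the loop stays free to react to new audio while the bot is still talking.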

Testing Regimen:

- Unit tests per component (STT accuracy, LLM response quality, TTS naturalness)

- Integration tests for full call flows

- Simulation-based testing that replays realistic conversation scenarios

Dograh AI's open-source LoopTalk framework provides AI-to-AI testing that simulates real customer scenarios, catching edge cases before production and reducing manual testing effort by up to 90%.

Key Factors That Affect Your Open-Source Voice Bot's Performance

Latency and Its Compounding Sources

Human conversation features turn-taking gaps of 200-300ms. Standard cascading AI pipelines (STT → LLM → TTS) often exceed 1,500ms, making conversations feel broken.

How to Achieve Sub-500ms Latency:

- Use streaming STT and streaming TTS (not batch processing)

- Keep LLM system prompts lean (shorter context = faster inference)

- Cache common responses to eliminate redundant generation

- Choose quantized model variants for faster inference
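Response caching from the list above can be as simple as memoizing replies by a normalized form of the caller's utterance. The sketch below is a minimal illustration; `ResponseCache` and `normalize` are hypothetical names, and the approach only suits answers that do not depend on conversation context.

```python
import re
from typing import Callable, Dict

def normalize(utterance: str) -> str:
    """Lowercase and strip punctuation so near-identical phrasings share a key."""
    return re.sub(r"[^a-z0-9 ]", "", utterance.lower()).strip()

class ResponseCache:
    """Memoize replies (or pre-synthesized audio) for frequently asked questions."""

    def __init__(self, generate: Callable[[str], str]) -> None:
        self._generate = generate
        self._store: Dict[str, str] = {}
        self.hits = 0

    def reply(self, utterance: str) -> str:
        key = normalize(utterance)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        value = self._generate(utterance)  # slow path: LLM (and TTS) call
        self._store[key] = value
        return value
```

Caching synthesized audio rather than text removes both the LLM and the TTS step for repeat questions such as opening hours.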

LLM Quantization Performance:

| Format | VRAM (7B Model) | Quality Retention | Throughput |
|---|---|---|---|
| FP16 (Baseline) | ~14 GB | 100% | ~461 tok/s |
| AWQ (4-bit) | ~4.2 GB | 98-99% | ~741 tok/s |
| GGUF (Q4_K_M) | ~4.5 GB | 97-99% | ~80-120 tok/s |

4-bit quantization cuts VRAM requirements by roughly 70% (from about 14 GB to 4.2-4.5 GB for a 7B model) while retaining 97-99% of baseline quality.
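The VRAM figures follow from simple arithmetic on parameter count and bits per weight. The helper below is a back-of-the-envelope sketch with hypothetical function names; it counts weights only, so the 4-bit figures in the table come out higher than the raw result, plausibly because of quantization metadata and layers kept at higher precision (an assumption, not something the table states).

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes."""
    bytes_total = params_billion * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9

def savings_percent(baseline_gb: float, quantized_gb: float) -> float:
    """Relative memory reduction of a quantized format versus the baseline."""
    return (1 - quantized_gb / baseline_gb) * 100

if __name__ == "__main__":
    print(weight_memory_gb(7, 16))   # FP16 weights for a 7B model
    print(weight_memory_gb(7, 4))    # raw 4-bit weights, before overhead
    print(savings_percent(14, 4.2))  # reduction using the table's AWQ figure
```

Budget extra memory on top of the weights for the KV cache, which grows with context length and the number of concurrent sessions.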

Speech Recognition Accuracy in Domain-Specific Environments

Accents, background noise, domain vocabulary (medical terms, ticker symbols, legal jargon), and poor microphone quality all degrade STT accuracy. Errors cascade into incorrect LLM responses.

A 2025 JAMIA Open study evaluating STT models on noisy emergency medical dialogues found Whisper v3 Turbo achieved the lowest medical Word Error Rate, while Vosk underperformed substantially in "inside crowded" noise conditions.

Mitigation Strategies:

- Fine-tune Whisper on domain-specific audio datasets

- Use custom vocabulary boosting where supported

- Build fallback handling for low-confidence transcriptions

- Implement noise suppression preprocessing
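Fallback handling for low-confidence transcriptions can be sketched as a gate in front of the LLM. The example assumes your STT engine exposes per-segment confidence signals like the `avg_logprob` and `no_speech_prob` fields that Whisper-style models report; the `Segment` class is a stand-in, and the thresholds are illustrative starting points to tune on your own audio.

```python
from dataclasses import dataclass
from typing import Iterable

@dataclass
class Segment:
    """Minimal stand-in for an STT segment carrying confidence signals."""
    text: str
    avg_logprob: float     # mean token log-probability (closer to 0 is better)
    no_speech_prob: float  # model's estimate that the audio held no speech

def needs_reprompt(segments: Iterable[Segment],
                   min_avg_logprob: float = -1.0,
                   max_no_speech_prob: float = 0.6) -> bool:
    """Return True when the transcript is too uncertain to pass to the LLM."""
    segments = list(segments)
    if not segments:
        return True  # nothing was recognized at all
    return any(
        seg.avg_logprob < min_avg_logprob or seg.no_speech_prob > max_no_speech_prob
        for seg in segments
    )
```

When the gate fires, the bot can ask the caller to repeat instead of letting a bad transcript cascade into a wrong answer.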

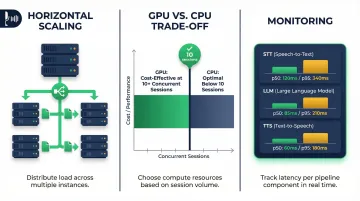

Infrastructure and Scaling Architecture

Once your components are tuned, the system as a whole introduces a separate challenge. A single bot session requires continuous audio streaming, real-time inference, and low-latency network paths — demands that are fundamentally different from batch API calls.

Scaling Patterns:

- Horizontal scaling: run multiple instances behind a load balancer to distribute concurrent sessions without single-point bottlenecks

- GPU vs. CPU trade-offs: GPU inference pays off at roughly 10+ concurrent sessions; below that, CPU-based quantized models (GGUF) are more cost-effective

- Monitoring: track per-call latency percentiles (p50, p95) and error rates per component (STT, LLM, TTS) separately to pinpoint bottlenecks quickly
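Per-component latency percentiles, as suggested in the monitoring bullet, need nothing more than sorted samples. The sketch below uses the nearest-rank method; the function names and the sample data shape are assumptions, and a real deployment would normally export these measurements to a metrics system instead.

```python
import math
from typing import Dict, List

def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples recorded")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

def summarize(latencies_ms: Dict[str, List[float]]) -> Dict[str, Dict[str, float]]:
    """Report p50 and p95 per component so the slow stage stands out."""
    return {
        name: {"p50": percentile(samples, 50), "p95": percentile(samples, 95)}
        for name, samples in latencies_ms.items()
    }
```

Tracking STT, LLM, and TTS separately matters because a healthy median for the whole call can hide one stage with a bad p95.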

For instance, AWS g6.xlarge instances (NVIDIA L4, 24GB VRAM) cost approximately $0.80/hour and can handle 8-12 concurrent voice sessions depending on model size and response complexity.

Common Mistakes and Misconceptions When Building Voice Bots from Scratch

Mistaking Component Quality for System Quality

Teams often select the highest-accuracy STT model in benchmarks but fail to account for the latency it introduces in streaming mode. Or they choose an expressive TTS model that sounds great but takes 800ms to generate first audio—making the bot feel broken.

The quality that matters in production is latency-weighted quality, not isolated benchmark scores. A slightly less accurate STT that responds 300ms faster will feel better to users.

Underbuilding Conversation State Management

Many first-pass implementations treat each turn as independent—sending only the latest user message to the LLM without conversation history or session context. The result:

- Cannot handle follow-up questions or contextual references

- Lose track of what was agreed earlier in the call

- Repeat information the user already provided

Robust context management—conversation memory, entity tracking, intent carryover—is a structural requirement, not an afterthought.
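A minimal version of that session state keeps a rolling message history plus a separate store of extracted facts. The `Session` class below is a hypothetical sketch, not a prescribed design; how entities are extracted (LLM tool calls, regexes, or a dedicated model) is left open.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class Session:
    """Per-call state: rolling message history plus extracted entities."""
    max_turns: int = 20
    history: List[Dict[str, str]] = field(default_factory=list)
    entities: Dict[str, str] = field(default_factory=dict)

    def add(self, role: str, content: str) -> None:
        self.history.append({"role": role, "content": content})
        # Keep only the most recent messages so long calls fit the context window.
        del self.history[: -self.max_turns]

    def remember(self, key: str, value: str) -> None:
        self.entities[key] = value  # survives even after history is trimmed

    def prompt_messages(self, system_prompt: str) -> List[Dict[str, str]]:
        """Build the LLM input: system prompt, known facts, then recent history."""
        facts = "; ".join(f"{k}={v}" for k, v in self.entities.items())
        system = system_prompt + (f" Known facts: {facts}." if facts else "")
        return [{"role": "system", "content": system}] + self.history
```

Storing entities outside the trimmed history is what lets the bot recall a name or appointment date late in a long call.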

Assuming Open-Source Compliance Is Automatic

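Self-hosting the stack makes HIPAA/GDPR compliance easier to reason about, but it is not sufficient on its own. HIPAA's technical safeguards (45 CFR 164.312) cover access control, audit controls, integrity, authentication, and transmission security. Combined with GDPR's data-minimization and storage-limitation principles, that translates for a voice bot into controls such as: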

- Encryption in transit and at rest

- Access controls and audit logging

- Automatic logoff mechanisms

- PII redaction from call transcripts

- Explicit data retention policies

Teams frequently deploy a self-hosted bot and assume compliance is covered by virtue of not using a third-party API. It isn't—OCR audits and HIPAA enforcement actions routinely cite exactly these gaps, with fines reaching $1.9M per violation category.
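As one example of the controls listed above, transcript redaction can start with pattern matching before logs are stored. The sketch below is intentionally simple and the patterns are assumptions covering only well-formatted US-style identifiers; spoken numbers are often transcribed as words, so real deployments typically add entity-recognition tooling on top.

```python
import re

# Deliberately simple patterns for illustration; real coverage must be broader.
_PATTERNS = [
    (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "[SSN]"),
    (re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"), "[EMAIL]"),
    (re.compile(r"\b\d{3}[-. ]?\d{3}[-. ]?\d{4}\b"), "[PHONE]"),
]

def redact(transcript: str) -> str:
    """Replace US-style SSNs, email addresses, and phone numbers with placeholders."""
    for pattern, placeholder in _PATTERNS:
        transcript = pattern.sub(placeholder, transcript)
    return transcript
```

Redaction should run before transcripts reach logs, analytics, or any downstream store, since removing data after the fact is much harder to audit.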

Frequently Asked Questions

What are the best open-source tools for building a voice bot?

For STT, use Whisper (with faster-whisper optimization) or Vosk for lightweight deployments. For LLMs, run Llama or Mistral via Ollama. For TTS, Coqui or Piper offer production-ready quality. The "best" choice depends on your latency requirements and available compute.

Can an open-source voice bot be HIPAA or GDPR compliant?

Yes, but self-hosting alone is not sufficient. You must also implement encryption (in transit and at rest), PII redaction, access controls, audit logging, and data retention policies to achieve actual compliance.

How do I reduce latency in an open-source voice bot?

Use streaming (not batch) STT and TTS, choose quantized LLM variants optimized for inference speed, and overlap the stages where possible: feed partial transcripts to the LLM as they arrive, and start TTS on the first complete sentence while the LLM is still generating the rest.

How long does it take to build a production voice bot from scratch?

A basic proof-of-concept takes days to a week for a developer familiar with the stack. A production-ready system with telephony integration, error handling, monitoring, and compliance controls typically takes 4–12 weeks, depending on team size and scope.

What is the difference between building a voice bot from scratch and using an open-source voice AI platform?

Building from scratch means assembling and maintaining every component individually. An open-source platform like Dograh AI pre-integrates the orchestration layer and telephony, ships with tested conversation flows, and still allows full self-hosting and code access. You give up some low-level flexibility but get to production weeks faster.

What programming language is best for building a voice bot?

Python is the primary choice given the breadth of AI/ML library support (Transformers, faster-whisper, LangChain). However, Go or Node.js handle real-time audio streaming and telephony layers better, where concurrency and low overhead matter more than ML ecosystem access.