Introduction

AI voice assistants have evolved from science fiction to business necessity. Healthcare providers automate appointment scheduling, sales teams qualify leads across hundreds of calls, and support operations handle thousands of customer inquiries—all through conversational voice AI. According to Grand View Research, the global AI voice agents market is projected to grow from $2.54 billion in 2025 to $35.24 billion by 2033.

In practice, building one is harder than it sounds. A voice assistant that performs well in testing can fail in production due to latency spikes, transcription errors, or context loss.

The gap between a functional prototype and a production-ready system comes down to component selection, pipeline architecture, and deployment planning. This guide covers the prerequisites, the step-by-step build process, critical performance variables, common pitfalls, and when to build versus buy a platform.

TL;DR

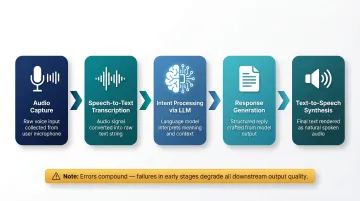

- Build a functional AI voice assistant using three core components: Speech-to-Text (STT), a language model (LLM), and Text-to-Speech (TTS)

- Follow the pipeline: capture audio → transcribe → process intent → generate response → synthesize speech

- Optimize for latency (under 500ms), ASR accuracy, and LLM context retention to drive real-world performance

- Plan compliance requirements (HIPAA, GDPR, PCI DSS) before choosing cloud or self-hosted deployment

- Choose between building from scratch (4–12 months), managed platforms, or open-source options like Dograh AI — deployable in days with full data control

What You Need Before Building an AI Voice Assistant

Teams that skip defining scope and selecting compatible components often spend weeks re-architecting later. Getting infrastructure, scope, and compliance requirements sorted upfront prevents the most common and costly delays.

Component and Infrastructure Requirements

Minimum system requirements depend on deployment model:

- Cloud deployments reduce hardware demands but introduce data privacy tradeoffs; self-hosted requires significant VRAM—dual RTX 4090s (48GB total) can sustain 50+ concurrent streams of a 14B model using vLLM

- Direct microphone input is enough for web or in-app assistants; phone-based systems need telephony integration via SIP trunks or WebRTC

- Real-time streaming needs low-latency connections; ITU-T G.114 treats 400ms of one-way delay as the upper limit for general network planning and recommends staying under 150ms for most interactive voice applications

Key software dependencies:

- STT engine: OpenAI Whisper (self-hosted), Deepgram, Google Cloud Speech-to-Text, or Amazon Transcribe

- LLM or NLU layer: Open-source options like Llama 3.1 or hosted APIs (GPT-4o, Claude)

- TTS engine: ElevenLabs, Azure Neural TTS, Amazon Polly, or an open-source stack built on a neural vocoder such as HiFi-GAN

- Orchestration layer: Ties the pipeline together—handles turn-taking, state management, and component handoffs; platforms like Dograh AI or custom middleware built on LangGraph are common choices
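To make the orchestration layer's role concrete, here is a minimal sketch of one conversational turn passing through the three components. The `VoicePipeline` class and the `fake_*` stand-ins are hypothetical names chosen for illustration, not any vendor's API; a real build would wrap actual STT, LLM, and TTS clients behind the same three callables.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# Hypothetical stage signatures; real engines would be wrapped to match them.
Transcribe = Callable[[bytes], str]
Respond = Callable[[List[Tuple[str, str]], str], str]
Synthesize = Callable[[str], bytes]


@dataclass
class VoicePipeline:
    transcribe: Transcribe
    respond: Respond
    synthesize: Synthesize
    # Conversation state lives here, at the orchestration layer.
    history: List[Tuple[str, str]] = field(default_factory=list)

    def handle_turn(self, audio: bytes) -> bytes:
        """Run one user turn through STT -> LLM -> TTS and record it."""
        user_text = self.transcribe(audio)
        reply_text = self.respond(self.history, user_text)
        self.history.append(("user", user_text))
        self.history.append(("assistant", reply_text))
        return self.synthesize(reply_text)


# Stand-in stages so the sketch runs without any external service.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_llm(history: List[Tuple[str, str]], user_text: str) -> str:
    return f"You said: {user_text}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


pipeline = VoicePipeline(fake_stt, fake_llm, fake_tts)
```

Keeping each stage behind a plain callable means an engine can be swapped (for example, a hosted STT for a self-hosted one) without touching the turn-handling logic.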

Scope and Compliance Readiness

Define the assistant's primary use case before touching code:

- Customer support call handling

- Appointment scheduling

- Sales outreach and lead qualification

- Internal helpdesk automation

Each use case shapes conversation flow design and latency requirements differently. A support bot handling frustrated customers needs sub-500ms response times; an outbound sales assistant can tolerate a slightly longer pause.

Identify regulatory requirements upfront:

- HIPAA for healthcare voice data: when processing electronic Protected Health Information (ePHI), you need a Business Associate Agreement in place with your cloud service provider

- GDPR for EU user interactions—voice data requires lawful processing basis and likely a Data Protection Impact Assessment

- PCI DSS for payment-adjacent conversations—sensitive authentication data cannot be stored after authorization

These requirements determine whether cloud or self-hosted deployment is even permissible for your use case. Locking in your deployment model now—before a line of code is written—avoids the most expensive kind of rework.

How to Build an AI Voice Assistant: Step-by-Step

The build process consists of five sequential steps. Each step's output becomes the input to the next, and failures compound if earlier steps are misconfigured.

Step 1: Define Purpose, Use Case, and Conversation Scope

Specify what the assistant must handle and what it must hand off to a human agent. Narrow scope produces better-performing assistants faster.

Map out 3-5 primary conversation flows:

- Greeting and intent detection

- Information gathering (appointment details, customer problem)

- Action execution (schedule booking, ticket creation)

- Confirmation and closing

- Edge case: caller goes off-script or asks out-of-scope questions

Define fallback behavior for edge cases. Will the assistant apologize and transfer, attempt clarification, or log the interaction for review?
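One way to pin down fallback behavior is to write it as an explicit policy before any model is involved. The sketch below is an illustration only: the `Fallback` options mirror the three choices named above, while the function name, its parameters, and the limit of two clarification attempts are assumptions to adapt to your own flows.

```python
from enum import Enum


class Fallback(Enum):
    CLARIFY = "ask the caller to rephrase"
    TRANSFER = "apologize and transfer to a human"
    LOG_FOR_REVIEW = "close politely and log for review"


def choose_fallback(
    in_scope: bool,
    failed_attempts: int,
    human_available: bool,
    max_clarifications: int = 2,  # assumed limit; tune per use case
) -> Fallback:
    """Pick a fallback once the assistant fails to resolve a turn."""
    # In-scope requests get a bounded number of clarification attempts.
    if in_scope and failed_attempts < max_clarifications:
        return Fallback.CLARIFY
    # Out-of-scope or repeatedly failing turns go to a person if possible.
    if human_available:
        return Fallback.TRANSFER
    # Otherwise record the interaction so someone can follow up.
    return Fallback.LOG_FOR_REVIEW
```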

Step 2: Select Your Technology Stack

Choose an ASR engine based on accuracy and deployment requirements:

- Deepgram Nova-3: 6.84% WER on streaming audio with sub-300ms latency

- Google Cloud Chirp 3: Supports 100+ languages with automatic detection

- Self-hosted Whisper Large-v3: Strong multilingual accuracy; use Faster-Whisper (CTranslate2) to cut inference time and VRAM usage

Select an LLM that handles multi-turn context:

Single-turn models lose the conversation thread after one exchange. For sustained dialogue, compare these options:

- GPT-4o: 128,000 token context window

- Claude 3.5 Sonnet: 200,000 token context window

- Llama 3.1 (8B / 70B / 405B): 128,000 tokens; open-source and self-hostable

Confirm the model supports domain-specific vocabulary relevant to your industry before committing.

Choose a TTS engine for voice quality:

Neural TTS sounds more human but costs more compute; standard TTS is faster but less natural. Key options:

- ElevenLabs: ~75ms model inference, but real-world Time-to-First-Audio averages 400–600ms due to network latency

- Azure Neural TTS (on-device): ~100ms latency by eliminating network hops entirely

Use a pre-integrated voice AI platform to skip the wiring:

Rather than connecting each component manually, platforms like Dograh AI come with ASR, LLM, and TTS pre-integrated under an open-source BSD 2-Clause license — no platform fees, and agents deployable in minutes instead of weeks.

Step 3: Design the Conversational Flow

Structure flows to account for voice-specific constraints. Unlike chat, users cannot scroll back—responses must be concise, sequential, and offer natural re-prompt paths.

Define intent categories and map responses:

- Greeting: Welcome user, identify reason for call

- Query: Answer questions, retrieve information

- Action request: Schedule appointment, update record

- Escalation: Transfer to human agent
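The intent-to-response mapping above can be prototyped with a toy router before an LLM is wired in. This is a deliberately simple keyword sketch, not a production classifier: the keyword sets, canned responses, and function names are all hypothetical, and a real system would typically let the LLM or an NLU model assign the intent.

```python
import re

# Hypothetical keyword sets; checked in order, so escalation wins ties.
INTENT_KEYWORDS = {
    "escalation": {"human", "agent", "representative"},
    "action_request": {"schedule", "book", "reschedule", "update", "cancel"},
    "greeting": {"hello", "hi", "hey"},
}

# One canned response per intent category from the list above.
RESPONSES = {
    "greeting": "Thanks for calling. How can I help you today?",
    "query": "Let me look that up for you.",
    "action_request": "Sure, I can help with that. What date works for you?",
    "escalation": "I'll connect you with a team member now.",
}


def classify_intent(utterance: str) -> str:
    """Return the first intent whose keywords appear as whole words."""
    words = set(re.findall(r"[a-z']+", utterance.lower()))
    for intent, keywords in INTENT_KEYWORDS.items():
        if words & keywords:
            return intent
    # Anything unmatched is treated as a general question.
    return "query"


def respond(utterance: str) -> str:
    return RESPONSES[classify_intent(utterance)]
```

Matching on whole words rather than substrings avoids false hits such as "hi" inside "this".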

Include interruption handling so the assistant recovers gracefully if the user speaks mid-response.

Decide on turn-taking logic:

- How the assistant detects when the user stopped speaking (silence detection, voice activity detection)

- How long it waits before prompting again

- Whether it supports barge-in (user interrupts assistant)
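The turn-taking decisions above can be sketched with a basic energy threshold. This is a simplified stand-in for a real voice activity detector: the 20ms frame size, the 0.02 energy threshold, and the 600ms silence window are assumed values for illustration, and production systems usually rely on a trained VAD model instead.

```python
import math
from typing import Optional, Sequence


def rms(frame: Sequence[float]) -> float:
    """Root-mean-square energy of one audio frame (samples in -1.0..1.0)."""
    if not frame:
        return 0.0
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def find_end_of_turn(
    frames: Sequence[Sequence[float]],
    frame_ms: int = 20,             # assumed frame length
    energy_threshold: float = 0.02,  # assumed speech/silence cutoff
    silence_ms: int = 600,           # assumed wait before the turn ends
) -> Optional[int]:
    """Return the frame index where the user's turn is considered over."""
    frames_needed = silence_ms // frame_ms
    heard_speech = False
    silent_run = 0
    for index, frame in enumerate(frames):
        if rms(frame) >= energy_threshold:
            heard_speech = True
            silent_run = 0
        elif heard_speech:
            # Only count silence that follows actual speech.
            silent_run += 1
            if silent_run >= frames_needed:
                return index
    return None


def is_barge_in(
    assistant_speaking: bool,
    frame: Sequence[float],
    energy_threshold: float = 0.02,
) -> bool:
    """True when the user starts talking while the assistant is speaking."""
    return assistant_speaking and rms(frame) >= energy_threshold
```

A shorter silence window makes the assistant feel snappier but risks cutting off callers who pause mid-sentence, so this value is worth tuning per use case.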

Step 4: Train, Configure, and Integrate

Feed domain-specific vocabulary to your ASR engine:

Medical terms for healthcare, legal terminology for law firms, product names for e-commerce—this reduces transcription error rates. Research shows every ~5% increase in WER causes approximately a 2–2.5% drop in intent classification accuracy.

Configure the LLM with a system prompt:

Define persona, scope boundaries, escalation triggers, and response format constraints. Test how the model handles ambiguous inputs before moving to integration.
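As an illustration, the four elements of the system prompt can be assembled from plain configuration values. The helper names and wording below are hypothetical. The output uses the role/content message list accepted by many chat-style LLM APIs; some APIs take the system prompt as a separate parameter instead, so check your provider's documentation.

```python
from typing import Dict, List


def build_system_prompt(
    persona: str,
    in_scope: List[str],
    escalation_triggers: List[str],
    max_sentences: int = 2,  # assumed limit to keep spoken replies short
) -> str:
    """Combine persona, scope, escalation rules, and format constraints."""
    return "\n".join(
        [
            f"You are {persona}.",
            "Only help with: " + ", ".join(in_scope) + ".",
            "Offer a transfer to a human agent if: "
            + "; ".join(escalation_triggers)
            + ".",
            f"Reply in at most {max_sentences} short sentences of plain "
            "spoken text, with no lists, markdown, or emojis.",
        ]
    )


def build_messages(
    system_prompt: str,
    history: List[Dict[str, str]],
    user_text: str,
) -> List[Dict[str, str]]:
    """Place the system prompt first, then prior turns, then the new turn."""
    return [
        {"role": "system", "content": system_prompt},
        *history,
        {"role": "user", "content": user_text},
    ]


prompt = build_system_prompt(
    persona="a scheduling assistant for a dental clinic",
    in_scope=["booking", "rescheduling", "cancelling appointments"],
    escalation_triggers=["the caller asks for a person", "a medical emergency"],
)
messages = build_messages(prompt, [], "I need to move my appointment.")
```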

Connect to backend systems:

Integrate with CRM (Salesforce, HubSpot), scheduling software (Calendly, Google Calendar), and knowledge bases via APIs. Confirm API response times won't create perceptible lag—sub-200ms API responses are ideal.
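A quick way to check backend calls against the 200ms target is to time them during integration testing. The sketch below is illustrative: `timed_call` and `fake_crm_lookup` are hypothetical names, and the fake lookup stands in for a real CRM or calendar request.

```python
import time
from typing import Any, Callable, Dict, Tuple


def timed_call(
    fn: Callable[..., Any],
    *args: Any,
    budget_ms: float = 200.0,  # target taken from the guidance above
    **kwargs: Any,
) -> Tuple[Any, float, bool]:
    """Call fn and report its result, elapsed ms, and whether it met budget."""
    start = time.perf_counter()
    result = fn(*args, **kwargs)
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return result, elapsed_ms, elapsed_ms <= budget_ms


def fake_crm_lookup(customer_id: str, delay_s: float = 0.01) -> Dict[str, str]:
    """Stand-in for a real backend request; sleeps to simulate latency."""
    time.sleep(delay_s)
    return {"customer_id": customer_id, "status": "active"}


record, elapsed_ms, within_budget = timed_call(fake_crm_lookup, "c-1")
```

Logging the elapsed time per integration makes it easier to see which backend call is eating into the overall response budget.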

Step 5: Test, Stress-Test, and Refine

Conduct structured usability testing:

- Common conversation paths

- Edge cases (user confusion, off-topic questions)

- Noisy audio inputs

- Varying accents and speaking speeds

Stress-test under concurrent call load:

A pipeline that performs well in isolation may degrade under simultaneous traffic. Production end-to-end latency typically ranges from 1000–3200ms; optimized pipelines achieve 670–1450ms.
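A concurrent load test can be sketched with `asyncio` from the standard library. In this minimal example, `fake_call` is a hypothetical stand-in that only sleeps; in a real test it would drive one full call through your pipeline. The helper names and the nearest-rank percentile method are choices made for illustration.

```python
import asyncio
import math
import time
from typing import Awaitable, Callable, Dict, List


def percentile(sorted_values: List[float], pct: float) -> float:
    """Nearest-rank percentile of an already sorted, non-empty list."""
    rank = max(1, math.ceil(pct / 100 * len(sorted_values)))
    return sorted_values[rank - 1]


async def run_load_test(
    handle_call: Callable[[], Awaitable[None]],
    concurrent_calls: int,
) -> Dict[str, float]:
    """Start all calls at once and summarize per-call latency in ms."""

    async def timed() -> float:
        start = time.perf_counter()
        await handle_call()
        return (time.perf_counter() - start) * 1000.0

    latencies = sorted(
        await asyncio.gather(*(timed() for _ in range(concurrent_calls)))
    )
    return {
        "calls": float(len(latencies)),
        "p50_ms": percentile(latencies, 50),
        "p95_ms": percentile(latencies, 95),
        "max_ms": latencies[-1],
    }


async def fake_call() -> None:
    """Hypothetical stand-in for one end-to-end call through the pipeline."""
    await asyncio.sleep(0.01)


if __name__ == "__main__":
    print(asyncio.run(run_load_test(fake_call, concurrent_calls=20)))
```

Comparing the p95 figure at 1, 10, and 50 concurrent calls shows where latency starts to climb, which the average alone tends to hide.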

Gather feedback and iterate:

Log conversation transcripts (where compliant) to identify where the assistant misunderstands intent or gives incorrect responses. Retrain or refine prompts based on those findings.

Key Parameters That Affect Your AI Voice Assistant's Performance

Picking the right components is only half the job. How you tune and connect them determines whether your assistant actually feels natural to users — or frustrates them into dropping off.

Latency (End-to-End Response Time)

Why it matters: Voice interactions feel unnatural once delay exceeds ~1 second. Research indicates responses must stay under 800ms to maintain conversational flow, with 500ms as the preferred target.

What controls it: Cumulative delay across ASR transcription, LLM inference, and TTS generation. Optimize each stage:

- Streaming STT: Reduces transcription latency by processing audio incrementally rather than waiting for complete utterances

- Smaller/faster LLM variants: Deploying 8B-14B models with continuous batching frameworks like vLLM maintains Time-to-First-Token under 300ms

- Caching common TTS phrases: Pre-generate frequently used responses to eliminate synthesis delay
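The phrase-caching idea can be sketched with `functools.lru_cache` from the standard library. Here `synthesize` is a placeholder for a real TTS call, and the phrase list and cache size are assumptions; the point is only that repeated phrases skip synthesis after the first request.

```python
from functools import lru_cache


def synthesize(text: str) -> bytes:
    """Placeholder for a real (slow) TTS call; returns fake audio bytes."""
    return f"<audio:{text}>".encode("utf-8")


@lru_cache(maxsize=256)  # assumed size; bound it to what fits in memory
def cached_synthesize(text: str) -> bytes:
    """Return cached audio when the exact phrase was synthesized before."""
    return synthesize(text)


# Hypothetical phrases that recur on almost every call.
COMMON_PHRASES = (
    "Thanks for calling. How can I help you today?",
    "One moment while I check that for you.",
    "Is there anything else I can help with?",
)


def warm_cache() -> None:
    """Pre-generate common phrases at startup, before any call arrives."""
    for phrase in COMMON_PHRASES:
        cached_synthesize(phrase)


warm_cache()
```

Caching only helps for exact repeats, so it suits greetings, hold messages, and confirmations rather than dynamic answers.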

ASR Accuracy and Vocabulary Coverage

Why it matters: If the speech-to-text layer misreads words, the LLM receives corrupted input and generates irrelevant responses. Research in clinical voice AI has shown that substitution errors — changing "there is some extra bleeding" to "there isn't some extra bleeding" — barely register in WER scores but completely invert meaning in high-stakes contexts.

What controls it: Model size, domain fine-tuning, noise handling, and accent coverage. Deepgram Nova-3 achieves 5.4% WER on mixed audio, while OpenAI Whisper Large-v3 reaches 4.2% WER on clean speech.
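To see why a single substitution can hide inside a WER score, it helps to compute one. The sketch below implements the standard word-level edit distance definition of WER; the function name and the 45-word filler context are illustrative assumptions, while the two sentences come from the example above.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = word-level edit distance divided by reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # Rolling-row Levenshtein distance over words.
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = previous[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return previous[-1] / len(ref)


spoken = "there is some extra bleeding"
heard = "there isn't some extra bleeding"

# One wrong word out of five in the sentence on its own.
sentence_wer = word_error_rate(spoken, heard)

# The same error inside a longer, otherwise perfect transcript.
context = " ".join(["filler"] * 45)  # stand-in for 45 correctly heard words
transcript_wer = word_error_rate(f"{context} {spoken}", f"{context} {heard}")
```

The error count stays at one word in both cases, but the score shrinks as the transcript grows, which is why meaning-critical terms deserve their own checks alongside aggregate WER.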

LLM Context Window and Conversation Memory

Why it matters: Voice assistants handling multi-turn conversations need the LLM to retain earlier context. Without this, the assistant "forgets" what was already discussed, creating frustrating user experiences.

How to optimize it: Three variables determine how well your assistant holds context across a conversation:

- Context window size: Llama 3.1 and GPT-4o both support 128,000 tokens — sufficient for extended multi-turn sessions

- Conversation history formatting: Structure prior turns consistently so the LLM parses them without confusion

- Session state management: Persist context between turns at the application layer to avoid mid-conversation resets
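Even with a large context window, long calls benefit from a bounded history. The following sketch keeps the system prompt plus the most recent turns that fit a token budget. The four-characters-per-token estimate is a rough assumption rather than a real tokenizer, and the function names are hypothetical.

```python
from typing import Dict, List


def estimate_tokens(text: str) -> int:
    """Very rough estimate (about 4 characters per token); an assumption."""
    return max(1, len(text) // 4)


def trim_history(
    messages: List[Dict[str, str]],
    max_tokens: int,
) -> List[Dict[str, str]]:
    """Keep system messages plus the newest turns that fit the budget."""
    system = [m for m in messages if m["role"] == "system"]
    turns = [m for m in messages if m["role"] != "system"]
    budget = max_tokens - sum(estimate_tokens(m["content"]) for m in system)

    kept: List[Dict[str, str]] = []
    # Walk backwards so the most recent turns are kept first.
    for message in reversed(turns):
        cost = estimate_tokens(message["content"])
        if cost > budget:
            break
        kept.append(message)
        budget -= cost
    return system + list(reversed(kept))
```

For production use, swap the estimate for the tokenizer that matches your model, and consider summarizing dropped turns instead of discarding them.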

Voice Quality and TTS Naturalness

Why it matters: Robotic or monotone TTS makes users disengage faster — particularly in customer service and sales contexts where trust is established in the first few seconds of a call.

What controls it: Model architecture (neural vs. concatenative TTS), speaking rate configuration, pause insertion, and whether the voice matches your brand's style or the tone expected in your industry.
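Speaking rate and pause insertion are commonly expressed in SSML, the W3C markup that many TTS engines accept. The helper below is a minimal sketch: the function name and the default rate and pause values are assumptions, and SSML support varies by engine, so confirm which tags your provider honors.

```python
from typing import List
from xml.sax.saxutils import escape


def to_ssml(sentences: List[str], rate: str = "95%", pause_ms: int = 250) -> str:
    """Wrap sentences in SSML with a fixed rate and pauses between them."""
    pause = f'<break time="{pause_ms}ms"/>'
    # Escape &, <, and > so the text cannot break the markup.
    body = pause.join(escape(sentence) for sentence in sentences)
    return f'<speak><prosody rate="{rate}">{body}</prosody></speak>'


ssml = to_ssml(["Hi & welcome.", "How can I help?"])
```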

Common Mistakes When Building an AI Voice Assistant

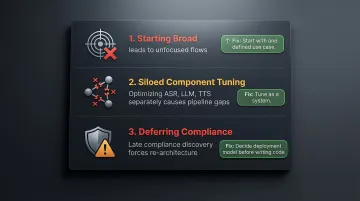

Most voice assistant builds fail for the same reasons — and nearly all of them are avoidable with early planning. Watch for these three:

Starting broad instead of scoped. Building a general-purpose assistant first results in bloated, unfocused conversation flows that handle nothing well. Start with one well-defined use case and expand only after it performs reliably.

Treating ASR, LLM, and TTS as separate problems. All three must be tuned as a system. Teams that optimize each component in isolation often find the real bottleneck is between them — unformatted LLM output fed directly to TTS creates awkward, unnatural speech.

Deferring compliance to post-build. Choosing a cloud ASR provider that routes audio through third-party servers — then discovering the use case requires HIPAA compliance — forces expensive re-architecture. Compliance must be a deployment decision made early, not retrofitted after the fact.
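The second mistake, feeding unformatted LLM output straight into TTS, is often addressed with a small cleanup step between the two. The sketch below strips common markdown so it is not read aloud; the function name and the specific rules are illustrative assumptions and would need extending for URLs, numbers, and abbreviations.

```python
import re


def prepare_for_tts(text: str) -> str:
    """Strip markdown-style formatting so the TTS engine reads clean prose."""
    # Drop fenced code blocks entirely; they are not meant to be spoken.
    text = re.sub(r"```.*?```", " ", text, flags=re.DOTALL)
    # Remove emphasis, inline code, and heading markers.
    text = re.sub(r"[*_`#]+", "", text)
    # Remove bullet and numbered-list prefixes at the start of lines.
    text = re.sub(r"^[ \t]*[-•][ \t]+", "", text, flags=re.MULTILINE)
    text = re.sub(r"^[ \t]*\d+\.[ \t]+", "", text, flags=re.MULTILINE)
    # Turn line breaks into sentence breaks unless punctuation is present.
    text = re.sub(r"([^.!?:;,\s])[ \t]*\n+[ \t]*", r"\1. ", text)
    # Collapse any remaining whitespace.
    return re.sub(r"\s+", " ", text).strip()


raw = "Your options are:\n- **Monday** at 9\n- **Tuesday** at 10"
spoken = prepare_for_tts(raw)
```

Pairing a cleanup step like this with a system prompt that asks for plain spoken text reduces how much formatting reaches the TTS stage in the first place.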

Should You Build from Scratch or Use a Platform?

The decision exists on a build-vs.-buy-vs.-configure spectrum. Building every component from scratch gives maximum flexibility but requires significant engineering time. Using a managed cloud platform is fast but creates vendor lock-in, usage-based cost unpredictability, and data sovereignty risks. Open-source self-hosted platforms sit between those two extremes.

Build from Scratch

When it makes sense: Highly specialized use cases with unique requirements not served by existing platforms, or organizations with dedicated ML engineering teams.

Key tradeoff: Time-to-deployment is typically 4-12 months according to Master of Code's 2026 analysis, plus an ongoing maintenance burden. Upfront costs range from $50,000 to $300,000+.

Managed Cloud Platform

When it makes sense: Teams without ML expertise that need fast deployment with minimal infrastructure management. Deployment typically takes days to weeks.

Key tradeoffs: Recurring platform fees ($0.13-$0.33 per minute at scale), vendor lock-in, and limited control over where voice data is processed and stored, all of which are significant concerns for regulated industries.
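To put the per-minute range in monthly terms, a short calculation helps. The rates come from the range quoted above; the 10,000 minutes per month is a hypothetical call volume, and the function names are illustrative.

```python
from typing import Tuple

LOW_RATE = 0.13   # USD per minute, low end of the quoted range
HIGH_RATE = 0.33  # USD per minute, high end of the quoted range


def monthly_platform_cost(minutes_per_month: float, rate_per_minute: float) -> float:
    """Usage-based platform fee for one month, rounded to cents."""
    return round(minutes_per_month * rate_per_minute, 2)


def cost_range(minutes_per_month: float) -> Tuple[float, float]:
    """Low and high monthly estimates across the quoted rate range."""
    return (
        monthly_platform_cost(minutes_per_month, LOW_RATE),
        monthly_platform_cost(minutes_per_month, HIGH_RATE),
    )


# Hypothetical volume: 10,000 call minutes per month.
low, high = cost_range(10_000)
```

At that assumed volume the fee lands between roughly $1,300 and $3,300 per month, and it scales linearly with call minutes.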

Open-Source Self-Hosted Platform

When it makes sense: Businesses that need enterprise-grade capabilities without platform fees, require data sovereignty for compliance (HIPAA, GDPR), or want full configurability without being locked into one provider's pricing model.

Dograh AI, for example, offers an open-source platform under a BSD 2-Clause license with self-hosting options that can support HIPAA and GDPR compliance by keeping voice data on infrastructure you control. Healthcare providers, legal firms, and financial services teams can get enterprise-grade voice AI without rebuilding from scratch or taking on unpredictable per-minute platform costs.

Frequently Asked Questions

Can I create an AI voice assistant for free?

Yes—using open-source components (Whisper for ASR, open LLMs like Llama, Mozilla TTS) or open-source platforms with no platform fees makes a functional voice assistant buildable at zero licensing cost. Compute costs (cloud hosting or on-device hardware) still apply.

Can anyone build their own AI?

Building a basic voice assistant is accessible with programming knowledge and the right tools. Production-grade systems with low latency, high accuracy, and compliance readiness require deeper technical expertise or a platform that abstracts the complexity.

Can I use ChatGPT as a voice assistant?

ChatGPT (via OpenAI's API) can serve as the LLM layer in a voice assistant pipeline, but it still requires a separate ASR layer to convert speech to text and a TTS layer to convert responses back to speech. On its own, it is not a complete voice assistant solution.

Which AI has the best voice recognition?

Accuracy varies by use case and environment. OpenAI's Whisper performs well on accented and multilingual speech, Deepgram is commonly cited for real-time transcription speed with sub-300ms latency, and Google Speech-to-Text is strong for general consumer applications. Evaluate on domain-specific audio samples before choosing.

What AI voice assistants are available?

Consumer assistants (Siri, Alexa, Google Assistant), developer platforms (Rasa, Voiceflow, Retell AI), and open-source self-hostable platforms like Dograh AI serve different needs. Choice depends on whether the use case is consumer-facing, enterprise-grade, or compliance-sensitive.

Are AI voices legal?

AI-generated voices are legal in most jurisdictions but subject to disclosure requirements. Some U.S. states and EU regulations require disclosure that a caller is speaking with an AI. Regulated industries must also ensure voice data handling complies with applicable privacy laws like GDPR and HIPAA.