Introduction

Primary care physicians spend a median of 36.2 minutes on EHR tasks per visit, including 6.2 minutes of after-hours "pajama time." This documentation burden directly reduces patient-facing hours and contributes to physician burnout.

Voice AI offers a direct fix, but building a voice assistant for EHR systems is harder than it looks. Connect voice input to speech-to-text, process with an LLM, and write structured data back to the EHR: the architecture sounds simple. In practice, outcomes vary sharply based on pipeline design, HIPAA compliance architecture, EHR integration depth, and how patient data moves through each layer.

Get any of those wrong, and you're not just shipping a broken feature — you're shipping a liability.

TLDR

- Open-source EHR voice AI connects telephony to an STT → LLM → TTS pipeline that reads from and writes to the EHR via FHIR/HL7 APIs

- HIPAA compliance requires self-hosting, access controls, PHI handling protocols, and BAAs — open source alone does not guarantee it

- Start with voice-driven pre-visit intake, appointment scheduling, or prescription refills for highest ROI

- Key success factors: STT accuracy for clinical terms, EHR API integration depth, sub-500ms latency, and emergency escalation design

What You Need Before Building an Open Source EHR Voice Assistant

Most EHR voice AI projects fail because teams skip preparation and jump directly to building the pipeline. The system only works as well as the stack, permissions, and data controls you establish upfront.

System and Infrastructure Requirements

You need a server environment capable of self-hosting the orchestration layer, microphone or telephony input, and network access to your EHR's API endpoints. Whether you choose cloud or on-premise hosting directly affects your HIPAA exposure—cloud deployments require Business Associate Agreements (BAAs) with every vendor touching Protected Health Information (PHI), while on-premise hosting reduces third-party exposure but increases your infrastructure responsibility.

Confirm API access is provisioned with your EHR vendor (Epic FHIR R4, Cerner Millennium, Athenahealth Open API, or an HL7 interface). Marketplace review alone can take 2-4 months, with per-site activation adding 1-3 months. Request credentials at project kickoff, not when you're ready to integrate.

EHR Integration and Compliance Readiness

Before connecting any real patient data, establish your compliance baseline:

- Sign BAAs with every vendor touching PHI—telephony, STT, TTS, LLM, hosting, logging

- Configure role-based access controls so only authorized personnel can access patient conversations

- Enable audit logging for all system access and data modifications

- Test PHI redaction to ensure identifiers don't leak to cloud services

Your open-source stack must support configurable data retention, transcript redaction, and least-privilege API keys for EHR tool calls. These controls are non-negotiable for HIPAA-aligned deployments. Get these foundations locked down before you build a single pipeline component — retrofitting compliance into a running system costs far more time than building it in from the start.

How to Build an Open Source AI Voice Assistant for EHR Systems

A working EHR voice assistant consists of a modular pipeline: telephony or wake-word input → STT → LLM reasoning → tool calls to the EHR → TTS output → logging. In an open-source architecture, each component can be swapped independently.

Step 1: Define the EHR Workflow and Conversation Scope

Identify one high-volume, predictable workflow to start. Pre-visit intake, appointment scheduling, prescription refills, or lab result routing work well because they follow repeatable patterns with clear start and end conditions.

Starting with a single workflow reduces hallucination risk and makes safety testing tractable. For a pre-visit intake workflow, define:

- Entry conditions: Patient calls main line during business hours

- Required data fields: Name, date of birth, reason for visit, current symptoms, insurance verification, consent acknowledgment

- Stop conditions: Emergency keywords detected, identity verification fails after three attempts, patient requests human agent

- Structured output: JSON payload written to EHR intake form via FHIR API

This scoping prevents the agent from improvising responses outside its trained domain—critical when documentation errors carry patient safety implications.
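
The scope above can be captured as data rather than prose, so the same definition drives both the agent and its tests. The following is a minimal sketch; the class, field names, and handoff phrases are hypothetical, and emergency-keyword detection is assumed to live in a separate check.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class WorkflowScope:
    """Declarative scope for one voice workflow (all names are illustrative)."""
    name: str
    required_fields: Tuple[str, ...]
    handoff_phrases: Tuple[str, ...] = ("human", "real person", "representative")
    max_identity_attempts: int = 3


INTAKE = WorkflowScope(
    name="pre_visit_intake",
    required_fields=(
        "name", "date_of_birth", "reason_for_visit",
        "current_symptoms", "insurance_verified", "consent_acknowledged",
    ),
)


def missing_fields(scope: WorkflowScope, captured: dict) -> list:
    """Required fields not yet captured, in the order the scope lists them."""
    return [f for f in scope.required_fields if captured.get(f) in (None, "")]


def should_stop(scope: WorkflowScope, failed_attempts: int, utterance: str) -> bool:
    """True when identity checks are exhausted or the caller asks for a person."""
    text = utterance.lower()
    return (failed_attempts >= scope.max_identity_attempts
            or any(phrase in text for phrase in scope.handoff_phrases))
```

The agent keeps asking until `missing_fields` returns an empty list, and hands off as soon as `should_stop` is true.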

Step 2: Select and Configure Your Open Source Stack

Choose components for each pipeline layer:

- STT: Whisper or a healthcare-tuned model

- LLM: GPT-4 via API or a local model for zero-cloud PHI processing

- TTS: Streaming synthesis engine for natural-sounding responses

- Telephony: SIP-compatible layer or Twilio integration

The trade-off: on-device processing minimizes PHI exposure but may sacrifice accuracy, while cloud APIs deliver higher accuracy with additional compliance overhead.

Add a custom medical vocabulary dictionary to your STT model. Generic STT models average 59% word error rates in healthcare settings, though recent benchmarks show a much narrower gap between general and medical-tuned models: Google's general ASR reached 8.8% WER versus 9.1% for its medical-tuned counterpart in primary care conversations. The practical difference shows up in drug names, dosage instructions, and specialty terminology, where transcription errors carry direct clinical consequences.
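
When the STT engine cannot be given a vocabulary directly, one option is a post-processing pass that snaps near-miss words to known terms. The sketch below uses Python's standard `difflib`; the four-term vocabulary and the 0.8 cutoff are assumptions for illustration, and in practice a correction like this should be read back to the caller for confirmation rather than applied silently.

```python
import difflib

# Hypothetical, tiny vocabulary; a real deployment would load a formulary list.
MEDICAL_TERMS = ["metformin", "lisinopril", "atorvastatin", "amlodipine"]


def correct_terms(transcript: str, vocabulary=MEDICAL_TERMS, cutoff: float = 0.8) -> str:
    """Replace words that closely resemble a vocabulary term with that term."""
    corrected = []
    for word in transcript.split():
        # get_close_matches returns the best candidates scoring at or above the cutoff.
        match = difflib.get_close_matches(word.lower(), vocabulary, n=1, cutoff=cutoff)
        corrected.append(match[0] if match else word)
    return " ".join(corrected)
```

For instance, `correct_terms("refill my metformen")` maps the misheard word to `metformin` and leaves the other words untouched.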

Platforms like Dograh AI support BYO STT/LLM/TTS configurations, so you can swap models without rewriting orchestration logic. This matters for teams with strict requirements around where patient data is processed — self-hosting keeps PHI entirely within your own infrastructure.

Step 3: Build the Orchestration and Conversational Flow

Structure the conversation as discrete decision nodes rather than a single long prompt. This architecture reduces hallucinations and makes escalation paths predictable:

- Greeting and consent acknowledgment

- Identity verification via date of birth and phone number

- Intent detection — schedule appointment, refill prescription, or report symptoms

- Data capture specific to detected intent

- EHR tool call to write structured data

- Confirmation read-back and handoff instructions
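
The node sequence above can be sketched as a small state machine. This is an illustrative outline only: the node names mirror the list, and the rule that any failed node hands off to a human is an assumption, not a prescribed policy.

```python
from enum import Enum, auto


class Node(Enum):
    GREETING = auto()
    VERIFY_IDENTITY = auto()
    DETECT_INTENT = auto()
    CAPTURE_DATA = auto()
    EHR_WRITE = auto()
    CONFIRM = auto()
    HANDOFF = auto()  # human takeover
    DONE = auto()


# Happy-path order; each node only knows its successor.
NEXT = {
    Node.GREETING: Node.VERIFY_IDENTITY,
    Node.VERIFY_IDENTITY: Node.DETECT_INTENT,
    Node.DETECT_INTENT: Node.CAPTURE_DATA,
    Node.CAPTURE_DATA: Node.EHR_WRITE,
    Node.EHR_WRITE: Node.CONFIRM,
    Node.CONFIRM: Node.DONE,
}


def advance(node: Node, ok: bool) -> Node:
    """Move to the next node on success; hand off to a human on failure."""
    if node in (Node.DONE, Node.HANDOFF):
        return node  # terminal states never change
    return NEXT[node] if ok else Node.HANDOFF
```

Because every transition is explicit, each node can carry its own short prompt and be tested in isolation.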

Implement PII/PHI sanitization before any text reaches a cloud LLM. Strip identifiers—names, phone numbers, date of birth patterns—from the transcript before the reasoning call. Log only sanitized text in debug outputs to prevent PHI leakage through logging infrastructure.
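
A first-pass sanitizer might look like the sketch below. It is deliberately limited: the patterns are assumptions that cover numeric dates and US-style phone numbers only, names are redacted from a list the caller has already supplied, and spoken forms such as "March fourteenth" would need a dedicated de-identification step.

```python
import re

# Order matters: dates are replaced before phone numbers are scanned.
PATTERNS = [
    ("[DOB]", re.compile(r"\b\d{1,2}[/-]\d{1,2}[/-]\d{2,4}\b")),
    ("[PHONE]", re.compile(r"(?:\+?1[\s.-]?)?\(?\d{3}\)?[\s.-]?\d{3}[\s.-]?\d{4}\b")),
]


def redact(text: str, known_names=()) -> str:
    """Replace numeric dates, phone numbers, and known names with placeholders."""
    for placeholder, pattern in PATTERNS:
        text = pattern.sub(placeholder, text)
    for name in known_names:
        text = re.sub(re.escape(name), "[NAME]", text, flags=re.IGNORECASE)
    return text
```

Run `redact` on the transcript before the reasoning call and again before anything is written to debug logs.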

Step 4: Integrate With Your EHR via FHIR or HL7 APIs

Connect the agent's tool-call layer to your EHR using FHIR R4 APIs for bidirectional sync. The agent reads provider availability and patient identifiers, then writes structured intake data, appointment confirmations, or escalation notes back into the EHR.

FHIR R4 adoption has reached 92.4% among certified EHR vendors, and 70% of US non-federal acute care hospitals now enable FHIR-based patient access. Yet this integration step typically consumes 30-40% of total build time due to sandbox testing, credential provisioning, and data mapping complexities.
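
As one illustration of the write path, captured intake answers can be packaged as a FHIR R4 `QuestionnaireResponse` resource. This sketch only builds the JSON body; whether a given EHR accepts this resource type for writes, and which `linkId` values it expects, are vendor-specific assumptions to confirm in the sandbox.

```python
import json
from datetime import datetime, timezone


def build_intake_response(patient_id: str, answers: dict) -> dict:
    """Build a FHIR R4 QuestionnaireResponse body from captured answers.

    The linkId values reuse the caller's field names, which is an
    illustrative choice rather than a vendor requirement.
    """
    return {
        "resourceType": "QuestionnaireResponse",
        "status": "completed",
        "subject": {"reference": f"Patient/{patient_id}"},
        "authored": datetime.now(timezone.utc).isoformat(timespec="seconds"),
        "item": [
            {"linkId": link_id, "answer": [{"valueString": value}]}
            for link_id, value in answers.items()
        ],
    }


# The body would typically be POSTed to [base]/QuestionnaireResponse
# with Content-Type: application/fhir+json.
payload = json.dumps(build_intake_response("123", {"reason_for_visit": "annual physical"}))
```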

Once the core integration is stable, configure webhook support so voice interaction outcomes trigger downstream workflows in your ticketing system or CRM:

- Intake captured → notify care coordinator

- Appointment booked → sync to scheduling system

- Urgent flag raised → alert triage staff
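
The outcome-to-workflow mapping above can be expressed as a routing table plus a signed payload. In this sketch the event names, target names, and the `X-Signature-SHA256` header are all hypothetical; the payload carries only a reference ID so that no PHI travels in the webhook itself.

```python
import hashlib
import hmac
import json

# Hypothetical event names mapped to downstream targets.
ROUTES = {
    "intake_captured": "care_coordinator",
    "appointment_booked": "scheduling_system",
    "urgent_flag_raised": "triage_staff",
}


def build_webhook(event: str, reference_id: str, secret: bytes) -> dict:
    """Return a JSON body and an HMAC-SHA256 signature header for one outcome."""
    if event not in ROUTES:
        raise ValueError(f"unknown event: {event}")
    body = json.dumps(
        {"event": event, "target": ROUTES[event], "reference_id": reference_id},
        sort_keys=True,
    )
    signature = hmac.new(secret, body.encode(), hashlib.sha256).hexdigest()
    return {"body": body, "headers": {"X-Signature-SHA256": signature}}
```

The receiver recomputes the signature over the raw body with the shared secret and compares it using `hmac.compare_digest`.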

Dograh AI's orchestration layer handles webhook-driven EHR tool calls and multi-agent decision flows natively. Because it supports full self-hosting, there are no per-minute platform fees and no PHI leaving your infrastructure.

Step 5: Configure HIPAA Controls, Escalation Logic, and Logging

Set up the emergency escalation path before connecting real patients:

- Detect urgent intent keywords: Chest pain, stroke symptoms, suicidal ideation, severe bleeding

- Trigger policy-defined response: Instruct caller to contact 911 or perform warm transfer to triage nurse

- Pass structured transcript summary to staff so the conversation doesn't restart from scratch
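
A minimal sketch of that escalation path follows. The phrase list is a small illustrative sample, not a clinically validated set, and the rule "warm transfer if a nurse is available, otherwise instruct 911" is an assumed policy that each organization would define for itself.

```python
from typing import Optional

# Illustrative phrases only; a real list needs clinical review.
URGENT_PHRASES = {
    "chest pain": "cardiac",
    "slurred speech": "stroke",
    "face is drooping": "stroke",
    "kill myself": "suicidal_ideation",
    "severe bleeding": "bleeding",
}


def detect_urgent(utterance: str) -> Optional[str]:
    """Return the urgency category of the first matching phrase, if any."""
    text = utterance.lower()
    for phrase, category in URGENT_PHRASES.items():
        if phrase in text:
            return category
    return None


def escalate(utterance: str, nurse_available: bool, captured: dict) -> Optional[dict]:
    """Build an escalation instruction with a summary for the receiving staff."""
    category = detect_urgent(utterance)
    if category is None:
        return None
    return {
        "action": "warm_transfer" if nurse_available else "instruct_911",
        "category": category,
        "summary": {"trigger": utterance, "captured_fields": sorted(captured)},
    }
```

Substring matching is intentionally conservative here: a false positive costs a transfer, while a missed emergency costs far more.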

Enable audit logging for all workflow edits, prompt changes, tool calls, and transcript access. Set separate retention windows for raw audio and text transcripts: for example, purge raw audio after 30 days while retaining text transcripts that support billing for seven years. Enforce RBAC so only authorized roles can view recordings, and isolate dev/test/prod environments to prevent PHI from appearing in non-production systems.
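
Separate retention windows are straightforward to encode. The sketch below uses the 30-day and seven-year figures from the text as example values; the record kinds are hypothetical labels, and the actual windows should come from your own retention policy.

```python
from datetime import datetime, timedelta, timezone

# Example windows only; seven years is approximated as 7 * 365 days.
RETENTION = {
    "raw_audio": timedelta(days=30),
    "text_transcript": timedelta(days=365 * 7),
}


def is_expired(kind: str, created_at: datetime, now: datetime) -> bool:
    """True once a record is older than the retention window for its kind."""
    return now - created_at > RETENTION[kind]


def purge_candidates(records: list, now: datetime) -> list:
    """Return the ids of records whose retention window has passed."""
    return [r["id"] for r in records if is_expired(r["kind"], r["created_at"], now)]
```

A scheduled job can call `purge_candidates` daily and write each deletion to the audit log.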

When Should You Use an Open Source AI Voice Assistant for EHR?

Open source is not always the right fit. It requires internal technical capacity to deploy, maintain, and secure the infrastructure. Managed or proprietary solutions may launch faster for smaller teams without DevOps resources.

Open source is the right choice when:

- Data residency rules require PHI to stay within a specific jurisdiction

- Multi-tenant cloud platforms create unacceptable compliance risk

- Call volumes justify avoiding per-minute platform fees

- The organization needs full auditability of the model and pipeline

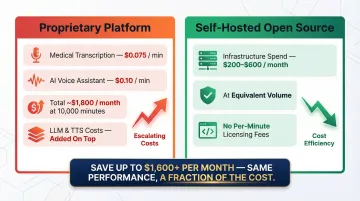

The call-volume point is where the cost case gets concrete. Proprietary platforms charge $0.075/min for medical transcription and $0.10/min for AI voice assistants. At 10,000 minutes per month, that's $750/month for transcription or $1,000/month for a voice assistant ($1,750/month if both are billed) before LLM and TTS costs are added. Self-hosting shifts those platform fees into infrastructure spend, typically $200–$600/month for a production-grade setup, depending on your cloud provider and redundancy requirements.
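
The per-minute arithmetic is easy to check for your own volumes. This sketch uses the two rates quoted in the text; the break-even helper is a simplification that compares platform fees against infrastructure spend only and ignores engineering time, which is often the larger cost.

```python
from decimal import Decimal

# USD per minute, as quoted in the text.
TRANSCRIPTION_RATE = Decimal("0.075")
ASSISTANT_RATE = Decimal("0.10")


def monthly_fee(rate_per_min: Decimal, minutes: int) -> Decimal:
    """Platform fee for a month at a given per-minute rate."""
    return rate_per_min * minutes


def break_even_minutes(infra_cost: Decimal, rate_per_min: Decimal) -> Decimal:
    """Monthly minutes at which platform fees equal a fixed infrastructure cost."""
    return infra_cost / rate_per_min


transcription = monthly_fee(TRANSCRIPTION_RATE, 10_000)  # 750 USD
assistant = monthly_fee(ASSISTANT_RATE, 10_000)          # 1000 USD
```

At the upper infrastructure estimate of $600/month, the $0.10/min rate breaks even at 6,000 minutes per month.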

Open source becomes risky when:

- No one owns infrastructure patching and secrets management

- BAA coverage for self-hosted components is unclear

- The team cannot build and maintain escalation and monitoring setups before going live

Key Parameters That Affect Voice AI Performance in EHR Environments

Even a technically correct pipeline can fail in production if the wrong values are set for latency, accuracy, context window, and escalation thresholds. In healthcare, these aren't just performance metrics — they affect documentation accuracy, patient safety, and regulatory exposure.

STT Accuracy and Medical Vocabulary Coverage

A misrecognized drug name or symptom term propagates an error through the LLM reasoning layer and into the EHR record. Errors in clinical documentation carry patient safety implications.

Using a healthcare-tuned STT model with a custom vocabulary dictionary improves clinical term recognition. Medical-specific models achieved 46% error rates on medical proper nouns versus 61% for general models in home healthcare settings.

That advantage isn't universal, though. Medical concept extraction F1 scores were 0.65 for general ASR versus 0.64 for medical-tuned ASR, indicating that medical tuning doesn't automatically guarantee better clinical understanding.

The takeaway: test your specific use case. Generic models may perform adequately for conversational intake, while specialized vocabularies matter most for medication names and procedure documentation.
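
Testing your own use case starts with measuring word error rate on a sample of your own calls. The function below is a standard word-level edit-distance calculation: substitutions, insertions, and deletions divided by the number of reference words. The lowercasing and whitespace tokenization are simplifying assumptions.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript is empty")
    # prev[j] holds the edit distance between the ref words seen so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)
```

Scoring medication-name utterances separately from general intake speech shows where a specialized vocabulary actually pays off.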

Latency (End-to-End Response Time)

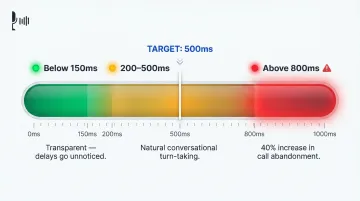

Voice conversations break down when response latency exceeds 1-2 seconds. Callers interpret delays as disconnections or system failure, leading to hang-ups and escalations.

The benchmarks are specific:

- Below 150ms feels transparent to the caller — delays go unnoticed

- 200–500ms aligns with natural conversational turn-taking

- Above 800ms triggers a 40% increase in call abandonment

Target sub-500ms end-to-end. On-device or co-located processing cuts latency compared to multi-hop cloud pipelines, and self-hosted orchestration eliminates round-trips to third-party platforms.
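
A per-stage latency budget makes the sub-500ms target checkable in tests and monitoring. In this sketch the band names and the handling of the 150–200ms and 500–800ms gaps are assumptions layered on the thresholds above, and the stage timings are made-up example values.

```python
TARGET_MS = 500  # end-to-end target from the text


def classify_latency(ms: float) -> str:
    """Map an end-to-end latency to a coarse band."""
    if ms < 150:
        return "transparent"
    if ms <= 500:
        return "conversational"
    if ms <= 800:
        return "degraded"
    return "abandonment_risk"


def check_budget(stage_ms: dict, target_ms: float = TARGET_MS) -> dict:
    """Sum per-stage latencies and report the total, the band, and the slowest stage."""
    total = sum(stage_ms.values())
    return {
        "total_ms": total,
        "within_target": total < target_ms,
        "slowest_stage": max(stage_ms, key=stage_ms.get),
        "band": classify_latency(total),
    }


# Hypothetical measurements for one conversational turn.
report = check_budget({"stt": 150, "llm": 220, "tts": 90, "network": 30})
```

Here the total is 490ms, just inside the target, with the LLM stage as the first place to look for savings.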

LLM Prompt Design and Decision-Node Structure

A single flat prompt with no branching logic gives the agent no guardrails — it can drift into responses outside its intended scope. In healthcare, that means appearing to offer medical advice or failing to trigger an emergency escalation.

A multi-node decision tree with strict guardrails produces more predictable, auditable behavior:

- The agent does not diagnose conditions

- The agent does not collect unnecessary PHI

- The agent always escalates on emergency keywords

This structure makes the agent's behavior testable and compliant with organizational policies.

EHR API Reliability and Fallback Behavior

If the EHR API is slow or down during a call, the agent must have a defined fallback. Failing silently or crashing mid-conversation damages patient trust and creates documentation gaps.

Design fallback responses: "I'm unable to access scheduling right now—a staff member will follow up within the hour." Create a ticket or alert in the background so no interaction is lost even when the integration is unavailable.
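
One way to structure that fallback is a thin wrapper around every EHR tool call. This is a sketch under simple assumptions: the EHR call is any zero-argument callable, failures surface as the built-in `TimeoutError` or `ConnectionError` (a real HTTP client's exception types would need to be mapped to these), and the ticket queue is a plain list standing in for your ticketing system.

```python
FALLBACK_MESSAGE = (
    "I'm unable to access scheduling right now. "
    "A staff member will follow up within the hour."
)


def call_with_fallback(ehr_call, ticket_queue: list, call_id: str) -> dict:
    """Run an EHR tool call; on failure, queue a ticket and return a spoken fallback."""
    try:
        return {"ok": True, "result": ehr_call()}
    except (TimeoutError, ConnectionError) as exc:
        # Record only a call reference and the failure type, not transcript content.
        ticket_queue.append({"call_id": call_id, "reason": type(exc).__name__})
        return {"ok": False, "say": FALLBACK_MESSAGE}
```

The conversation layer speaks the `say` text when `ok` is false, and staff pick up the queued ticket afterwards.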

Common Mistakes When Building an Open Source EHR Voice Assistant

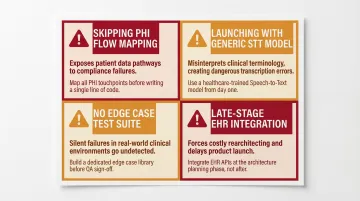

Most teams hit the same handful of pitfalls. Here's where builds go wrong—and how to avoid them.

Skipping PHI flow mapping before build. Teams often connect the pipeline to real patient calls before auditing where PHI appears in audio, transcripts, logs, and tool call payloads. This creates compliance exposure that's hard to fix after the fact. Map every PHI touchpoint first, then design redaction and access controls around it before a single real call goes through.

Launching with a generic STT model. Off-the-shelf speech models produce high transcription error rates on drug names, diagnoses, and procedure codes. Add a domain-specific vocabulary layer before any testing with real clinical language — not after you've already seen failures.

No pre-launch test suite for edge cases. If you haven't tested misheard names, verification failures, accented speech, noisy environments, and emergency symptom detection, patients will be the ones who find those gaps. Build a repeatable test suite covering all of these edge cases. Dograh AI's LoopTalk can stress-test the agent with diverse patient personas (elderly callers, non-native speakers, callers with urgent symptoms) before real patients are ever on the line.

Treating EHR integration as a late-stage task. Teams that build the voice pipeline first and bolt on EHR integration at the end consistently run into API access delays, data mapping mismatches, and authentication issues. Epic marketplace approvals alone take 2-4 months, with per-site activation adding another 1-3 months. Request API credentials at project kickoff — not once the rest of the build is done.

Frequently Asked Questions

How is AI used in EHR?

AI is used in EHR systems for voice-driven documentation, hands-free data entry, appointment scheduling, pre-visit intake capture, clinical decision support, and automated follow-up workflows. The key value is reducing the time clinicians spend on manual documentation by letting the system capture and write structured data directly into the EHR record.

What HIPAA requirements apply to an open-source AI voice assistant integrated with EHR?

Core requirements include:

- Sign BAAs with every vendor that touches PHI

- Encrypt data in transit and at rest

- Maintain audit logs and enforce role-based access

- Retain compliance responsibility for all self-hosted components

Can I self-host an open-source AI voice assistant and still be HIPAA compliant?

Self-hosting supports HIPAA compliance by reducing third-party PHI exposure and enabling data residency control. However, the software alone does not make a deployment compliant—the organization must also implement secure hosting, RBAC, incident response, and retention controls.

What EHR systems support API integration with AI voice agents?

Major EHR platforms with API support include:

- Epic: FHIR R4 via Epic's developer marketplace (formerly App Orchard)

- Oracle Health (formerly Cerner): Millennium APIs

- Athenahealth: Open API Platform

- Veradigm (formerly Allscripts): HL7/FHIR R4

API access approval timelines vary — initiate requests early in the project.

How do I prevent PHI from leaking through my open-source voice AI pipeline?

Key controls to enforce:

- Strip PII/PHI from transcripts before sending to any cloud LLM

- Avoid logging raw audio payloads in production

- Apply redaction to transcript storage and use least-privilege API keys for EHR calls

- Self-host the orchestration layer when PHI risk is high

The stakes are concrete: the HHS Office for Civil Rights (OCR) settled with MedEvolve for $350,000 after an unsecured server exposed the PHI of 230,572 individuals.

How long does it take to build and deploy an open-source AI voice assistant for EHR?

A focused single-workflow deployment, such as appointment scheduling, can be prototyped quickly with an open-source platform. Production-ready deployment, including compliance controls and pre-launch testing, typically takes 6–12 weeks once EHR API access is provisioned, depending on internal security review cycles. EHR vendor approval is the main variable: marketplace review alone can take 2-4 months, with per-site activation adding 1-3 months, so start that process in parallel at kickoff.