Introduction

Traditional IVR systems are losing ground fast. According to Gartner's 2024 Conversational AI Forecast, 37.6% of enterprises plan to fully replace legacy IVR with AI triage agents by 2026, pushing the conversational AI market toward a projected $41.39 billion by 2030. The open-source ecosystem lets you build and deploy a production-ready voice agent in under 30 minutes—without platform fees or surrendering data control.

This isn't just for large engineering teams. Healthcare practices, law firms, and customer support operations are shipping working agents on tight timelines and tighter budgets.

Results vary widely, though. Your agent's performance hinges on component selection (STT, LLM, TTS, VAD), configuration choices, and whether your deployment is actually production-ready. Most first builds that fail share a pattern:

- Skipped preparation steps before writing a line of code

- Misconfigured latency-sensitive parameters

- Deployed without structured conversation testing

This guide covers what you need before building, the step-by-step process, the parameters that matter most, and the mistakes that cause most early failures.

TLDR

- Building an open-source AI voice agent requires four core components: STT, an LLM, TTS, and VAD (voice activity detection)

- The 30-minute timeline is achievable when you start with a pre-configured open-source platform rather than stitching raw models together

- Open-source deployments give you full data sovereignty—critical for HIPAA, GDPR, and similar compliance requirements

- The biggest failure points are latency misconfiguration, vague system prompts, and skipping conversation testing

- Proprietary platforms ship faster initially, but unpredictable billing and lock-in become costly as call volume grows

What You Need Before Building Your Open Source Voice Agent

Preparation determines whether you hit the 30-minute target or spend days debugging. The most common failure points: environment mismatches, missing API credentials, and unresolved deployment decisions that surface mid-build.

System and Environment Requirements

You need a Linux-compatible system (macOS or Windows with WSL also works), CLI access, and the ability to run server-side applications. A GPU is optional when you use cloud-hosted models but is needed in practice for local deployments. If you're hosting Whisper locally, expect these requirements:

| Model Size | Parameters | Required VRAM (FP16) |
|---|---|---|
| Tiny | 39M | ~1 GB |
| Base | 74M | ~1 GB |
| Small | 244M | ~2 GB |
| Medium | 769M | ~5 GB |
| Large-v3 | 1550M | ~10 GB |
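
To make the sizing decision concrete, here is a minimal sketch that picks the largest Whisper size fitting a given GPU. The VRAM figures are the approximate values from the table above; the function name and the 0.5 GB headroom default are assumptions for illustration, not part of any Whisper tooling.

```python
from typing import Optional

# Approximate FP16 VRAM needs in GB, copied from the table above.
# Ordered smallest to largest; dicts preserve insertion order.
WHISPER_VRAM_GB = {
    "tiny": 1,
    "base": 1,
    "small": 2,
    "medium": 5,
    "large-v3": 10,
}


def largest_model_that_fits(available_vram_gb: float,
                            headroom_gb: float = 0.5) -> Optional[str]:
    """Return the largest model whose approximate need fits, or None.

    headroom_gb is a hypothetical safety margin for other GPU consumers.
    """
    usable = available_vram_gb - headroom_gb
    best = None
    for name, needed in WHISPER_VRAM_GB.items():
        if needed <= usable:
            best = name  # later entries are larger, so keep overwriting
    return best


if __name__ == "__main__":
    for vram in (0.5, 4, 8, 24):
        print(vram, "GB ->", largest_model_that_fits(vram))
```

Treat the result as a starting point only; actual memory use depends on the runtime, batch size, and quantization.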

For a faster first build, use cloud-hosted models — they let you skip model downloads and dependency setup while you validate your use case.

API Keys and Model Access

Before starting, gather credentials for:

- Fast LLM inference provider — Groq delivers sub-200ms Time-to-First-Token (TTFT) with models like Llama 3.3 70B, making it ideal for voice applications where latency matters

- STT service key — OpenAI Whisper via Groq offers 164x real-time speed with 10.3% Word Error Rate

- TTS provider key — Choose a streaming-capable provider; batch generation adds seconds of perceived latency

Compliance and Deployment Decisions

Decide upfront which deployment model fits your requirements:

- Self-hosted — Required for HIPAA, GDPR, and PCI DSS compliance; gives you full data sovereignty

- Cloud-only — Fastest to deploy; appropriate for non-regulated use cases

- Hybrid — Local LLM inference with cloud STT/TTS, or vice versa; balances control and speed

Voice data is inherently biometric and falls under strict privacy laws. GDPR Article 5 mandates data minimization and storage limitation. HIPAA requires Business Associate Agreements (BAAs) with any cloud provider processing Protected Health Information (PHI). For regulated industries, self-hosting is non-negotiable — this decision shapes every tool and configuration choice throughout the build.

How to Build and Deploy an Open Source AI Voice Agent in 30 Minutes

This guide uses an open-source STT → LLM → TTS pipeline with VAD rather than a closed end-to-end voice model. This approach gives you full customization, model swapping, and compliance controls that closed models can't match.

Step 1: Clone the Repository and Install Dependencies

Start by cloning an open-source voice agent framework. Platforms like Dograh AI provide a production-ready open-source stack under a BSD 2-Clause license that bundles the orchestration layer, so setup takes minutes instead of hours.

Clone the repository:

git clone https://github.com/dograh-hq/dograh

cd dograh

Install dependencies according to the repository's README. Most platforms use standard package managers (npm, pip, or Docker Compose) for one-command setup.

Once installation completes, launch the dashboard (typically at http://localhost:3000) to begin configuration. Review the config file structure to understand where each service (ASR, LLM, TTS, VAD) is declared before moving to the next step.

Step 2: Configure the STT, LLM, and TTS Components

Configure Speech-to-Text (STT):

Point your STT block to an OpenAI-compatible ASR endpoint. For low-latency builds, use Whisper via Groq, which processes audio at 164x-299x real-time speed. Key parameters include:

- Model: `whisper-large-v3-turbo` ($0.04 per hour transcribed)

- Language: Specify target language or leave as auto-detect

- Hallucination control: Disable `condition_on_previous_text`; roughly 1% of Whisper transcriptions contain hallucinated phrases, with nearly 40% flagged as harmful or concerning

Configure the LLM:

Choose a fast inference provider optimized for speed over deep reasoning. For voice, a mid-size model with strong prompts outperforms slow frontier models.

| Provider / Model | Median TTFT | Output Speed |
|---|---|---|
| Groq (Llama 3.3 70B) | 120ms | 330 tokens/sec |
| OpenAI (GPT-4o) | 450ms | 85 tokens/sec |

Set these parameters:

- Model: Select a sub-200ms TTFT model

- API key: Your inference provider credential

- History window: Number of messages kept in context (start with 10-15 for typical calls)

- System prompt: Define agent persona, behavioral guardrails, and how to handle off-topic inputs

For compliance-sensitive industries, your system prompt should explicitly state rules like "do not provide medical advice" or "always direct the caller to a licensed professional for X."

Configure Text-to-Speech (TTS):

Select a voice provider and voice ID. Critical requirement: enable streaming TTS rather than batch generation. Streaming delivers audio incrementally as it's generated, achieving sub-150ms Time-to-First-Audio (TTFA) versus 2-5 seconds for batch processing.

Providers like Cartesia deliver 40ms TTFA, while ElevenLabs Flash v2.5 achieves ~75ms inference latency. Confirm your config uses the streaming endpoint, not the batch endpoint.
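
Streaming TTS only helps if text reaches it incrementally. One common pattern, sketched below under simplifying assumptions, is to cut the LLM's token stream into sentences and hand each sentence to the TTS stage as soon as it is complete. The function name is hypothetical, and the punctuation rule is deliberately naive (it would split on abbreviations such as "Dr.").

```python
from typing import Iterable, Iterator

SENTENCE_END = (".", "!", "?")


def sentences_from_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Yield each sentence as soon as its closing punctuation arrives."""
    buffer = ""
    for token in tokens:
        buffer += token
        if buffer.rstrip().endswith(SENTENCE_END):
            yield buffer.strip()
            buffer = ""
    if buffer.strip():
        # Flush trailing text that never received end punctuation.
        yield buffer.strip()


if __name__ == "__main__":
    stream = ["Sure", ",", " I", " can", " help", ".",
              " What", " time", " works", "?", " Thanks"]
    for sentence in sentences_from_tokens(stream):
        # In a real agent this is where the sentence would be sent
        # to the TTS provider's streaming endpoint.
        print(repr(sentence))
```

The first sentence can start playing while the LLM is still generating the rest of the reply, which is where most of the perceived latency saving comes from.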

Step 3: Set Up Voice Activity Detection (VAD)

VAD detects when the user has finished speaking. Without it, your agent either responds before the user finishes their sentence or waits indefinitely, both of which kill the conversation.

Why VAD matters:

- Prevents STT hallucinations by filtering out silence and non-speech audio

- Enables natural turn-taking in conversations

- Reduces Word Error Rate (WER) by segmenting audio correctly

VAD accuracy by solution:

| VAD Solution | True Positive Rate (at 5% FPR) | CPU Usage |
|---|---|---|
| WebRTC VAD | 50% (misses 1 in 2 speech frames) | Extremely lightweight |
| Silero VAD | 87.7% (misses 1 in 8 speech frames) | 0.43% (RTF 0.004) |
| Cobra VAD | 98.9% (misses 1 in 100 speech frames) | 0.05% (RTF 0.0005) |

Choose Silero VAD for the best balance of accuracy and performance among open-source options; Cobra scores higher in the table but is a proprietary Picovoice product. Configure it in your agent's config file and verify it's receiving the audio stream correctly before proceeding.
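
Whichever VAD you use, the agent still needs a rule for turning per-frame speech decisions into an end-of-turn event. The sketch below shows one simple rule: the turn ends after speech has been heard and is followed by a continuous stretch of silence. It is not Silero's API. The function name, the 30ms frame size, and the 600ms silence threshold are assumptions you would tune.

```python
from typing import Iterable, Optional


def end_of_speech_frame(speech_flags: Iterable[bool],
                        frame_ms: int = 30,
                        min_silence_ms: int = 600) -> Optional[int]:
    """Return the frame index at which the user's turn is considered over.

    speech_flags holds one speech/non-speech decision per audio frame,
    such as a VAD model would produce. Returns None if no turn end is found.
    """
    frames_needed = -(-min_silence_ms // frame_ms)  # ceiling division
    heard_speech = False
    silent_run = 0
    for index, is_speech in enumerate(speech_flags):
        if is_speech:
            heard_speech = True
            silent_run = 0  # any speech resets the silence counter
        elif heard_speech:
            silent_run += 1
            if silent_run >= frames_needed:
                return index
        # Silence before any speech is ignored so the agent does not
        # "answer" an empty line.
    return None


if __name__ == "__main__":
    # Leading silence, speech, a short pause, more speech, then a long silence.
    flags = [False] * 5 + [True] * 10 + [False] * 3 + [True] * 4 + [False] * 25
    print(end_of_speech_frame(flags))
```

A shorter silence threshold makes the agent respond faster but cuts off callers who pause mid-sentence; a longer one does the opposite.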

Step 4: Define Conversational Logic and Test Locally

Two approaches for defining agent behavior:

Global system prompt (simpler): One comprehensive prompt defines all behaviors. Best for single-purpose agents like appointment scheduling or FAQ handling.

Structured conversation flow (advanced): Multi-node workflows with branching logic. Required for agents that handle verification, escalation, scheduling, or variable extraction across complex scenarios.
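
A structured flow can be pictured as a small state machine: each node carries its own prompt, and detected intents decide which node comes next. The sketch below is illustrative only. The node names, intent labels, and `next_node` helper are hypothetical and do not reflect any particular platform's workflow format.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Node:
    prompt: str
    # Maps a detected intent label to the name of the next node.
    transitions: Dict[str, str] = field(default_factory=dict)


FLOW = {
    "greet": Node("Greet the caller and ask how you can help.",
                  {"book": "verify", "human": "escalate"}),
    "verify": Node("Ask for the caller's name and date of birth.",
                   {"verified": "schedule", "failed": "escalate"}),
    "schedule": Node("Offer available appointment slots.",
                     {"done": "end"}),
    "escalate": Node("Transfer the caller to a live agent."),
    "end": Node("Confirm the booking and say goodbye."),
}


def next_node(current: str, intent: str) -> str:
    """Follow a matching transition; otherwise stay on the current node."""
    return FLOW[current].transitions.get(intent, current)


if __name__ == "__main__":
    state = "greet"
    for intent in ("book", "verified", "done"):
        state = next_node(state, intent)
        print(intent, "->", state, "|", FLOW[state].prompt)
```

Staying on the current node for unrecognized intents lets the agent re-ask instead of jumping to an unrelated branch.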

Run a local test call:

- Listen for latency issues (target: <800ms voice-to-voice)

- Evaluate whether responses are on-topic and contextually accurate

- Test interruption handling — can the user interrupt naturally?

AI-to-AI testing frameworks like Dograh AI's LoopTalk simulate real-world caller scenarios to surface failures before production. These tools reduce manual testing effort by running validation automatically across dozens of conversation paths.

Step 5: Deploy to Production

Three deployment paths:

Web widget: Embed a voice widget into your web application by pasting embed code before the closing body tag. Users click a button to start voice conversations directly in their browser.

Phone number: Assign an inbound phone number so the agent handles calls automatically. Configure the number in your dashboard and route it to the correct agent handler.

REST/WebSocket API: Expose your agent via API for integration into existing systems. This approach works for embedding voice into mobile apps, CRM workflows, or custom platforms.

Self-hosting for compliance:

Organizations in healthcare, finance, or legal must deploy on their own infrastructure to satisfy HIPAA/GDPR requirements. Open-source platforms designed for self-hosting eliminate compliance risk by keeping all voice data, transcripts, and PII within your own network.

When Should You Build an Open Source Voice Agent?

Not every use case benefits from the open-source approach. Teams needing something live in hours with no infrastructure investment may find a managed proprietary tool faster for early prototyping.

Open source is clearly the right choice when:

- You work in healthcare, finance, or legal and can't send voice data to multi-tenant cloud APIs without violating HIPAA, GDPR, or SOC 2 requirements

- Usage-based fees become unmanageable at scale; OpenAI's Realtime API, for example, charges $32 per 1M audio input tokens and $64 per 1M output tokens

- You need to fine-tune models, swap components, or implement custom logic that proprietary APIs simply won't allow

- Your team has DevOps capacity and wants full control over deployment, security, and cost
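
To see how token-based pricing scales, the following sketch turns the $32 and $64 per-million rates quoted above into a cost function. The token counts in the usage example are made-up inputs; measure your own traffic to get real numbers.

```python
# Rates quoted in this article for audio tokens, in USD per 1M tokens.
INPUT_USD_PER_MILLION = 32.0
OUTPUT_USD_PER_MILLION = 64.0


def audio_token_cost(input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost for the given audio token counts."""
    total = (input_tokens * INPUT_USD_PER_MILLION
             + output_tokens * OUTPUT_USD_PER_MILLION)
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical monthly volume, for illustration only.
    print(audio_token_cost(input_tokens=500_000, output_tokens=250_000))
```

Because cost grows linearly with tokens, doubling call volume doubles the bill, whereas self-hosted costs are driven mainly by the infrastructure you provision.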

That said, open source isn't the right fit for every team or project.

Open source becomes inefficient or risky when:

- Your team lacks infrastructure management, security patching, or monitoring expertise

- The use case is a basic FAQ bot where setup overhead outweighs the benefits

- No one owns ongoing maintenance — models, dependencies, and infrastructure all require periodic updates

Key Parameters That Affect Your Voice Agent's Performance

Once your agent is running, the difference between frustrating and production-ready almost always comes down to a handful of critical parameters.

Latency Budget Per Turn

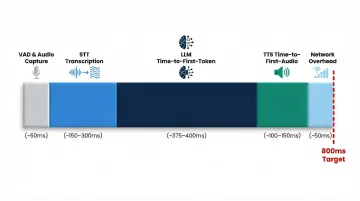

Each pipeline stage contributes its own latency. Research shows that human conversational tolerance breaks down past 500-800ms. For voice agents, 800ms voice-to-voice latency is the target threshold for natural conversation.

Target latency budget (800ms total):

- VAD & Audio Capture: ~50ms

- STT Transcription: ~150-300ms

- LLM Time-to-First-Token (TTFT): ~375-400ms

- TTS Time-to-First-Audio (TTFA): ~100-150ms

- Network Overhead: ~50ms
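
Adding up the stages is a useful sanity check. The sketch below sums the lower and upper bounds listed above; the dictionary keys and helper name are my own labels. Note that the upper bounds total 950ms, which exceeds the 800ms target, so at least one stage has to land near its lower bound.

```python
TARGET_MS = 800

# (low, high) estimates in milliseconds, taken from the list above.
BUDGET_MS = {
    "vad_and_capture": (50, 50),
    "stt": (150, 300),
    "llm_ttft": (375, 400),
    "tts_ttfa": (100, 150),
    "network": (50, 50),
}


def budget_range(budget):
    """Return the (best-case, worst-case) total latency in milliseconds."""
    low = sum(lo for lo, _ in budget.values())
    high = sum(hi for _, hi in budget.values())
    return low, high


if __name__ == "__main__":
    low, high = budget_range(BUDGET_MS)
    print(f"best case {low}ms, worst case {high}ms, target {TARGET_MS}ms")
    print("worst case within target:", high <= TARGET_MS)
```

Swapping in measured values per stage shows quickly which component is worth optimizing first.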

What happens when latency exceeds thresholds:

Callers interpret pauses as disconnection, interruption rates increase, and conversation completion rates drop. Streaming each stage so that it overlaps with the next, rather than running the stages strictly in sequence, is the primary lever for staying within the 800ms voice-to-voice target.

System Prompt Specificity

Vague system prompts produce off-topic or inconsistent agent behavior. A strong system prompt:

- Defines the agent's role explicitly

- Sets behavioral guardrails ("never ask for credit card numbers over voice")

- Specifies how to handle off-topic inputs ("redirect to a live agent")

- Provides domain context so the LLM doesn't guess intent

For compliance-sensitive industries, the system prompt enforces rules like "do not provide medical advice" or "always direct the caller to a licensed professional for diagnosis."
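
One way to keep prompts specific is to assemble them from the four elements listed above rather than writing free-form text. This is a minimal sketch; the function name, layout, and example clinic details are assumptions, not a required format.

```python
from typing import List


def build_system_prompt(role: str,
                        guardrails: List[str],
                        off_topic_action: str,
                        domain_context: str) -> str:
    """Assemble a system prompt from role, rules, fallback, and context."""
    lines = [f"You are {role}.", "", "Rules:"]
    lines += [f"- {rule}" for rule in guardrails]
    lines += [
        "",
        f"If the caller goes off-topic: {off_topic_action}",
        "",
        "Context:",
        domain_context,
    ]
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_system_prompt(
        role="a scheduling assistant for a dental clinic",
        guardrails=[
            "Never ask for credit card numbers over voice.",
            "Do not provide medical advice.",
        ],
        off_topic_action="Offer to transfer the caller to a live agent.",
        domain_context="Office hours are 9am to 5pm, Monday to Friday.",
    ))
```

Keeping guardrails as a list makes them easy to review and to reuse across agents.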

Conversation History Window

Larger context windows let the agent reference earlier conversation parts — critical for multi-turn booking, troubleshooting, or qualification flows. The cost is real: they increase both LLM inference cost and latency.

At 32K input tokens, Groq's TTFT increases from 120ms to 380ms. Audio inputs consume tokens roughly 10x faster than text for the same sentence.

Mitigation strategies:

- Prompt caching: Reuse previously processed portions to reduce TTFT by up to 80%

- Summarization: Distill older turns into concise summaries rather than resending full history

- Sliding windows: Drop the oldest turns to maintain a small, fast context window

For most deployments, 10-15 messages of history balances context retention with TTFT under 200ms — adjust up or down based on observed latency.
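
The sliding-window strategy is straightforward to sketch. The example below assumes chat-style messages as dictionaries with `role` and `content` keys, which is a common convention but not something the article specifies; the function name and the default of 12 are illustrative.

```python
from typing import Dict, List

Message = Dict[str, str]


def trim_history(messages: List[Message], max_messages: int = 12) -> List[Message]:
    """Keep every system message plus the most recent max_messages turns."""
    system = [m for m in messages if m["role"] == "system"]
    turns = [m for m in messages if m["role"] != "system"]
    if max_messages <= 0:
        return system
    return system + turns[-max_messages:]


if __name__ == "__main__":
    history = [{"role": "system", "content": "You are a booking agent."}]
    for i in range(20):
        role = "user" if i % 2 == 0 else "assistant"
        history.append({"role": role, "content": f"turn {i}"})
    trimmed = trim_history(history, max_messages=12)
    print(len(trimmed), trimmed[1]["content"], trimmed[-1]["content"])
```

The system prompt is always preserved, so guardrails survive even when older turns are dropped.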

Barge-In and Interruption Handling

Barge-in (the agent stopping when the user speaks) is the single most important feature for making a voice agent feel natural rather than robotic. Without it, average conversation times increase by 40-60% and abandonment rates climb sharply.

Why server-side-only VAD falls short:

Server-side VAD creates 200-400ms of "audio bleed" where the AI talks over the user. By the time the server detects speech, halts generation, and stops transmitting audio, the client's jitter buffer is still playing queued audio.

Hybrid VAD architecture fixes this in two steps:

- A lightweight WebAssembly VAD runs client-side, instantly muting incoming TTS audio in <50ms

- The client simultaneously fires a "truncate" control message to the backend, halting LLM generation and TTS synthesis
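
The two steps above can be modeled as a small piece of client-side state. The sketch below captures only the control logic in Python; in a browser the same logic would sit next to the WebAssembly VAD. The class name, the message schema, and the `played_ms` field are hypothetical, not a defined protocol.

```python
import json
from typing import Callable


class BargeInController:
    """Mutes playback locally and tells the backend to stop generating."""

    def __init__(self, send_control: Callable[[str], None]) -> None:
        self.send_control = send_control  # e.g. a WebSocket send function
        self.agent_speaking = False
        self.playback_muted = False

    def on_agent_audio_start(self) -> None:
        self.agent_speaking = True
        self.playback_muted = False

    def on_agent_audio_end(self) -> None:
        self.agent_speaking = False

    def on_user_speech_detected(self, played_ms: int) -> bool:
        """Handle a local VAD speech event; return True if it was a barge-in."""
        if not self.agent_speaking:
            return False  # nothing to interrupt
        # Step 1: mute locally right away, without waiting for the server.
        self.playback_muted = True
        self.agent_speaking = False
        # Step 2: ask the backend to halt LLM generation and TTS synthesis.
        self.send_control(json.dumps({"type": "truncate", "played_ms": played_ms}))
        return True


if __name__ == "__main__":
    sent = []
    controller = BargeInController(sent.append)
    controller.on_agent_audio_start()
    print(controller.on_user_speech_detected(played_ms=1200), sent)
```

Reporting how much audio was actually played lets the backend trim its record of the agent's reply to what the caller really heard.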

Common Mistakes and Troubleshooting

Skipping VAD Configuration Entirely

Without VAD, the agent either responds before the user finishes speaking or waits indefinitely. Before any other debugging, verify VAD is actively receiving the audio stream and correctly detecting speech-end events.

Test with real audio input — silence, background noise, and overlapping speech — to confirm reliable detection across conditions.

Choosing an LLM Optimized for Quality Over Speed

Frontier models like GPT-4o and Claude 3.5 Sonnet have median TTFTs of 450-500ms — that's the majority of an 800ms latency budget gone before TTS even starts.

For voice, use a fast mid-size model paired with a strong system prompt. Groq's Llama 3.3 70B delivers 120ms TTFT with sufficient reasoning quality for most conversational tasks.

Deploying Without Simulated Conversation Testing

Many teams deploy after a single manual test call, missing edge cases like caller interruptions, unexpected phrasing, no-speech timeouts, and background noise.

Run at least 10-20 simulated scenarios before production release. AI-to-AI testing frameworks like Dograh's LoopTalk automate this by simulating real-world customer interactions, cutting manual test effort by 90% while improving coverage.
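
Even without a dedicated framework, a scripted scenario check catches many regressions. The sketch below is a text-level stand-in, not LoopTalk's API: `stub_agent` is a placeholder for your real agent call, and the scenario format and `run_scenarios` helper are assumptions for illustration.

```python
from typing import Callable, Dict, List, Tuple


def stub_agent(caller_text: str) -> str:
    """Placeholder agent; replace with a call into your real pipeline."""
    if "appointment" in caller_text.lower():
        return "Sure, I can book that. What day works for you?"
    return "Let me transfer you to a live agent."


SCENARIOS: List[Dict] = [
    {"name": "booking", "caller_says": "I need an appointment",
     "must_include": ["book"]},
    {"name": "off_topic", "caller_says": "What's the weather like?",
     "must_include": ["live agent"]},
    {"name": "reschedule", "caller_says": "Can I move my visit?",
     "must_include": ["reschedule"]},
]


def run_scenarios(agent: Callable[[str], str],
                  scenarios: List[Dict]) -> List[Tuple[str, List[str]]]:
    """Return (scenario name, missing phrases) for every failing scenario."""
    failures = []
    for scenario in scenarios:
        reply = agent(scenario["caller_says"]).lower()
        missing = [p for p in scenario["must_include"] if p.lower() not in reply]
        if missing:
            failures.append((scenario["name"], missing))
    return failures


if __name__ == "__main__":
    for name, missing in run_scenarios(stub_agent, SCENARIOS):
        print(f"FAILED {name}: reply is missing {missing}")
```

Phrase matching is crude, so treat failures as prompts for a closer look at the transcript rather than as definitive verdicts.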

High Latency on TTS Audio Delivery

Generating full TTS audio before streaming it to the caller adds 2-5 seconds of perceived latency — the most common source of "laggy" voice agents post-deployment.

Confirm your TTS provider supports streaming output and that your agent config points to the streaming endpoint, not the batch endpoint. Streaming TTS delivers audio incrementally as it's generated, reaching sub-150ms time-to-first-audio for the caller.

Misconfigured Phone or WebSocket Integration

If your agent deploys but doesn't respond, configuration mismatches are usually the culprit. Run through these checks before escalating:

- Verify the phone number in the dashboard points to the correct agent handler

- Confirm the agent responds to a direct test call before broader release

- For web widgets, inspect the embed code for the correct agent ID and endpoint URL

Frequently Asked Questions

Can ChatGPT do voice AI?

OpenAI's Realtime API enables speech-to-speech interactions using GPT-4o, but it's a closed, cloud-only service with token-based pricing ($32 per 1M audio input tokens, $64 per 1M output tokens) and no self-hosting option. Open-source alternatives give teams full control over data, voice customization, and deployment environment without usage-based billing surprises.

What is the difference between open source and proprietary voice AI platforms?

Open-source platforms allow self-hosting, model swapping, and full data control with no platform fees. Proprietary platforms offer faster setup but introduce vendor lock-in and usage-based billing that scales unpredictably. For regulated industries, proprietary APIs create compliance risks that self-hosted solutions eliminate entirely.

How long does it actually take to deploy an open source AI voice agent?

With a pre-built open-source orchestration platform and pre-obtained API keys, a basic agent can be running in 30 minutes. More complex agents with custom conversation flows, knowledge bases, or phone integrations typically take a few hours to a day, depending on workflow complexity and testing requirements.

What open source models can I use for speech-to-text in a voice agent?

The most widely used open options are OpenAI Whisper (MIT License, Tiny through Large-v3) and NVIDIA NeMo Conformer models. Deepgram also offers a self-hosted deployment (requires NVIDIA GPUs), though it is a commercial product rather than open source. For lowest latency, running Whisper on Groq's inference infrastructure, which delivers 164x–299x real-time speed, is a popular choice.

Do I need coding experience to build an open source voice agent?

Basic CLI comfort and the ability to edit a config file (TOML or JSON) are sufficient for getting a first agent running. More advanced flows with branching logic, webhooks, or custom integrations require scripting or API knowledge.

Can an open source voice agent be HIPAA or GDPR compliant?

Yes, provided the deployment is configured correctly. Self-hosting keeps all voice data within your own infrastructure, which removes third-party processors from scope, but you remain responsible for access controls, encryption, and audit logging. Platforms with built-in HIPAA BAA support make compliance easier to achieve and audit than relying on vendor certifications alone.