Introduction

On-premise multilingual voice AI deployment is technically demanding — requiring coordination across speech recognition models, language processing layers, telephony infrastructure, and compliance controls. Between 88% and 95% of enterprise AI pilots fail to reach production, with failures driven by integration gaps, poor data readiness, and escalating costs rather than model limitations.

This work is realistically suited to three types of teams:

- Enterprise IT or DevOps engineers experienced in containerized deployments

- Infrastructure engineers familiar with GPU-accelerated workloads

- Compliance teams with working knowledge of HIPAA, GDPR, or PCI DSS data residency obligations

The cost of getting this wrong is substantial. Latency spikes break conversational naturalness, and language detection failures under code-switching degrade user experience. Data leaving controlled infrastructure creates compliance exposure. Skipping integration sequencing leads to months of rework.

Organizations spend an average of 29.3 weeks moving from idea to production for generative AI projects — and that timeline compounds quickly when foundational decisions are made incorrectly. This guide walks through every layer of that decision-making, from infrastructure sizing to language model selection to compliance controls.

TL;DR

- On-premise deployment requires configuring a full pipeline: ASR, LLM, TTS, language detection, telephony, and integration layers

- Before deployment, confirm GPU-grade hardware, network isolation, compliance sign-off, and language-specific training data are all in place

- Deployment follows five phases: infrastructure setup, model configuration, telephony integration, agent workflow build, and staged rollout

- Validate per-language accuracy, sub-500ms latency, code-switching behavior, and escalation paths before full go-live

- Regulated industries benefit most — full data sovereignty, custom audit trails, and compliance-aligned architecture with zero external data exposure

Deployment Guide for On-Premise Multilingual Voice AI

This deployment process spans three to six weeks for a first production environment and involves infrastructure, model, telephony, and compliance workstreams running in parallel. Cutting corners in any one workstream — especially staging validation — routinely causes failures that take longer to diagnose than the time saved.

Prerequisites and System Readiness

Infrastructure readiness comes first. The system must support containerized deployment (Docker or Kubernetes), GPU compute for real-time inference, and isolated network segments for voice data. Most enterprise-grade on-prem setups require separate staging and production environments from day one — testing in a staging environment that differs from production (different server specs, different security rules) produces results that don't transfer.

Confirm which regulatory frameworks apply (HIPAA, GDPR, PCI DSS) and ensure data residency policies are documented and signed off before any language model or audio pipeline is configured. Voice data qualifies as biometric data under GDPR Article 9 when used for identification, triggering specific consent and deletion obligations.

Do not proceed with deployment if:

- Infrastructure is shared multi-tenant (data isolation cannot be guaranteed)

- Networks have packet loss above 1% (a commonly cited ceiling for acceptable VoIP quality; ITU-T G.114 addresses one-way delay rather than packet loss)

- Compliance review has not been completed across all supported language regions
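
The three stop conditions above can be expressed as a simple pre-deployment gate. This is a minimal sketch: `ReadinessReport`, its field names, and `deployment_blockers` are hypothetical names chosen for illustration, and the measurements are assumed to come from your own network and compliance tooling.

```python
from dataclasses import dataclass


@dataclass
class ReadinessReport:
    """Hypothetical summary of pre-deployment checks."""
    multi_tenant: bool           # True if infrastructure is shared with other tenants
    packet_loss_pct: float       # measured packet loss, in percent
    compliance_signed_off: bool  # review completed for all supported language regions


def deployment_blockers(report: ReadinessReport, max_loss_pct: float = 1.0) -> list[str]:
    """Return the reasons deployment should not proceed (empty list means go)."""
    blockers = []
    if report.multi_tenant:
        blockers.append("shared multi-tenant infrastructure")
    if report.packet_loss_pct > max_loss_pct:
        blockers.append(
            f"packet loss {report.packet_loss_pct:.1f}% exceeds {max_loss_pct:.1f}%"
        )
    if not report.compliance_signed_off:
        blockers.append("compliance review incomplete")
    return blockers


if __name__ == "__main__":
    print(deployment_blockers(ReadinessReport(False, 0.2, True)))
    print(deployment_blockers(ReadinessReport(True, 2.5, False)))
```

Returning a list of reasons rather than a single boolean makes it easier to show every outstanding blocker in one pass.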

Hardware and Software Requirements

With prerequisites confirmed, the next step is verifying that your hardware and software stack can sustain real-time multilingual inference at production load.

Hardware requirements for real-time multilingual inference:

| Component | Specification | Rationale |
|---|---|---|
| GPU | NVIDIA H100, A100, or L40S data-center GPUs | NVIDIA cites up to 30x faster LLM inference for H100 compared to the prior generation |
| VRAM | Minimum 10 GB per ASR instance | Whisper large-v3 (1.55B parameters) requires ~10 GB VRAM |
| RAM | 64 GB+ for concurrent sessions | Supports audio preprocessing and model loading |
| Storage | 500 GB+ SSD for model weights and logs | Faster read/write for real-time processing |
| Network | <1% packet loss, <100 ms jitter | Common VoIP quality targets (ITU-T G.114 itself covers one-way delay) |
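
The VRAM row translates into a rough count of ASR instances per GPU. The sketch below assumes the ~10 GB per-instance figure from the table and an illustrative 4 GB reserve for the CUDA context and other processes; `asr_instances_per_gpu` and `reserve_gb` are hypothetical names, and the reserve value is an assumption to replace with your own measurements.

```python
def asr_instances_per_gpu(
    gpu_vram_gb: float,
    per_instance_gb: float = 10.0,  # from the table: ~10 GB per Whisper large-v3 instance
    reserve_gb: float = 4.0,        # assumed headroom, not a vendor figure
) -> int:
    """Estimate how many ASR instances fit in one GPU's memory."""
    usable = gpu_vram_gb - reserve_gb
    if usable <= 0:
        return 0
    return int(usable // per_instance_gb)


if __name__ == "__main__":
    # 80 GB and 48 GB are the memory sizes of the H100/A100 80GB and L40S cards.
    for name, vram in [("80 GB card", 80), ("48 GB card", 48)]:
        print(name, asr_instances_per_gpu(vram))
```

This only bounds memory; compute throughput and the LLM and TTS components sharing the same GPU will usually lower the practical number.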

Core software components to source or configure:

- Multilingual ASR model (e.g., Whisper under MIT License)

- Large language model capable of multilingual instruction following

- Multilingual TTS engine

- Voice Activity Detection (VAD) layer

- Translation layer for non-English responses

- Telephony integration (SIP/WebRTC)

- Orchestration layer

Dograh AI ships as a self-hostable open-source platform with ASR, LLM, TTS, VAD, and telephony layers already integrated and configurable — eliminating the need to wire each component individually. SOC 2, HIPAA, GDPR, and PCI DSS compliance are built into the platform architecture.

Language-specific readiness:

- Sufficient acoustic training data exists for each target language

- Regional dialect variants have been identified (e.g., Brazilian vs. European Portuguese)

- Native-speaker validation resources are available before production testing

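Critical audio preprocessing note: Telephony audio typically uses the G.711 codec at 8 kHz, while modern ASR models expect 16 kHz wideband input. Upsampling is required to match the model's input rate, but it adds compute overhead and cannot restore the frequency content above 4 kHz that narrowband audio never captured. Accuracy can therefore still lag wideband input, and it degrades further if resampling is handled poorly.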
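
To make the preprocessing step concrete, here is a standard-library sketch of decoding G.711 audio and doubling its sample rate. Two assumptions are baked in: the trunk uses the mu-law variant of G.711 (A-law is the other variant and needs a different decoder), and plain linear interpolation stands in for the band-limited resampling filter a production pipeline would more likely use. The function names are illustrative.

```python
def ulaw_byte_to_pcm16(byte: int) -> int:
    """Decode one G.711 mu-law byte to a signed 16-bit linear PCM sample."""
    u = ~byte & 0xFF                      # mu-law bytes are stored bit-inverted
    exponent = (u >> 4) & 0x07            # 3-bit segment number
    mantissa = u & 0x0F                   # 4-bit step within the segment
    magnitude = (((mantissa << 3) + 0x84) << exponent) - 0x84
    return -magnitude if u & 0x80 else magnitude


def upsample_2x_linear(samples: list[int]) -> list[int]:
    """Double the sample rate (e.g. 8 kHz -> 16 kHz) by linear interpolation."""
    if not samples:
        return []
    out = []
    # Pair each sample with its successor; the last sample is paired with itself.
    for current, nxt in zip(samples, samples[1:] + samples[-1:]):
        out.append(current)
        out.append((current + nxt) // 2)  # midpoint between neighbours
    return out


if __name__ == "__main__":
    frame = bytes([0xFF, 0x80, 0x00])     # silence, max positive, max negative
    pcm_8k = [ulaw_byte_to_pcm16(b) for b in frame]
    pcm_16k = upsample_2x_linear(pcm_8k)
    print(pcm_8k, len(pcm_16k))
```

Listening to or plotting the spectrum of the upsampled audio is a quick way to check whether the resampler is introducing artifacts.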

With hardware validated and software components staged, deployment proceeds through five defined phases.

How to Deploy On-Premise Multilingual Voice AI: Step-by-Step

Deployment follows a defined five-phase sequence. Skipping or compressing any phase — particularly staging validation — is the most common cause of production failures in multilingual voice AI systems.

Phase 1 — Infrastructure provisioning:

- Deploy containerized environments using Docker or Kubernetes per your infrastructure policy

- Configure network isolation and set up separate staging and production namespaces

- Confirm GPU drivers and CUDA compatibility with selected model versions

- Establish logging and monitoring pipelines before models are loaded

Phase 2 — Model pipeline configuration:

- Load and configure ASR, LLM, TTS, VAD, and translation model components in sequence

- Establish the audio preprocessing chain (input PCM normalization, upsampling to 16 kHz)

- Configure language detection to operate mid-sentence for code-switching support

- Set confidence thresholds that trigger fallback or escalation behaviors per language
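
Per-language confidence thresholds can be kept in a small lookup table that drives fallback and escalation. The sketch below is illustrative only: the threshold values, the language codes, and the action names `proceed`, `reprompt`, and `escalate` are assumptions, not recommended settings.

```python
# (accept_threshold, retry_threshold) per language; values are hypothetical.
THRESHOLDS = {
    "en": (0.85, 0.60),
    "es": (0.80, 0.55),
}
# Stricter default for languages that have not been tuned yet (an assumption).
DEFAULT_THRESHOLDS = (0.90, 0.70)


def route(language: str, confidence: float) -> str:
    """Map an ASR or intent confidence score to the next conversational action."""
    accept, retry = THRESHOLDS.get(language, DEFAULT_THRESHOLDS)
    if confidence >= accept:
        return "proceed"    # confident enough to act on the result
    if confidence >= retry:
        return "reprompt"   # ask the caller to repeat or confirm
    return "escalate"       # hand off to a human agent


if __name__ == "__main__":
    print(route("en", 0.90), route("es", 0.60), route("pt", 0.80), route("en", 0.30))
```

Keeping the table as data rather than code lets each language's thresholds be tuned from staging results without touching the routing logic.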

Joint ASR and Language Identification architectures can reduce word error rate by 6.4–9.2% and language confusion by 53.9–56.1% while reducing memory footprints by up to 46%.

Phase 3 — Telephony and system integration:

- Connect the voice AI pipeline to your SIP trunk or WebRTC layer

- Integrate with CRM, authentication systems, ticketing platforms, and internal databases

- Enforce Unicode support across the integration layer to avoid encoding failures with non-Latin scripts

- Validate that entity extraction output maps correctly to downstream systems for each supported language
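
One common source of encoding failures is the same text arriving in different Unicode forms or being written with a non-UTF-8 encoding. A minimal sketch of normalizing extracted entities before they reach downstream systems follows; it assumes those systems accept UTF-8, and `normalize_entity` and `to_wire` are hypothetical helper names.

```python
import unicodedata


def normalize_entity(text: str) -> str:
    """Return the NFC-normalized, whitespace-trimmed form of an extracted entity."""
    # NFC composes sequences such as "e" + combining accent into one code point,
    # so the same name compares equal regardless of how it was typed or transcribed.
    return unicodedata.normalize("NFC", text).strip()


def to_wire(text: str) -> bytes:
    """Encode a normalized entity as UTF-8 for a CRM or ticketing payload."""
    return normalize_entity(text).encode("utf-8")


if __name__ == "__main__":
    decomposed = "Jose\u0301 "            # "e" followed by a combining acute accent
    print(normalize_entity(decomposed) == "Jos\u00e9")
    print(to_wire("東京"))
```

A round-trip test per supported script (write to the downstream system, read back, compare) catches most of these problems before production.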

Phase 4 — Agent workflow configuration:

- Define conversational flows, escalation logic, and per-locale tone and phrasing rules

- Build multilingual consent flows and audit logging as required by applicable compliance frameworks

- Configure warm transfer paths for each supported language so escalations to human agents preserve conversation context and language preference

Phase 5 — Staging validation:

- Test the full pipeline in an isolated staging environment using scripted calls in each supported language, including code-switching scenarios

- Validate latency end-to-end (target sub-500ms)

- Measure word error rate per language variant

- Confirm audit logs capture required data fields

- Stress-test concurrent session limits before any production traffic is moved over

Post-Deployment Validation and Testing

Skipping structured production validation means ASR degradation, latency regressions, and compliance logging gaps tend to reveal themselves only after real callers hit them — not before.

Latency and Responsiveness Checks

Measure end-to-end response latency per language in production conditions, not only in staging, accounting for codec compression and telephony packet variance. Conversational voice agents generally need end-to-end latency below the 500–800 ms range to maintain natural conversation flow.

Flag any language route where round-trip latency exceeds 500 ms, the strict end of that range. Crossing it signals that the route needs infrastructure optimization before you scale up its traffic.
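
A per-language latency check along these lines can be sketched as follows. The text does not say which statistic to compare against the 500 ms budget, so the choice of the 95th percentile (nearest-rank method) is an assumption; `p95` and `routes_over_budget` are illustrative names.

```python
def p95(samples_ms: list[float]) -> float:
    """Nearest-rank 95th percentile of a non-empty list of latencies."""
    if not samples_ms:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples_ms)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return ordered[rank - 1]


def routes_over_budget(
    latencies_by_language: dict[str, list[float]],
    budget_ms: float = 500.0,
) -> list[str]:
    """Return the language routes whose p95 latency exceeds the budget."""
    return sorted(
        lang
        for lang, samples in latencies_by_language.items()
        if samples and p95(samples) > budget_ms
    )


if __name__ == "__main__":
    measured = {
        "en": [300.0] * 20,
        "hi": [300.0] * 18 + [900.0] * 2,  # a slow tail on this route
    }
    print(routes_over_budget(measured))
```

A tail percentile is used instead of the mean because a route can average well under budget while a noticeable share of callers still experience long pauses.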

Per-Language Accuracy and Code-Switching Validation

Run representative test scenarios from real caller interactions in each supported language and across code-switching boundaries. Track three core metrics:

- Word error rate (WER)

- Intent recognition accuracy

- Fallback trigger rate

Industry benchmarks for acceptable ASR accuracy:

- 5–10% WER: Generally considered ready to use

- 20–30% WER: Optimization necessary

- Above 30% WER: Poor quality, unacceptable for production

- Clinical/regulated settings: Require 97–98%+ accuracy (roughly 2–3% WER or lower)

G.711 telephony compression at 8 kHz can nearly double WER compared to wideband audio, making codec configuration critical.
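
For reference, WER is the word-level edit distance between a reference transcript and the ASR output, divided by the number of reference words. The sketch below is a minimal implementation under one assumption: words are separated by whitespace. That does not hold for languages written without spaces, where character error rate is commonly used instead.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # previous[j] holds the edit distance between the reference words seen
    # so far and the first j hypothesis words.
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # match or substitution
            ))
        previous = current
    return previous[-1] / len(ref)


if __name__ == "__main__":
    print(word_error_rate("book a flight to madrid", "book flight to madrid"))
```

Computing this separately per language variant, on the same audio at 8 kHz and at wideband, shows how much of the error comes from the codec and how much from the model.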

Compliance and Audit Trail Verification

- Confirm all consent flows, transcript storage, and deletion workflows function correctly in each supported language

- Verify voice data is stored within designated infrastructure boundaries

- Ensure audit logs contain required fields for applicable regulatory frameworks (HIPAA requires access controls, audit controls, integrity controls, and transmission security)
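
Audit-field verification can be automated with a small completeness check run against sampled log records. The field names in this sketch are hypothetical placeholders; the actual required set should come from your compliance team's reading of the applicable frameworks, not from this example.

```python
# Hypothetical field set for illustration; replace with the fields your
# compliance review actually requires.
REQUIRED_FIELDS = {"timestamp", "actor_id", "action", "resource_id", "language"}


def missing_audit_fields(record: dict) -> set[str]:
    """Return the required fields that are absent or empty in one audit record."""
    return {
        field
        for field in REQUIRED_FIELDS
        if record.get(field) in (None, "")
    }


if __name__ == "__main__":
    complete = {
        "timestamp": "2024-01-01T00:00:00Z",
        "actor_id": "agent-7",
        "action": "transcript_read",
        "resource_id": "call-123",
        "language": "es",
    }
    print(missing_audit_fields(complete))
    print(sorted(missing_audit_fields({"timestamp": "2024-01-01T00:00:00Z", "action": ""})))
```

Running the check on records from every supported language route helps confirm that logging behaves the same way regardless of locale.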

Common On-Premise Deployment Problems and Fixes

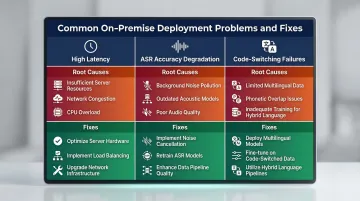

These three problems account for the majority of on-premise voice AI failures in production. Each has a distinct root cause and a targeted fix.

High Latency Breaking Conversational Naturalness

When end-to-end response time exceeds 700ms, callers talk over the agent or experience unnatural pauses that erode trust fast. The usual culprits: full-precision model inference running without quantization, translation layers adding sequential round-trips, or compute nodes not co-located with telephony infrastructure.

Fixes:

- Apply model quantization or distillation for inference components (INT8 quantization can cut memory roughly 4x relative to FP32 and accelerate inference by up to 2.35x with <1% WER degradation)

- Implement response caching for high-frequency prompts

- Review network topology to minimize round-trip distance between telephony and model inference nodes

- Co-locate GPU infrastructure directly with SIP trunks to eliminate external API calls
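
The memory effect of quantization follows from weight storage arithmetic. The sketch below uses the 1.55B parameter count cited earlier for Whisper large-v3 and counts weights only; activations, caches, and framework overhead are excluded, so real VRAM usage will be higher. The 4x ratio assumes a 32-bit float baseline; against a 16-bit baseline the reduction is 2x.

```python
def weight_memory_gb(parameters: float, bytes_per_weight: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    return parameters * bytes_per_weight / 1e9


PARAMS = 1.55e9  # Whisper large-v3 parameter count cited in the hardware table

fp32_gb = weight_memory_gb(PARAMS, 4)  # 32-bit floats: 4 bytes per weight
fp16_gb = weight_memory_gb(PARAMS, 2)  # 16-bit floats: 2 bytes per weight
int8_gb = weight_memory_gb(PARAMS, 1)  # 8-bit integers: 1 byte per weight

if __name__ == "__main__":
    print(f"FP32 {fp32_gb:.2f} GB, FP16 {fp16_gb:.2f} GB, INT8 {int8_gb:.2f} GB")
    print(f"FP32 -> INT8 reduction: {fp32_gb / int8_gb:.1f}x")
```

The same arithmetic applies to the LLM component, where the parameter count, and therefore the savings, is typically much larger.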

ASR Accuracy Degrading Across Accents or Dialects

Latency is often the first symptom teams notice — but accuracy is where deployments quietly lose users. Speech recognition accuracy drops sharply for regional accents or dialect variants even when the base language is technically supported.

Three causes typically combine here:

- Acoustic models trained primarily on standard dialect data

- Insufficient phonetic lexicon coverage for regional pronunciation variants

- Telephony codec compression (8 kHz) degrading audio quality before it reaches the ASR model

Fixes:

- Expand phonetic lexicons for identified regional variants

- Augment training data with real interaction samples (synthetic TTS data can supplement where real samples are sparse)

- Test audio preprocessing steps to ensure upsampling is not introducing artifacts

Code-Switching Causing Intent Recognition Failures

Accent and dialect degradation are manageable with model tuning. Code-switching — callers shifting languages mid-sentence — introduces a harder structural problem. Callers who do this often receive incorrect intent classifications or trigger hard error states entirely.

The core issue is twofold: ASR models that are monolingual by design cannot handle mixed-language input, and language detection typically operates at the utterance level rather than mid-sentence.

Fixes:

- Configure real-time language detection that operates sub-sentence

- Build fallback conversation paths for ambiguous or mixed-language input

- Train dialogue understanding models on code-switched examples from real interaction data (CS-FLEURS dataset covers 113 unique code-switched language pairs)
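
To illustrate what sub-sentence detection produces, the toy sketch below tags each word by its Unicode script and groups consecutive words into segments. This is a deliberately simplified stand-in, not a real language identifier: it can only separate languages written in different scripts (for example Hindi in Devanagari and English), and it cannot distinguish Spanish from English. A production system would use a trained language identification model at this step. All function names are illustrative.

```python
import unicodedata


def script_of(word: str) -> str:
    """Return the script of the first letter in a word, e.g. LATIN or DEVANAGARI."""
    for ch in word:
        if ch.isalpha():
            # Unicode character names start with the script name.
            return unicodedata.name(ch, "UNKNOWN").split()[0]
    return "UNKNOWN"


def segment_by_script(utterance: str) -> list[tuple[str, str]]:
    """Group consecutive words that share a script into (script, text) segments."""
    segments: list[tuple[str, str]] = []
    for word in utterance.split():
        script = script_of(word)
        if segments and segments[-1][0] == script:
            segments[-1] = (script, segments[-1][1] + " " + word)
        else:
            segments.append((script, word))
    return segments


if __name__ == "__main__":
    for script, text in segment_by_script("please book करो tomorrow"):
        print(script, "->", text)
```

The output shape, a list of labeled spans within one utterance, is what downstream intent models need in order to handle mixed-language input without falling into an error state.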

Pro Tips for Effective On-Premise Multilingual Voice AI Deployment

These operational practices address the failure points that consistently catch on-premise multilingual deployments off guard — before they become production incidents.

Capacity plan for at least 3x your initial volume estimates. Multilingual workloads spike unevenly across languages based on business hours and seasonal demand. Your containerized architecture must scale horizontally without requiring redeployment — build that headroom in from day one.
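
The 3x headroom rule turns into a replica count once you know how many concurrent sessions one inference replica sustains. The sketch below assumes that figure comes from your own stress tests; `replicas_needed` and its parameters are hypothetical names.

```python
import math


def replicas_needed(
    estimated_peak_sessions: int,
    sessions_per_replica: int,   # measured in stress testing, not a vendor figure
    headroom: float = 3.0,       # the 3x planning multiplier
) -> int:
    """Number of inference replicas needed to serve the planned peak load."""
    if sessions_per_replica <= 0:
        raise ValueError("sessions_per_replica must be positive")
    planned_sessions = estimated_peak_sessions * headroom
    return max(1, math.ceil(planned_sessions / sessions_per_replica))


if __name__ == "__main__":
    # 40 estimated peak sessions, 16 sessions per replica in this example.
    print(replicas_needed(40, 16))
```

Running the calculation per language route, rather than once for the total, exposes routes whose peaks fall at different hours and can share capacity.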

Keep test and production environments structurally identical. Mismatched staging environments are the most common source of go-live compliance failures: any validation run against different server specs or security rules produces results that don't transfer to production.

Version language-specific assets separately from core conversation logic. Tone guides, per-locale phrasing rules, confidence thresholds, and escalation paths should each be versioned independently. This lets you update, retrain, or roll back individual language deployments without touching other language routes or the underlying model pipeline.

Conclusion

On-premise multilingual voice AI deployment quality is determined by infrastructure decisions made in the first two weeks. Hardware provisioning, compliance framework alignment, and model pipeline architecture choices create technical debt or capability headroom that shapes every subsequent update and language expansion.

Building that headroom requires discipline from the start. A phased, validation-first approach keeps expansion manageable:

- Start with two or three high-volume languages before scaling to additional ones

- Validate each language fully — accuracy, latency, and fallback behavior — before moving to the next

- Implement per-language monitoring from day one so performance regressions surface in dashboards, not in caller complaints

Teams that follow this pattern consistently outpace those who attempt broad multi-language rollouts upfront. The infrastructure investment compounds: each validated language adds a tested template for the next.

Frequently Asked Questions

What hardware is required to run multilingual voice AI on-premises?

GPU-accelerated servers are essential for real-time multilingual inference. Data-center-grade GPUs like NVIDIA H100, A100, or L40S are required, with at least 10 GB VRAM per ASR instance. Exact specifications depend on concurrent session volume and model size.

Can on-premise multilingual voice AI handle real-time code-switching mid-conversation?

Yes, but this requires ASR models trained on mixed-language input and sub-sentence language detection. Most default ASR configurations are monolingual. Handling mid-sentence language shifts requires joint ASR and Language Identification architectures configured specifically for that purpose.

How do I ensure HIPAA or GDPR compliance when deploying multilingual voice AI on-prem?

Voice data counts as biometric data under GDPR when it is processed to identify a person, so data residency controls, per-language consent flows, audit logging, and deletion workflows must be built into the architecture from the start. HIPAA mandates access controls, audit controls, integrity protections, and transmission security. Neither framework's requirements are practical to retrofit after deployment.

What latency is achievable with an on-premise multilingual voice AI system?

Sub-500 ms end-to-end latency is achievable with proper GPU infrastructure and quantized models. However, latency varies by language and telephony codec configuration. Each language route must be validated separately in production, as codec handling (such as upsampling G.711 8 kHz audio) and network topology both affect performance.

How many languages can an on-premise multilingual voice AI system realistically support?

The practical limit depends on available acoustic training data and hardware capacity. High-resource languages (English, Spanish, Mandarin) deploy well with standard models, while low-resource languages require additional fine-tuning effort and native-speaker validation. Start with 2-3 languages and expand based on validated performance.

What is the key difference between on-premise and cloud-based multilingual voice AI deployment?

On-prem delivers full data sovereignty, custom security controls, and no vendor dependency, though it requires in-house infrastructure management. Cloud platforms reduce operational overhead but introduce data residency risks and platform lock-in that 94% of IT leaders are concerned about. For regulated industries, that trade-off is typically not viable.