This isn't a task reserved for large engineering teams. Support ops managers, product teams, and developers with access to a modern AI platform can deploy functional multilingual agents in days rather than months—provided they understand the prerequisites and avoid the common shortcuts that lead to expensive rework.

This guide walks through the complete deployment process: prerequisites, platform selection, step-by-step configuration, validation, and common pitfalls. Follow this roadmap to launch a working multilingual agent as quickly as possible.

TL;DR

- Multilingual AI agents detect language automatically, hold context across language switches, and adapt tone per culture — not just translate.

- Start with 2–3 languages and one core use case (order status, appointment booking), then expand after validating the rollout.

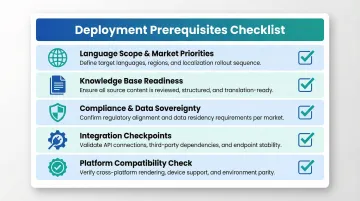

- Define language scope, knowledge base readiness, and integration points before configuration begins — prerequisites determine deployment speed.

- Real multilingual test scenarios catch cultural errors that internal QA consistently misses.

- Platforms with native multilingual NLP, no-code builders, and built-in compliance certifications deploy faster than custom-built alternatives.

What You Need Before Deploying a Multilingual AI Support Agent

Skipping prerequisites is the primary reason multilingual deployments either stall or go live broken. This section establishes the foundation for a fast, correct rollout.

Prerequisites

Language scope and market priorities: Analyze support ticket data, website traffic by region, and purchase geography to identify which 2–3 languages to prioritize first. English, Spanish, German, French, and Italian are the most common support languages globally, but the fastest-growing demand is in Tagalog (28% YoY growth), Arabic (25%), and Hindi (22%). Launching with 10 languages at once multiplies your exposure to tokenizer inefficiencies and English-centric reasoning failures, which account for 70–80% of non-English performance issues.
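
One way to turn ticket data into a language shortlist is a plain frequency count. The sketch below assumes each exported ticket carries a `language` field; that field name and the sample records are hypothetical, so adapt them to whatever your ticketing export actually provides.

```python
from collections import Counter

def prioritize_languages(tickets, max_languages=3):
    """Return the highest-volume ticket languages, most common first.

    `tickets` is assumed to be a list of dicts with a "language" key;
    the schema is illustrative, not a real ticketing-system format.
    """
    counts = Counter(t["language"] for t in tickets if t.get("language"))
    return [lang for lang, _ in counts.most_common(max_languages)]

# Hypothetical sample export
sample_tickets = [
    {"id": 1, "language": "es"},
    {"id": 2, "language": "en"},
    {"id": 3, "language": "es"},
    {"id": 4, "language": "de"},
    {"id": 5, "language": "en"},
    {"id": 6, "language": "es"},
]
print(prioritize_languages(sample_tickets, max_languages=2))
```

Ticket volume is only one of the signals named above; regional traffic and purchase geography can be counted the same way and compared side by side.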

Knowledge base readiness: "Ready" means structured FAQ content, help articles, and policy documents that are accurate, chunked into focused sections (not long PDFs), and tagged by topic. Break content into 200–400 word focused articles with clear headings. Recursive chunking paired with TF-IDF weighted embeddings yields 82.5% precision in retrieval systems, compared to 73.3% for semantic-only approaches.
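
As a rough illustration of keeping articles within a word budget, the sketch below packs paragraphs greedily into chunks of at most 400 words. It is a simplified stand-in, not the recursive chunking with TF-IDF weighted embeddings cited above, and the function name is an assumption.

```python
def chunk_article(text, max_words=400):
    """Greedily pack paragraphs into chunks of at most `max_words` words.

    A single paragraph longer than `max_words` becomes its own oversized
    chunk; this sketch does not split inside paragraphs.
    """
    chunks, current, current_len = [], [], 0
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        n_words = len(para.split())
        # Close the current chunk before it would exceed the budget.
        if current and current_len + n_words > max_words:
            chunks.append("\n\n".join(current))
            current, current_len = [], 0
        current.append(para)
        current_len += n_words
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

Splitting on paragraph boundaries keeps each chunk readable on its own, which matters when the chunk is later shown to the model as retrieved context.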

Compliance and data sovereignty requirements: Regulated industries (healthcare, finance, legal) must determine upfront whether the platform needs to be HIPAA-compliant, GDPR-compliant, or self-hosted. Italy's Garante fined OpenAI €15 million for GDPR violations regarding AI training data. These requirements affect platform selection, deployment model, and where customer conversation data is stored. Self-hosted deployments — where your data never leaves your infrastructure — are the lowest-risk path for regulated industries.

Integration checkpoints: List the systems the agent will connect to—CRM, ticketing platform, product catalog, order management—and confirm API access exists before build begins. Missing integrations are the most common cause of delayed go-lives. Document required data fields and authentication methods.

Platform compatibility check: Confirm the chosen AI platform natively supports multilingual NLP (not just translation bolted on), automatic language detection, mid-conversation language switching, and the deployment channel (web chat, voice, WhatsApp) your customers actually use.

How to Deploy a Multilingual AI Support Agent Step-by-Step

Deployment follows a defined sequence: platform setup, knowledge base configuration, language and conversation logic, integration, and testing. Skipping steps in the middle creates quality problems that are expensive to fix after launch.

Step 1: Choose a Platform Built for Multilingual Support

Look for native multilingual NLP (not translation-layer-only), real-time language detection, support for mid-conversation language switching, pre-integrated AI models, and flexible deployment options (cloud or self-hosted for compliance-sensitive environments).

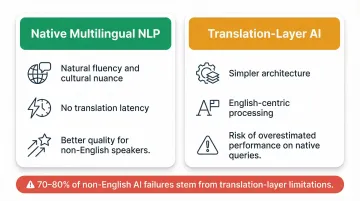

Native vs. translation-layer architectures:

| Criteria | Native Multilingual NLP | Translation-Layer AI |
|---|---|---|
| Core Strengths | Natural fluency, cultural nuance, no translation latency | Simpler architecture, one core language model |
| Quality Impact | Better quality for non-English speakers | Processes everything in English, uses translation APIs |
| Failure Modes | Tokenizer inefficiencies, English-centric reasoning (70–80% of failures) | Translated test sets overestimate performance on noisy native queries |

Platforms like Dograh AI offer agents deployable in minutes, no-code/low-code workflow builders, and built-in HIPAA/GDPR compliance with self-hosting options—cutting deployment timelines from weeks to days compared to building custom.

Step 2: Structure Your Multilingual Knowledge Base

Two main approaches exist:

Single-language source with dynamic translation at query time:

- Faster to maintain

- Lower overhead

- Risk: Quality-Aware Translation Tagging research shows translation layers can introduce entity hallucination rates of 11.5%

Parallel content in each target language:

- Better accuracy for high-volume markets

- Culturally appropriate phrasing

- Higher maintenance cost

Specific guidance:

- Break content into 200–400 word focused articles

- Tag by category and language

- Use clear headings

- Protect product names/SKUs from translation

- Add brief summaries after headings to improve semantic coverage
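
Protecting product names and SKUs from translation can be sketched as a mask-translate-unmask step. The SKU pattern, placeholder format, and function names below are assumptions for illustration; a real translation engine may alter placeholders, so test the round trip with the engine you use.

```python
import re

# Hypothetical SKU format such as "AB-1234"; adjust to your catalog.
SKU_PATTERN = re.compile(r"\b[A-Z]{2,4}-\d{3,6}\b")

def mask_entities(text, protected_terms=()):
    """Swap SKUs and protected names for placeholders; return (masked, mapping)."""
    mapping = {}

    def _stash(match):
        token = f"[[ENT{len(mapping)}]]"
        mapping[token] = match.group(0)
        return token

    masked = SKU_PATTERN.sub(_stash, text)
    for term in protected_terms:
        masked = re.sub(re.escape(term), _stash, masked)
    return masked, mapping

def unmask_entities(text, mapping):
    """Restore the original entities after translation."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text
```

The masked text is what would be sent to the translation step; `unmask_entities` is applied to the translated result before it reaches the customer.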

Step 3: Configure Language Detection and the System Prompt

The system prompt is where most teams underinvest. It must explicitly instruct the agent on:

- How to detect and respond in the user's language

- What to do when language is unclear or mixed (code-switching like Hinglish or Spanglish)

- How to maintain formality levels appropriate to each language (Japanese and Korean expect more formal tone than American English)

A well-structured multilingual system prompt includes:

- Language detection logic that evaluates every message, not just the first

- Fallback logic to maintain previously detected language when a message is too short to classify

- Cultural tone expectations per language (e.g., Japanese omotenashi requires formal keigo and avoiding direct "no" responses)

- Explicit rules for which phrases to avoid and how to handle regional variants (Brazilian vs. European Portuguese, Castilian vs. Latin American Spanish)
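
The elements above can be assembled into a prompt programmatically so that tone rules live in one place. This is a minimal sketch: the wording of each instruction, the `ExampleCo` brand, and the mixed-language rule are illustrative assumptions, not a prompt prescribed by any platform.

```python
# Illustrative tone rules; replace with your own per-language style guide.
STYLE_GUIDE = {
    "ja": "Use formal keigo and avoid a direct 'no'.",
    "pt-BR": "Use Brazilian Portuguese vocabulary, not European Portuguese.",
    "en-US": "Use a less formal tone than in Japanese or Korean.",
}

def build_system_prompt(style_guide, brand="ExampleCo"):
    """Assemble a multilingual system prompt from per-language tone rules."""
    lines = [
        f"You are a customer support agent for {brand}.",
        "Detect the language of every message, not just the first, and reply in it.",
        "If a message is too short to classify, keep using the previously detected language.",
        # Assumption: one possible policy for code-switched input.
        "If the customer mixes languages, reply in the language that dominates the message.",
        "Never translate product names, SKUs, or order IDs.",
        "Tone rules by language:",
    ]
    lines += [f"- {code}: {rule}" for code, rule in sorted(style_guide.items())]
    return "\n".join(lines)

print(build_system_prompt(STYLE_GUIDE))
```

Keeping the rules in a dictionary means adding a language is a data change rather than a prompt rewrite.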

Step 4: Set Up Conversation Flows and Human Handoff Logic

Even with AI handling language processing, conversation flows for common support scenarios (order lookups, returns, appointment booking, escalations) need mapping before deployment. These flows should be language-agnostic at the logic level, with the AI handling language at the output layer.

Human handoff triggers:

- Customer explicitly requests a human

- Sentiment turns negative

- Issue is unresolvable by the agent

- Confidence falls below 60–70% (hard floor at 40%)

- Conversation loops without resolution

Critical handoff requirement: Pass full conversation context and the customer's detected language to the receiving agent. "Invisible handoffs" that preserve context keep CSAT within 5 points of fully human interactions, while forcing customers to repeat themselves drives 54% of them to give up on getting help.
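
The triggers and the context-passing requirement can be expressed as two small functions. This is a sketch under stated assumptions: the sentiment and confidence scales, the field names, and the reading of "hard floor" as a lower bound on the tunable threshold are all illustrative choices.

```python
from dataclasses import dataclass

@dataclass
class TurnState:
    user_requested_human: bool = False
    sentiment: float = 0.0       # assumed scale: -1 (negative) to 1 (positive)
    confidence: float = 1.0      # assumed scale: 0 to 1
    unresolved_loops: int = 0    # times the same question came back unanswered
    resolvable: bool = True

HARD_FLOOR = 0.40  # assumption: the threshold may be tuned, but never below this

def handoff_reasons(state, confidence_threshold=0.65, sentiment_floor=-0.3, max_loops=2):
    """Return the triggers that fired; an empty list means no handoff."""
    threshold = max(confidence_threshold, HARD_FLOOR)
    checks = [
        ("requested_human", state.user_requested_human),
        ("negative_sentiment", state.sentiment < sentiment_floor),
        ("unresolvable", not state.resolvable),
        ("low_confidence", state.confidence < threshold),
        ("looping", state.unresolved_loops >= max_loops),
    ]
    return [name for name, fired in checks if fired]

def build_handoff_payload(transcript, detected_language, reasons):
    """Bundle what the human agent needs so the customer does not repeat themselves."""
    return {"language": detected_language, "reasons": reasons, "transcript": list(transcript)}
```

Returning the list of reasons, rather than a bare boolean, gives the receiving agent and your logs a record of why the escalation happened.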

Step 5: Integrate with Core Business Systems

Without system integrations, your agent can hold a conversation but can't resolve anything. Connect to:

- CRM to pull customer history and personalize responses

- Ticketing system to create and update support cases in real time

- Backend data sources for live order, inventory, and appointment lookups

Security and compliance best practice: Pull only the data the agent needs for each conversation, and don't store it beyond the session. This is both a security best practice and a compliance requirement under GDPR/HIPAA. Adopt field-level redaction so agents see necessary context while PII is masked.
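
A minimal sketch of data minimization and redaction follows. The regular expressions are deliberately simple assumptions that will miss some formats and may over-match long digit strings such as order numbers; production systems usually rely on a dedicated PII detection service.

```python
import re

# Simplified patterns for illustration only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def redact(text):
    """Mask email addresses and phone-like numbers in free text."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)

def select_fields(record, allowed):
    """Keep only the fields the agent needs for this conversation."""
    return {key: value for key, value in record.items() if key in allowed}
```

`select_fields` is applied when pulling a CRM record, and `redact` when writing transcripts to logs, so masked data is all that persists after the session.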

Step 6: Test Across Target Languages Before Go-Live

Once integrations are confirmed, the final gate before launch is testing—and it must cover every supported language, not just English.

What to test:

- Language detection accuracy (including short messages and mixed-language input)

- Response accuracy and tone per language

- Correct formality levels

- Entity preservation (product names, order IDs shouldn't get mangled in translation)

- Escalation triggers

Critical requirement: Have at least one native speaker review agent responses per language before launch. ISO 17100 standards require translators to be native speakers of the target language. Automation cannot fully understand tone, nuance, or cultural appropriateness—sentences can pass automated checks but sound unnatural to native speakers.
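
Entity preservation is one of the few checks on this list that automates cleanly. The sketch below compares a customer message with the agent's reply and reports any identifiers that went missing; the order ID format and function name are hypothetical.

```python
import re

# Hypothetical order ID format: two letters followed by six digits.
ORDER_ID = re.compile(r"\b[A-Z]{2}\d{6}\b")

def missing_entities(source_text, response_text, protected_terms=()):
    """Return entities present in the source but absent from the response."""
    expected = set(ORDER_ID.findall(source_text))
    expected.update(t for t in protected_terms if t in source_text)
    return sorted(e for e in expected if e not in response_text)

question = "Where is order AB123456 for my AcmePro?"
reply = "Su pedido AB123456 de Acme Pro llega pronto."
print(missing_entities(question, reply, ["AcmePro"]))
```

A check like this complements native-speaker review; it cannot judge tone or naturalness.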

Post-Deployment Checks and Validation

Multilingual agents often pass internal testing cleanly, then stumble on real customers using regional dialects, slang, or unusual query patterns. A limited rollout catches these gaps before they affect your full user base.

Soft launch protocol: Deploy to a subset of users first (employees, loyal customers, or a single region). Monitor conversation logs, resolution rates, and escalation frequency by language during this phase before expanding.
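
If the subset is defined as a percentage of users rather than a named group, a deterministic hash keeps each user consistently in or out of the pilot. This is one possible gating technique, not something the protocol above prescribes; the salt and function name are assumptions.

```python
import hashlib

def in_soft_launch(user_id, rollout_percent, salt="multilingual-agent-v1"):
    """Deterministically assign a user to the pilot group.

    The same user always lands in the same bucket (0-99), so raising
    `rollout_percent` only ever adds users to the pilot.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 100
    return bucket < rollout_percent
```

Changing the salt reshuffles the buckets, which is useful when a later pilot should reach a different set of users.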

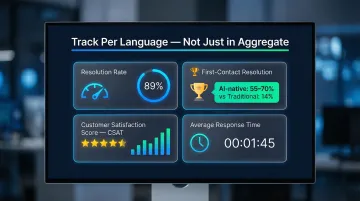

Key metrics to track by language:

- Resolution rate (conversations resolved without human handoff)

- First-contact resolution

- Customer satisfaction score (CSAT)

- Average response time

AI-native platforms achieve 55–70% FCR rates, compared to 14% for traditional self-service. Track these metrics per language — not just in aggregate — to identify exactly where to focus improvement effort.
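
Per-language tracking amounts to grouping conversation logs by language before averaging. The sketch below covers resolution rate, escalation rate, and CSAT; the log field names are hypothetical, and first-contact resolution and response time would follow the same pattern.

```python
from collections import defaultdict

def metrics_by_language(conversations):
    """Compute per-language rates from conversation log records.

    Each record is assumed to have "language", "resolved_by_agent",
    "escalated", and an optional "csat" score.
    """
    grouped = defaultdict(list)
    for conv in conversations:
        grouped[conv["language"]].append(conv)

    report = {}
    for lang, convs in grouped.items():
        total = len(convs)
        rated = [c["csat"] for c in convs if c.get("csat") is not None]
        report[lang] = {
            "conversations": total,
            "resolution_rate": sum(c["resolved_by_agent"] for c in convs) / total,
            "escalation_rate": sum(c["escalated"] for c in convs) / total,
            "avg_csat": sum(rated) / len(rated) if rated else None,
        }
    return report
```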

Signs of incorrect or incomplete deployment:

- Responses in the wrong language

- Entity names getting mistranslated

- Conversations looping without resolution

- Escalation rates significantly higher in one language than another

Each symptom points to a specific upstream cause — a misconfigured system prompt, a gap in the knowledge base, or a model limitation for that language. Diagnosing by symptom speeds up fixes considerably before you expand to the next region.
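
The "escalation rate significantly higher in one language" symptom can be flagged automatically. In this sketch the baseline is the best-performing language and the 10-point tolerance is an arbitrary assumption; pick a margin that fits your traffic volume.

```python
def flag_escalation_outliers(escalation_rates, tolerance=0.10):
    """Return languages whose escalation rate exceeds the lowest rate by more than `tolerance`."""
    if not escalation_rates:
        return []
    baseline = min(escalation_rates.values())
    return sorted(
        lang for lang, rate in escalation_rates.items() if rate - baseline > tolerance
    )

print(flag_escalation_outliers({"en": 0.12, "es": 0.15, "ja": 0.31}))
```

A flagged language is a prompt to read its conversation logs, not a diagnosis in itself.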

Common Deployment Problems and How to Fix Them

Language Misdetection for Short or Mixed-Language Inputs

Problem: The agent defaults to the wrong language when a customer opens with "Hi" or mixes two languages in a single sentence.

Likely cause: Language detection is configured at session start only, not per message; or the model lacks handling for code-switched input.

High-level fix:

- Configure language detection to evaluate every message, not just session start

- Use an NLP model that handles multilingual and mixed-language inputs natively

- Set fallback logic to hold the previously detected language when a message is too short to classify

- Fine-tune language identification models with accented English samples via low-rank adaptation — this measurably improves code-switched detection
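
Per-message detection with a hold-previous fallback can be sketched as a small stateful tracker. The `toy_detect` function below is a crude stopword matcher standing in for a real language identification model; it and the tiny word lists are assumptions made so the example runs without external dependencies.

```python
# Tiny illustrative vocabularies; a real detector would be a trained model.
STOPWORDS = {
    "en": {"the", "is", "my", "where", "order", "and"},
    "es": {"el", "la", "mi", "dónde", "está", "pedido", "y"},
}

def toy_detect(text):
    """Rough stand-in for a language-ID model: pick the best stopword overlap."""
    words = set(text.lower().replace("?", " ").replace("¿", " ").split())
    scores = {lang: len(words & vocab) for lang, vocab in STOPWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None

class LanguageTracker:
    def __init__(self, detect=toy_detect, default="en", min_words=2):
        self.detect = detect
        self.current = default
        self.min_words = min_words

    def update(self, message):
        """Re-evaluate on every message; keep the previous language if unsure."""
        if len(message.split()) >= self.min_words:
            detected = self.detect(message)
            if detected:
                self.current = detected
        return self.current
```

Because the detector is injected through the `detect` parameter, the fallback logic stays the same when the toy matcher is replaced with a production model.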

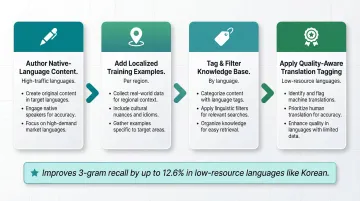

Knowledge Base Gaps Producing Off-Target Responses

Problem: The agent gives generic, low-quality, or irrelevant answers in specific languages, even though it performs well in the primary language.

Likely cause: The knowledge base was built in one language and relies on real-time translation, but the translation layer loses critical context or nuance for that specific language pair.

High-level fix:

- Author or review native-language content for high-traffic languages rather than relying on auto-translation

- Add localized training examples that reflect how customers in each region actually phrase requests

- Tag and filter knowledge base content by language to sharpen RAG retrieval precision

- Where translation is unavoidable, consider Quality-Aware Translation Tagging, which improves 3-gram recall by up to 12.6% in low-resource languages like Korean
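
Filtering by language tag before ranking is the core of the third fix. The sketch below uses naive keyword overlap as a placeholder for embedding similarity, and the article schema is hypothetical.

```python
def retrieve(query_terms, articles, language, top_k=3):
    """Filter articles by language tag, then rank by keyword overlap.

    Keyword overlap is a stand-in for a real similarity score; the point
    of the sketch is that filtering happens before ranking.
    """
    candidates = [a for a in articles if a["language"] == language]
    terms = {t.lower() for t in query_terms}
    scored = [(len(terms & set(a["text"].lower().split())), a) for a in candidates]
    scored = [(score, a) for score, a in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [a["id"] for _, a in scored[:top_k]]
```

With the filter in place, a Spanish query can never surface an English article, even if the English text happens to score higher.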

Compliance Issues Surfacing Post-Deployment

Problem: The legal or security team flags that customer conversation data is being processed or stored in regions that conflict with GDPR, HIPAA, or local data residency laws.

Likely cause: Compliance requirements weren't mapped to deployment architecture during the prerequisites phase. Cloud-hosted platforms may store data in regions not permitted for specific customer types.

High-level fix:

- Switch to a self-hosted or on-premise deployment model — this resolves most data residency conflicts directly

- Confirm the platform holds the certifications your use case requires (SOC 2, GDPR, HIPAA) and verify where conversation logs are stored and for how long

- Replace identifiable data with anonymized or pseudonymized alternatives immediately after collection
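
Pseudonymization is commonly done with a keyed hash, so the same customer maps to the same token without the raw identifier being stored. This is a minimal sketch using Python's standard `hmac` module; the truncation length and key handling are assumptions, and the key itself must be stored separately from the data.

```python
import hashlib
import hmac

def pseudonymize(value, secret_key):
    """Return a stable keyed hash of an identifier.

    `secret_key` must be bytes and kept outside the dataset; anyone
    holding it can link pseudonyms back to known identifiers.
    """
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]  # truncated for readability (assumption)
```

Unlike full anonymization, this keeps records joinable across conversations, which is why the result is still treated as personal data under GDPR.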

These three issues account for most post-launch rollbacks in multilingual deployments. Catching them during setup, rather than after go-live, cuts remediation time substantially.

Pro Tips for a Faster, Smarter Multilingual Rollout

Three practices consistently separate fast, reliable multilingual rollouts from slow, painful ones:

- Start with 2–3 priority languages and one focused use case — order tracking, appointment booking — before expanding. Validate quality and build internal confidence first. Most successful rollouts grow incrementally.

- Run AI-to-AI testing rather than relying solely on manual QA. Platforms with built-in simulation frameworks (Dograh AI's LoopTalk, for example) surface conversation edge cases across language variants that human reviewers routinely miss at scale.

- Maintain a per-language style guide covering formality level, cultural tone, phrases to avoid, and regional variant handling — Brazilian vs. European Portuguese, Castilian vs. Latin American Spanish. Every future update or language addition costs significantly less time when these rules are already documented.

Conclusion

The quality of a multilingual AI support agent's deployment directly determines whether it reduces support overhead or creates new problems. Deployments that skip prerequisites, rush knowledge base setup, or avoid per-language testing typically underperform and require expensive rework: 74% of enterprise CX AI programs fail to deliver.

The approach that works is straightforward: define your scope before you build, validate thoroughly before you scale, and treat multilingual deployment as an ongoing process rather than a one-time launch.

Platforms with pre-integrated AI models and compliance-ready architectures — covering HIPAA, GDPR, and SOC 2 — have compressed deployment timelines significantly. But speed only holds when teams invest upfront in the decisions that prevent rework: language scope, knowledge base quality, and per-language testing protocols.

Frequently Asked Questions

How fast can I build an AI agent?

With modern platforms offering pre-integrated AI models and no-code workflow builders, a basic agent can be configured and deployed in a few hours to a couple of days. Multilingual agents take longer primarily due to knowledge base preparation and per-language testing, not platform setup.

How do I integrate AI into customer service?

Integration starts with connecting the AI agent to systems your support team already uses: CRM, ticketing platform, and knowledge base. Define conversation flows and escalation rules before deploying to customer-facing channels. Done well, this setup positions AI-native platforms to resolve the majority of inquiries without human escalation.

Which AI tools offer multilingual support?

Several platforms offer multilingual AI support agents, including Dograh AI (open-source, self-hostable, compliance-ready), as well as cloud-based options. The key differentiator is whether multilingual capability is native to the NLP engine or added as a translation layer—native support performs significantly better.

What is multilingual AI customer support?

Multilingual AI customer support is distinct from translation. The agent detects a customer's language automatically, understands intent and tone in that language, and retains conversational context even if the language shifts mid-conversation. Responses are culturally appropriate, not just word-for-word conversions.

What is the difference between a multilingual AI agent and a translation tool?

A translation tool converts words from one language to another without understanding context, intent, or emotion. A multilingual AI agent understands what the customer means, maintains conversation memory, handles mixed-language input, and adapts tone per culture. Customers notice the difference from the first interaction.

How do I test a multilingual AI agent before launch?

Test each supported language separately with real customer scenarios, checking language detection accuracy, response tone, entity preservation (names, order numbers, dates), and escalation triggers. Have at least one native speaker per language review responses before go-live—this catches cultural errors that automated testing misses entirely.