AI agents are no longer the exclusive domain of developers. No-code platforms have made it possible to build and deploy agents that plan, reason, and act without writing a single line of code. This guide covers exactly what separates a true AI agent from basic automation, who should build one, what to prepare, the step-by-step build process, the variables that make or break performance, and the most common mistakes that cause agents to underdeliver.

TL;DR

- No-code AI agents use visual interfaces and natural language prompts to automate multi-step workflows

- Performance depends on clear goal definition, prompt quality, and platform suitability

- Real AI agents reason and adapt; rule-based if-then automation is not the same thing

- Regulated industries must verify platform compliance certifications before connecting live customer or patient data

- Start with one high-repetition task, not complex multi-agent systems

How to Build AI Agents Without Coding: Step by Step

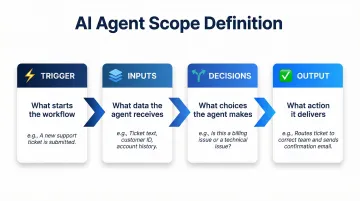

Step 1: Define the Agent's Goal and Scope

Write a one-sentence mission statement for your agent. For example: "Answer inbound customer calls and book appointments" or "Qualify real estate leads and route hot prospects to sales." Vague goals produce vague agents—specificity here directly determines output quality.

Next, identify four critical elements:

- Trigger — What starts the workflow (incoming call, form submission, scheduled time)

- Inputs — What data the agent receives (caller information, form fields, CRM records)

- Decisions — What choices the agent must make (qualify vs. disqualify, escalate vs. resolve)

- Output — What action it delivers (booked appointment, ticket created, call transferred)

Confirm the task is repetitive and well-defined. If the process changes often or regularly requires human judgment calls, build in checkpoints where the agent hands off to a person.
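
One way to pin down the four elements before opening any platform is to write them out as a small structured spec. The sketch below is illustrative Python only, not the format of any no-code tool; the `AgentSpec` class, its field names, and the `missing_fields` helper are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AgentSpec:
    """Hypothetical planning record; not tied to any no-code platform."""
    mission: str
    trigger: str
    inputs: list[str]
    decisions: list[str]
    output: str
    handoff_checkpoints: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Return the names of required elements that were left empty."""
        required = {
            "mission": self.mission,
            "trigger": self.trigger,
            "inputs": self.inputs,
            "decisions": self.decisions,
            "output": self.output,
        }
        return [name for name, value in required.items() if not value]


spec = AgentSpec(
    mission="Qualify real estate leads and route hot prospects to sales.",
    trigger="form submission",
    inputs=["form fields", "CRM records"],
    decisions=["qualify vs. disqualify"],
    output="",  # deliberately left blank to show the check
)
print(spec.missing_fields())  # ['output']
```

An empty result from `missing_fields()` is a reasonable signal that the scope is defined well enough to start configuring.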

Step 2: Choose the Right No-Code Platform

Select your platform based on use case type:

General workflow agents: n8n (self-hosting available), Zapier (7,000+ integrations), Wordware (natural language programming)

Document-processing agents: V7 Go (chain-of-thought reasoning)

Voice agents: Purpose-built voice AI platforms like Dograh AI, which deploys production-ready agents in under two minutes and carries SOC 2, HIPAA, GDPR, and PCI DSS certifications out of the box—useful for regulated industries like healthcare or financial services

Before committing, verify the platform supports the tools and integrations your agent needs—CRM, calendar, ticketing system, telephony. Missing integrations are the most common reason deployments stall before go-live.

Step 3: Configure Inputs, Prompts, and Tools

Set up inputs: Define what the agent receives (text, files, audio, structured data fields) and specify each input's type and purpose within the platform interface.

Write the agent's prompt: Use plain language to describe:

- Who the agent is (role and context)

- What it should do (specific actions and decision criteria)

- What context it has access to (knowledge base, CRM fields, past interactions)

- What it should never do (constraints and guardrails)

- Examples of ideal outputs (few-shot learning improves consistency)

Chain-of-thought prompting increased accuracy on math tasks from 17.9% to 56.9% in a 2022 Google Brain study—include worked examples in your prompt wherever possible.
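
The five prompt parts listed above can be drafted as a simple template before pasting the result into a platform's prompt field. This is a minimal sketch; the `build_prompt` function, the section titles, and the sample content are hypothetical and are not any platform's required format.

```python
def build_prompt(role, task, context, never_do, examples):
    """Assemble a plain-language agent prompt from the five parts."""
    sections = [
        ("Role", role),
        ("Task", task),
        ("Available context", context),
        # One bullet per guardrail keeps constraints easy to audit.
        ("Never do", "\n".join(f"- {rule}" for rule in never_do)),
        # Each example pairs a realistic input with the ideal response.
        ("Examples", "\n\n".join(
            f"Input: {inp}\nIdeal output: {out}" for inp, out in examples
        )),
    ]
    return "\n\n".join(f"{title}:\n{body}" for title, body in sections)


prompt = build_prompt(
    role="You are a scheduling assistant for a dental clinic.",
    task="Answer inbound callers and book appointments.",
    context="The clinic calendar and the FAQ document.",
    never_do=["Give medical advice", "Quote prices not in the FAQ"],
    examples=[("Can I come in Tuesday?", "Offer the open Tuesday slots.")],
)
print(prompt)
```

Keeping the parts separate makes it easier to change one (for example, adding a guardrail) without rewriting the whole prompt.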

Connect tools: Use the platform's pre-built integrations to connect the agent to web search, databases, calendar APIs, messaging channels, and other systems it needs to take action.

Step 4: Test, Iterate, and Deploy

Once configured, run the agent against realistic test inputs and review outputs for accuracy, tone, and decision quality—then adjust the prompt or settings wherever it falls short.

Define "good enough" before going live—set a minimum accuracy or task-completion threshold the agent must consistently clear. For example, if the agent qualifies leads, it should correctly categorize at least 85% of test cases before deployment.
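
A "good enough" gate like this can be checked with a few lines of scoring logic, even if the test results are collected by hand in a spreadsheet. The sketch below assumes each test case is recorded as an (expected label, agent label) pair; the `passes_threshold` function and the sample labels are hypothetical.

```python
def passes_threshold(results, threshold=0.85):
    """Score (expected, actual) label pairs against a minimum accuracy.

    Returns (accuracy, passed).
    """
    if not results:
        raise ValueError("no test cases supplied")
    correct = sum(1 for expected, actual in results if expected == actual)
    accuracy = correct / len(results)
    return accuracy, accuracy >= threshold


# 18 correct and 2 incorrect lead classifications out of 20 test cases.
results = [("hot", "hot")] * 18 + [("hot", "cold")] * 2
accuracy, passed = passes_threshold(results)
print(f"{accuracy:.0%} passed={passed}")  # 90% passed=True
```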

Deploy to the appropriate channel (web, phone, Slack, CRM embed) and establish a review cadence to monitor performance and catch edge cases the agent wasn't designed for.

When Should You Build a No-Code AI Agent?

No-code agents deliver the most ROI when applied to high-frequency, well-defined tasks currently consuming staff time.

Most suitable use cases:

- Answering repetitive inbound inquiries (customer support, FAQ bots, call triage)

- Lead qualification and routing

- Appointment scheduling

- Document review and extraction

- Data entry from unstructured sources

These tasks share a trait: they're rule-governed but not fully predictable. That combination is where no-code agents excel. AI scheduling tools, for instance, reduce coordination time by 95% (from 15+ minutes to 49 seconds) and cut costs by 99%.
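
As a quick check on the time figure, taking the lower bound of 15 minutes (900 seconds) as the baseline:

```latex
\[
\frac{900\,\mathrm{s} - 49\,\mathrm{s}}{900\,\mathrm{s}} = \frac{851}{900} \approx 0.946
\]
```

That rounds to the quoted 95%; any baseline longer than 15 minutes gives a slightly larger reduction.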

Conditions where no-code agents underperform:

- Tasks requiring nuanced human empathy

- Processes with highly variable, unpredictable inputs

- Situations demanding real-time creative judgment

- Regulated decisions requiring documented human sign-off

What You Need Before You Start

Preparation quality directly determines agent performance. Teams that skip this step almost always rebuild their agents after the first deployment fails.

Platform and Integration Readiness

Confirm that the tools the agent will connect to—CRM, calendar, ticketing, phone system—have APIs or native integrations with your chosen platform. Zapier connects to over 7,000 apps, while n8n offers 400+ nodes with self-hosting for data sovereignty.

Check this before writing a single prompt — a missing integration discovered mid-build can force a platform switch and cost days of rework.

Data and Prompt Materials

Once your integration layer is confirmed, gather examples of ideal agent interactions to shape the agent's behavior:

- Prior customer conversations

- FAQ documents

- Scripts and email templates

- Common objections and responses

These become the reference material embedded in the agent's prompt; the model is not retrained on them. An agent built with 20 real support transcripts will handle edge cases far better than one built from a generic description of the job.

Compliance and Access Checks

For industries handling personal, financial, or health data, verify the platform's compliance certifications before connecting any live data sources.

Critical compliance requirements:

- HIPAA (healthcare)

- GDPR (EU data)

- SOC 2 (enterprise security)

- PCI DSS (payment data)

Zapier explicitly does not support HIPAA compliance or sign Business Associate Agreements, meaning it should not be used for protected health information. Self-hosted solutions like n8n — or purpose-built platforms such as Dograh AI, which carries SOC 2, HIPAA, GDPR, and PCI DSS certifications and supports full on-premise deployment — are the appropriate choice for regulated data environments.

Key Variables That Shape Your AI Agent's Results

Two organizations can build agents for the same use case on the same platform and get vastly different outcomes. The variables below explain why—controlling them is the difference between an agent that reliably delivers and one that constantly needs human correction.

Prompt Quality

The prompt is the agent's only instruction set. Ambiguous prompts produce inconsistent outputs because the model fills gaps with its own interpretation, which may not match your intended behavior.

Adding four elements typically improves consistency significantly:

- Role context — define who the agent is and what it's responsible for

- Clear constraints — specify what it should and shouldn't do

- Output format requirements — structure matters for downstream processing

- Worked examples — show the model what "correct" looks like

A study comparing zero-shot and few-shot prompting found that simply adding "Let's think step by step" to a zero-shot prompt significantly improved performance on reasoning tasks. The words you choose shape everything downstream.
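
To illustrate why output format requirements matter for downstream processing, here is a minimal sketch of a check that a later workflow step might run on the agent's reply. It assumes the prompt asked for a JSON object; the `REQUIRED_KEYS` schema and the `parse_agent_output` function are hypothetical.

```python
import json

REQUIRED_KEYS = {"category", "reason"}  # hypothetical output schema


def parse_agent_output(raw):
    """Return the parsed dict, or None if the reply breaks the format."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None  # reply was not valid JSON at all
    if not isinstance(data, dict) or not REQUIRED_KEYS.issubset(data):
        return None  # valid JSON, but required fields are missing
    return data


good = parse_agent_output('{"category": "hot", "reason": "budget confirmed"}')
bad = parse_agent_output("This lead looks promising!")
print(good, bad)
```

A reply that fails the check can be routed to a retry or a human review step instead of being written into a CRM as-is.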

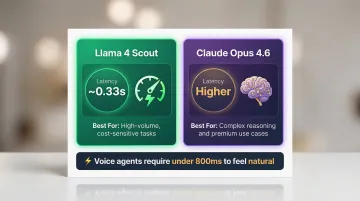

LLM Selection

Not all large language models are equal for every task. Some excel at reasoning and multi-step planning; others at factual retrieval or natural conversation. The model you choose must match the cognitive demand of the task.

Model selection affects both accuracy and latency—sometimes in ways that directly impact the user experience:

| Model | Latency | Best For |
|---|---|---|
| Llama 4 Scout | ~0.33s | Cost-sensitive, high-volume tasks |
| Claude Opus 4.6 | Higher | Complex reasoning, premium use cases |

Voice agents require under 800ms latency to feel natural. Specialized platforms hit 420ms; stitched-together API solutions average 950ms. For voice use cases, model choice is inseparable from platform architecture.
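
The comparison above amounts to a simple budget check. The sketch below uses only the figures quoted in the text (800 ms, 420 ms, 950 ms); the `headroom_ms` helper is hypothetical and would be fed your own measured latencies in practice.

```python
NATURAL_VOICE_BUDGET_MS = 800  # "feels natural" ceiling cited in the text


def headroom_ms(measured_ms, budget_ms=NATURAL_VOICE_BUDGET_MS):
    """Positive result = time to spare; negative = over budget."""
    return budget_ms - measured_ms


for label, measured in [("specialized platform", 420),
                        ("stitched-together APIs", 950)]:
    spare = headroom_ms(measured)
    status = "within budget" if spare > 0 else "over budget"
    print(f"{label}: {measured} ms, {status} ({spare:+d} ms)")
# specialized platform: 420 ms, within budget (+380 ms)
# stitched-together APIs: 950 ms, over budget (-150 ms)
```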

Tool and Integration Configuration

An agent that can't successfully query its connected tools will hallucinate or fall back to generic responses. Correct tool configuration—authentication, field mapping, and error handling—determines whether the agent can actually act in the real world.

Broken integrations don't always fail loudly. They manifest as wrong data pulled, silent API errors, or responses that seem plausible but are fabricated. Test every integration with live credentials before deployment. Production teams using intent-based routing report 40% cost reductions and 35% latency improvements—most of those gains come from getting integrations right the first time.

Memory and Context Window

Agents without memory treat every interaction as brand new—breaking multi-turn workflows and forcing users to repeat themselves. Context retention directly affects conversational quality.

Context window limits vary by platform and model. Gemini 1.5 Pro supports up to 2 million tokens, enabling single-pass processing of entire long documents or transcripts.

For voice agents specifically, this matters more than it might seem. Some platforms retain conversation history across 45+ minutes, while the average contact center handle time is 7.8 minutes. An agent that loses context mid-call doesn't just frustrate users—it breaks the interaction entirely.
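
A rough way to sanity-check whether a call fits in a model's context window is to estimate transcript size from call length. The sketch below rests on loudly labeled assumptions: a speaking rate of about 150 words per minute and about 1.3 tokens per word are rules of thumb, not measurements, and the 8,000-token window and 2,000-token prompt reserve are hypothetical values chosen for illustration.

```python
WORDS_PER_MINUTE = 150  # assumption: rough conversational speaking rate
TOKENS_PER_WORD = 1.3   # assumption: rough English average; varies by tokenizer


def estimate_transcript_tokens(minutes):
    """Very rough token estimate for a call transcript of the given length."""
    return round(minutes * WORDS_PER_MINUTE * TOKENS_PER_WORD)


def fits_context(minutes, context_window_tokens, reserved_tokens=0):
    """True if the estimated transcript plus reserved prompt space fits."""
    needed = estimate_transcript_tokens(minutes) + reserved_tokens
    return needed <= context_window_tokens


# Hypothetical 8,000-token window with 2,000 tokens reserved for the prompt.
for minutes in (7.8, 45):
    tokens = estimate_transcript_tokens(minutes)
    print(minutes, tokens, fits_context(minutes, 8000, reserved_tokens=2000))
```

Under these assumptions an average-length call fits comfortably while a 45-minute call does not, which is why retention limits are worth confirming with the platform for your actual call lengths.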

Common Mistakes and How to Troubleshoot Them

Most no-code agent builds fail for the same handful of reasons — and the fixes are straightforward once you know what to look for.

- Over-scoping the first agent — Trying to automate a complex, multi-exception process before validating a simple version almost always leads to failure. Start with a single, narrow task and expand only after the baseline agent performs reliably.

- Writing vague or contradictory prompts — Instructions that leave the agent uncertain about priorities or edge cases produce inconsistent outputs. Test the prompt against 10–15 representative inputs before deployment and refine any cases where the agent breaks.

- Skipping compliance and data access checks — Connecting a live patient, financial, or customer database to a non-compliant platform is a regulatory and reputational risk. Audit the platform's certifications before connecting any live data.

Troubleshooting: Agent gives wrong or irrelevant responses

Likely cause: Prompt is ambiguous or missing key context; the LLM is filling in gaps incorrectly.

Fix: Add explicit examples of correct outputs to the prompt, tighten the scope of the instruction, and test with varied inputs to isolate where the agent breaks down.

Troubleshooting: Agent fails to trigger connected tools

Likely cause: API authentication has expired, field names are mismatched, or the tool connection was not tested with live credentials.

Fix: Re-authenticate the integration and check field mapping in the platform's connector settings. Then run a manual test of the tool call in isolation before embedding it back into the agent workflow.
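
The field-mapping part of that check can be reasoned about as a comparison between what the agent produces and what the connected tool expects. This is a minimal sketch with hypothetical names throughout (`find_mapping_problems`, the field names, and the sample record); real platforms expose this through their connector settings rather than code.

```python
def find_mapping_problems(field_map, agent_record, tool_fields):
    """Compare a {agent_field: tool_field} mapping against both sides.

    Returns a list of human-readable problems; empty means the map lines up.
    """
    problems = []
    for agent_field, tool_field in field_map.items():
        if agent_field not in agent_record:
            problems.append(f"agent output has no field '{agent_field}'")
        if tool_field not in tool_fields:
            problems.append(f"connected tool has no field '{tool_field}'")
    return problems


field_map = {"caller_name": "full_name", "phone": "phone_number"}
agent_record = {"caller_name": "Ada", "phone_no": "555-0100"}  # note: phone_no
tool_fields = {"full_name", "phone_number"}

print(find_mapping_problems(field_map, agent_record, tool_fields))
# ["agent output has no field 'phone'"]
```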

Troubleshooting: Agent performs well in testing but degrades in production

Likely cause: Real-world inputs are more varied than test cases, or the agent lacks sufficient context memory for multi-turn interactions.

Fix: Address this on three fronts:

- Expand the test set to include edge cases and unusual inputs

- Add fallback instructions in the prompt for unrecognized scenarios

- Verify whether the platform's context retention window is long enough for actual conversation length

Frequently Asked Questions

Can I really build a functional AI agent without any coding knowledge?

Yes. Modern no-code platforms handle all technical complexity behind visual interfaces. The primary skills needed are clear goal-setting and good prompt writing—not programming. Gartner projects that by 2026, 80% of low-code tool users will be outside IT departments — a shift already underway.

How is a no-code AI agent different from a regular chatbot?

A chatbot responds to prompts conversationally, while a true AI agent reasons through multi-step tasks, uses tools, accesses external data, and takes real-world actions. The key differentiator is autonomous decision-making and action, not just response generation.

What types of tasks are no-code AI agents best suited for?

High-frequency, well-defined tasks where inputs vary but decision logic is consistent: customer inquiry handling, appointment scheduling, lead qualification, document extraction, and call triage.

How long does it take to build and deploy a no-code AI agent?

A simple, single-task agent can be configured and deployed in under an hour on most platforms. More complex multi-step agents with several integrations typically take one to three days of configuration and testing.

Can no-code AI agents handle sensitive or regulated data safely?

Safety depends entirely on the platform. Confirm it holds relevant certifications (HIPAA, GDPR, SOC 2, PCI DSS) and clarify whether data runs on shared cloud infrastructure or can be self-hosted for full data sovereignty. For regulated industries, platforms like Dograh AI support self-hosting with built-in HIPAA and GDPR compliance.