Introduction

Most teams confuse basic automation with genuine AI agent workflows, and that misunderstanding leads to brittle, expensive systems that break at the first edge case. A simple "if-this-then-that" automation executes predefined steps without deviation. An AI agent workflow combines structured automation with LLM-driven reasoning, allowing systems to handle unexpected inputs, make context-aware decisions, and respond to conditions no rule set anticipated.

According to Anthropic's research on building effective agents, the distinction matters: workflows follow predefined code paths, while agents dynamically direct their own processes and tool usage. Most production systems need both—structured automations for repeatable tasks and agent reasoning for judgment calls.

That balance is especially consequential for regulated industries. Healthcare, legal, and finance teams can't hand their data to a closed platform and hope for the best—63% of organizations in these sectors are shifting toward sovereign cloud services to maintain data residency and compliance requirements. This guide covers foundational concepts, a step-by-step build process, the parameters that determine success or failure, and common pitfalls—with specific focus on open-source approaches that give teams full control over data and infrastructure.

TLDR

- AI agent workflows pair rigid automation (predictable steps) with LLM reasoning (context-aware decisions) — production systems typically need both working together

- Map your system logic before touching any platform—this document becomes the foundation for every prompt you write

- Open-source platforms matter most for regulated industries where data sovereignty and compliance are non-negotiable

- Prompt engineering quality determines success more than platform or model choice

- Test with real data from day one; dummy inputs mask the edge cases that surface only under actual load

AI Agents vs. AI Workflows: Understanding the Difference

Anthropic distinguishes two architectural categories within agentic systems:

- Workflows orchestrate LLMs and tools through predefined code paths — each step follows a set sequence with clear start and end points

- Agents dynamically direct their own processes and tool usage, keeping control over how they accomplish a task rather than following a fixed sequence

The productive middle ground for most business teams is agentic AI workflows: structured automations handle predictable, repeatable steps while an AI agent manages reasoning, context judgment, and edge cases.

This hybrid approach reduces risk compared to full autonomy. Instead of granting an agent unlimited decision-making power, you define explicit workflow paths for common scenarios and let the agent handle only the judgment calls.

Five Core Workflow Patterns

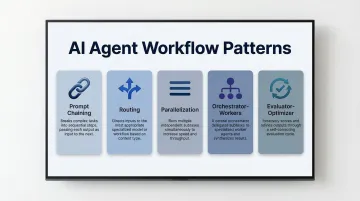

Choosing the right pattern is what separates a brittle prototype from a production-ready system. Anthropic's engineering team identifies five foundational options:

- Prompt Chaining — Decompose tasks into sequential steps where each LLM call processes the previous output

- Routing — Classify input and direct it to specialized downstream processes

- Parallelization — Run independent subtasks simultaneously or execute the same task multiple times for diverse outputs

- Orchestrator-Workers — A central LLM breaks down tasks and delegates to specialized worker LLMs

- Evaluator-Optimizer — One LLM generates responses while another provides evaluation and feedback in a loop

A customer support voice agent routing inbound calls, for example, needs both routing (to direct call types) and orchestrator-workers (to handle multi-step resolution). Map your use case to its pattern before writing a single line of code.
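
As a minimal sketch of the routing pattern, the snippet below classifies an inbound request and hands it to a specialized handler. The `classify` function is a keyword-based stand-in for an LLM classification call, and the category names and handlers are hypothetical, chosen only for illustration.

```python
from typing import Callable, Dict


def classify(text: str) -> str:
    """Stand-in for an LLM classifier (assumption: a real system calls a model here)."""
    lowered = text.lower()
    if "refund" in lowered or "invoice" in lowered:
        return "billing"
    if "error" in lowered or "not working" in lowered:
        return "technical"
    return "general"


def handle_billing(text: str) -> str:
    return "billing workflow: " + text


def handle_technical(text: str) -> str:
    return "technical workflow: " + text


def handle_general(text: str) -> str:
    return "general workflow: " + text


# Each category maps to one specialized downstream process.
HANDLERS: Dict[str, Callable[[str], str]] = {
    "billing": handle_billing,
    "technical": handle_technical,
    "general": handle_general,
}


def route(text: str) -> str:
    """Routing pattern: classify first, then dispatch to the matching handler."""
    category = classify(text)
    handler = HANDLERS.get(category, handle_general)  # unknown labels fall back to general
    return handler(text)
```

Keeping the classifier separate from the handlers means each specialized path can be tested on its own before the two are combined.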

What You Need Before Building

System Design Requirements

Before selecting any tool or writing code, map your workflow logic in plain language. Identify which steps have rigid, predictable rules versus which require agent-level reasoning. This document becomes your blueprint — it shapes every prompt, instruction set, and tool integration decision that follows.

Define three prompt layers upfront:

- System instructions — How the agent should think and operate (role, decision framework, priorities)

- Execution prompts — Specific tasks triggered during a workflow run

- Context inputs — External data, tool access, documents that give the agent grounding
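
One way to keep the three layers distinct is to model them as separate fields and combine them only at call time. The sketch below assumes the common chat format of `role`/`content` message dictionaries; the `PromptLayers` class and its field names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class PromptLayers:
    system_instructions: str  # how the agent should think and operate
    execution_prompt: str     # the specific task for this workflow run
    context_inputs: Dict[str, str] = field(default_factory=dict)  # grounding data by name

    def to_messages(self) -> List[Dict[str, str]]:
        """Combine the layers into a system message and a user message."""
        context_block = "\n\n".join(
            f"[{name}]\n{content}" for name, content in self.context_inputs.items()
        )
        user_content = self.execution_prompt
        if context_block:
            # Context goes ahead of the task so the task is the last thing the model reads.
            user_content = f"{context_block}\n\n{self.execution_prompt}"
        return [
            {"role": "system", "content": self.system_instructions},
            {"role": "user", "content": user_content},
        ]
```

Because context is a separate field, a missing or stale document shows up as an empty or outdated entry rather than being buried inside a long prompt string.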

Agents working from incomplete or stale context produce unreliable outputs — no amount of prompt tuning fixes a data access problem. If the agent can't reach the right information at the right time, the workflow fails regardless of how well the prompts are written.

Platform and Compliance Requirements

Identify infrastructure constraints before choosing a platform. Cloud-hosted platforms offer speed of setup but create data sovereignty risks. Two regulations create hard limits here:

- HIPAA requires a Business Associate Agreement whenever a Cloud Service Provider processes protected health information on your behalf

- GDPR imposes strict restrictions on transferring personal data outside the EEA, which applies to any cloud vendor processing EU resident data

Self-hosting on infrastructure you control avoids the third-party data transfer problem: with no outside vendor processing the data, there is no BAA to negotiate with that vendor and no EEA transfer mechanism to set up. Platforms like Dograh AI are built for exactly this tradeoff: open-source, self-hostable infrastructure where teams in healthcare, legal, and finance control where data lives and how compliance requirements like HIPAA, GDPR, and SOC 2 are enforced, without depending on what a vendor claims to comply with.

How to Build AI Agent Workflows: Step-by-Step

Step 1: Map Your System Logic Before Touching Any Tool

Document every step as if training a new hire. Specify how decisions get made, what edge cases exist, and what "good output" looks like. The more precisely you articulate your own process, the more accurately an LLM can replicate it.

Identify which parts are rigid (can be automated with defined rules) versus which require judgment (need agent reasoning). This split determines your architecture. For example, extracting data from a form is rigid; deciding whether a customer qualifies as "high-priority" requires judgment.
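
The rigid-versus-judgment split can be recorded as data before any platform is chosen. This is only a sketch: the `Step` class, the `kind` labels, and the step names are hypothetical, borrowed from the form-extraction example above.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Step:
    name: str
    kind: str  # "rigid" (rule-based automation) or "judgment" (agent reasoning)


def split_steps(steps: List[Step]) -> Tuple[List[str], List[str]]:
    """Return (rigid step names, judgment step names); reject unclassified steps."""
    unknown = [s.name for s in steps if s.kind not in ("rigid", "judgment")]
    if unknown:
        raise ValueError(f"Unclassified steps: {unknown}")
    rigid = [s.name for s in steps if s.kind == "rigid"]
    judgment = [s.name for s in steps if s.kind == "judgment"]
    return rigid, judgment


PROCESS = [
    Step("extract_form_fields", "rigid"),
    Step("decide_high_priority", "judgment"),
    Step("update_crm_record", "rigid"),
]
```

Rejecting unclassified steps forces the decision to be made during design rather than discovered during a production failure.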

Step 2: Choose Your Platform and Connect Your Tools

Select a platform based on compliance requirements, deployment model (cloud vs. self-hosted), and LLM flexibility needs. For teams building voice AI workflows in regulated industries, prioritize platforms that support self-hosting, offer pre-integrated LLM configurability, and avoid double-billing for STT/TTS/LLM usage.

Connect the tools your agent will use—CRMs, knowledge bases, APIs, communication channels—and document each tool's purpose clearly. Anthropic's research shows that tool documentation quality directly affects how reliably an agent invokes the right tool at the right time. Use unambiguous parameter names, clear descriptions, and actionable error messages.
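
A tool definition with an unambiguous name, typed parameters, and an example value might look like the sketch below. The JSON Schema style layout is an assumption rather than any specific vendor's format, and `lookup_customer_by_email` and `lint_tool` are hypothetical names.

```python
from typing import Any, Dict, List

LOOKUP_CUSTOMER_TOOL: Dict[str, Any] = {
    "name": "lookup_customer_by_email",
    "description": "Return the CRM record for exactly one customer, matched by email address.",
    "parameters": {
        "type": "object",
        "properties": {
            "customer_email": {
                "type": "string",
                "description": "Customer email address, for example jane@example.com.",
            },
        },
        "required": ["customer_email"],
    },
}


def lint_tool(tool: Dict[str, Any]) -> List[str]:
    """List documentation gaps that could make an agent misuse the tool."""
    problems: List[str] = []
    if not tool.get("description"):
        problems.append("tool is missing a description")
    properties = tool.get("parameters", {}).get("properties", {})
    for name, spec in properties.items():
        if not spec.get("description"):
            problems.append(f"parameter '{name}' is missing a description")
        if "type" not in spec:
            problems.append(f"parameter '{name}' is missing a type")
    return problems
```

Running a check like this over every tool definition before deployment catches undocumented parameters early.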

Step 3: Build the Structured Automation Layer First

Build the workflow automation layer before introducing agent reasoning. Create individual process steps, connect the APIs, and validate that each step produces expected output in isolation. This makes the entire system debuggable and reduces compounding errors.

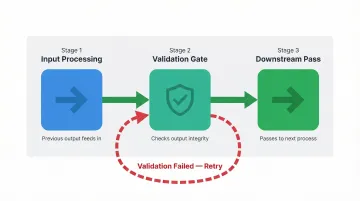

Use prompt chaining as the foundational pattern, with programmatic validation gates at critical decision points:

- Each step takes the previous output as input and transforms it

- Validation runs after every step before passing data downstream

- Format checks catch failures early (e.g., validate an extracted email address before the next step consumes it)
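
The chain-plus-gates idea can be sketched as follows. Both steps are stand-ins for LLM calls (an assumption, so the example runs without a model), and `gate`, `extract_email`, and `draft_reply` are hypothetical helper names.

```python
import re
from typing import Callable

# Deliberately simple format check; real email validation is stricter.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def extract_email(text: str) -> str:
    """Stand-in for an LLM extraction step."""
    match = re.search(r"[^@\s]+@[^@\s]+\.[^@\s]+", text)
    return match.group(0) if match else ""


def draft_reply(email: str) -> str:
    """Stand-in for a second LLM call that consumes the validated output."""
    return f"Reply queued for {email}"


def gate(value: str, check: Callable[[str], bool], step: str) -> str:
    """Validation gate: stop the chain as soon as a step's output fails its check."""
    if not check(value):
        raise ValueError(f"Validation failed after step '{step}': {value!r}")
    return value


def run_chain(raw_text: str) -> str:
    # Each step takes the previous output as input; a gate runs after every step.
    email = gate(extract_email(raw_text), lambda v: bool(EMAIL_RE.match(v)), "extract_email")
    reply = gate(draft_reply(email), lambda v: len(v) > 0, "draft_reply")
    return reply
```

Failing loudly at the gate names the step that produced the bad output, which is what keeps the chain debuggable.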

Step 4: Layer in Agent Reasoning and Orchestration

Once individual workflow steps are validated, introduce an agent layer that can access and orchestrate those workflows. The agent decides which workflow to trigger, in what sequence, and when to request human review. This flexibility is what separates an agent from a rigid automation script.
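
A rough sketch of that agent layer: a decision function picks one of the validated workflows, and anything unrecognized or low-confidence goes to a person. The `decide` function stands in for an LLM call, and the workflow names and the 0.7 threshold are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class Decision:
    workflow: Optional[str]  # name of the workflow to trigger, or None
    confidence: float        # 0.0 to 1.0, as reported by the decision step


def reschedule_appointment(request: str) -> str:
    return "rescheduled"


def send_invoice_copy(request: str) -> str:
    return "invoice sent"


# Workflows already validated in isolation.
WORKFLOWS: Dict[str, Callable[[str], str]] = {
    "reschedule_appointment": reschedule_appointment,
    "send_invoice_copy": send_invoice_copy,
}


def decide(request: str) -> Decision:
    """Stand-in for the agent's LLM decision (assumption)."""
    lowered = request.lower()
    if "reschedule" in lowered:
        return Decision("reschedule_appointment", 0.9)
    if "invoice" in lowered:
        return Decision("send_invoice_copy", 0.8)
    return Decision(None, 0.0)


def run_agent(request: str, min_confidence: float = 0.7) -> str:
    decision = decide(request)
    if decision.workflow is None or decision.workflow not in WORKFLOWS:
        return "escalated to human review"
    if decision.confidence < min_confidence:
        return "escalated to human review"
    return WORKFLOWS[decision.workflow](request)
```

The agent only chooses among known workflows, so a bad decision can at worst trigger the wrong validated path or an unnecessary escalation.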

Configure the agent's system instructions using the full logic mapped in Step 1. At minimum, include:

- Role definition — what the agent is and what it's responsible for

- Decision-making framework — how it should weigh competing options

- Priorities and constraints — what to pursue and what to avoid

- Edge case handling — specific guidance for situations you've already encountered
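
The four parts above can be assembled into a system prompt by a small builder that refuses to run when one is missing. This is a sketch; the section keys and the choice of XML-style tags as separators are assumptions.

```python
from typing import Dict

REQUIRED_SECTIONS = (
    "role",
    "decision_framework",
    "priorities_and_constraints",
    "edge_cases",
)


def build_system_prompt(sections: Dict[str, str]) -> str:
    """Wrap each required section in a tag and join them in a fixed order."""
    missing = [key for key in REQUIRED_SECTIONS if not sections.get(key, "").strip()]
    if missing:
        raise ValueError(f"Missing sections: {missing}")
    return "\n\n".join(
        f"<{key}>\n{sections[key].strip()}\n</{key}>" for key in REQUIRED_SECTIONS
    )
```

A fixed section order also makes prompt revisions easier to compare between test runs.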

Step 5: Test with Real Data and Iterate

Run the agent on actual business data from the first test. Real data immediately exposes ambiguous instructions and gaps in tool access that dummy data never reveals.

When output quality falls short, treat it as an instructions problem before anything else:

- Ask the agent what additional context or constraints would improve its output

- Revise the system prompt based on that feedback

- Re-test against the same data before switching to a different model
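
Re-testing against the same data is easier with a small harness that scores each prompt revision on a fixed set of cases. In the sketch below, `agent_v1` and `agent_v2` stand in for the agent before and after a prompt revision, and the two cases are placeholders for records drawn from real business data.

```python
from typing import Callable, Dict, List

# Placeholder cases; in practice these come from real business data.
CASES: List[Dict[str, str]] = [
    {"input": "URGENT: server down", "expected": "high"},
    {"input": "Need this asap", "expected": "high"},
]


def agent_v1(text: str) -> str:
    """Stand-in for the agent under the original system prompt."""
    return "high" if "urgent" in text.lower() else "normal"


def agent_v2(text: str) -> str:
    """Stand-in for the agent after the prompt was revised to cover 'asap'."""
    lowered = text.lower()
    return "high" if "urgent" in lowered or "asap" in lowered else "normal"


def evaluate(run: Callable[[str], str], cases: List[Dict[str, str]]) -> float:
    """Fraction of cases whose output contains the expected text."""
    if not cases:
        raise ValueError("No test cases supplied")
    passed = sum(1 for case in cases if case["expected"].lower() in run(case["input"]).lower())
    return passed / len(cases)
```

Comparing `evaluate(agent_v1, CASES)` with `evaluate(agent_v2, CASES)` shows whether a revision helped before any model change is considered.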

Key Parameters That Affect Workflow Performance

Even well-designed workflows underperform when key variables are misconfigured. These four parameters account for the majority of quality and reliability issues in production deployments.

LLM Model Selection

Different models have measurably different strengths. Some perform better at structured reasoning tasks, others at semantic understanding or natural language generation. Using a single model for every step in a complex workflow is a common source of inconsistent output.

Claude Opus 4.6 currently leads in complex reasoning and agentic coding tasks with 97% on HumanEval and 91.3% on GPQA benchmarks. However, routing Opus to simple tasks can increase input token costs by 5x compared to smaller models like Haiku.

Research on model routing demonstrates that trained routers can reduce costs by up to 85% while maintaining 95% of GPT-4's performance. Platforms that support multiple LLM providers let teams assign the best-fit model per step rather than being locked into one provider.
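
Per-step model assignment can be as simple as a lookup table. The sketch below compares the input-token cost of routing each step to a best-fit tier against sending everything to the largest model. The tier names, step names, and prices are illustrative assumptions (the 5x ratio mirrors the figure above), not real provider pricing.

```python
from typing import Dict, List, Tuple

# Illustrative prices per million input tokens; not real provider pricing.
PRICE_PER_MILLION_INPUT_TOKENS: Dict[str, float] = {"small": 1.0, "large": 5.0}

# Hypothetical assignment of workflow steps to model tiers.
STEP_TIER: Dict[str, str] = {
    "extract_fields": "small",
    "classify_intent": "small",
    "resolve_dispute": "large",
}


def input_cost(steps: List[Tuple[str, int]], tier_for_step: Dict[str, str]) -> float:
    """Sum the input-token cost of a run given (step name, input tokens) pairs."""
    total = 0.0
    for step, tokens in steps:
        tier = tier_for_step[step]
        total += tokens / 1_000_000 * PRICE_PER_MILLION_INPUT_TOKENS[tier]
    return total


RUN: List[Tuple[str, int]] = [
    ("extract_fields", 2_000_000),
    ("classify_intent", 1_000_000),
    ("resolve_dispute", 1_000_000),
]

routed_cost = input_cost(RUN, STEP_TIER)
all_large_cost = input_cost(RUN, {step: "large" for step in STEP_TIER})
```

With these placeholder numbers the routed run costs 8.0 units against 20.0 for the all-large run; actual savings depend on real prices and on how many steps genuinely need the larger model.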

Prompt and Instruction Quality

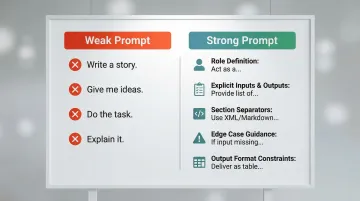

Every system prompt functions like a job description. Vague prompts produce inconsistent output; well-structured prompts with explicit output format requirements, role definitions, and edge case handling produce verifiable results.

Prompt quality is the single highest-leverage variable in workflow performance, and weak prompts are behind the majority of agent failures. Treat prompts with the same rigor as function signatures in code:

- Define expected inputs, outputs, and constraints explicitly

- Use XML tags or Markdown headers to separate background info, instructions, and tool guidance

- For OpenAI's reasoning models, use "developer messages" rather than system messages, and skip "chain-of-thought" prompts such as "think step by step": OpenAI's guidance advises against them because these models already reason internally

Tool Documentation and Agent-Computer Interface

Agents perform exactly as well as their tools are documented — no better. Ambiguous tool definitions cause agents to invoke the wrong tool, pass incorrect parameters, or fail silently. Anthropic's engineering team reports spending more time optimizing tool definitions than overall prompts for complex agent systems.

Strong tool documentation includes:

- Clear, distinct tool names with explicit boundaries between similar tools

- Parameter descriptions with expected types, formats, and example values

- Pagination or filtering on tool responses to avoid flooding the context window

- Specific, actionable error messages — not opaque stack traces
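
The last two points can be sketched in a single tool function: results are paginated so one call never floods the context window, and bad arguments return a message telling the agent how to retry. The in-memory `RECORDS` list, the `search_customers` name, and the 20-item cap are assumptions for illustration.

```python
from typing import Any, Dict, List

# Stand-in data source; a real tool would query a CRM or database.
RECORDS: List[Dict[str, Any]] = [{"id": i, "name": f"customer-{i}"} for i in range(1, 26)]


def search_customers(page: int = 1, page_size: int = 10) -> Dict[str, Any]:
    """Return one page of customers, or an actionable error instead of a stack trace."""
    if page < 1:
        return {"ok": False, "error": "page must be 1 or greater; retry with page=1."}
    if not 1 <= page_size <= 20:
        return {"ok": False, "error": "page_size must be between 1 and 20; retry with page_size=10."}
    start = (page - 1) * page_size
    items = RECORDS[start:start + page_size]
    has_more = start + page_size < len(RECORDS)
    return {
        "ok": True,
        "items": items,
        "page": page,
        "next_page": page + 1 if has_more else None,  # None signals the final page
    }
```

Returning `next_page` explicitly saves the agent from guessing whether more results exist.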

Latency and Infrastructure Configuration

For real-time agent workflows, particularly voice AI, latency between reasoning steps determines whether the experience feels natural or broken. Gaps between turns in human conversation typically run 200-400ms, so delays that push a response much past that range start to feel unnatural. Sub-500ms end-to-end response latency is the threshold that preserves natural conversation flow.

Self-hosted open-source deployments allow teams to optimize at the component level — LLM inference, STT, TTS, and API calls — whereas proprietary cloud platforms abstract this control away. Edge computing cuts network latency to 1-10ms versus 50-200ms or more for standard cloud. Running a local Whisper ASR model combined with a cloud LLM, for instance, can keep total latency under 200ms.
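
Component-level tuning starts with a latency budget. The sketch below sums per-component latencies, checks them against the sub-500ms threshold, and reports the largest contributor. Every component figure is a hypothetical placeholder, not a measurement.

```python
from typing import Dict

# Hypothetical per-component latencies in milliseconds; replace with measured values.
PIPELINE_MS: Dict[str, float] = {
    "network": 10,
    "stt": 120,
    "llm_first_token": 200,
    "tts_first_audio": 100,
}


def total_latency_ms(components: Dict[str, float]) -> float:
    """End-to-end latency, assuming the components run one after another."""
    return sum(components.values())


def within_budget(components: Dict[str, float], budget_ms: float = 500.0) -> bool:
    return total_latency_ms(components) < budget_ms


def slowest_component(components: Dict[str, float]) -> str:
    """The component to optimize first."""
    return max(components, key=lambda name: components[name])
```

With the placeholder figures the total is 430ms; swapping the 10ms network figure for 200ms pushes the same pipeline to 620ms, over budget.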

Common Mistakes and Troubleshooting

Skipping the system design phase and building directly in a platform: This is the most common reason AI agent workflows fail. Without documented logic, every prompt is a guess and every edge case breaks the system. The fix is always to pause and map the process before resuming.

Your workflow logic document should articulate decision criteria, edge case handling, and output requirements in plain language before you write a single line of code.

Writing vague, role-generic system prompts: "Act as an expert in X" without specificity produces generic, inconsistent output. Effective prompts encode your actual decision-making process, not a generic archetype.

Extract domain expertise into structured prompts by asking three questions: How would you personally make this decision? What information would you need? What edge cases would you watch for?

Adding framework complexity before validating simple prompt chains: Most real-world failures come from adding orchestration complexity before individual steps are reliable. Start with direct LLM API calls or simple chained prompts, then add agent layers only when simpler solutions can't handle the task.

Troubleshooting degraded output: When agent output quality drops after initial deployment, check in this order:

- Has the input data format changed?

- Have any connected tool APIs updated their response schema?

- Has a model version been updated silently by the provider?

For self-hosted open-source deployments, version-pinning models and dependencies removes silent model updates as a failure mode. After OpenAI rolled out an update that made GPT-4o noticeably more sycophantic, organizations learned to use API version-pinning to maintain agent stability.
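
The three checks can be partly automated. The sketch below compares a payload against an expected schema, which covers changes to input data and to tool responses, and compares the model identifier reported at runtime against a pinned value. The field names and the `example-model-2025-01-01` identifier are hypothetical.

```python
from typing import Any, Dict, List

# Hypothetical expected schema for an input record or tool response.
EXPECTED_FIELDS: Dict[str, type] = {"customer_id": str, "status": str, "balance": float}

# Hypothetical pinned model identifier; use whatever version string your provider exposes.
PINNED_MODEL = "example-model-2025-01-01"


def schema_drift(payload: Dict[str, Any], expected: Dict[str, type]) -> List[str]:
    """Describe every way the payload differs from the expected schema."""
    issues: List[str] = []
    for name, expected_type in expected.items():
        if name not in payload:
            issues.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected_type):
            actual = type(payload[name]).__name__
            issues.append(f"{name}: expected {expected_type.__name__}, got {actual}")
    for name in payload:
        if name not in expected:
            issues.append(f"unexpected field: {name}")
    return issues


def model_changed(reported_model: str) -> bool:
    """True when the model that served the request is not the pinned one."""
    return reported_model != PINNED_MODEL
```

Logging the result of both checks on every run makes the point at which quality dropped much easier to find.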

Frequently Asked Questions

What is the difference between an AI agent and an AI workflow?

AI workflows follow rigid predefined logic paths with clear start and end points, while AI agents dynamically reason through decisions and adapt to unexpected inputs. "Agentic AI workflows" combine both: structured automations handle repeatable steps, while agent reasoning takes over for edge cases that require interpretation.

Do I need to know how to code to build AI agent workflows?

No-code and low-code platforms make basic workflow building accessible, but understanding core patterns like prompt chaining and writing precise system instructions is essential regardless of the tool used.

What open-source tools are best for building AI agent workflows?

Popular open-source options include n8n for workflow automation, LangChain for LLM orchestration, and LlamaIndex for agentic retrieval. The right choice depends on your use case — compliance-heavy teams should prioritize platforms with self-hosting support and pre-integrated model configurability.

How do I make my AI agent workflow compliant with HIPAA or GDPR?

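Self-hosting on your own infrastructure is the most direct route, since data never leaves your control; if you use a cloud vendor instead, HIPAA requires a Business Associate Agreement and GDPR restricts transfers of personal data outside the EEA. Either way, choose a platform with a compliant architecture and maintain a full audit trail of agent decisions. Platforms like Dograh AI are purpose-built for this, offering SOC 2, HIPAA, GDPR, and PCI DSS compliance out of the box.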

How long does it take to build and deploy an AI agent workflow?

A simple prompt-chained workflow can be built and tested in hours. A multi-agent orchestration system with real-world edge case coverage typically requires days to weeks of iteration on prompts, tool definitions, and model selection before production deployment.

What are the most common AI agent workflow patterns I should know?

The five patterns from Anthropic's research are prompt chaining, routing, parallelization, orchestrator-workers, and evaluator-optimizer. Most production workflows combine two or more of these patterns rather than relying on a single one.