Introduction

With 59% of finance leaders now using AI and 85% of customer service leaders exploring conversational GenAI, voice AI has moved from experiment to infrastructure—without the compliance frameworks catching up. Unlike email or chat systems, voice interactions passively capture unstructured sensitive data: spoken account numbers, verbal loan authorizations, credit inquiries, and investment instructions, all in a single call. That creates a regulatory exposure generic AI compliance approaches aren't built to handle.

Two frameworks govern this space: GLBA's Safeguards Rule (the federal floor for financial data security) and SOC 2 (the industry trust standard for vendor verification). GLBA obligations fall on the financial institution, while SOC 2 reports are obtained by vendors from independent auditors. Compliance teams need both: GLBA defines what the institution must do, and SOC 2 provides evidence of what its vendors actually do.

A 2021 FTC settlement with Ascension Data & Analytics made that clear: an institution can be held liable when it fails to oversee a vendor that exposes customer data. That makes SOC 2 a practical mechanism for satisfying GLBA's third-party oversight requirement, not just a nice-to-have vendor checkbox.

TLDR:

- Voice AI creates three-layer risk: audio files, real-time transcripts, and LLM inference logs

- GLBA's amended Safeguards Rule mandates nine elements, including vendor oversight and 30-day FTC breach notification

- SOC 2 Type 2 certification is the clearest way to document GLBA vendor due diligence

- Self-hosted deployments remove third-party data residency risk entirely

- Every sub-processor in your voice AI stack must maintain adequate safeguards, so map the full data flow

The Compliance Stakes: Why Voice AI in Finance Is Different

The Three-Layer Risk Exposure

Voice AI creates a distinct data risk profile that doesn't fit standard data classification schemas. The FTC's Safeguards Rule defines "customer information" as any record containing nonpublic personal information about a customer, "whether in paper, electronic, or other form." That definition is broad enough to cover audio recordings and transcripts containing financial data.

Every customer voice interaction generates three separate data artifacts:

- The audio file itself – raw recording containing spoken account numbers, SSNs, verbal consent

- The real-time transcript – text conversion that may be stored separately from audio

- The LLM inference log – full conversation context sent to the model provider, often retained independently

Each layer may be stored on different systems, processed by different vendors, and subject to different retention schedules—yet all contain covered customer financial information under GLBA.
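Tracking the three layers as separate records in a data inventory makes this visible. The following is a minimal sketch. The `Artifact` schema, the system names, and the vendor names are hypothetical placeholders, not a prescribed format.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Artifact:
    """One stored copy of customer information produced by a call (hypothetical schema)."""
    layer: str           # "audio", "transcript", or "inference_log"
    system: str          # storage location holding this copy
    custodian: str       # "institution" or the vendor that controls the storage
    retention_days: int  # retention schedule applied to this copy

def third_party_copies(inventory: list[Artifact]) -> list[Artifact]:
    """Return the artifacts stored outside the institution's own systems."""
    return [a for a in inventory if a.custodian != "institution"]

# Placeholder inventory for one call flow; system and vendor names are illustrative.
call_inventory = [
    Artifact("audio", "recording-bucket", "institution", 2190),
    Artifact("transcript", "stt-vendor-store", "ExampleSTT", 30),
    Artifact("inference_log", "llm-provider-logs", "ExampleLLM", 30),
]

for artifact in third_party_copies(call_inventory):
    print(f"{artifact.layer}: held by {artifact.custodian} for {artifact.retention_days} days")
```

Each row represents its own disposal and vendor-oversight obligation. For that reason, the sketch records custodian and retention per artifact instead of per call.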

The Regulatory Consequence of Gaps

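The FTC enforces the Safeguards Rule against non-bank financial institutions, and prudential regulators enforce the parallel Interagency Guidelines Establishing Information Security Standards for banks. Under either regime, regulators can act on inadequate third-party oversight, and the institution bears liability along with the vendor.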

In 2021, the FTC settled with Ascension Data & Analytics after its vendor, OpticsML, left sensitive information about mortgage holders exposed on an unsecured cloud server. The case confirmed that GLBA's vendor oversight requirements are directly enforceable: institutions must actively vet vendors and require safeguards by contract, not just assume compliance.

Market Growth Accelerates Exposure

Adoption is moving faster than most compliance programs can track:

- CCaaS voice traffic is projected to grow from 24 billion interactions in 2025 to over 39 billion by 2029

- IDC forecasts a 10x increase in AI agent usage by 2027

Regulators are already responding. Institutions that deploy first and govern later are the ones that end up in consent orders.

GLBA's Safeguards Rule: What It Means for Voice AI Systems

Who GLBA Covers

The Safeguards Rule applies to financial institutions subject to FTC jurisdiction, including:

- Mortgage lenders and brokers

- Payday lenders and finance companies

- Auto dealers offering financing

- Tax preparation firms

- Credit counselors and collection agencies

- Fintechs handling customer financial information

Traditional banks are regulated by prudential regulators (OCC, FDIC, FRB) under parallel standards.

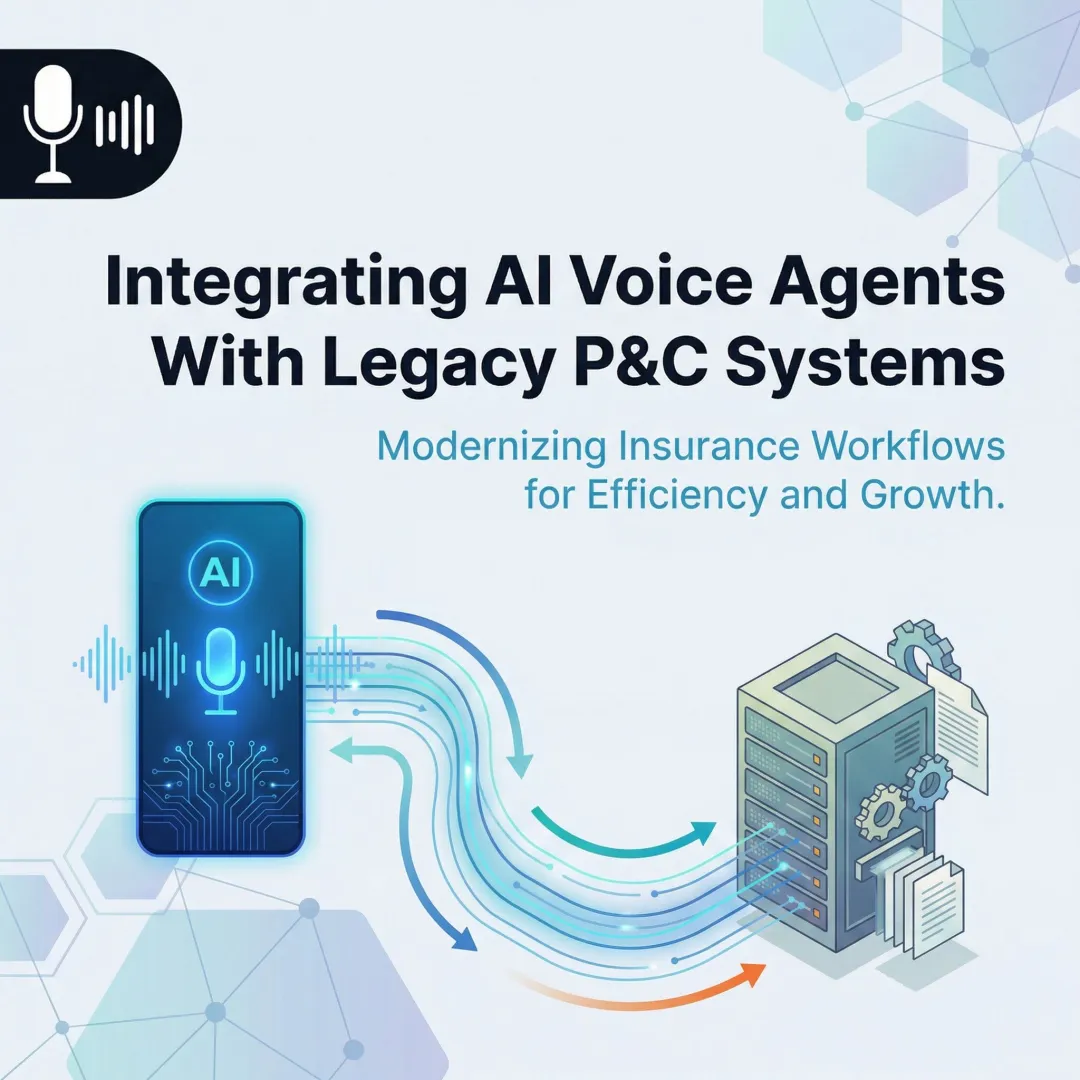

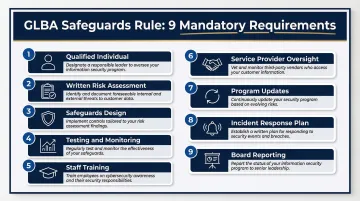

The Nine Mandatory Safeguards

The FTC's amended Safeguards Rule, finalized in 2021 with most new requirements effective June 2023, added specific technical requirements. Every covered financial institution must implement:

- Qualified Individual – Designate someone responsible for overseeing the security program

- Written Risk Assessment – Document foreseeable internal and external risks to customer information

- Safeguards Design – Implement access controls, encryption (transit and rest), data inventory, MFA, and secure disposal procedures

- Testing and Monitoring – Continuously monitor safeguards, or perform annual penetration testing plus vulnerability assessments at least every six months

- Staff Training – Provide security awareness training so personnel can carry out the security program

- Service Provider Oversight – Select capable vendors and require safeguards by contract

- Program Updates – Evaluate and adjust based on testing, monitoring, or changes

- Incident Response Plan – Establish written procedures for security events

- Board Reporting – The Qualified Individual must report in writing at least annually to the board of directors or equivalent governing body

For voice AI deployments, the Safeguards Design element translates into specific technical controls:

- Encrypt audio streams in transit using TLS 1.3; store data at rest with AES-256

- Restrict access to recordings and transcripts through role-based permissions

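- Require MFA for every individual who accesses voice data stores, not only administrators (the Safeguards Rule requires MFA for anyone accessing an information system)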

- Log every access event — human, API, or system — with timestamp and actor identity
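On the in-transit side, Python's standard `ssl` module can make an audio-streaming endpoint reject any protocol version older than TLS 1.3. The following is a minimal sketch. It assumes Python 3.7+ linked against an OpenSSL build that supports TLS 1.3. The function name and the certificate/key paths are hypothetical.

```python
import ssl
from typing import Optional

def make_audio_stream_context(certfile: Optional[str] = None,
                              keyfile: Optional[str] = None) -> ssl.SSLContext:
    """Server-side TLS context for an audio-streaming endpoint that refuses anything below TLS 1.3."""
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    if certfile is not None:
        # Hypothetical certificate/key paths; supply the endpoint's real chain in deployment.
        ctx.load_cert_chain(certfile, keyfile)
    return ctx

ctx = make_audio_stream_context()
print(ctx.minimum_version.name)  # TLSv1_3
```

A client that offers only TLS 1.2 or earlier fails the handshake instead of being silently downgraded. AES-256 encryption at rest is a separate control on the storage layer and is not shown here.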

Vendor Oversight Obligation

GLBA requires institutions to select service providers that maintain appropriate safeguards and to contractually require implementation of those safeguards. This creates a three-step due diligence process:

- Take reasonable steps to select capable vendors

- Require them by contract to implement safeguards

- Periodically assess vendors based on risk

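SOC 2 Type 2 certification is the most direct way to document this requirement. It gives institutions audited, third-party evidence that a vendor's controls were designed and operated effectively over a review period (typically 3 to 12 months), not just at a point in time. It supports the selection and periodic-assessment steps, but the contractual requirement still has to be written into the vendor agreement.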
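The periodic-assessment step is easy to let slip unless someone tracks it. The following is a minimal sketch that flags vendors due for review. It assumes a hypothetical three-tier risk rating with review intervals chosen only for illustration. The Safeguards Rule requires periodic assessment based on risk but does not set intervals.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical review intervals per risk tier; derive real values from your own risk assessment.
REVIEW_INTERVAL = {
    "high": timedelta(days=365),
    "medium": timedelta(days=730),
    "low": timedelta(days=1095),
}

@dataclass
class Vendor:
    name: str
    risk_tier: str                  # "high", "medium", or "low"
    contract_has_safeguards: bool   # contract requires the vendor to implement safeguards
    last_assessed: date

def needs_attention(vendors: list[Vendor], today: date) -> list[str]:
    """Vendors lacking contractual safeguards or past their risk-based review interval."""
    flagged = []
    for v in vendors:
        due = v.last_assessed + REVIEW_INTERVAL[v.risk_tier]
        if not v.contract_has_safeguards or today > due:
            flagged.append(v.name)
    return flagged

vendors = [
    Vendor("voice-platform", "high", True, date(2023, 5, 1)),
    Vendor("crm-connector", "medium", True, date(2024, 2, 1)),
    Vendor("tts-engine", "high", False, date(2024, 6, 1)),
]
print(needs_attention(vendors, today=date(2024, 9, 1)))  # ['voice-platform', 'tts-engine']
```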

Data Retention and Disposal

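Institutions must maintain procedures for securely disposing of customer information no later than two years after it was last used to provide a product or service, unless retention is required by law or necessary for legitimate business purposes. Applied to voice AI, that means establishing: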

- Defined retention schedules for audio files, transcripts, and conversation logs

- Documented deletion procedures at end of retention windows

- Verification that vendors enforce identical disposal practices

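Broker-dealers face longer minimums. FINRA Rule 4511 requires books and records to be preserved for six years when no other retention period is specified. SEC Rule 17a-4 requires electronic records to be kept either in non-rewriteable, non-erasable (WORM) format or in a system that maintains a complete time-stamped audit trail of all modifications and deletions. State laws may impose additional requirements.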
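Together, these rules determine how long a voice record can or must be kept. The following is a minimal sketch of the calculation. It assumes the only inputs are the record's creation date, its last-use date, and the longest legally required retention period measured from creation (for example, six years for a broker-dealer record). Business-purpose exceptions are not modeled. The function names are hypothetical.

```python
from datetime import date

def add_years(d: date, years: int) -> date:
    """Shift a date by whole years, mapping Feb 29 to Feb 28 in non-leap target years."""
    try:
        return d.replace(year=d.year + years)
    except ValueError:
        return d.replace(year=d.year + years, day=28)

def disposal_deadline(created: date, last_used: date, required_years: int = 0) -> date:
    """Latest date by which a voice record should be securely disposed of.

    Default: two years after last use (Safeguards Rule), extended when a legal
    retention minimum (modeled as required_years from creation) runs longer.
    """
    safeguards_default = add_years(last_used, 2)
    legal_minimum = add_years(created, required_years)
    return max(safeguards_default, legal_minimum)

# A lending call with no longer retention requirement, then a broker-dealer call (six years).
print(disposal_deadline(date(2024, 1, 10), date(2024, 3, 1)))     # 2026-03-01
print(disposal_deadline(date(2024, 1, 10), date(2024, 3, 1), 6))  # 2030-01-10
```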

30-Day Breach Notification

The FTC's 2023 amendment (effective May 2024) requires institutions to notify the FTC as soon as possible, and no later than 30 days after discovery, of a "notification event": unauthorized acquisition of unencrypted customer information involving at least 500 consumers.

This creates a practical readiness requirement. Institutions need to know in advance:

- Which systems hold customer voice data

- How to contain a breach quickly

- What the vendor's incident response SLA looks like

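Other regimes run on tighter clocks. Banking organizations must notify their primary federal regulator of qualifying computer-security incidents within 36 hours, and NYDFS-covered entities must notify the superintendent within 72 hours. Vendor contracts should therefore require breach notification within 72 hours at most, and well inside 36 hours for banking organizations.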
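The FTC trigger and deadline are simple enough to encode in an incident runbook. The following is a minimal sketch. It assumes unauthorized acquisition has already been established. It also follows our reading of the rule: encrypted data counts as unencrypted if the encryption key was also exposed, so confirm this against the rule text. All names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

FTC_WINDOW = timedelta(days=30)     # Safeguards Rule: no later than 30 days after discovery
BANK_WINDOW = timedelta(hours=36)   # federal bank computer-security incident notification
NYDFS_WINDOW = timedelta(hours=72)  # NYDFS Part 500

@dataclass
class Incident:
    discovered: datetime
    consumers_affected: int
    data_encrypted: bool
    key_compromised: bool  # assumption: encrypted data is treated as unencrypted if the key was exposed

def is_ftc_notification_event(incident: Incident) -> bool:
    """Unencrypted customer information involving at least 500 consumers (acquisition assumed)."""
    unencrypted = not incident.data_encrypted or incident.key_compromised
    return unencrypted and incident.consumers_affected >= 500

def ftc_deadline(incident: Incident) -> datetime:
    return incident.discovered + FTC_WINDOW

def vendor_sla_fits(vendor_sla: timedelta, regulator_window: timedelta) -> bool:
    """True if the vendor's notice obligation is no longer than the regulator's window."""
    return vendor_sla <= regulator_window

incident = Incident(datetime(2024, 7, 1, 9, 0), 1200, data_encrypted=True, key_compromised=True)
print(is_ftc_notification_event(incident))                 # True
print(ftc_deadline(incident))                              # 2024-07-31 09:00:00
print(vendor_sla_fits(timedelta(hours=72), BANK_WINDOW))   # False
```

A 72-hour vendor SLA meets the NYDFS window but not the 36-hour bank window. For that reason, the SLA should be set against the strictest regime that applies to the institution.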

SOC 2 for Financial Voice AI: The Controls That Actually Matter

Understanding the AICPA Framework

SOC 2 is an attestation framework developed by the AICPA, and SOC 2 reports have become a routine enterprise procurement requirement. A SOC 2 report covers a service organization's controls relevant to security, availability, processing integrity, confidentiality, or privacy.

Type 1 vs. Type 2:

- Type 1 validates control design at a single point in time

- Type 2 validates operating effectiveness over a review period, typically 3 to 12 months

Financial services deployments should require Type 2 as the minimum standard. Type 1 only confirms controls were designed appropriately on audit day—not that they actually worked throughout the year.

Security Common Criteria

Security is the mandatory baseline (Common Criteria) in every SOC 2 audit. Auditors specifically examine:

Access Management (CC6.1-6.8):

- Logical access to audio storage is restricted

- Identification and authentication (MFA) are managed

- Encryption protects data in transit and at rest

Operations Monitoring (CC7.1-7.5):

- Detection and monitoring procedures identify vulnerabilities

- Anomalies are analyzed for security events

- Incident response programs are executed

Change Control (CC8.1):

- Changes to infrastructure, data, software, and procedures are authorized, tested, and approved

For voice AI deployments, these controls translate directly to audited verification of encryption key management, network security for real-time audio streams, and vulnerability management across the STT/LLM/TTS pipeline. That last point matters: each handoff between components is a potential exposure point that a Security-only audit should cover.

Confidentiality Trust Principle

Financial services deployments should request the Confidentiality principle in addition to Security. Auditors verify that covered data is identified, protected, and disposed of according to commitments.

Voice conversations routinely capture information your institution classifies as confidential — account details, loan discussions, customer grievances — that a generic voice AI vendor may never have independently flagged. Without the Confidentiality principle in scope, no auditor checks whether the vendor's disposal and classification commitments match your data's actual sensitivity.

What a SOC 2 Report Produces

The audit report documents:

- Specific controls tested by the auditor

- Any exceptions noted (failures in design or operation)

- The auditor's opinion on control effectiveness

What to review:

- Does the scope cover production systems processing your voice data — or only the vendor's corporate IT infrastructure?

- Do any exceptions touch encryption, access control, or logging? Even minor exceptions in these areas warrant follow-up.

- What Complementary User Entity Controls (CUECs) does the vendor require you to implement on your end?
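These three questions can be applied the same way to every vendor report. The following is a minimal sketch. It assumes you have transcribed the relevant parts of the report by hand into a hypothetical `Soc2Review` record, because SOC 2 reports are documents and not a machine-readable format. The system names and exceptions are illustrative.

```python
from dataclasses import dataclass

# CC6 covers logical access and encryption; CC7 covers monitoring, logging, and incident response.
PRIORITY_PREFIXES = ("CC6.", "CC7.")

@dataclass
class Soc2Review:
    """Hypothetical hand-entered summary of a vendor's SOC 2 report."""
    in_scope_systems: set[str]
    exceptions: list[tuple[str, str]]  # (criterion, auditor's description)
    cuecs: list[str]                   # Complementary User Entity Controls you must operate

def review_findings(report: Soc2Review, systems_with_voice_data: set[str]) -> dict:
    return {
        "out_of_scope": sorted(systems_with_voice_data - report.in_scope_systems),
        "priority_exceptions": [e for e in report.exceptions if e[0].startswith(PRIORITY_PREFIXES)],
        "cuecs": list(report.cuecs),
    }

report = Soc2Review(
    in_scope_systems={"corporate-it", "voice-api"},
    exceptions=[("CC6.2", "Terminated user kept access to recording storage"),
                ("CC1.4", "Background check completed late")],
    cuecs=["Customer provisions and deprovisions its own users"],
)
findings = review_findings(report, {"voice-api", "recording-store", "llm-gateway"})
print(findings["out_of_scope"])         # ['llm-gateway', 'recording-store']
print(findings["priority_exceptions"])  # [('CC6.2', 'Terminated user kept access to recording storage')]
```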

Availability for Financial Voice AI

Financial services SLAs often require high availability for customer-facing systems. A SOC 2 report with the Availability trust principle gives documented evidence that the vendor has tested uptime, redundancy, and failover procedures.

Without this documentation, a vendor's uptime claims are self-reported. For customer-facing voice AI where downtime means missed calls and unresolved transactions, that distinction matters at contract time.

How GLBA and SOC 2 Complement Each Other

The Key Conceptual Distinction

GLBA is an obligation on the financial institution. SOC 2 is an attestation report a vendor obtains from an independent CPA firm.

Deploying a SOC 2 Type 2-certified voice AI vendor does not transfer the institution's GLBA obligations. It does provide the documented third-party assurance that GLBA's vendor oversight requirement calls for, alongside the contractual safeguards the institution must still require.

Practical Mapping

| GLBA Requirement | SOC 2 Criteria | What It Covers |
|---|---|---|
| Encryption in transit and at rest | Security CC6 | Validates encryption implementation and key management |
| Disposal requirements | Confidentiality C1.2 | Verifies data deletion and retention commitments |
| Monitoring and testing | Security CC7 | Confirms detection, monitoring, and incident response |
| Access controls | Security CC6 | Documents RBAC and authentication controls |
| Change management | Security CC8 | Validates authorization and testing of system changes |

The Residual Compliance Gap

SOC 2 doesn't cover GLBA-specific obligations that institutions must own internally:

What SOC 2 does NOT replace:

- Designation of a Qualified Individual

- The institution's own written information security program (WISP)

- Entity-specific written risk assessment, updated periodically

- Staff security awareness training

- Board reporting requirements

Even with a fully SOC 2 Type 2-certified vendor, the institution retains these non-transferable statutory obligations.

Regulatory Acceptance of SOC 2

Federal banking regulators explicitly state in their Interagency Guidance on Third-Party Relationships that banking organizations may review SOC reports and independent certifications to assess a third party's operational risk management and internal controls.

SOC 2 reports are therefore an accepted input to vendor due diligence. Institutions should treat them as a starting point for oversight, not a replacement for the internal obligations covered above.

Key Compliance Risks in Financial Voice AI

Third-Party Data Flow Risk

Most voice AI systems involve multiple vendors in the pipeline:

Telephony provider → STT provider → LLM → TTS → CRM integration

Each handoff is a potential GLBA exposure point. Financial institutions must map the complete data flow and verify that every sub-processor handling customer financial information maintains adequate safeguards, not just the primary vendor.

One underappreciated issue: voice AI vendors often use the "carve-out" method in SOC 2 audits, excluding cloud hosts and LLM sub-processors from audit scope entirely. When a vendor carves out a sub-processor, your compliance team must obtain and review that sub-processor's own SOC 2 report separately.
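A data-flow map becomes more useful when each hop records its audit coverage. The following is a minimal sketch. It assumes a hypothetical `Hop` record per pipeline stage, with placeholder vendor names. It flags every stage that touches customer information and is neither in the primary vendor's SOC 2 scope nor covered by a sub-processor report you have reviewed.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Hop:
    """One stage of the voice pipeline (hypothetical fields)."""
    stage: str
    vendor: str
    handles_customer_info: bool
    in_primary_report_scope: bool       # False when the primary vendor carved this sub-processor out
    own_report_reviewed: Optional[str]  # description of a reviewed SOC 2 report, or None

PIPELINE = [
    Hop("telephony", "ExampleTel", True, True, None),
    Hop("stt", "ExampleSTT", True, True, None),
    Hop("llm", "ExampleLLM", True, False, None),
    Hop("tts", "ExampleTTS", True, False, "SOC 2 Type 2, period ending 2024-06-30"),
    Hop("crm", "ExampleCRM", True, False, None),
]

def coverage_gaps(pipeline: list[Hop]) -> list[str]:
    """Stages that touch customer information with no audited coverage on file."""
    return [f"{h.stage} ({h.vendor})" for h in pipeline
            if h.handles_customer_info
            and not h.in_primary_report_scope
            and h.own_report_reviewed is None]

print(coverage_gaps(PIPELINE))  # ['llm (ExampleLLM)', 'crm (ExampleCRM)']
```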

Verbal Consent and Recording Law Risk

Beyond GLBA and SOC 2, voice AI intersects with state wiretapping laws. California, Florida, and Illinois are among the states that require all-party consent to record confidential or private communications.

In Ambriz v. Google, a federal court allowed California Invasion of Privacy Act claims to proceed against an AI contact center vendor. The court applied the "capability test," finding that because the AI had the technical capability to use intercepted call data for its own purposes, it could be classified as an unauthorized third-party eavesdropper—regardless of whether it actually used the data.

To reduce exposure under these statutes, voice AI deployments should include:

- Configurable consent disclosure scripts triggered at call start

- Call recording consent logs with timestamps and actor identity

- Contractual prohibitions on using customer data to train foundation models
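The first two controls can be combined at call start. The following is a minimal sketch. It assumes consent arrives from some upstream prompt (for example, an IVR keypress) as a boolean, and it uses an in-memory stand-in for durable storage. The function and class names are hypothetical. It applies the all-party standard to every call instead of branching by state. That is simpler, and stricter than some states require.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

@dataclass
class ConsentLog:
    """In-memory stand-in for a durable consent log (hypothetical)."""
    entries: list[dict] = field(default_factory=list)

    def record(self, call_id: str, actor: str, event: str) -> None:
        self.entries.append({
            "call_id": call_id,
            "actor": actor,  # "system" for the disclosure, "caller" for the response
            "event": event,
            "timestamp": datetime.now(timezone.utc).isoformat(),
        })

def start_call(call_id: str, caller_consented: bool, log: ConsentLog) -> bool:
    """Play the disclosure, log the caller's response, and return whether recording may begin."""
    log.record(call_id, "system", "disclosure_played")
    log.record(call_id, "caller", "consent_given" if caller_consented else "consent_declined")
    # All-party standard for every call: no consent means no recording and no audio sent to the AI pipeline.
    return caller_consented
```

If the caller declines, the call can be routed to a human agent without recording.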

Model Inference Log Risk

Many institutions don't realize that LLM conversation logs—which capture the full transcript context sent to the model for inference—may be retained by the model provider separately from the voice AI vendor's own storage.

Before deploying any LLM-backed voice system, verify:

- Whether the LLM provider retains inference logs and for how long

- Whether that data is used to train future models

- Whether sub-processor data processing agreements are in place and prohibit training on customer data without explicit consent
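The provider's answers to these questions can be checked against internal policy. The following is a minimal sketch. It assumes the answers have been collected from the provider's contract and DPA into a hypothetical `LlmProviderTerms` record. The 30-day retention ceiling is an illustrative policy value, not a regulatory figure.

```python
from dataclasses import dataclass

@dataclass
class LlmProviderTerms:
    """Answers collected from an LLM provider's contract and DPA (hypothetical fields)."""
    provider: str
    retains_inference_logs: bool
    retention_days: int            # 0 if logs are not retained
    trains_on_customer_data: bool
    dpa_prohibits_training: bool

def terms_issues(terms: LlmProviderTerms, max_retention_days: int = 30) -> list[str]:
    """List the answers that conflict with the institution's policy."""
    issues = []
    if terms.retains_inference_logs and terms.retention_days > max_retention_days:
        issues.append(f"inference logs kept {terms.retention_days} days (policy maximum {max_retention_days})")
    if terms.trains_on_customer_data:
        issues.append("customer data is used to train models")
    if not terms.dpa_prohibits_training:
        issues.append("DPA does not prohibit training on customer data")
    return issues

print(terms_issues(LlmProviderTerms("ExampleLLM", True, 90, False, True)))
# ['inference logs kept 90 days (policy maximum 30)']
```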

Implementing Compliant Voice AI: A Practical Framework

Architecture Decision: Self-Hosted vs. Cloud

For the most sensitive financial voice use cases where GLBA exposure is highest, self-hosting the voice AI stack on institution-controlled or private cloud infrastructure is the strongest compliance posture.

Why self-hosting matters:

- Eliminates third-party data residency ambiguity entirely

- Gives institutions direct control over audio files, transcripts, and inference logs

- Simplifies GLBA compliance by reducing the number of vendors in data flow diagrams

- Enables enforcement of institution-specific retention policies aligned to FINRA, SEC, and state law requirements

Dograh AI supports self-hosted, on-premises deployment with full data sovereignty, giving institutions direct control over how customer information is stored and safeguarded.

Encryption and Access Control Requirements

Compliant deployments require:

Encryption Standards:

- TLS 1.3 for audio streams in transit (NIST SP 800-52 Rev. 2 directs federal agencies to support TLS 1.3 by January 1, 2024)

- AES-256 for audio and transcript storage at rest, per the FIPS 197 federal encryption standard

Access Controls:

- Role-based access controls (RBAC) limiting who can retrieve recordings or transcripts

- MFA for every user of voice data stores, not just administrators

- Least-privilege access policies
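These three access controls fit in a single authorization check. The following is a minimal sketch with a hypothetical role map and permission strings. It denies by default, grants only explicitly listed permissions, and requires MFA for every session. That is stricter than administrator-only MFA, and it matches the Safeguards Rule's MFA requirement for anyone accessing an information system.

```python
from dataclasses import dataclass

# Hypothetical role map: each role receives only the permissions its job requires.
ROLE_PERMISSIONS = {
    "qa_reviewer": {"transcript:read"},
    "compliance_officer": {"transcript:read", "recording:read"},
    "platform_admin": {"retention:configure"},
}

@dataclass(frozen=True)
class Session:
    user: str
    role: str
    mfa_verified: bool

def authorize(session: Session, permission: str) -> bool:
    """Deny by default; require MFA for every session and an explicit role grant."""
    if not session.mfa_verified:
        return False
    return permission in ROLE_PERMISSIONS.get(session.role, set())
```

Note that `platform_admin` can change retention settings but cannot read recordings. Least privilege keeps system administration separate from access to customer data.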

Audit Logging Requirement

Every access to a voice recording or transcript — by human, by API, or by another system — requires a log entry capturing:

- Timestamp

- Actor identity

- Action performed

This supports both the SOC 2 Security monitoring criteria (CC7) and the Safeguards Rule, which explicitly requires institutions to monitor and log the activity of authorized users. The FFIEC Information Security booklet similarly expects network and host activities to be recorded and sent to a central logging repository, with strict access controls on log files.
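The entry format is straightforward to implement. The following is a minimal in-memory sketch; in production, entries would go to the central repository described above. The hash chaining goes beyond what the source requires and is included as an illustrative tamper-evidence technique. It makes edits or reordering of stored entries detectable, but it is not tamper-proof. Class and field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

class AuditLog:
    """Append-only access log in which each entry carries the hash of the one before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def record(self, actor: str, actor_type: str, action: str, resource: str) -> dict:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "actor_type": actor_type,  # "human", "api", or "system"
            "action": action,
            "resource": resource,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; an edited or reordered entry breaks it."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True

log = AuditLog()
log.record("jdoe", "human", "read", "recording/123")
log.record("qa-service", "api", "read", "transcript/123")
print(log.verify())  # True
```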

Dograh AI includes a complete audit trail as a built-in feature — and those logs themselves are subject to retention rules, which makes the next workflow equally critical.

Data Retention and Deletion Workflow

Steps to implement:

- Define retention periods driven by state law, FINRA rules (six years where no other period is specified), SEC rules (WORM storage or a time-stamped audit trail), the Safeguards Rule's two-year disposal default, or internal policy

- Implement automated deletion at the end of retention windows

- Document deletion execution with audit logs confirming data was securely disposed

- Verify vendor enforcement of the same retention and deletion policies

Voice AI platforms that don't support configurable retention policies create compliance risk that compounds over time. When evaluating vendors, confirm they can accommodate your specific regulatory retention requirements.
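The automated-deletion and documentation steps can share a single scheduled job. The following is a minimal sketch. It assumes each stored artifact already carries a `delete_after` date from the retention schedule. A plain dictionary stands in for the real storage system's secure-delete operation. All names are hypothetical.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class StoredItem:
    item_id: str
    layer: str          # "audio", "transcript", or "inference_log"
    delete_after: date  # end of the retention window from the schedule

def run_deletion_sweep(store: dict[str, StoredItem], today: date, deletion_log: list[dict]) -> list[str]:
    """Delete items whose retention window has ended and record each deletion."""
    expired = [item for item in store.values() if item.delete_after <= today]
    for item in expired:
        del store[item.item_id]  # stand-in for the storage system's secure-delete operation
        deletion_log.append({"item_id": item.item_id, "layer": item.layer,
                             "deleted_on": today.isoformat()})
    return [item.item_id for item in expired]

store = {
    "a-101": StoredItem("a-101", "audio", date(2024, 1, 1)),
    "t-101": StoredItem("t-101", "transcript", date(2030, 1, 1)),
}
records: list[dict] = []
print(run_deletion_sweep(store, date(2024, 6, 1), records))  # ['a-101']
print(sorted(store))                                         # ['t-101']
```

The deletion records are themselves audit evidence. The same job should run against vendor-held copies, either through a vendor API or through vendor attestations.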

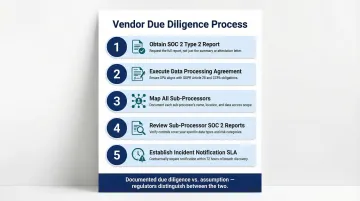

Vendor Due Diligence Process

Five-step framework:

- Obtain SOC 2 Type 2 report – Review scope and exceptions, especially for encryption, access control, and logging

- Execute a Data Processing Agreement (DPA) – Include GLBA-compliant service provider language requiring safeguards

- Map all sub-processors – Identify every vendor in the data flow, including cloud hosts and LLM providers

- Review sub-processor SOC 2 reports – If the vendor uses the carve-out method, obtain independent reports for each critical sub-processor

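- Establish incident notification SLA – Contractually require notice within 72 hours or less (shorter for banks subject to the 36-hour federal notification rule) so the institution can meet its own deadlines, including GLBA's 30-day FTC notification requirement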

Walking through this process before signing any vendor contract is what separates documented due diligence from assumption — and regulators distinguish between the two.
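The five steps can serve as a gate before signature. The following is a minimal sketch using a hypothetical `DueDiligence` record. Passing `max_notice_hours=36` or lower models the tighter window that applies to banking organizations.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class DueDiligence:
    """Status of the five pre-signing steps for one vendor (hypothetical fields)."""
    soc2_type2_reviewed: bool = False
    dpa_executed: bool = False
    subprocessors_mapped: bool = False
    carved_out: list[str] = field(default_factory=list)
    carved_out_reports_reviewed: set[str] = field(default_factory=set)
    incident_notice_hours: Optional[int] = None

def open_items(dd: DueDiligence, max_notice_hours: int = 72) -> list[str]:
    """Return the steps still blocking contract signature."""
    items = []
    if not dd.soc2_type2_reviewed:
        items.append("review SOC 2 Type 2 report")
    if not dd.dpa_executed:
        items.append("execute DPA with GLBA service-provider language")
    if not dd.subprocessors_mapped:
        items.append("map all sub-processors")
    for name in dd.carved_out:
        if name not in dd.carved_out_reports_reviewed:
            items.append(f"review SOC 2 report for carved-out sub-processor {name}")
    if dd.incident_notice_hours is None or dd.incident_notice_hours > max_notice_hours:
        items.append(f"set incident notification SLA at or under {max_notice_hours} hours")
    return items

dd = DueDiligence(True, True, True, ["cloud-host", "llm-provider"], {"cloud-host"}, 96)
for item in open_items(dd):
    print(item)
```

An empty result means the documented due diligence is complete. Any remaining item is what a regulator would treat as assumption rather than evidence.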

Frequently Asked Questions

Does GLBA apply to voice AI systems used by financial institutions?

Yes. GLBA applies to the financial institution deploying the voice AI, not directly to the AI vendor. However, institutions must contractually require their voice AI vendors to implement appropriate safeguards, making vendor compliance a GLBA obligation for the institution.

What's the difference between SOC 2 Type 1 and Type 2 for voice AI vendors?

Type 1 validates that controls were designed appropriately at a single point in time. Type 2 validates that those controls operated effectively over a review period, typically 3 to 12 months. Financial services buyers should require Type 2 as the minimum standard.

Can a cloud-hosted voice AI platform be GLBA-compliant?

Yes, if the vendor is contractually bound, holds SOC 2 Type 2 certification, and a data flow map confirms no uncontrolled third-party sub-processor access. However, self-hosted deployments offer stronger data sovereignty by eliminating third-party data residency ambiguity entirely.

Is SOC 2 compliance sufficient for financial services voice AI deployments?

No. SOC 2 supports the vendor oversight documentation requirement under GLBA but does not replace the institution's own obligations. Those include a written information security program, periodic risk assessments, designation of a Qualified Individual, staff training, and board reporting.

What voice data does GLBA's Safeguards Rule cover?

The Safeguards Rule covers "customer information": any record containing nonpublic personal information about a customer, including information a customer provides to obtain a financial product or service. In voice AI contexts, this includes spoken account numbers, verbal authorizations, credit inquiries, and transcripts of those interactions.

How should financial institutions handle LLM sub-processor data retention under GLBA?

Institutions must identify every sub-processor that receives customer voice data (including LLM providers used for inference), execute DPAs with each, and contractually prohibit those providers from retaining or training on that data. Verify compliance annually through vendor attestations and contractual audit rights.