Introduction

Agent turnover in contact centers runs roughly 30% to 45% annually, with replacement costs of $10,000–$20,000 per agent. That math alone creates a chronic staffing problem, but the deeper issue is demand: seasonal surges, product launches, and service outages generate call volumes that no hiring cycle can absorb fast enough. Every dropped connection or extended queue translates directly into lost revenue and eroded customer trust.

Traditional scaling strategies make the problem worse. Adding headcount is slow and expensive. Expanding rigid IVR menus frustrates callers without actually resolving more issues. Operations teams end up stuck between two bad options: overstaff year-round and inflate budgets, or understaff and watch abandonment rates spike during the moments that matter most.

This guide covers what scalable voice automation means at a technical level, the non-negotiable platform requirements for production-grade deployment, and how to evaluate solutions that maintain performance under real concurrent load—not just in controlled demo environments.

TLDR

- Scalable voice AI demands sustained accuracy under load, sub-500ms latency, and predictable per-unit costs as volume grows

- Legacy IVRs route but don't resolve, while human-only staffing creates fixed-capacity bottlenecks that fail during demand spikes

- Evaluate platforms on concurrency limits, compliance certifications, CRM integration depth, and pricing transparency before committing

- Open-source platforms like Dograh AI offer cost control and data sovereignty advantages without vendor lock-in or unpredictable platform fees

- Pilot-first deployment starting at 100–500 concurrent calls, then scaling incrementally, is the most reliable path to production success

Why High-Volume Contact Centers Struggle to Scale Voice

The Finite Capacity Problem

Human agent staffing operates under a hard constraint: each agent handles exactly one call at a time. Rapid scale-out during demand spikes depends entirely on hiring and training cycles that take weeks or months, creating a gap between demand and available capacity.

Contact centers face 30% to 45% annual turnover rates, with projections hitting 31.2% in 2024. For a 100-agent operation, that translates to over $700,000 in annual replacement costs when factoring in recruitment, training, and lost productivity during ramp periods.

The financial calculus becomes impossible: overstaffing for peak periods inflates budgets year-round, while understaffing causes abandoned calls and customer churn during the moments when reliability matters most.

Why Legacy IVRs Fail as a Scaling Solution

Legacy IVR systems deflect calls through scripted menus but cannot resolve customer issues. Their rigid navigation trees break down when callers use natural language or vary how they express intent — and that friction compounds quickly.

Industry benchmarks put acceptable abandonment rates below 5%, with 2% considered best-in-class performance. Outdated IVRs that trap customers in endless loops push those numbers in the wrong direction and directly damage CSAT scores. IVRs shift the bottleneck; they don't remove it.

Compounding Operational Risks

High call volumes create cascading failures beyond simple capacity constraints:

- Agent burnout from sustained overload accelerates turnover, creating knowledge loss precisely when institutional expertise matters most

- Inconsistent service quality across shifts and geographies undermines brand trust during peak demand events

- Training gaps from rapid hiring produce agents who lack the expertise to handle complex queries, forcing escalations that consume senior staff time

- Revenue leakage from dropped calls and extended wait times hits hardest during product launches and service recovery events

What "Scalable" Actually Means for Contact Center Voice AI

Defining Scalability in Measurable Terms

Scalable voice AI maintains three performance dimensions simultaneously as concurrent call volume grows from hundreds to tens of thousands: accuracy (measured by Word Error Rate), latency (end-to-end response time), and resolution quality (successful issue resolution without escalation). Simple "cloud capacity" addresses compute provisioning — it says nothing about conversation quality degradation under load.

Most vendors optimize for demo environments with clean audio and controlled scenarios. Production environments process spontaneous conversation at 8kHz telephony bandwidth with background noise, cross-talk, and accent variation. Independent benchmarks report ASR error rates 2.8x to 5.7x higher in production telephony environments than in controlled lab conditions.

Concurrent Call Handling and WER Degradation

Accuracy degradation under load is the most common hidden failure mode in voice AI deployments. Buyers should demand NDA-protected load testing results showing Word Error Rate at 1,000, 5,000, and 10,000+ concurrent calls before signing any contract.

A platform that delivers 95% accuracy in testing may drop to 78% at 5,000 concurrent sessions if its architecture cannot maintain compute resources per conversation as load increases. That 17-point drop translates directly into misunderstood intents, failed resolutions, and frustrated callers.
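
To compare vendor WER figures on equal terms, it helps to know how the metric is computed. The sketch below is a minimal illustration, assuming simple lowercase, whitespace-based tokenization; the function name `word_error_rate` is hypothetical, and real evaluations usually apply heavier text normalization first.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = word-level edit distance / number of words in the reference."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] is the edit distance between the reference words processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# One substitution ("my" -> "the") and one deletion ("today")
# over five reference words gives a WER of 0.4.
sample_wer = word_error_rate(
    "please cancel my order today",
    "please cancel the order",
)
```

Running the same function over transcripts captured at each concurrency tier gives a like-for-like view of how recognition quality holds up as load grows.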

Key questions to ask vendors:

- What is your documented WER at our target concurrent call volume?

- How does latency change between 100 and 10,000 concurrent calls?

- Can you provide customer references who have sustained our target volume in production?

Latency: The Conversational Breaking Point

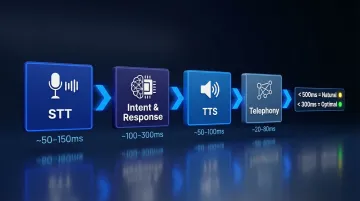

Psycholinguistic research shows natural human turn-taking operates with median inter-turn gaps between 0 and 300ms. The ITU-T G.114 standard recommends that one-way delay not exceed 400ms, noting that delays above 300ms cause noticeable difficulties. Treat 500ms end-to-end latency as the upper limit for natural conversation flow and sub-300ms as the target for the best experience.

When voice AI exceeds this window, callers perceive the system as broken or unresponsive — leading to abandonment or frustrated demands for human transfer. The latency budget spans four components:

- Speech-to-Text (STT): Transcription time from audio to text

- LLM processing: Intent recognition and response generation

- Text-to-Speech (TTS): Audio synthesis and playback

- Network transit: Round-trip time across telephony infrastructure

Each component can pass its individual spec while the cumulative latency still breaches the 500ms threshold and degrades the caller experience. Architects must budget for the full chain, not each link in isolation.
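
The point about cumulative latency can be made concrete with a small budget check. This is a sketch under stated assumptions: the per-stage limits and measured values are invented for illustration, and `StageLatency` and `check_budget` are hypothetical names rather than part of any platform's API.

```python
from dataclasses import dataclass

BUDGET_MS = 500  # end-to-end ceiling discussed in the text


@dataclass(frozen=True)
class StageLatency:
    name: str
    measured_ms: int     # observed latency for this stage
    stage_limit_ms: int  # hypothetical per-stage spec


def check_budget(stages, budget_ms=BUDGET_MS):
    """Return (total latency, every stage within spec, chain within budget)."""
    total = sum(s.measured_ms for s in stages)
    stages_ok = all(s.measured_ms <= s.stage_limit_ms for s in stages)
    return total, stages_ok, total <= budget_ms


# Illustrative numbers only: each stage passes its own limit,
# yet the full chain adds up to 590 ms.
stages = [
    StageLatency("stt", 180, 200),
    StageLatency("llm", 230, 250),
    StageLatency("tts", 110, 120),
    StageLatency("network", 70, 80),
]
total_ms, stages_ok, chain_ok = check_budget(stages)
```

Here `stages_ok` is true while `chain_ok` is false, which is exactly the failure mode a per-component review misses.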

Cost Predictability at Scale

Per-minute pricing ties monthly spend directly to call volume and handle time, so costs swing whenever either one moves. A platform charging $0.15 per minute for a 50,000-call-per-month operation (averaging 6 minutes per call) generates $45,000 in monthly costs, and the same pricing at 200,000 calls jumps to $180,000 without volume discounts.
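
The arithmetic behind those figures is simple enough to script, which makes it easy to test other volume and handle-time scenarios. The function below is a minimal sketch; `monthly_cost_dollars` is a hypothetical helper, and the rate is expressed in whole cents to avoid floating-point rounding.

```python
def monthly_cost_dollars(calls: int, avg_minutes: int, rate_cents_per_min: int) -> float:
    """Monthly spend under flat per-minute pricing, with no volume discount."""
    total_cents = calls * avg_minutes * rate_cents_per_min
    return total_cents / 100


baseline = monthly_cost_dollars(50_000, 6, 15)     # 300,000 minutes at $0.15
scaled = monthly_cost_dollars(200_000, 6, 15)      # 1,200,000 minutes at $0.15
longer_calls = monthly_cost_dollars(50_000, 7, 15) # one extra minute per call
```

The third scenario is an assumption added for illustration: at the same call volume, a one-minute rise in average handle time adds $7,500 per month.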

Comparative Pricing Models (50,000+ Monthly Calls):

| Vendor | Telephony/Voice | AI Processing | Notes |
|---|---|---|---|
| Amazon Connect | $0.018/min inbound | $0.015/min analytics | Base rates; custom quotes for volume |
| Google CCAI | $0.06/min voice agents | $0.016/min STT | Separate charges compound quickly |
| Twilio | $0.0085/min inbound | $0.07/min AI routing | Bundled AI simplifies billing |

Hidden platform fees and separate charges for STT, TTS, and LLM orchestration compound rapidly. Platforms offering per-conversation flat fees or transparent passthrough pricing — no markup on underlying AI services — deliver more predictable budgets for high-volume operations.
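
To see how separate line items stack up, the sketch below adds the two per-minute rates from the table for each vendor. It assumes both charges apply to every minute of every call, which may not match how a given vendor actually bills; the `RATES` mapping and `stacked_monthly_cost` are illustrative names, and real quotes will differ.

```python
from decimal import Decimal

# (telephony or voice rate, AI processing rate) in dollars per minute,
# copied from the comparison table above.
RATES = {
    "Amazon Connect": (Decimal("0.018"), Decimal("0.015")),
    "Google CCAI": (Decimal("0.06"), Decimal("0.016")),
    "Twilio": (Decimal("0.0085"), Decimal("0.07")),
}


def stacked_monthly_cost(minutes: int) -> dict[str, Decimal]:
    """Sum both per-minute line items and multiply by monthly minutes."""
    return {
        vendor: (voice + ai) * minutes
        for vendor, (voice, ai) in RATES.items()
    }


# 50,000 calls at 6 minutes each is 300,000 minutes per month.
costs = stacked_monthly_cost(300_000)
```

`Decimal` is used so that small per-minute rates multiply out without binary floating-point drift.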

Elastic Cloud Infrastructure vs. Fixed Capacity

Cloud-native architectures use microservices and auto-scaling groups to handle demand spikes dynamically. Genesys Cloud, for example, uses Elastic Load Balancers and auto-scaling groups to add or remove resources when threshold policies are exceeded, maintaining performance without pre-provisioning excess capacity year-round.
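
A threshold-based scaling policy can be sketched in a few lines. This is a generic illustration, not Genesys's or any cloud provider's actual policy: the per-instance capacity, 70% utilization target, and instance bounds are all assumed values.

```python
def desired_instances(
    concurrent_calls: int,
    calls_per_instance: int,
    target_utilization_pct: int = 70,  # assumed headroom target
    min_instances: int = 2,            # assumed floor for redundancy
    max_instances: int = 200,          # assumed account or budget cap
) -> int:
    """Instances needed to keep each one at or below the target utilization."""
    if calls_per_instance <= 0 or not 0 < target_utilization_pct <= 100:
        raise ValueError("capacity and utilization target must be positive")
    numerator = concurrent_calls * 100
    denominator = calls_per_instance * target_utilization_pct
    needed = -(-numerator // denominator)  # integer ceiling division
    return max(min_instances, min(max_instances, needed))


quiet_hours = desired_instances(0, 50)
normal_load = desired_instances(1_000, 50)
launch_spike = desired_instances(100_000, 50)
```

The cap matters as much as the floor: in this sketch a spike to 100,000 concurrent calls is clamped at the maximum, which is the point where queueing or degradation would begin.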

On-premises systems face physical trunk and port limits, requiring weeks or months of hardware procurement to scale capacity. For contact centers dealing with seasonal surges or rapid growth, that procurement lag is a direct revenue risk.

Must-Have Technical Requirements for Scalable Voice AI

Compliance and Data Sovereignty

Regulated industries require verified compliance certifications before voice AI can process customer conversations:

Critical certifications:

- SOC 2 Type II: Independent audit over 6–12 months verifying security controls are operating effectively

- HIPAA BAA: Cloud providers maintaining ePHI must execute a Business Associate Agreement—even for "no-view" encrypted services

- GDPR (Article 9): Biometric data processing for unique identification requires explicit consent or a strict enumerated exception; cross-border transfers need appropriate safeguards

- PCI DSS v4.0.1: Prohibits storage of Sensitive Authentication Data (including CVV) after authorization regardless of encryption—audio recordings must not capture card verification details

Encryption standards:

- AES-128, AES-192, or AES-256 at rest (NIST SP 800-57)

- TLS 1.2 minimum with TLS 1.3 support in transit (NIST SP 800-52 Rev 2)
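
On the client side, the in-transit requirement can be enforced in code rather than left to defaults. The sketch below uses Python's standard `ssl` module; `make_client_context` is a hypothetical helper name, and whether TLS 1.3 is negotiated depends on the local OpenSSL build and the server.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Client TLS context that refuses anything older than TLS 1.2."""
    ctx = ssl.create_default_context()  # enables cert and hostname checks
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


tls_context = make_client_context()
```

The context can then be passed to any standard-library client that accepts one, for example `urllib.request.urlopen(url, context=tls_context)`.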

For teams handling particularly sensitive data, self-hosted or on-premises deployment options offer additional data sovereignty control. Dograh AI holds SOC 2, HIPAA, GDPR, and PCI DSS certifications and supports both cloud and self-hosted deployment for organizations requiring full on-premises data control.

Deep CRM and Telephony Integration

Voice AI that cannot access live CRM context during a call cannot personalize interactions or resolve issues requiring account data. Effective integration requires four layers:

1. Telephony/CCaaS platform compatibility:

- Genesys, Five9, Talkdesk, Amazon Connect

- SIP trunk connectivity for self-hosted deployments

- Inbound/outbound call handling with call control APIs

2. Bidirectional CRM sync:

- Salesforce, HubSpot, Zendesk

- Real-time data retrieval during active calls

- Post-call activity logging and contact updates

3. Knowledge base access:

- Internal documentation repositories

- FAQ databases

- Product catalogs and technical specifications

4. Backend system connectivity:

- Order management and billing APIs (REST, webhooks)

- Authentication and identity verification services

- Payment processing integrations

Platforms offering pre-built connectors reduce implementation timelines from 14–28 weeks to 6–12 weeks for typical deployments.

Concurrency Limits and SLA Commitments

Default concurrency limits vary dramatically across platforms. Amazon Connect defaults to 10 concurrent active calls per instance (adjustable via service quotas), while enterprise CCaaS platforms may cap at 25–100 simultaneous sessions without custom negotiation.

Contractual considerations:

- Negotiate explicit concurrency commitments in writing before signing

- Clarify uptime SLAs: 99.9% allows 43 minutes downtime per month; 99.99% allows 4.3 minutes; 99.999% allows 26 seconds

- Define remedies for SLA breaches—service credits should be tiered (10% credit below 99.99%, 100% credit below 97%)

- Verify whether concurrency billing uses peak sustained load or instantaneous spikes (some platforms ignore peaks shorter than 30 minutes)
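
The downtime allowances and credit tiers above are easy to verify during contract review. The sketch below assumes a 30-day month and the two-tier credit schedule quoted in the list; actual contracts often define more tiers and different measurement windows, and both function names are hypothetical.

```python
from decimal import Decimal

MINUTES_PER_30_DAY_MONTH = 30 * 24 * 60  # 43,200 minutes


def allowed_downtime_minutes(uptime_pct: str) -> Decimal:
    """Downtime permitted per 30-day month at a given uptime percentage."""
    downtime_fraction = (Decimal(100) - Decimal(uptime_pct)) / Decimal(100)
    return downtime_fraction * MINUTES_PER_30_DAY_MONTH


def service_credit_pct(measured_uptime_pct: str) -> int:
    """Two-tier example: 100% credit below 97%, 10% credit below 99.99%."""
    measured = Decimal(measured_uptime_pct)
    if measured < Decimal("97"):
        return 100
    if measured < Decimal("99.99"):
        return 10
    return 0


three_nines = allowed_downtime_minutes("99.9")         # 43.2 minutes
four_nines = allowed_downtime_minutes("99.99")         # 4.32 minutes
five_nines_seconds = allowed_downtime_minutes("99.999") * 60  # 25.92 seconds
```

Uptime values are passed as strings so `Decimal` can represent them exactly.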

Natural Conversation Quality and Context Retention

Effective voice AI for high-volume environments must handle interruptions, accents, background noise, and long multi-turn conversations without losing context. Context windows vary significantly—some platforms maintain full context for 5–10 minutes, while others extend to 45+ minutes.

Losing context mid-call forces customers to repeat information already provided, driving CSAT scores down and pushing handle time up. For complex scenarios like technical troubleshooting or multi-step account changes, platforms with shorter context windows will create measurable gaps in resolution quality.

Dograh AI maintains context for over 45 minutes in multi-turn conversations, enabling complex issue resolution without forcing customers to restart explanations after transfers or system lookups.

Pre-Deployment Testing Frameworks

Testing before live deployment is where most voice AI implementations cut corners—and pay for it in production. AI-driven simulation frameworks validate real-world customer scenarios at scale, catching edge cases that manual demo testing consistently misses.

Dograh AI includes LoopTalk, an AI-to-AI testing framework where synthetic customer personas interact with voice agents autonomously before deployment. LoopTalk covers three critical pre-launch checks:

- Validates conversation flows against expected agent behavior

- Identifies response gaps and edge-case failures before they reach customers

- Stress-tests agents under concurrent load without manual test case execution

Top Voice AI Solutions for Scalable, High-Volume Contact Centers

These platforms were assessed on concurrency capacity, latency, compliance coverage, integration depth, pricing transparency, and deployment flexibility. All-in-one CCaaS platforms get you live in 6–12 weeks; standalone APIs offer maximum customization but typically require 14–28 weeks to integrate.

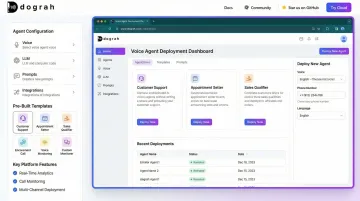

Dograh AI

Dograh AI is an open-source, self-hostable voice AI platform built for production-grade deployments without platform fees or vendor lock-in. The platform delivers sub-500ms latency and enables voice agents to be deployed in under 2 minutes using pre-built templates or simple natural language descriptions.

Key differentiators:

- Multi-agent conversational flows with 45+ minute context retention

- No double billing on STT/TTS/LLM usage — transparent passthrough pricing with no platform markups

- LoopTalk AI-to-AI testing framework simulates real-world call scenarios before deployment

- Compliance certifications: SOC 2, HIPAA, GDPR, PCI DSS

- Flexible deployment: cloud-hosted or self-hosted open source under BSD 2-Clause license

- Direct support from founders and engineers via Slack community

Best suited for: Teams requiring full data sovereignty, regulated deployment environments (healthcare, finance, defense), or cost-transparent scaling without unpredictable platform fees.

Enterprise CCaaS Platforms (Genesys, NICE CXone, Five9)

For organizations that need a fully managed stack, all-in-one contact center solutions bundle native voice AI, pre-integrated telephony, and enterprise governance into a single contract.

Comparative overview:

| Feature | Genesys Cloud CX | NICE CXone | Five9 |
|---|---|---|---|
| SLA Uptime | 100% target; credits <99.99% | 99.99% guaranteed | 99.999% targeted |
| Service Credits | 10% <99.99%; 100% <97% | Tiered contractual | 5% <99.99%; 100% <97% |
| Pricing | $75–$240/user/month | $71–$209/user/month | $119–$159/user/month |
| Gartner Position | Leader (11th consecutive year) | Leader (Highest Execution) | Leader (8th year) |

Strengths: Rapid deployment, broad omnichannel support, strong uptime SLAs, enterprise governance frameworks.

Trade-offs: Opaque pricing for AI features, vendor lock-in, limited self-serve customization, concurrency limits often require custom negotiation.

Voice-First Specialist Platforms (PolyAI, Replicant)

Purpose-built for phone channel automation, these platforms prioritize voice quality engineered for interruption-handling and natural conversation flow.

Comparative overview:

| Feature | PolyAI | Replicant |
|---|---|---|
| Performance | 50% call volume reduction; 95% CSAT | 80% call resolution; 50% cost-per-call reduction |
| Multilingual | 75+ languages with native fluency | 30+ languages |
| Scale | 2,000+ live deployments | 500M+ minutes automated |
| Pricing | Custom enterprise (quote-only) | Flat annual or pay-as-you-go (quote-only) |

Strengths: Exceptional voice quality, high resolution rates, strong multilingual support, brand-sensitive customization.

Trade-offs: Quote-only pricing limits early cost benchmarking, high-touch implementation, longer deployment timelines for complex scenarios.

Conversation Intelligence and Agent Assist Platforms (Cresta, Observe AI, Level AI)

Unlike the categories above, these platforms augment human agents in real time rather than replacing them with full call automation — a meaningful distinction for contact centers running hybrid models.

Use cases:

- Real-time agent coaching and next-best-action recommendations during live calls

- Post-call quality assurance and compliance monitoring

- Performance analytics and trend identification across agent populations

- Training gap identification and personalized coaching workflows

Best suited for: Contact centers implementing hybrid human-AI models where human agents handle complex interactions with AI providing real-time guidance, rather than replacing human-handled calls entirely.

How to Evaluate and Roll Out Voice Automation at Scale

Pilot-First Principle

Never commit to enterprise-wide deployment without running a scoped pilot at 100–500 concurrent calls. Define measurable success criteria before the pilot begins: specific targets like "resolve 70% of billing inquiries autonomously with <3% escalation rate" work far better than vague goals.

Key validation points for pilot success:

- System integration works without manual intervention

- Responses are accurate, on-brand, and compliant

- Non-technical staff can manage workflows and adjust prompts

- Core metrics move positively: AHT reduction, FCR improvement, cost-per-call decrease
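
Success criteria are easier to hold to when they are written down as an executable check before the pilot starts. The sketch below encodes the example target from this section (at least 70% autonomous resolution, under 3% escalation); `PilotResult` and `meets_targets` are hypothetical names, and the sample counts are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotResult:
    total_calls: int
    resolved_autonomously: int
    escalated: int


def meets_targets(
    result: PilotResult,
    min_resolution_pct: int = 70,
    max_escalation_pct: int = 3,
) -> bool:
    """True when resolution is at or above target and escalation is strictly below."""
    if result.total_calls <= 0:
        raise ValueError("pilot must include at least one call")
    # Integer cross-multiplication avoids floating-point edge cases at the threshold.
    resolved_ok = result.resolved_autonomously * 100 >= min_resolution_pct * result.total_calls
    escalation_ok = result.escalated * 100 < max_escalation_pct * result.total_calls
    return resolved_ok and escalation_ok


passing = meets_targets(PilotResult(2_000, 1_460, 50))          # 73% resolved, 2.5% escalated
at_escalation_limit = meets_targets(PilotResult(2_000, 1_460, 60))  # exactly 3% escalated
low_resolution = meets_targets(PilotResult(2_000, 1_300, 40))   # 65% resolved
```

Note that exactly 3% escalation fails here because the stated target is "<3%".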

Phased Scaling Approach

After validating the pilot, progress incrementally: 500 → 1,000 → 5,000 → 10,000+ concurrent calls. Monitor Word Error Rate degradation and latency spikes at each tier before advancing.
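
The tier-by-tier progression can be expressed as a simple gate: advance only when both metrics hold at the current tier. In this sketch the WER ceiling of 10% is an assumed threshold chosen for illustration, the latency ceiling reuses the 500ms figure from earlier, and `next_tier` is a hypothetical function.

```python
TIERS = [500, 1_000, 5_000, 10_000]  # concurrent-call tiers from the text


def next_tier(
    current: int,
    wer: float,
    p95_latency_ms: float,
    max_wer: float = 0.10,           # assumed acceptance threshold
    max_latency_ms: float = 500.0,
) -> int:
    """Return the next tier if metrics are healthy, otherwise stay put."""
    if current not in TIERS:
        raise ValueError(f"unknown tier: {current}")
    healthy = wer <= max_wer and p95_latency_ms <= max_latency_ms
    position = TIERS.index(current)
    if healthy and position + 1 < len(TIERS):
        return TIERS[position + 1]
    return current


advance = next_tier(500, wer=0.06, p95_latency_ms=420)
hold = next_tier(1_000, wer=0.14, p95_latency_ms=430)
top = next_tier(10_000, wer=0.05, p95_latency_ms=300)
```

Using a tail percentile such as p95 rather than the mean is a design choice in this sketch: latency spikes affect individual callers even when the average looks fine.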

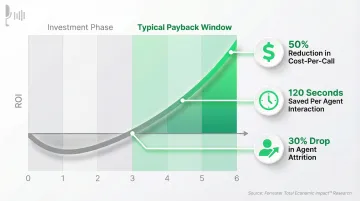

Budget for the full picture: Enterprise teams routinely underestimate AI agent Total Cost of Ownership by 40–60%. A $100K vendor quote can become $140K–$160K in Year 1 once integration, data preparation, compliance review, and ongoing monitoring are factored in. A 25–30% buffer above the vendor quote covers only part of that overrun, so plan for 40–60% of headroom when implementation overhead is uncertain.
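
The budgeting figures in this paragraph can be checked with a short calculation. The sketch below uses whole-dollar integer math; `year_one_range` and `uncovered_gap` are hypothetical helpers, and the overrun percentages are the ones quoted above.

```python
def year_one_range(quote: int, low_pct: int = 40, high_pct: int = 60) -> tuple[int, int]:
    """Year 1 cost range when actual spend runs low_pct to high_pct above the quote."""
    return quote * (100 + low_pct) // 100, quote * (100 + high_pct) // 100


def uncovered_gap(quote: int, buffer_pct: int, overrun_pct: int) -> int:
    """Dollars left unfunded when the budget buffer is smaller than the overrun."""
    budget = quote * (100 + buffer_pct) // 100
    actual = quote * (100 + overrun_pct) // 100
    return max(0, actual - budget)


low, high = year_one_range(100_000)
best_case_gap = uncovered_gap(100_000, buffer_pct=30, overrun_pct=40)
worst_case_gap = uncovered_gap(100_000, buffer_pct=25, overrun_pct=60)
```

On a $100K quote, a 30% buffer against a 40% overrun leaves $10,000 unfunded, and a 25% buffer against a 60% overrun leaves $35,000.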

ROI timeline expectations: According to Forrester Total Economic Impact research, well-scoped deployments typically achieve payback in under six months. Documented impacts include:

- Up to 50% reduction in cost-per-call

- 120 seconds saved per agent interaction

- 30% drop in agent attrition, driven by fewer repetitive tasks

Organizational Change Management

The largest barriers to scaling AI-native contact center services are integration with legacy systems (42%) and organizational resistance to change (41%). Position voice AI to internal teams as a tool that frees agents for more complex, high-value work — not a headcount replacement.

Implementation best practices:

- Route routine data retrieval and verification to AI; reserve human agents for empathy-driven, complex problem-solving

- Share live dashboards tracking AHT, FCR, CSAT, cost-per-call, and agent productivity across teams

- Set honest expectations: measurable ROI typically materializes at the 3–6 month mark

- Involve frontline staff in conversation design and workflow refinement — their input reduces resistance and improves accuracy

Frequently Asked Questions

What are the top voice AI solutions for scalable voice automation in high-volume contact centers?

Top solutions fall into four categories:

- Open-source self-hosted (e.g., Dograh AI) — cost transparency and data sovereignty

- All-in-one CCaaS (e.g., Genesys, NICE CXone) — integrated telephony and enterprise governance

- Voice-first specialists (e.g., PolyAI, Replicant) — optimized for conversational quality

- Agent-assist platforms (e.g., Cresta) — augments human agents rather than replacing them

Your best fit depends on call volume, compliance requirements, and whether pricing transparency matters to your procurement process.

What software do most call centers use?

Most large call centers use a CCaaS platform such as Genesys, NICE CXone, Five9, or Amazon Connect as their core infrastructure. These platforms are increasingly layered with AI voice automation tools for call deflection, agent assist, and analytics to handle growing call volumes without proportional headcount increases.

How does voice AI handle sudden call volume spikes without performance degradation?

Cloud-native voice AI platforms use elastic compute and microservices architecture to spin up additional processing capacity in real time. Auto-scaling groups detect when concurrent call volume exceeds thresholds and automatically provision additional instances, which helps hold latency under 500ms and keep accuracy consistent when concurrent volume doubles or triples. Elastic capacity alone does not guarantee conversation quality, so ask for WER and latency results measured at your target load.

What compliance certifications should I look for in a contact center voice AI platform?

Regulated industries should verify these certifications at minimum:

- SOC 2 Type II: independent audit of security controls

- HIPAA BAA: required for healthcare data handling

- GDPR alignment: required when processing personal data of people in the EU

- PCI DSS: required for payment card environments

Also confirm AES-256 encryption at rest and TLS 1.2+ in transit before signing any contract.

How long does it take to deploy a voice AI solution in a contact center?

Embedded CCaaS platforms with native voice AI typically deploy in 6–12 weeks. Standalone voice AI APIs take 14–28 weeks due to custom middleware and telephony integration. Platforms like Dograh AI can deploy template-based agents in as little as 2 minutes for straightforward use cases using pre-built, no-code workflows.